At MIT, AI has become so pervasive that you can almost find your way into it without meaning to. Take Sili Deng, an associate professor of mechanical engineering. Deng says she still doesn’t know whether she’d have gone all in on artificial intelligence had it not been for the covid pandemic. She had joined the faculty in 2019 and was in the process of setting up her lab to study combustion kinetics, emissions reduction, and flame synthesis of energy materials when covid hit, putting a halt to all lab renovations. Because she needed to start from scratch, she challenged herself and her postdocs to try machine learning “and see, with the fundamental knowledge we have on the combustion side, what are the gaps that we think machine learning could [fill].” Under her leadership, Deng’s Energy and Nanotechnology Group used AI to develop a “digital twin” that mirrors the performance of an energy/flow device—a digital replica of a physical system. Eventually, this model should be able to predict and control the workings of fuel combustion systems in real time.

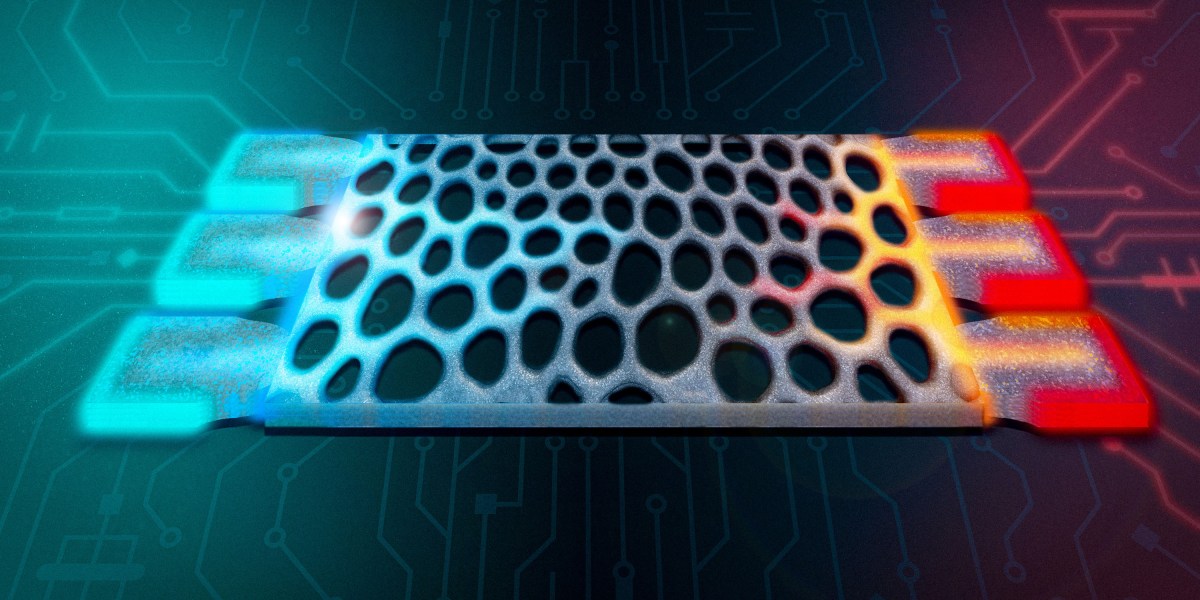

Unlike Deng, who came to AI through the slings and arrows of outrageous fortune, Zachary Cordero, an associate professor of aero-astro, began using AI thanks to a colleague’s expertise. In 2024 John Hart, head of the Department of Mechanical Engineering, suggested that Cordero, who develops novel materials and structures for emerging aerospace applications, meet with Faez Ahmed, an associate professor of mechanical engineering and an expert in machine learning and optimization for engineering design. Cordero says he hadn’t previously been pursuing AI-related research: “This is all totally new to me.” Working with Ahmed and other collaborators on a project sponsored by the US Defense Advanced Research Projects Agency (DARPA), Cordero developed an AI tool that can optimize the material composition of what’s known as a blisk—a bladed disk that’s a key component in jet and rocket turbine engines. Their work aims to improve engine performance and longevity and could lead to more reliable reusable rocket engines for heavy-lift launch vehicles. Cordero says the AI system augmented human intuition—even “on problems where it’s almost impossible to have intuition.”

Stories like these abound at MIT. In every department, in almost every lab on campus, AI technologies such as machine learning, large language models, and neural networks are transforming research—turbocharging existing methods, opening previously unexplored or inaccessible pathways, and creating novel opportunities in drug development, computing, energy technologies, manufacturing, robotics, neuroscience, metallurgy, and even wildlife preservation. “I cannot think of a single group meeting that we have where we’re not talking about these tools,” says Angela Koehler, the Charles W. and Jennifer C. Johnson Professor of Biological Engineering and faculty lead of the MIT Health and Life Sciences Collaborative (MIT HEALS). Her research group uses AI models to develop drug candidates designed to attach to molecular targets previously considered “undruggable,” such as transcription factors, RNA-binding proteins, or cytokines. “I would say 90% of the thesis committees I’m on involve a significant AI component,” she says. “And that definitely was not the case five years ago.”

“Artificial intelligence is everywhere on campus,” says Ian Waitz, MIT’s vice president for research and the Jerome C. Hunsaker Professor of Aero-Astro. “Any field with a tremendous amount of complexity will benefit from it. Life sciences. Materials science. Anyone who does any kind of image analysis uses these tools now. I don’t know of a single research field here at MIT that hasn’t been impacted by AI.”

AI isn’t exactly new at MIT

Though Deng and Cordero may have come to it through happenstance or clever matchmaking, most developments in AI at MIT don’t arise that way. Nor is the interest in it new. More than 70 years ago, in 1954, computer researcher Belmont G. Farley and physicist Wesley A. Clark ran the world’s first computer simulation of a neural network at MIT. Interest in neural network technology—now better known as deep learning—waxed and waned over the next decades. Ju Li, PhD ’00, the Carl Richard Soderberg Professor of Power Engineering (as well as a professor of nuclear science and engineering and materials science and engineering), remembers taking a course on neural networks during Independent Activities Period (IAP) in 1995, when he was a graduate student. “It was not a deep network—just a few layers,” recalls Li, who researches materials used in nuclear energy, batteries, electrolyzers, and energy-efficient computing. He characterizes it as essentially a regression tool that they used to fit curves.

But over the past few years, activity in AI has exploded globally, fueled by powerful new models and an enormous increase in the computing power of chips; the resulting proliferation and evolution of data centers has in turn sparked more activity. Today, neural networks can have more than a thousand layers. Backed by massive investments in AI in both the public and private spheres, AI researchers have created a suite of tools that can scan almost immeasurable quantities and types of data; interface with sensors, robotics, and other mechanical devices; and communicate with human researchers in natural language.

Regina Barzilay has been working on AI since she came to MIT in 2003. Today, she’s the School of Engineering Distinguished Professor for AI and Health and AI faculty lead of the MIT Abdul Latif Jameel Clinic for Machine Learning in Health. But she says that if anyone had told her even 10 years ago where the field would be now and what kinds of things she’d be working on, she “absolutely” wouldn’t have believed it.

AI applications for drug discovery and development, one of Barzilay’s areas of expertise, have been particularly prolific and successful at MIT. Giovanni Traverso’s lab, for example, has used AI to design nanoparticles that can deliver RNA vaccines and other therapies more efficiently than previous systems. Researchers at CSAIL (the Computer Science & Artificial Intelligence Laboratory, where Barzilay is a principal investigator) have used AI models to explain how a narrow-spectrum antibiotic specifically targets harmful microbes in people with Crohn’s disease. The Jameel Clinic has helped build models that can predict which flu vaccine will be most effective in a given year. “Many of the tools that we developed in the lab—they’re very broadly used in the pharmaceutical industry,” she explains. “And they’re really making significant impact.” She says there’s not even a question anymore about whether they make a difference. They’ve become standard tools because they work every day.

One such tool is Boltz, an open-source AI model developed by a group at the Jameel Clinic and initially released in November 2024 as Boltz-1. Inspired by DeepMind’s AlphaFold2, a model that earned Demis Hassabis and John Jumper the 2024 Nobel Prize in chemistry, Boltz-1 helps scientists predict the 3D structures of proteins and other biological molecules. The Jameel Clinic researchers soon followed up with Boltz-2, which predicts not only molecular structure but also affinity: the strength with which a protein binds with a small molecule. Assays to measure affinity, a vital measure in drug development, are among the most important performed in biology and chemistry labs.

In October 2025, the Jameel Clinic released its latest iteration, BoltzGen—a generative AI model capable of designing custom proteins that could bind with a wide range of biomolecular targets. Molecular binders already play important roles in fields including therapeutics, diagnostics, and biotechnology. BoltzGen is the first advanced, large-scale model that considers every single atom in the potential new protein and every atom in its target molecule, providing greater accuracy.

Hannes Stärk, the fourth-year PhD student at CSAIL who built BoltzGen, says the model works because it actually learns—drawing inferences from the data it is trained with and then producing novel ideas inspired by that data. With machine learning, you want the model to generalize from the data you use to train it, says Stärk, who created BoltzGen over seven months, often working up to 12 hours a day. “Because otherwise,” he says, “your solution is already in your training data.” Stärk has also assembled a network of over 30 scientists both within and beyond MIT to explore the design and applications of molecular binders for use in drug development, metabolomics, and structural biology as well as in treating cancer, autoimmune diseases, and genetic diseases. “It’s nice to have one model that can do all of this,” he says. Training across all these areas also makes the model better at generalizing.

Beyond drug discovery

As labs working in drug development continue to reap benefits from AI, other researchers across the Institute are busy applying existing AI tools or, more often, developing their own models for use in myriad disciplines and applications. A cross-disciplinary group involving the Department of Electrical Engineering and Computer Science (EECS), CSAIL, and Mass General Hospital has launched MultiverSeg, a tool that quickly annotates areas of interest in medical images and could help scientists develop new treatments and map disease progression. MIT researchers are also designing and running AI-directed automated laboratories to accelerate and refine the process of discovering new components for sustainable materials and solar panels. And Ahmed’s MechE group is developing AI models to do such things as help automakers design high-performance vehicles or determine whether a large shipping vessel can be considered seaworthy. Ahmed also teaches a course titled AI and Machine Learning for Engineering Design. First offered in 2021, it attracts not only mechanical, civil, and environmental engineers but students from aero-astro, Sloan, and more.

Meanwhile, Priya Donti, an assistant professor of EECS and a PI at the Laboratory for Information & Decision Systems (LIDS), has developed AI-enabled optimization approaches to help schedule power generation resources on power grids. The machine-learning tools her group builds will help utility operators respond to many inevitable grid issues. “The big challenge is that on a power grid, you need to maintain this exact balance between the amount of power you’re producing and putting into the grid and the amount that you’re taking out on the other side,” she explains. “When you have a lot of variation from solar, wind, and other sources of power whose output varies based on the weather, you have to coordinate the grid much more tightly in order to maintain that balance.” Information about the physics of how power grids work is embedded in Donti’s AI model, so it functions and reacts much as a real grid would.
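
To make the balancing constraint concrete, here is a minimal sketch of classical merit-order dispatch, in which the cheapest available generators are used first until supply exactly matches demand. It is not Donti’s method, which embeds grid physics in a machine-learning model. The fleet, capacities, and costs below are hypothetical, and the sketch ignores transmission limits and every other aspect of network physics.

```python
def dispatch(generators, demand_mw):
    """Merit-order dispatch: fill demand from the cheapest generator first.

    generators: list of (name, capacity_mw, cost_per_mwh) tuples.
    Returns a dict of name -> output in MW whose values sum to demand_mw.
    """
    total_capacity = sum(capacity for _, capacity, _ in generators)
    if demand_mw > total_capacity:
        raise ValueError("demand exceeds available capacity")

    remaining = demand_mw
    schedule = {}
    for name, capacity, _cost in sorted(generators, key=lambda g: g[2]):
        output = min(capacity, remaining)  # never exceed capacity or demand
        schedule[name] = output
        remaining -= output
    return schedule


def fleet(solar_available_mw):
    """Hypothetical fleet; only the solar capacity depends on the weather."""
    return [
        ("solar", solar_available_mw, 0.0),
        ("gas", 80.0, 40.0),
        ("peaker", 50.0, 90.0),
    ]


# Same demand, different weather: the other generators must make up the gap.
sunny = dispatch(fleet(60.0), demand_mw=100.0)
cloudy = dispatch(fleet(10.0), demand_mw=100.0)
```

In this toy case, a drop in solar output from 60 MW to 10 MW forces the gas plant to its limit and brings the expensive peaker online, which illustrates why weather-driven variation demands tighter coordination.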

MIT researchers are even applying AI tools to explore and analyze the natural world. Sara Beery, an assistant professor of EECS who specializes in AI and decision-making, develops AI methods that discover and dig into ecological data collected by a wide range of remote sensing technologies to analyze and predict how species and ecosystems are changing around the globe. These technologies enable Beery and her colleagues to gather data on a far greater number of endangered species than ever before, and at an unprecedented scale. Historically, most ecological research has focused on collecting incredibly rich data about single species in really small regions, she says, but “we’ve realized that’s not sufficient.” Information gleaned from, say, a small part of one river ecosystem will not help us understand or prevent what she calls “the exponential increase in species extinction rates that we’re currently facing.” Already, Beery says, “we’re using multimodal AI to enable experts to quickly search massive repositories of image data, to discover data points that were previously very difficult to find.” But she says the goal is to be able to readily tap into diverse types of raw data—from satellite and bioacoustic sensor data to camera images and DNA—and “actually turn that into some sort of scientific insight, something that helps us understand what is putting species at risk.”

Mens et manus in AI

While some MIT researchers have successfully used AI to help invent technologies ranging from novel cancer therapies to safer high-performance automobiles, others are also using machine learning and other AI tools to help determine whether these technologies perform as promised—or can be produced successfully and economically at scale. Connor Coley, SM ’16, PhD ’19, an associate professor of chemical engineering and EECS, designs new molecules—and recipes for making new molecules, primarily small organic molecules—for potential use by pharmaceutical, agricultural, and other chemical companies. Coley, a former MIT Technology Review Innovators Under 35 honoree, has developed a “genetic” algorithm that uses biologically inspired processes including selection and mutation. This tool encodes potential polymer blends drawn from a large database of polymers into what is effectively a digital chromosome, which the algorithm then improves to generate the most promising material combinations.
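
The general idea of a genetic algorithm can be sketched in a few lines. The example below is only an illustration, not Coley’s tool: the polymer scores, the blend size, and the fitness function are invented for the sketch, and in a real system the scores would come from simulation or physical measurement. Each “chromosome” is a tuple of indices into a small polymer list, and the loop applies the two processes named above, selection and mutation.

```python
import random

# Hypothetical data: a made-up quality score for each polymer in the database.
POLYMER_SCORES = [0.2, 0.5, 0.9, 0.4, 0.7, 0.1]
BLEND_SIZE = 3  # a chromosome is a tuple of BLEND_SIZE polymer indices


def fitness(chromosome):
    """Toy objective: mean score plus a bonus for mixing distinct polymers."""
    mean_score = sum(POLYMER_SCORES[g] for g in chromosome) / len(chromosome)
    diversity_bonus = 0.1 * len(set(chromosome))
    return mean_score + diversity_bonus


def mutate(chromosome, rng, rate=0.2):
    """Replace each gene with a random polymer with probability `rate`."""
    return tuple(
        rng.randrange(len(POLYMER_SCORES)) if rng.random() < rate else g
        for g in chromosome
    )


def evolve(generations=30, population_size=20, seed=0):
    """Return the fittest blend found after the given number of generations."""
    rng = random.Random(seed)
    population = [
        tuple(rng.randrange(len(POLYMER_SCORES)) for _ in range(BLEND_SIZE))
        for _ in range(population_size)
    ]
    for _ in range(generations):
        # Selection: keep the fitter half of the population unchanged.
        population.sort(key=fitness, reverse=True)
        survivors = population[: population_size // 2]
        # Mutation: refill the population with mutated copies of survivors.
        children = [mutate(rng.choice(survivors), rng) for _ in survivors]
        population = survivors + children
    return max(population, key=fitness)


best_blend = evolve()
```

Because the fitter half always survives intact, the best blend found can never get worse from one generation to the next. A production system would typically add crossover and a far richer encoding.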

Working at the intersection of chemistry and computer science, Coley believes AI could one day help his lab discover polymer blends that would lead to improved battery electrolytes and tailored nanoparticles for safer drug delivery. He and his lab also work to develop machine-learning tools that streamline the discovery and production processes. “If you want AI to be the brain behind some of the science you’re doing, you need the hands as well,” says Coley, who was one of the first MIT faculty members hired into the MIT Schwarzman College of Computing. He and his group have coupled a robotic liquid-handling platform with an optimization algorithm. In the project designed to look for optimal polymer blends, the autonomous system not only chooses which polymer solutions to test but also performs the physical testing. The system, which can generate and test 700 new polymer blends in a day, has identified one that performed 18% better than any of its components.

Systems with a similar level of autonomy could also have a big impact on early-stage drug discovery. One effect, he observes, should be to reduce the time it takes to advance a drug from the lab into clinical trials. But the real question, he says, is “What might we be able to do that we just couldn’t do with any reasonable amount of resources previously?”

Alexander Siemenn, PhD ’25, also uses AI both to search for new materials and to control robots that test the physical properties of those materials. For his doctoral thesis, Siemenn built from scratch a fully autonomous AI-driven robotic laboratory to discover and test sustainable high-performance materials for solar panels. The system incorporates computer vision, machine learning, and an optimization algorithm and runs 24 hours a day.

“We are pairing conventional methods [of measurement] that have been almost entirely manual to this point with the AI methods,” says Siemenn. “The goal is to be able to not just improve their accuracy but also make them fast and autonomous.”

Hits and near misses

Institute labs are also encountering some of the first real borders of the brave new AI-enhanced world. Many researchers at MIT and elsewhere agree that most of the “low-hanging fruit” has already been collected. That includes AI’s contributions to managing massive data sets and accelerating existing discovery and testing processes, at times to near light speed. Beyond those immediate gains, though, results vary—even in drug development, which has seen some of the most spectacular achievements of AI.

“There are some areas where you would assume we should be doing much better here and we are not,” observes Barzilay. “The reason we cannot cure neurodegenerative diseases like Alzheimer’s or very advanced cancer is because we don’t really understand fully—on the molecular level—the disease itself, the drivers, and how to control it.” And AI still hasn’t made what she calls “a significant transformation” in terms of understanding those underlying disease mechanisms. “There are some helper tools,” she says, but AI hasn’t provided a profoundly new understanding of any disease—“So this is a place that we would hope to see more.”

Limits in materials science are also emerging, particularly in translating digital solutions proposed by AI into objects made of atoms and molecules. Rafael Gómez-Bombarelli, an associate professor of materials science and engineering, develops physics-based machine-learning simulations to accelerate the discovery cycle for sustainable polymers and materials for use in energy, health care, and batteries. While physics-based simulations in themselves have been an unmitigated success, he says, results have been spottier when it comes to manufacturing the materials themselves; many of the solutions generated by these simulators fail in the physical world. “It turns out these simulators don’t capture lots of things that are important,” he says. “They operate on the atomically resolved problems for nanosecond-timescale questions. But many, many [materials] problems don’t happen in nanoseconds, don’t involve just a few ten thousands of atoms.” And they often involve physics more complicated than current AI models account for. What’s more, when the goal might be to produce millions of tons of a new material, scaling errors can be disastrous. “In AI, scaling is synergistic and good,” Gómez-Bombarelli says. “In chemistry and materials, scaling is kind of a scary beast that you need to beat in order to make an impact.”

New methods, new insights

While AI has already produced myriad results and surprises, researchers at MIT believe much of its potential is still waiting to be discovered. And they are eager to search for high-impact applications. Ila Fiete, a professor of brain and cognitive sciences, builds AI tools and mathematical models to expand our knowledge of how the brain develops and reshapes its neural connections. Her work, she believes, can help us understand how we form memories or perceive ourselves in space—and that, in turn, can lead to improvements in AI. Many features of AI, including parallel computing in neural networks, were inspired by the human brain. “AI has [helped] and will continue to help us do more science and better science,” she says. “But neuroscientists believe there is a lot about how humans and other biological intelligences learn and solve problems that is better in some dimensions than current AI models. And by learning better how that works, we can actually inform better AI architectures.”

Li agrees that certain elements of human intelligence and learning could benefit AI and help it solve some of our world’s most pressing and complex problems, including global poverty and climate change. “Large language models today have read tens of millions of papers and books,” he says, adding that they are “much more interdisciplinary than any of us.” Yet he notes that scientific literature is strongly biased toward success. “The day-to-day experience in the lab is 95% frustration, and I think it’s the failure cases which build character,” he says. He posits that if AI is given autonomy to do experiments, to try different things and fail and learn from that, it could evolve into something very similar to human intelligence.

Researchers at MIT believe that as AI continues to evolve, expand, and proliferate, the Institute has a special duty to channel these technologies toward useful, attainable ends. “Right now, in the AI world there is a lot of hype and fluff,” says Ahmed, who is developing generative AI tools to help tackle complex engineering and design problems. “The digital world is overflowing with stuff,” he says, and there’s a lot happening on the AI front with images, text, and video. “But the physical world is still less affected, and we are seeing a lot more happening at the intersection of physical and AI at MIT.”

AI’s future includes potential triumphs and potential pitfalls. Researchers still worry about “hallucinations”—results spit out of AI models that make no sense in the real world. They worry, as well, that some practitioners will rely too heavily on AI tools, omitting key insights and safeguards that keep an experiment or production facility on track. And they worry about overpromising—unrealistically presenting AI as a magical solution to all problems great and small. “It’s impossible to predict how good these models are going to get,” says MechE’s Hart. “Where they are going to shine and where they are going to limit.” But instead of sensing danger, Hart sees opportunity, especially at MIT: “We have the learned expertise and experience that allows us to frame the right questions and use these tools in the right way.” The challenge for the MITs of the world, he says, is to figure out how to use AI tools to create faster, better solutions and navigate more complex problems than we ever could before.