Krishna Rangasayee is CEO of SiMa.ai, a software-centric, embedded edge machine learning system-on-chip company.

As the last year falls further into the rearview and we are full steam ahead into 2025, the discussion surrounding AI’s massive energy consumption has reached an inflection point. The rapid advancement of AI has resulted in unprecedented demands on global energy infrastructure, threatening to outpace our ability to deliver power — and AI benefits — where they’re needed most.

With AI already accounting for up to 4% of U.S. electricity use (a figure projected to nearly triple to 11% by 2030), reducing the strain on our energy systems is a priority. Doing so requires a thorough reexamination of how AI’s energy needs could affect our long-term climate goals, infrastructure, resource availability and the scale at which this technology operates.
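To put the projection above in perspective, here is a rough back-of-the-envelope sketch of the yearly growth it implies. The 4% and 11% shares come from the text; the five-year window (a 2025 baseline) is an assumption made only for illustration, and the result describes growth in AI's share of electricity use, not in absolute consumption.

```python
def implied_annual_growth(start_share, end_share, years):
    """Compound annual growth rate that takes start_share to end_share."""
    return (end_share / start_share) ** (1 / years) - 1


start_share = 0.04  # "up to 4%" of U.S. electricity use (from the text)
end_share = 0.11    # projected share by 2030 (from the text)
years = 5           # ASSUMPTION: baseline year is 2025, so 2030 is five years out

multiple = end_share / start_share
growth = implied_annual_growth(start_share, end_share, years)

print(f"Share multiple: {multiple:.2f}x")
print(f"Implied annual growth in share: {growth:.1%}")
```

Under that assumed window, the share would need to grow by a bit more than a fifth every year. A different baseline year would change the rate, but not the overall picture of sustained double-digit growth.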

And the hype doesn’t look to be slowing down anytime soon. A new executive order was issued last month to prioritize and speed up the development of AI infrastructure, including data centers and other power facilities, while proposing new restrictions on exports of AI chips to keep innovation local.

While political debates rage about energy sources and environmental regulation, the fundamental challenge lies in the stark mismatch between AI’s accelerating power requirements and our aging energy distribution infrastructure. This has now become a race against time, and though AI has inevitably become a “problem,” it also offers the path to a solution.

The scale of AI’s energy challenge

Most AI applications we use today, from chatbots to image generators, rely on the cloud to run models and process queries. The data centers behind them serve as a hub and now account for around 4.4% of U.S. electrical demand, a share that could rise to more than a tenth of total U.S. electrical demand by 2028.

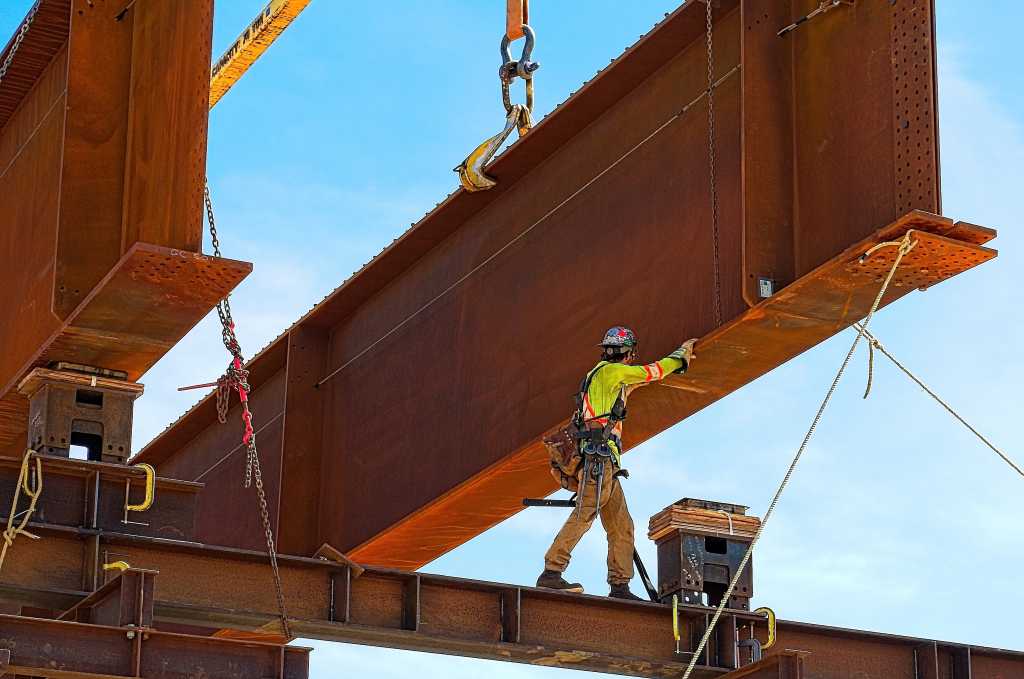

Recent data points to the growth of EV infrastructure and the expansion of AI data centers, with more on the way, as the primary contributors to these growing numbers. As edge computing proliferates across industries, it offers a partial solution to AI’s growing energy demands. While not immune to power constraints, edge computing can reduce power use and latency by enabling localized AI deployments, offering a more efficient and less energy-intensive approach than our current infrastructure.

A report from Goldman Sachs revealed a stunning statistic: the average ChatGPT query requires nearly 10 times as much electricity to process as a Google search. That number is alarming by itself, especially considering the chatbot’s rapid adoption, and it underscores the fundamental mismatch between AI development cycles, which operate in 100-day sprints, and energy infrastructure projects that typically span 100-year timelines.

Regulatory changes are a red herring

On its first day in office, the new administration took action by withdrawing the United States from the Paris Agreement, limiting the country’s access to clean energy and green tech markets. It also vowed to end the “electric vehicle mandate” and reversed a 2021 executive order that aimed to ensure half of all new vehicles sold in the U.S. by 2030 would be electric, a move that could eliminate EV tax credits.

Many anticipate that the government’s repeal of the existing executive order on AI will accelerate AI development, especially in Silicon Valley. Pundits presume that the 2021 Bipartisan Infrastructure Law, which supports the development of green energy projects, will eventually be rolled back, with fund disbursement already on hold.

The recent appointment of Lee Zeldin to head the EPA, along with the potential rollback of initiatives from the Inflation Reduction Act, may ease some environmental restrictions for now. But these policy shifts won’t address the fundamental challenge of energy distribution. The critical bottleneck isn’t the energy source itself; it’s the massive infrastructure required to deliver energy where it’s needed most.

It is these limitations, not any policy changes, that will continue stifling innovation in the space. In fact, the continued advancement of electric and autonomous vehicles will only emphasize the need for additional fuel sources. If energy efficiency continues improving at its current pace, the electricity required by in-vehicle computers could reach 26 terawatt-hours by 2040 — equivalent to the total consumption of about 59 million desktop PCs.
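The desktop PC comparison above can be sanity-checked with simple arithmetic. The sketch below uses only the two figures from the text (26 terawatt-hours and 59 million PCs); treating the 26 TWh as an annual total is an assumption, since the text does not state the period.

```python
TWH_TO_KWH = 1e9        # 1 terawatt-hour = 1,000,000,000 kilowatt-hours
HOURS_PER_YEAR = 8760   # 365 days * 24 hours


def implied_kwh_per_unit(total_twh, units):
    """Energy per unit, in kWh, when total_twh is spread evenly over units."""
    return total_twh * TWH_TO_KWH / units


vehicle_compute_twh = 26     # in-vehicle computing by 2040 (from the text)
desktop_pcs = 59_000_000     # equivalent number of desktop PCs (from the text)

kwh_per_pc = implied_kwh_per_unit(vehicle_compute_twh, desktop_pcs)

# ASSUMPTION: the 26 TWh figure is per year, so each PC's share can be
# expressed as an average continuous power draw in watts.
avg_watts = kwh_per_pc * 1000 / HOURS_PER_YEAR

print(f"Implied energy per desktop PC: {kwh_per_pc:.0f} kWh")
print(f"Average continuous draw (if annual): {avg_watts:.0f} W")
```

The comparison works out to roughly 440 kWh per PC. If the figure is annual, that corresponds to an average draw of about 50 watts around the clock, which is plausible for a desktop machine.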

Market forces driving change

The aggressive competition for limited power infrastructure is already creating a natural selection process for providers in AI deployment. The growing gap between AI’s rapid development cycle and infrastructure’s glacial pace of change is forcing companies to innovate in three key areas:

- Energy-Efficient AI Architecture: Companies are investing in specialized, smaller language models that can operate within existing power constraints while delivering targeted business value.

- Geographic Strategy: Businesses are making AI deployment decisions based primarily on power availability and distribution capabilities.

- Competitive Innovation: As companies realize that waiting for infrastructure catch-up isn’t viable, they’re turning to new approaches to AI deployment that treat energy efficiency as a core competitive advantage rather than a compliance requirement.

The data center capacity limitations we see today are forcing companies to make hard choices about where and how to deploy AI resources. It won’t be long until a complete reevaluation of how we distribute these resources becomes necessary.

Edge computing is the catalyst for change

So what’s the solution? As traditional infrastructure scaling remains inadequate when faced with the rise in demand, companies are increasingly turning to edge computing solutions that distribute computational loads closer to end users, reducing the strain on centralized data centers.

The emergence of smaller, specialized language models reflects a growing recognition that energy efficiency must be a core consideration in AI development. These specialized models not only reduce power use but often provide better performance for specific tasks, suggesting a future where AI development may be shaped as much by energy constraints as by technological capabilities.

Energy efficiency is the future

When looking at what is on the horizon for AI, it’s become obvious that industry collaboration on energy-efficient computing has evolved beyond an environmental imperative and into a business necessity as infrastructure limitations threaten to bottleneck AI deployment.

There must be a seismic shift in how the industry defines and measures AI advancement, pivoting our idea of technological evolution from raw computing power to energy efficiency. The impact of edge computing goes beyond the macro level. Lower bandwidth requirements and lower network operating costs make deployments more energy-efficient and cost-effective, freeing up resources elsewhere to help propel necessary progress.

The future of AI development will be shaped not by political decisions about energy sources, but by the physical realities of power distribution infrastructure. While this perspective may challenge popular narratives about environmental regulation being the primary constraint on AI development, the mathematics of energy distribution cannot be overcome by the wave of a politician’s magic wand.

Industry leaders must confront these infrastructure limitations head-on, driving innovation in both AI efficiency and power distribution solutions, ensuring we leave this planet better than we found it.