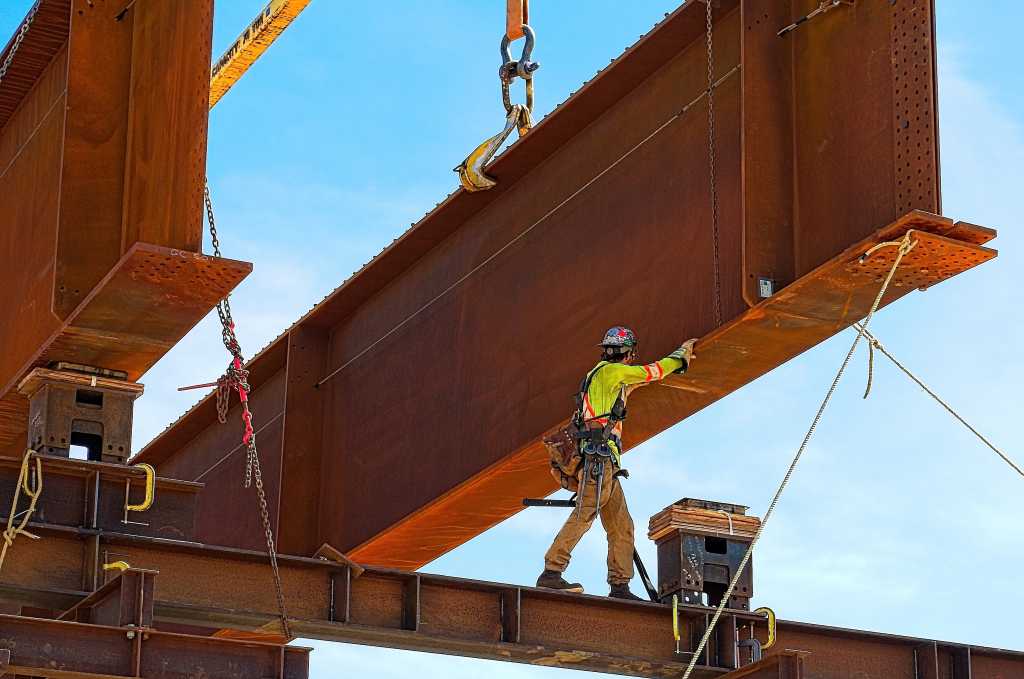

Phillips 66 Co. and Kinder Morgan Inc. have secured sufficient shipper interest to advance the proposed Western Gateway refined products pipeline project to supply fuel to Arizona and California, the companies said in a joint release Apr. 20.

Following a second open season to secure long-term shipper commitments, the companies will “move the project forward, subject to the execution of definitive transportation service agreements, joint venture agreements, and respective board approvals,” the companies said.

“Customer response during the open season underscores the importance of Western Gateway in addressing long term refined products logistics needs in the region,” said Phillips 66 chairman and chief executive officer Mark Lashier.

“By utilizing existing pipeline assets across multiple states along the route, we’re uniquely well-positioned to support a refined products transportation solution,” said Kim Dang, Kinder Morgan chief executive officer.

Western Gateway pipeline specs

The planned 200,000-b/d Western Gateway project is designed as a 1,300-mile refined products system with a new-build pipeline from Borger, Tex., to Phoenix, Ariz., combined with Kinder Morgan’s existing SFPP LP pipeline from Colton, Calif., to Phoenix, Ariz., which will be reversed to enable east-to-west product flows into California. It will be fed from supplies connected to Borger as well as supplies already connected to SFPP’s system in El Paso, Tex.

The Gold Pipeline, operated by Phillips 66, which currently flows from Borger to St. Louis, will be reversed to enable refined products from midcontinent refineries to flow toward Borger and supply the Western Gateway pipeline. Western Gateway will also have connectivity to Las Vegas, Nev., via Kinder Morgan’s 566-mile CALNEV Pipeline.

The Western Gateway Pipeline is targeting completion by 2029. Phillips 66 will build the entirety of the new pipeline and will operate the line from Borger, Tex., to El Paso, Tex.
Kinder Morgan will operate the line from El