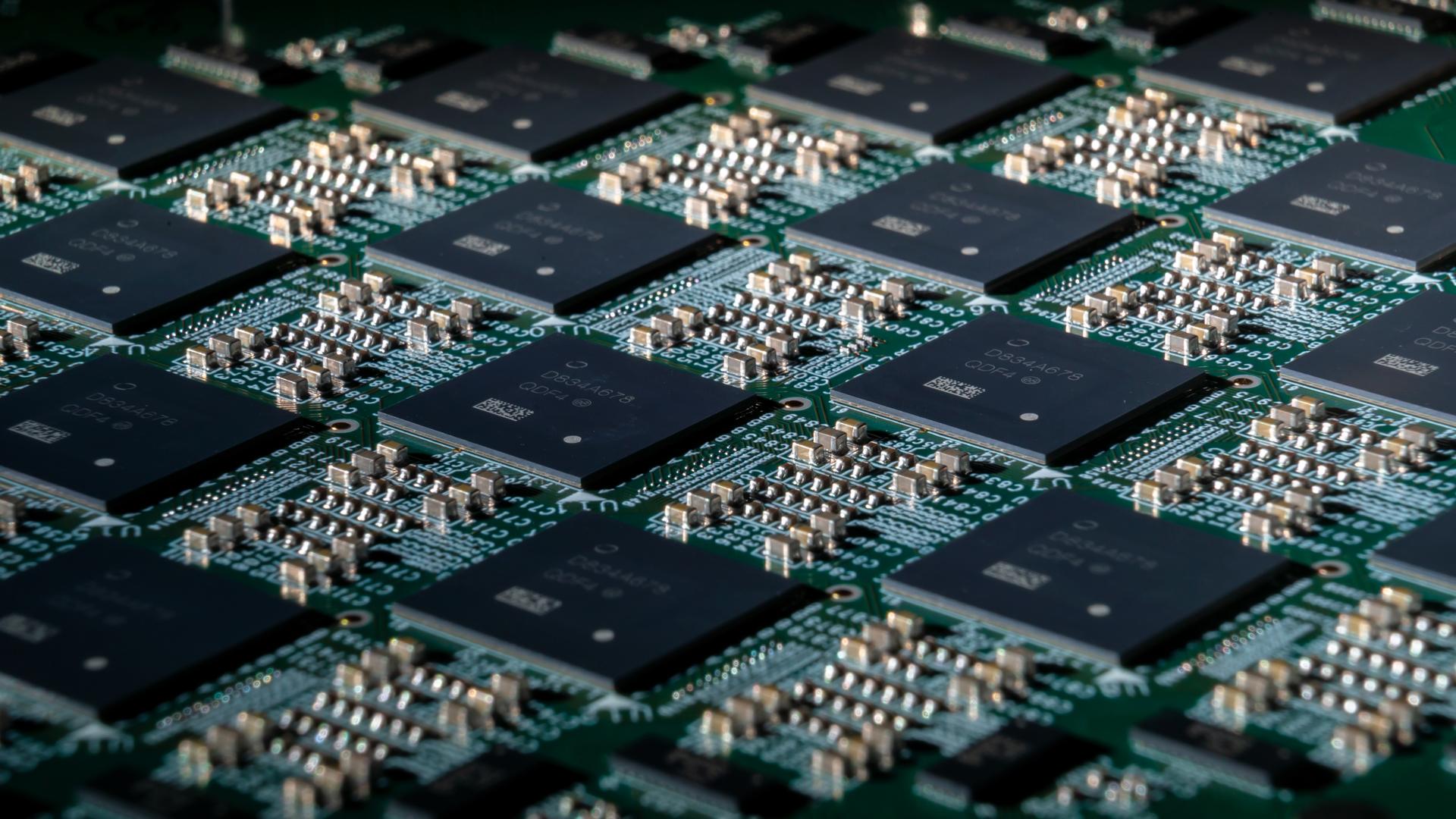

“Broadcom is taking a lead here in terms of unifying all of these various networking fabrics so they can truly incorporate using standards-based technology, which is BGP and EVPN, which is the market,” said Prashanth Shenoy, CMO and vice president of marketing for the VMware Cloud Foundation (VCF) Division at Broadcom, during a press briefing. “We truly believe that it will drastically simplify networking operations in the modern, private cloud world, and this unified standards-based fabric approach will also enhance interoperability between the application environment and the underlying network infrastructure, providing that cloud-like simplicity that our customers really, really require.”

Broadcom explained its strategy of using Ethernet VPN (EVPN) and Border Gateway Protocol (BGP) to enhance interoperability between application environments and the network. Both are established standards. EVPN extends Layer 2 Ethernet connectivity across a Layer 3 network, such as MPLS or IP, which integrates different control planes and simplifies network management. BGP enables capabilities such as network redundancy and traffic management.

Broadcom said its standards-based approach will help enterprises overcome the challenges of adopting AI at scale, which can intensify requirements for performance, security, and resiliency.