
Starting in early 2025, Telehouse International Corporation of Europe will offer an advanced liquid cooling lab at its newest data center, Telehouse South, on the London Docklands campus in Blackwall Yard.

Telehouse has partnered with four leading liquid-cooling technology vendors (Accelsius, JetCool, Legrand, and EkkoSense) so that customers can explore different cooling technologies and management tools and evaluate how well they suit their own applications.

Dr. Stu Redshaw, Chief Technology and Innovation Officer at EkkoSense, said about the project:

Given that it’s not possible to run completely liquid-cooled data centers, the reality for most data center operators is that liquid cooling and air cooling will have an important role to play in the cooling mix – most likely as part of an evolving hybrid cooling approach. However, key engineering questions need answering before simply deploying liquid cooling – including establishing the exact blend of air and liquid cooling technologies you’ll need. And also recognizing the complexity of managing the operation of a hybrid air cooling and liquid cooling approach within the same room. This increases the need for absolute real-time white space visibility, and Telehouse’s liquid cooling lab will provide a great way to explore these issues.


Partnering with a Range of Technology Vendors

The four companies partnering with Telehouse represent different technologies currently available in the liquid cooling market.

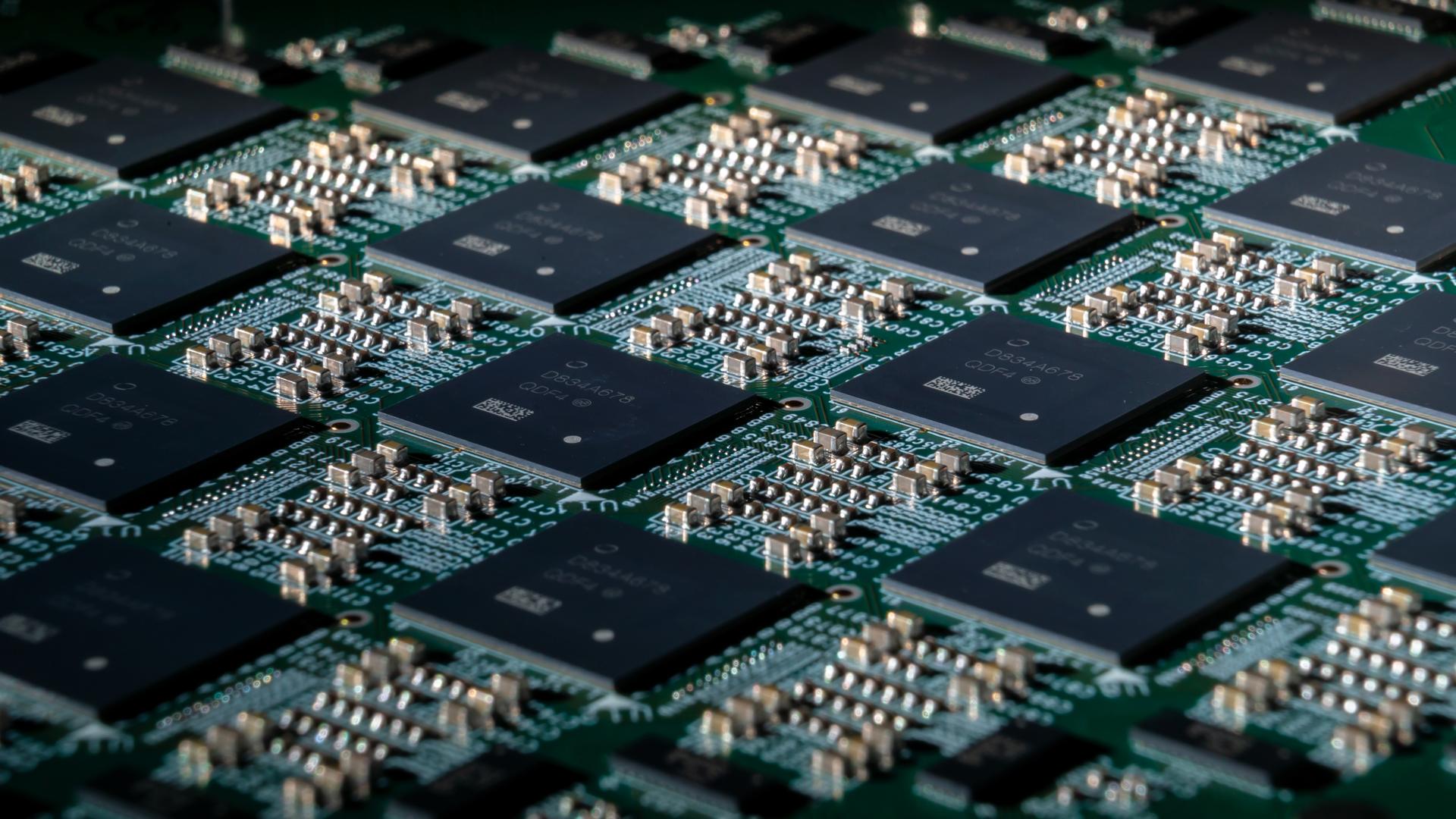

Accelsius: The company will be providing its NeuCool platform. This direct-to-chip cooling solution uses a waterless, nonconductive refrigerant to remove heat, offering an environmentally friendly platform that minimizes a data center’s water usage footprint. Built to provide sufficient headroom for AI and HPC deployments, the system is designed to remove an average heat flux of 250 W/cm² and hot-spot heat fluxes above 500 W/cm².

Included in the lab will be the Accelsius Thermal Simulation Rack, which was demonstrated at last year’s SuperComputing 24 conference and was announced earlier this month for a planned test deployment at a data center lab in Miami, Florida.

Josh Claman, CEO of Accelsius, remarked:

Partnering with Telehouse represents a significant milestone in our global expansion strategy. By showcasing our innovative cooling technology in one of the world’s most significant data centre hubs, we’re demonstrating our commitment to the UK market and ensuring that our cutting-edge solutions are readily accessible to potential clients across the region. As AI and other compute-intense applications continue to expand exponentially, supported by higher performance and hotter chips, our NeuCool system provides the headroom to manage these new, powerful accelerators, essentially future-proofing the data centre cooling system.


JetCool: For the Telehouse liquid cooling lab, JetCool (recently acquired by Flex) is offering its SmartPlate System, a self-contained liquid cooling solution in 1U and 2U form factors that requires no piping, plumbing, or facility modifications. The system uses microconvective liquid cooling and can handle processors over 3,500 W, making it suitable for all current and announced CPUs, GPUs, and AI accelerators.

The deployment in the lab will also include the JetCool in-rack SmartSense Coolant Distribution Unit (CDU). Scalable to over 2 MW per row, this CDU can cool more than 300 kW per rack.

Dr. Bernie Malouin, CEO of JetCool, said:

Telehouse has been a trusted partner for industries with the most demanding performance and reliability needs, including finance and telecommunications, where we already share valued customers. This partnership offers a unique opportunity to bring JetCool’s advanced liquid cooling technology to colocation customers facing power constraints, enabling greater efficiency and compute density. Together with Telehouse, we are delivering practical, high-performance cooling solutions that address real-world challenges and unlock new sustainability and performance metrics from the rack to the facility level.


USystems: This Legrand company will be installing its ColdLogik CL20 Rear Door Heat Exchanger (RDHx), which enables an equipped cabinet to handle over 90 kW. As displayed at the Telehouse lab, this family of RDHx units offers both active and passive cooling technologies to address a wide range of customer demands.

Designed to address heat at its source, the technology can remove the need for explicit air mixing or containment solutions. The IT equipment fans draw ambient air into the rack, fans in the RDHx then move that air over the heat exchanger, and the cooled air is returned to the equipment room.

The process is managed by the proprietary ColdLogik adaptive intelligence, which uses air-assisted liquid cooling to efficiently control the temperature of the entire room. According to James Giblette, Business Unit Director, Legrand UK & Ireland:

This is a very promising partnership which provides us with another opportunity to demonstrate how our solutions-based, problem-solving, integrated approach helps the data centre industry move towards a more sustainable, energy-efficient future at a time of high-density, high-performance computing. This is a fast-changing market where coordinated innovation and comprehensive approach are what deliver results.


EkkoSense: Telehouse already uses this company’s EkkoSoft Critical platform across its London Docklands campus, Telehouse London, which offers five colocation sites across the city. In the lab, this AI-powered data center optimization software will be joined by the company’s EkkoSim ‘what-if?’ scenario simulations, air-side and liquid-side monitoring sensors, and web-based EkkoSoft Critical 3D visualisations. The software has already given the Telehouse operations team a better view of cooling and capacity performance and made the team more efficient in its day-to-day tasks.


The Telehouse South data center, where the liquid cooling lab is deployed, is a multi-story building that offers 31,000 square meters of floor space with 2.7 MW of capacity per floor, totalling 18 MW of critical power. Each floor can support 667 industry-standard racks and has dual redundant power with backup generators in an N+1 configuration.
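As a rough back-of-the-envelope illustration (not a figure published by Telehouse), the per-floor numbers above can be turned into an average power budget per rack. The sketch below assumes the 2.7 MW (2,700 kW) is spread evenly across all 667 racks, which real deployments would not do exactly, and the function names are hypothetical.

```python
def average_rack_power_kw(floor_capacity_kw: float, racks_per_floor: int) -> float:
    """Average power per rack in kW, assuming capacity is shared evenly (an assumption)."""
    if racks_per_floor <= 0:
        raise ValueError("racks_per_floor must be positive")
    return floor_capacity_kw / racks_per_floor


def racks_supported(floor_capacity_kw: float, rack_power_kw: float) -> int:
    """Number of racks of a given power draw that one floor's capacity could feed."""
    if rack_power_kw <= 0:
        raise ValueError("rack_power_kw must be positive")
    return int(floor_capacity_kw // rack_power_kw)


# Figures from the article: 2.7 MW per floor, 667 racks per floor.
avg_kw = average_rack_power_kw(2700, 667)
print(f"Average per-rack budget: {avg_kw:.2f} kW")  # prints 4.05 kW

# Hypothetical comparison: racks drawing 90 kW each (the RDHx figure quoted earlier).
print(f"Racks at 90 kW each per floor: {racks_supported(2700, 90)}")  # prints 30
```

Under that even-split assumption, a floor averages roughly 4 kW per rack, while the same floor could power only about 30 cabinets at 90 kW each. The gap gives a sense of how far high-density racks sit above the floor-wide average.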

In discussing the company’s liquid cooling research announcement, Mark Pestridge, Executive Vice President & General Manager, Telehouse Europe, said:

Our liquid cooling lab is a very exciting partnership with three of the most innovative and experienced companies in the cutting-edge field of liquid cooling technology. We are committed to providing our customers with the best solutions that meet their needs for high levels of efficiency and sustainability.

The challenges generated by the growth of high-performance, high-density computing and AI are very significant, but we are confident that our collaborative approach and a firm focus on what is practical in an advanced data center will deliver real results and provide customers and prospects with a range of realistic options.


Concurrent Advancements in Data Center Liquid Cooling

As of January 2025, numerous other industry players are actively advancing liquid cooling technologies for data centers, driven by the escalating demands of AI and HPC applications. Notable companies and organizations developing and implementing innovative data center liquid cooling solutions include the following:

- In October 2024, Schneider Electric announced its acquisition of a 75% controlling stake in Motivair Corp., a U.S.-based liquid cooling specialist, for approximately $850 million. The acquisition aims to enhance Schneider’s offerings in direct-to-chip liquid cooling and high-capacity thermal systems, which are essential for handling the increased heat generated by the growing use of generative AI and LLMs.

- NVIDIA has been steadily advancing liquid cooling in its GB200 server racks, which use liquid cooling systems instead of traditional air cooling. The shift is driven by the need to manage the rising power consumption of data centers, projected to reach 8% of total U.S. power demand by 2030.

- Supermicro last year introduced clusters utilizing direct liquid cooling, resulting in higher performance and lower power consumption for entire data centers. The company notes this approach not only enhances efficiency but also reduces operational expenses.

- Hewlett Packard Enterprise (HPE) has launched purpose-built solutions powered by AMD, featuring optional direct liquid cooling. The company says this option aids data centers in meeting escalating power requirements, advancing sustainability goals, and lowering operational costs.

- The iDataCool project, a collaboration between the University of Regensburg’s physics department and IBM’s Research and Development Laboratory in Böblingen, Germany, focuses on cooling IT equipment with hot water and efficiently reusing waste heat. Operating since 2011, the project serves as a research platform for innovative cooling solutions in data centers.