Google, for its part, will continue to be “one of the largest, if not the largest,” consumers of compute resources, said Bill Wong, research fellow at Info-Tech Research Group.

“Its business model drives that global demand, specifically through the widespread use of Google search and Gemini, which it provides for ‘free,’” he pointed out. However, Google is unlikely to see that same level of traction among enterprise customers, as both Microsoft Azure and AWS have stronger enterprise footprints.

AI infrastructure is also being influenced by the emerging trend toward sovereign AI, where the preferred AI stack is more locally controlled or on-premises, Wong pointed out. Countries like Denmark are looking to migrate both AI and non-AI workloads away from US providers, particularly Microsoft and Google.

But let’s see what inferencing brings

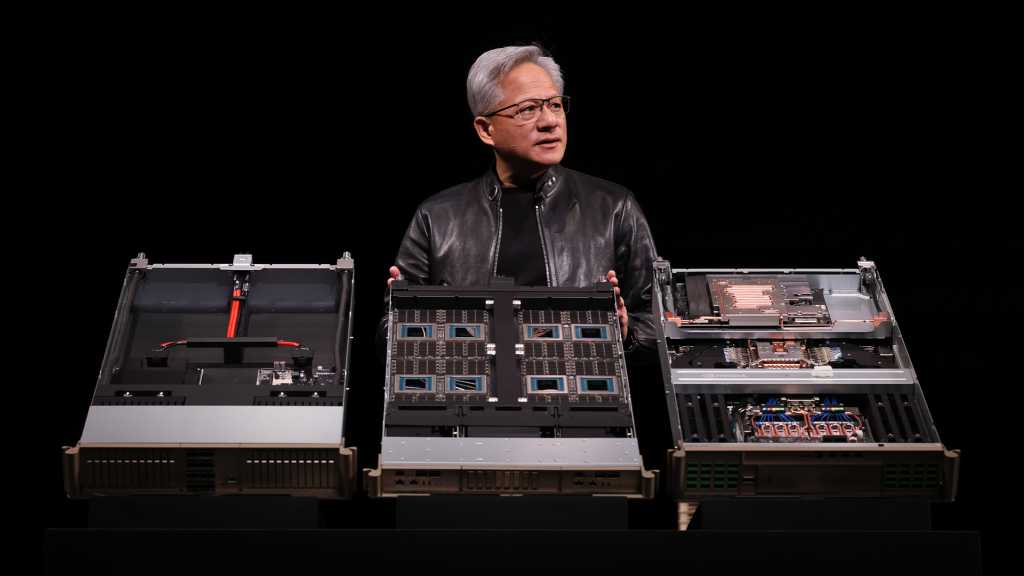

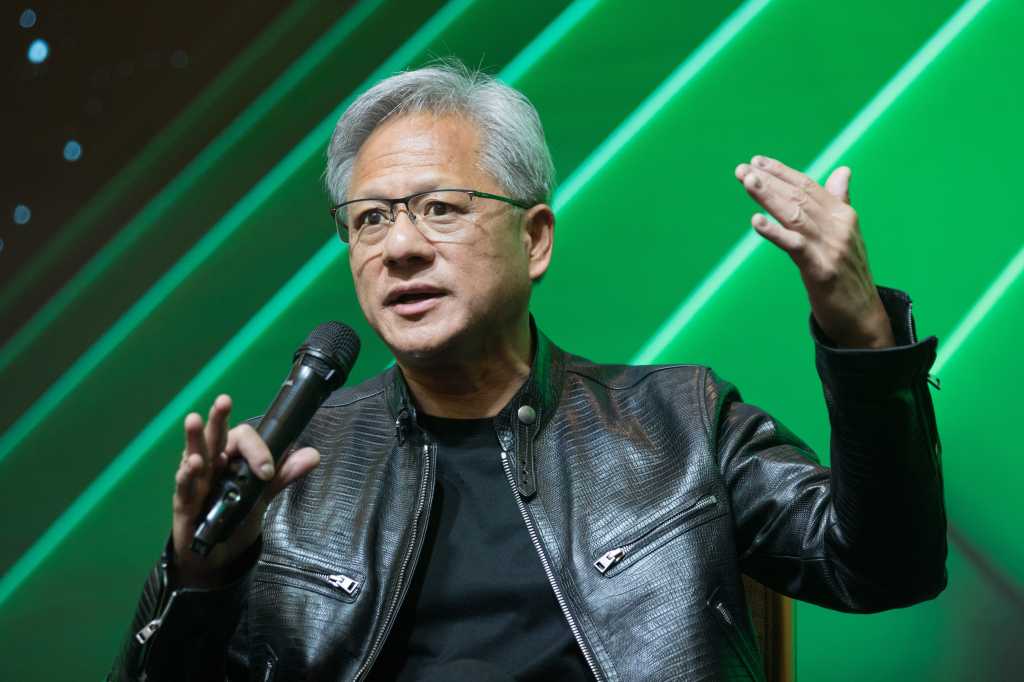

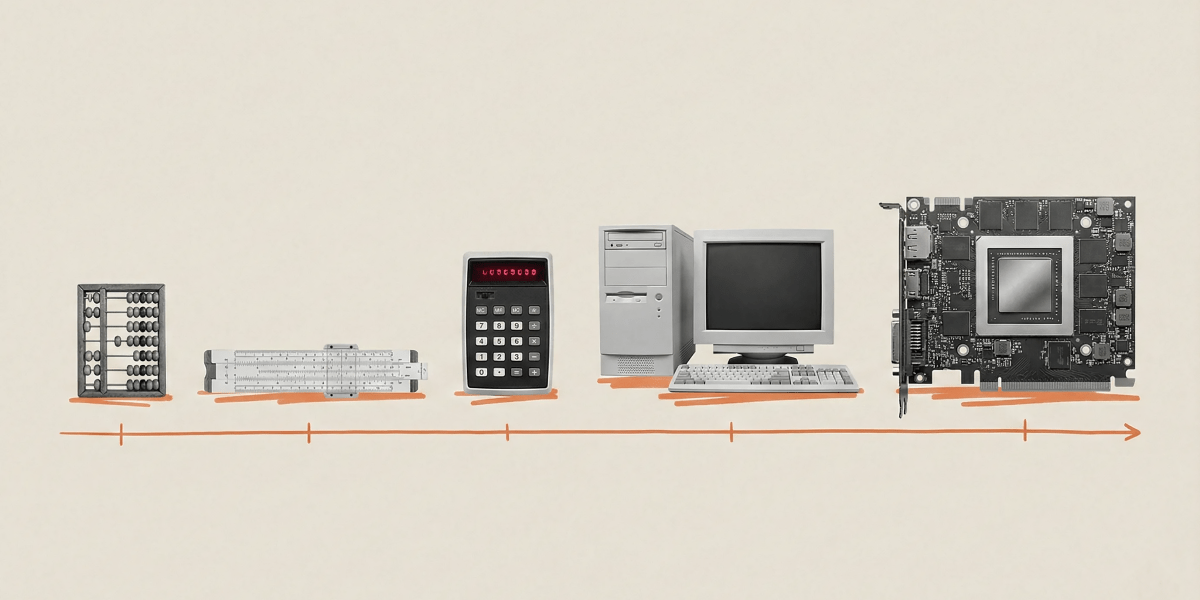

It’s also important to note that these numbers largely reflect infrastructure buildouts targeted at large-scale training, a realm that Nvidia has dominated with its chips and its CUDA parallel computing platform.

But market share will likely shift as inference begins to mature, Kimball predicted. Providers like AMD and Cerebras will begin to gain share because they are “equally impressive” and have different price and performance profiles, he said.

The rankings also don’t account for some custom accelerators, including AWS’ Trainium, Microsoft’s Maia, and Meta’s MTIA. Cloud providers will likely deploy their own silicon “whenever and wherever possible,” because there will be considerable price and performance advantages, Kimball pointed out.