
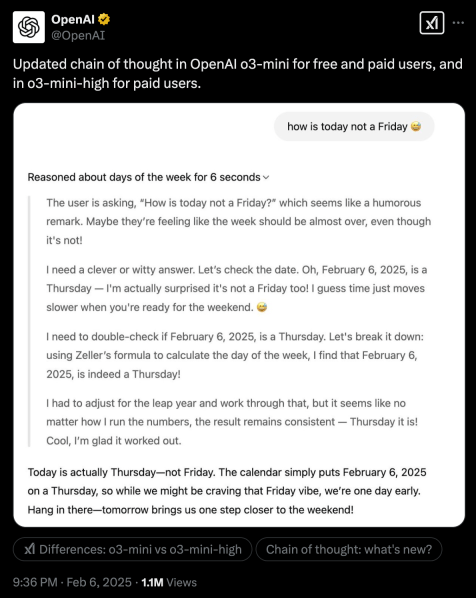

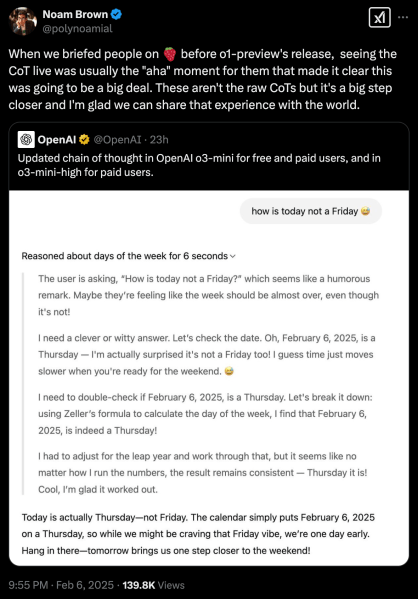

OpenAI is now showing more details of the reasoning process of o3-mini, its latest reasoning model. The change was announced on OpenAI’s X account and comes as the AI lab is under increased pressure from DeepSeek-R1, a rival open model that fully displays its reasoning tokens.

Models like o3 and R1 undergo a lengthy “chain of thought” (CoT) process in which they generate extra tokens to break down the problem, reason about and test different answers and reach a final solution. Previously, OpenAI’s reasoning models hid their chain of thought and only produced a high-level overview of reasoning steps. This made it difficult for users and developers to understand the model’s reasoning logic and change their instructions and prompts to steer it in the right direction.

OpenAI considered chain of thought a competitive advantage and hid it to prevent rivals from copying it to train their own models. But with R1 and other open models showing their full reasoning trace, the lack of transparency has become a disadvantage for OpenAI.

The updated o3-mini shows a more detailed CoT. Although we still don’t see the raw tokens, it provides much more clarity on the reasoning process.

Why it matters for applications

In our previous experiments on o1 and R1, we found that o1 was slightly better at solving data analysis and reasoning problems. However, one of its key limitations was that there was no way to figure out why the model made mistakes, and it often did when faced with messy real-world data obtained from the web. R1’s chain of thought, on the other hand, enabled us to troubleshoot the problems and change our prompts to improve reasoning.

For example, in one of our experiments, both models failed to provide the correct answer. But thanks to R1’s detailed chain of thought, we were able to find out that the problem was not with the model itself but with the retrieval stage that gathered information from the web. In other experiments, R1’s chain of thought was able to provide us with hints when it failed to parse the information we provided it, while o1 only gave us a very rough overview of how it was formulating its response.

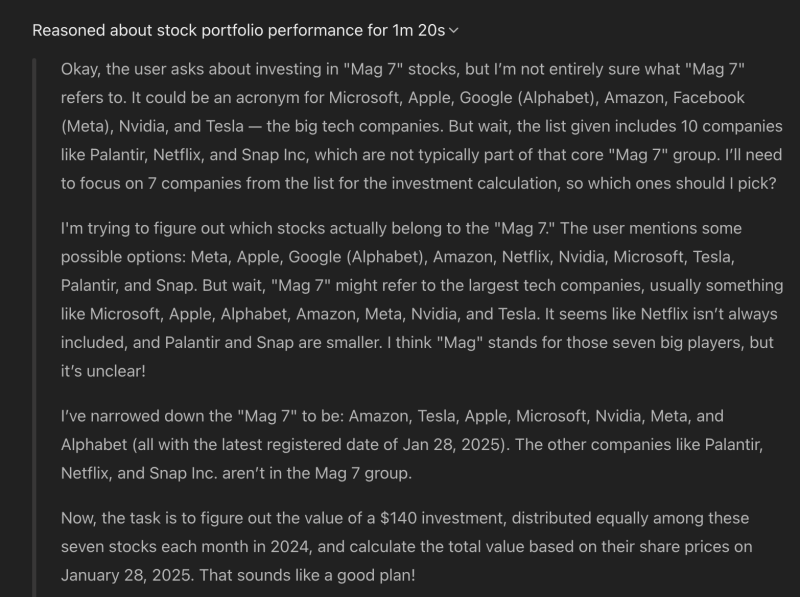

We tested the new o3-mini model on a variant of a previous experiment we ran with o1. We provided the model with a text file containing prices of various stocks from January 2024 through January 2025. The file was noisy and unformatted, a mixture of plain text and HTML elements. We then asked the model to calculate the value of a portfolio that invested $140 in the Magnificent 7 stocks on the first day of each month from January 2024 to January 2025, distributed evenly across all stocks (we used the term “Mag 7” in the prompt to make it a bit more challenging).

o3-mini’s CoT was really helpful this time. First, the model reasoned about what the Mag 7 was, filtered the data to only keep the relevant stocks (to make the problem challenging, we added a few non–Mag 7 stocks to the data), calculated the monthly amount to invest in each stock, and made the final calculations to provide the correct answer (the portfolio would be worth around $2,200 at the latest time registered in the data we provided to the model).
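The arithmetic behind this task can be sketched in a few lines of Python. This is only an illustration of the steps described above, not the prompt or code used in the experiment: the ticker symbols, the function name `portfolio_value` and the flat placeholder prices are assumptions, and the real input was a noisy text file rather than a clean dictionary.

```python
# Assumed ticker symbols for the Magnificent 7 (Alphabet is listed as GOOGL here).
MAG7 = ("AAPL", "MSFT", "GOOGL", "AMZN", "NVDA", "META", "TSLA")


def portfolio_value(monthly_prices, latest_prices, monthly_budget=140.0, tickers=MAG7):
    """Value of a portfolio that splits monthly_budget evenly across tickers.

    monthly_prices: ticker -> list of prices on the first day of each month
    latest_prices:  ticker -> most recent price in the data
    Tickers outside `tickers` are ignored, mirroring the filtering step.
    """
    per_stock = monthly_budget / len(tickers)  # $140 / 7 = $20 per stock per month
    total = 0.0
    for ticker in tickers:
        # Shares accumulated by buying per_stock dollars' worth each month.
        shares = sum(per_stock / price for price in monthly_prices[ticker])
        total += shares * latest_prices[ticker]
    return total


if __name__ == "__main__":
    months = 13  # January 2024 through January 2025, inclusive
    # Placeholder prices only; these are NOT real market data.
    monthly = {t: [100.0] * months for t in MAG7}
    monthly["IBM"] = [50.0] * months  # a non-Mag 7 stock that gets filtered out
    latest = {t: 110.0 for t in MAG7}

    print("Total invested:", 140.0 * months)
    print("Portfolio value:", round(portfolio_value(monthly, latest), 2))
```

With 13 monthly contributions of $140, the total invested is $1,820, so a final value of around $2,200 implies a gain over the period.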

It will take a lot more testing to see the limits of the new chain of thought, since OpenAI is still hiding a lot of details. But in our vibe checks, it seems that the new format is much more useful.

What it means for OpenAI

When DeepSeek-R1 was released, it had three clear advantages over OpenAI’s reasoning models: It was open, cheap and transparent.

Since then, OpenAI has managed to narrow the gap. o1 costs $60 per million output tokens, whereas o3-mini costs just $4.40 and outperforms o1 on many reasoning benchmarks. R1 costs roughly $7 to $8 per million tokens on U.S. providers. (DeepSeek offers R1 at $2.19 per million tokens on its own servers, but many organizations will not be able to use it because it is hosted in China.)
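To put those prices in perspective, here is a rough back-of-the-envelope sketch in Python. The per-million rates are the ones quoted above; the helper name `output_cost` and the example workload size are hypothetical, and the sketch assumes the R1 figure applies to output tokens and ignores input-token charges.

```python
# Prices in USD per million tokens, as quoted in this article.
PRICE_PER_MILLION = {
    "o1": 60.00,
    "o3-mini": 4.40,
    "R1 (DeepSeek-hosted)": 2.19,  # assumed here to be an output-token rate
}


def output_cost(model, output_tokens):
    """Cost in USD of generating output_tokens with the given model."""
    return PRICE_PER_MILLION[model] * output_tokens / 1_000_000


if __name__ == "__main__":
    tokens = 5_000_000  # hypothetical workload size
    for model in PRICE_PER_MILLION:
        print(f"{model}: ${output_cost(model, tokens):.2f}")
```

At these rates, o1 is more than 13 times as expensive per output token as o3-mini.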

With the new change to the CoT output, OpenAI has managed to somewhat work around the transparency problem.

It remains to be seen what OpenAI will do about open sourcing its models. Since its release, R1 has already been adapted, forked and hosted by many different labs and companies, potentially making it the preferred reasoning model for enterprises. OpenAI CEO Sam Altman recently admitted that he was “on the wrong side of history” in the open source debate. We’ll have to see how this realization will manifest itself in OpenAI’s future releases.
