2. Rethinking Power on Every Level

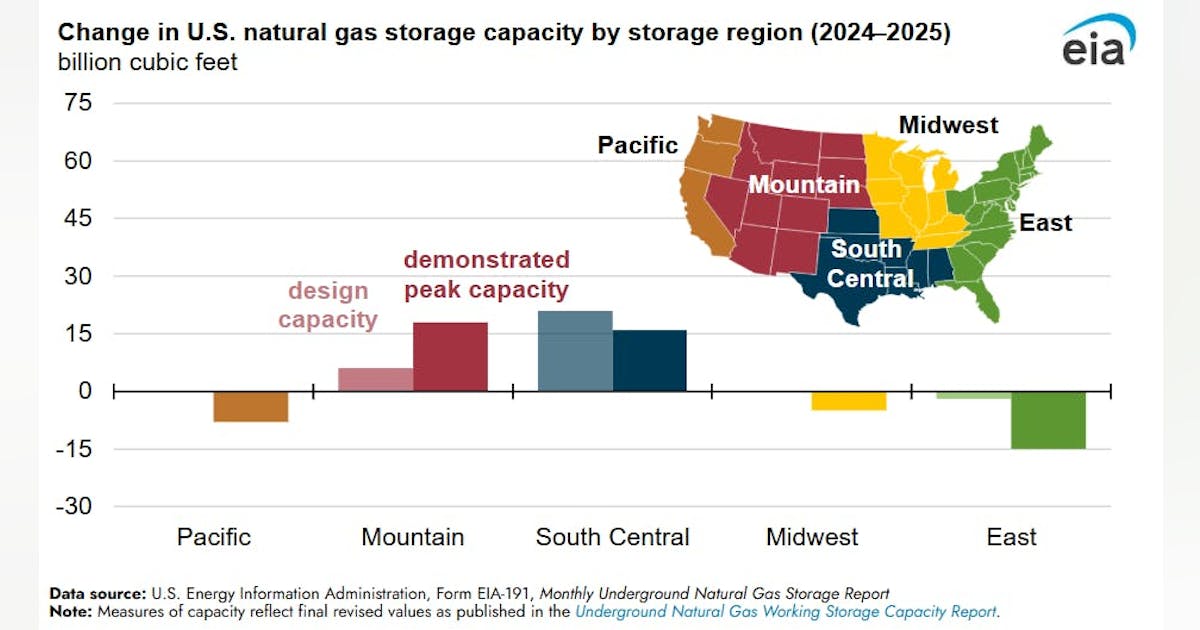

PREDICTION: Utilities are struggling to upgrade transmission networks to support the surging requirement for electricity to power data centers. CBRE recently said that data center construction completion timelines have been extended by 24 to 72 months due to power supply delays. Although the constraints in Northern Virginia have made headlines, power availability has quickly become a global challenge, impacting major markets in Europe and Asia as well as U.S. hubs like Ashburn, Santa Clara, and sections of Dallas and Suburban Chicago. Last year we predicted the rise of on-site power generation, but we’ve yet to truly see this at scale. Still, data center operators are working on a range of new approaches to power. Expect to see innovations in power continue as data centers seek better visibility into their power sourcing.

MASSIVE HIT: This prediction was a huge “Hit,” as evidenced by 2024 data from leading commercial real estate firms CBRE, JLL, and Cushman & Wakefield, among other sources. Throughout the year, data center operators reported significant challenges in securing adequate power from utilities, which drove increased interest in on-site power generation, as reflected in many industry discussions this year. The bottom line on this prediction may be this year’s DOE-backed report indicating that U.S. data center power demand could nearly triple in the next three years, potentially consuming up to 12% of the country’s electricity, which underscores the urgency for alternative power solutions. Among the largest data center markets, VPM and others noted that Dominion Energy is projecting unprecedented energy demand from data centers in Virginia, posing significant challenges for accommodating the industry’s growth in the coming decades. In a notable effort to close that gap, Dominion Energy, American Electric Power (AEP), and FirstEnergy this year reached a joint planning agreement to propose regional transmission projects across the PJM footprint, aiming to strengthen electric reliability over the next decade.

On the question of utilities struggling with power demand, CBRE reported this year that low supply, construction delays, and power challenges are impacting all markets. Highlighting the global nature of power constraints, Querétaro, Mexico, for example, has only 0.6 MW available for new data center projects. JLL noted that power challenges are not dampening record demand for U.S. data centers, while emphasizing that power availability remains a critical concern. CBRE’s analysis also stated that difficulty in procuring critical equipment could lead to power delivery delays of up to four years, significantly extending data center construction timelines. For its part, Cushman & Wakefield noted that where utility providers have been unable to provide power promptly, certain operators have collaborated with power companies to deliver substations or transmission lines, or to source microgrid power. The firm added that many of these agreements are now being signed directly with third-party energy generation developers, with wind, solar, battery storage, natural gas, and even geothermal developments moving quickly across markets.

As for on-site power generation, one major example from the past year is Bloom Energy partnering with AEP to deploy fuel cells that convert natural gas into electricity, providing an alternative to overburdened grids. Barron’s reported that AEP plans to purchase up to 1,000 MW of these cells, with a confirmed contract for 100 MW. Fresh data center nuclear energy initiatives have been too numerous over the past year to cite succinctly here. The most recent major example is Oklo, the ubiquitous new nuclear start-up backed by Sam Altman, which this month entered an agreement with Switch to supply up to 12 GW of electricity for data centers from small modular reactor (SMR) nuclear installations over the next two decades. Last year Oklo also announced it had secured partnerships to provide up to 750 MW of power for U.S. data centers, just one indication of the industry’s steady shift toward factoring in innovative on-site power generation solutions.

All of these data points corroborate our prediction, demonstrating that in 2024, utilities are indeed struggling to upgrade transmission networks to meet the surging electricity requirements of data centers. This has led to extended construction timelines, a global impact on major data center markets, and continued strong interest in deploying innovative on-site power solutions as operators seek better visibility and control over their energy sourcing.