It’s a weird time to be an AI doomer.

This small but influential community of researchers, scientists, and policy experts believes, in the simplest terms, that AI could get so good it could be bad—very, very bad—for humanity. Though many of these people would be more likely to describe themselves as advocates for AI safety than as literal doomsayers, they warn that AI poses an existential risk to humanity. They argue that absent more regulation, the industry could hurtle toward systems it can’t control. They commonly expect such systems to follow the creation of artificial general intelligence (AGI), a slippery concept generally understood as technology that can do whatever humans can do, and better.

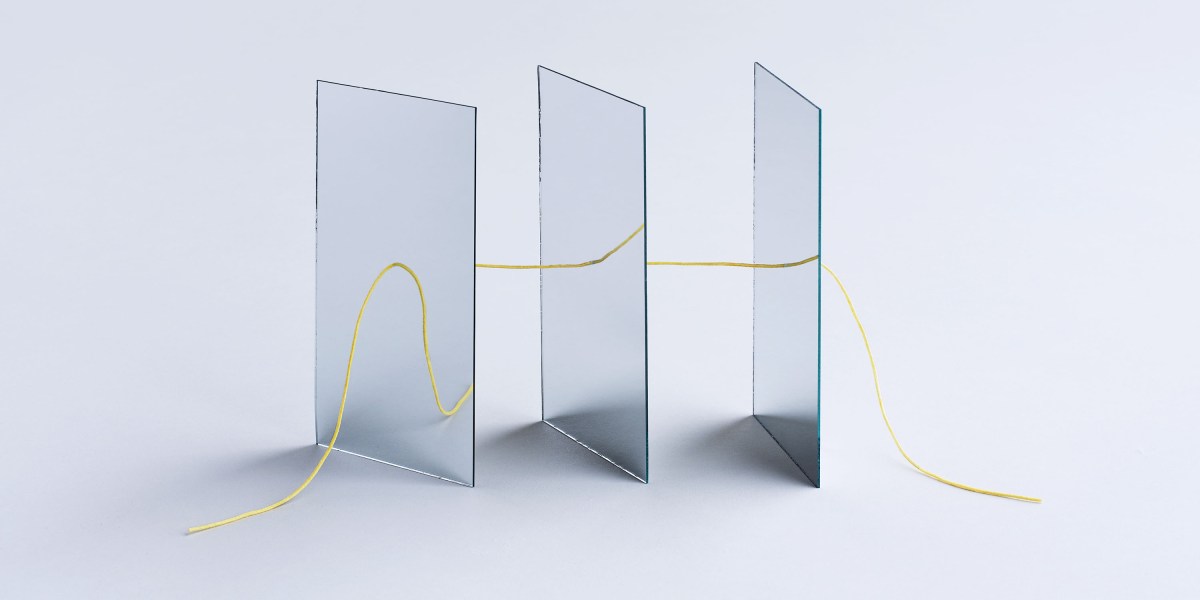

This story is part of MIT Technology Review’s Hype Correction package, a series that resets expectations about what AI is, what it makes possible, and where we go next.

Though this is far from a universally shared perspective in the AI field, the doomer crowd has had some notable success over the past several years: helping shape AI policy coming from the Biden administration, organizing prominent calls for international “red lines” to prevent AI risks, and getting a bigger (and more influential) megaphone as some of its adherents win science’s most prestigious awards.

But a number of developments over the past six months have put them on the back foot. Talk of an AI bubble has overwhelmed the discourse as tech companies continue to invest in multiple Manhattan Projects’ worth of data centers without any certainty that future demand will match what they’re building.

And then there was the August release of OpenAI’s latest foundation model, GPT-5, which proved something of a letdown. Maybe that was inevitable, since it was the most hyped AI release of all time; OpenAI CEO Sam Altman had boasted that GPT-5 felt “like a PhD-level expert” in every topic and told the podcaster Theo Von that the model was so good, it had made him feel “useless relative to the AI.”

Many expected GPT-5 to be a big step toward AGI, but whatever progress the model may have made was overshadowed by a string of technical bugs and the company’s mystifying, quickly reversed decision to shut off access to every old OpenAI model without warning. And while the new model achieved state-of-the-art benchmark scores, many people felt, perhaps unfairly, that in day-to-day use GPT-5 was a step backward.

All this would seem to threaten some of the very foundations of the doomers’ case. In turn, a competing camp of AI accelerationists, who fear AI is actually not moving fast enough and that the industry is constantly at risk of being smothered by overregulation, is seeing a fresh chance to change how we approach AI safety (or, maybe more accurately, how we don’t).

This is particularly true of the industry types who've decamped to Washington: "The Doomer narratives were wrong," declared David Sacks, the longtime venture capitalist turned Trump administration AI czar. "This notion of imminent AGI has been a distraction and harmful and now effectively proven wrong," echoed Sriram Krishnan, a tech investor and the White House's senior policy advisor for AI. (Sacks and Krishnan did not reply to requests for comment.)

(There is, of course, another camp in the AI safety debate: the group of researchers and advocates commonly associated with the label “AI ethics.” Though they also favor regulation, they tend to think the speed of AI progress has been overstated and have often written off AGI as a sci-fi story or a scam that distracts us from the technology’s immediate threats. But any potential doomer demise wouldn’t exactly give them the same opening the accelerationists are seeing.)

So where does this leave the doomers? As part of our Hype Correction package, we decided to ask some of the movement's biggest names to see if the recent setbacks and general vibe shift had altered their views. Are they frustrated that policymakers no longer seem to heed their warnings? Are they quietly adjusting their timelines for the apocalypse?

Recent interviews with 20 people who study or advocate AI safety and governance (including Nobel Prize winner Geoffrey Hinton, Turing Award winner Yoshua Bengio, and high-profile experts like former OpenAI board member Helen Toner) reveal that rather than feeling chastened or lost in the wilderness, they're still deeply committed to their cause, believing that AGI remains not just possible but incredibly dangerous.

At the same time, they seem to be grappling with a near contradiction. While they’re somewhat relieved that recent developments suggest AGI is further out than they previously thought (“Thank God we have more time,” says AI researcher Jeffrey Ladish), they also feel angry that people in power are not taking them seriously enough (Daniel Kokotajlo, lead author of a cautionary forecast called “AI 2027,” calls the Sacks and Krishnan tweets “deranged and/or dishonest”).

Broadly speaking, these experts see the talk of an AI bubble as no more than a speed bump, and disappointment in GPT-5 as more distracting than illuminating. They still generally favor more robust regulation and worry that progress on policy—the implementation of the EU AI Act; the passage of the first major American AI safety bill, California’s SB 53; and new interest in AGI risk from some members of Congress—has become vulnerable as Washington overreacts to what doomers see as short-term failures to live up to the hype.

Some were also eager to correct what they see as the most persistent misconceptions about the doomer world. Though their critics routinely mock them for predicting that AGI is right around the corner, they claim that’s never been an essential part of their case: It “isn’t about imminence,” says Berkeley professor Stuart Russell, the author of Human Compatible: Artificial Intelligence and the Problem of Control. Most people I spoke with say their timelines to dangerous systems have actually lengthened slightly in the last year—an important change given how quickly the policy and technical landscapes can shift.


Many of them, in fact, emphasize the importance of changing timelines. And even if they are just a tad longer now, Toner tells me that one big-picture story of the ChatGPT era is the dramatic compression of these estimates across the AI world. For a long while, she says, AGI was expected in many decades. Now, for the most part, the predicted arrival is sometime between the next few years and 20 years from now. So even if we have a little bit more time, she (and many of her peers) continue to see AI safety as incredibly, vitally urgent. She tells me that if AGI were possible anytime in even the next 30 years, "It's a huge fucking deal. We should have a lot of people working on this."

So despite the precarious moment doomers find themselves in, their bottom line remains that no matter when AGI is coming (and, again, they say it’s very likely coming), the world is far from ready.

Maybe you agree. Or maybe you think this future is far from guaranteed. Or that it's the stuff of science fiction. You may even think AGI is a great big conspiracy theory. You're not alone, of course; this topic is polarizing. But whatever you think about the doomer mindset, there's no getting around the fact that certain people in this world have a lot of influence. So here are some of the most prominent people in the space, reflecting on this moment in their own words.

Interviews have been edited and condensed for length and clarity.

The Nobel laureate who’s not sure what’s coming

Geoffrey Hinton, winner of the Turing Award and the Nobel Prize in physics for pioneering deep learning

The biggest change in the last few years is that there are people who are hard to dismiss who are saying this stuff is dangerous. Like, [former Google CEO] Eric Schmidt, for example, really recognized this stuff could be really dangerous. He and I were in China recently talking to someone on the Politburo, the party secretary of Shanghai, to make sure he really understood—and he did. I think in China, the leadership understands AI and its dangers much better because many of them are engineers.

I’ve been focused on the longer-term threat: When AIs get more intelligent than us, can we really expect that humans will remain in control or even relevant? But I don’t think anything is inevitable. There’s huge uncertainty on everything. We’ve never been here before. Anybody who’s confident they know what’s going to happen seems silly to me. I think this is very unlikely but maybe it’ll turn out that all the people saying AI is way overhyped are correct. Maybe it’ll turn out that we can’t get much further than the current chatbots—we hit a wall due to limited data. I don’t believe that. I think that’s unlikely, but it’s possible.

I also don’t believe people like Eliezer Yudkowsky, who say if anybody builds it, we’re all going to die. We don’t know that.

But if you go on the balance of the evidence, I think it’s fair to say that most experts who know a lot about AI believe it’s very probable that we’ll have superintelligence within the next 20 years. [Google DeepMind CEO] Demis Hassabis says maybe 10 years. Even [prominent AI skeptic] Gary Marcus would probably say, “Well, if you guys make a hybrid system with good old-fashioned symbolic logic … maybe that’ll be superintelligent.” [Editor’s note: In September, Marcus predicted AGI would arrive between 2033 and 2040.]

And I don’t think anybody believes progress will stall at AGI. I think more or less everybody believes a few years after AGI, we’ll have superintelligence, because the AGI will be better than us at building AI.

So while I think it’s clear that the winds are getting more difficult, simultaneously, people are putting in many more resources [into developing advanced AI]. I think progress will continue just because there’s many more resources going in.

The deep learning pioneer who wishes he’d seen the risks sooner

Yoshua Bengio, winner of the Turing Award, chair of the International AI Safety Report, and founder of LawZero

Some people thought that GPT-5 meant we had hit a wall, but that isn’t quite what you see in the scientific data and trends.

There have been people overselling the idea that AGI is tomorrow morning, which commercially could make sense. But if you look at the various benchmarks, GPT-5 is just where you would expect the models at that point in time to be. By the way, it’s not just GPT-5, it’s Claude and Google models, too. In some areas where AI systems weren’t very good, like Humanity’s Last Exam or FrontierMath, they’re getting much better scores now than they were at the beginning of the year.

At the same time, the overall landscape for AI governance and safety is not good. There’s a strong force pushing against regulation. It’s like climate change. We can put our head in the sand and hope it’s going to be fine, but it doesn’t really deal with the issue.

The biggest disconnect with policymakers is a misunderstanding of the scale of change that is likely to happen if the trend of AI progress continues. A lot of people in business and governments simply think of AI as just another technology that's going to be economically very powerful. They don't understand how much it might change the world if trends continue and we approach human-level AI.

Like many people, I had been blinding myself to the potential risks to some extent. I should have seen it coming much earlier. But it’s human. You’re excited about your work and you want to see the good side of it. That makes us a little bit biased in not really paying attention to the bad things that could happen.

Even a small chance—like 1% or 0.1%—of creating an accident where billions of people die is not acceptable.

The AI veteran who believes AI is progressing—but not fast enough to prevent the bubble from bursting

Stuart Russell, distinguished professor of computer science, University of California, Berkeley, and author of Human Compatible

I hope the idea that talking about existential risk makes you a “doomer” or is “science fiction” comes to be seen as fringe, given that most leading AI researchers and most leading AI CEOs take it seriously.

There have been claims that AI could never pass a Turing test, or you could never have a system that uses natural language fluently, or one that could parallel-park a car. All these claims just end up getting disproved by progress.

People are spending trillions of dollars to make superhuman AI happen. I think they need some new ideas, but there’s a significant chance they will come up with them, because many significant new ideas have happened in the last few years.

My fairly consistent estimate for the last 12 months has been that there's a 75% chance that those breakthroughs are not going to happen in time to rescue the industry from the bursting of the bubble. The investments are consistent with a prediction that we're going to have much better AI that will deliver much more value to real customers. But if those predictions don't come true, then there'll be a lot of blood on the floor in the stock markets.

However, the safety case isn’t about imminence. It’s about the fact that we still don’t have a solution to the control problem. If someone said there’s a four-mile-diameter asteroid that’s going to hit the Earth in 2067, we wouldn’t say, “Remind me in 2066 and we’ll think about it.” We don’t know how long it takes to develop the technology needed to control superintelligent AI.

Looking at precedents, the acceptable level of risk for a nuclear plant melting down is about one in a million per year. Extinction is much worse than that. So maybe set the acceptable risk at one in a billion. But the companies are saying it’s something like one in five. They don’t know how to make it acceptable. And that’s a problem.

The professor trying to set the narrative straight on AI safety

David Krueger, assistant professor in machine learning at the University of Montreal and Yoshua Bengio’s Mila Institute, and founder of Evitable

I think people definitely overcorrected in their response to GPT-5. But there was hype. My recollection was that there were multiple statements from CEOs at various levels of explicitness who basically said that by the end of 2025, we’re going to have an automated drop-in replacement remote worker. But it seems like it’s been underwhelming, with agents just not really being there yet.

I’ve been surprised how much these narratives predicting AGI in 2027 capture the public attention. When 2027 comes around, if things still look pretty normal, I think people are going to feel like the whole worldview has been falsified. And it’s really annoying how often when I’m talking to people about AI safety, they assume that I think we have really short timelines to dangerous systems, or that I think LLMs or deep learning are going to give us AGI. They ascribe all these extra assumptions to me that aren’t necessary to make the case.

I’d expect we need decades for the international coordination problem. So even if dangerous AI is decades off, it’s already urgent. That point seems really lost on a lot of people. There’s this idea of “Let’s wait until we have a really dangerous system and then start governing it.” Man, that is way too late.

I still think people in the safety community tend to work behind the scenes, with people in power, not really with civil society. It gives ammunition to people who say it’s all just a scam or insider lobbying. That’s not to say that there’s no truth to these narratives, but the underlying risk is still real. We need more public awareness and a broad base of support to have an effective response.

If you actually believe there’s a 10% chance of doom in the next 10 years—which I think a reasonable person should, if they take a close look—then the first thing you think is: “Why are we doing this? This is crazy.” That’s just a very reasonable response once you buy the premise.

The governance expert worried about AI safety’s credibility

Helen Toner, acting executive director of Georgetown University’s Center for Security and Emerging Technology and former OpenAI board member

When I got into the space, AI safety was more of a set of philosophical ideas. Today, it’s a thriving set of subfields of machine learning, filling in the gulf between some of the more “out there” concerns about AI scheming, deception, or power-seeking and real concrete systems we can test and play with.


AI governance is improving slowly. If we have lots of time to adapt and governance can keep improving slowly, I feel not bad. If we don’t have much time, then we’re probably moving too slow.

I think GPT-5 is generally seen as a disappointment in DC. There’s a pretty polarized conversation around: Are we going to have AGI and superintelligence in the next few years? Or is AI actually just totally all hype and useless and a bubble? The pendulum had maybe swung too far toward “We’re going to have super-capable systems very, very soon.” And so now it’s swinging back toward “It’s all hype.”

I worry that some aggressive AGI timeline estimates from some AI safety people are setting them up for a boy-who-cried-wolf moment. When the predictions about AGI coming in 2027 don't come true, people will say, "Look at all these people who made fools of themselves. You should never listen to them again." That's not the intellectually honest response, if they later changed their minds, or if their take was that they only thought it was 20% likely and that was still worth paying attention to. I think that shouldn't be disqualifying for people to listen to you later, but I do worry it will be a big credibility hit. And that will apply even to people who are very concerned about AI safety and never said anything about very short timelines.

The AI security researcher who now believes AGI is further out—and is grateful

Jeffrey Ladish, executive director at Palisade Research

In the last year, two big things updated my AGI timelines.

First, the lack of high-quality data turned out to be a bigger problem than I expected.

Second, the first “reasoning” model, OpenAI’s o1 in September 2024, showed reinforcement learning scaling was more effective than I thought it would be. And then months later, you see the o1 to o3 scale-up and you see pretty crazy impressive performance in math and coding and science—domains where it’s easier to sort of verify the results. But while we’re seeing continued progress, it could have been much faster.

All of this bumps up my median estimate for the start of fully automated AI research and development from three years to maybe five or six years. But those are kind of made-up numbers. It's hard. I want to caveat all this with, like, "Man, it's just really hard to do forecasting here."

Thank God we have more time. We have a possibly very brief window of opportunity to really try to understand these systems before they are capable and strategic enough to pose a real threat to our ability to control them.

But it’s scary to see people think that we’re not making progress anymore when that’s clearly not true. I just know it’s not true because I use the models. One of the downsides of the way AI is progressing is that how fast it’s moving is becoming less legible to normal people.

Now, this is not true in some domains—like, look at Sora 2. It is so obvious to anyone who looks at it that Sora 2 is vastly better than what came before. But if you ask GPT-4 and GPT-5 why the sky is blue, they’ll give you basically the same answer. It is the correct answer. It’s already saturated the ability to tell you why the sky is blue. So the people who I expect to most understand AI progress right now are the people who are actually building with AIs or using AIs on very difficult scientific problems.

The AGI forecaster who saw the critics coming

Daniel Kokotajlo, executive director of the AI Futures Project; an OpenAI whistleblower; and lead author of “AI 2027,” a vivid scenario where—starting in 2027—AIs progress from “superhuman coders” to “wildly superintelligent” systems in the span of months

AI policy seems to be getting worse, like the “Pro-AI” super PAC [launched earlier this year by executives from OpenAI and Andreessen Horowitz to lobby for a deregulatory agenda], and the deranged and/or dishonest tweets from Sriram Krishnan and David Sacks. AI safety research is progressing at the usual pace, which is excitingly rapid compared to most fields, but slow compared to how fast it needs to be.

We said on the first page of “AI 2027” that our timelines were somewhat longer than 2027. So even when we launched AI 2027, we expected there to be a bunch of critics in 2028 triumphantly saying we’ve been discredited, like the tweets from Sacks and Krishnan. But we thought, and continue to think, that the intelligence explosion will probably happen sometime in the next five to 10 years, and that when it does, people will remember our scenario and realize it was closer to the truth than anything else available in 2025.

Predicting the future is hard, but it’s valuable to try; people should aim to communicate their uncertainty about the future in a way that is specific and falsifiable. This is what we’ve done and very few others have done. Our critics mostly haven’t made predictions of their own and often exaggerate and mischaracterize our views. They say our timelines are shorter than they are or ever were, or they say we are more confident than we are or were.

I feel pretty good about having longer timelines to AGI. It feels like I just got a better prognosis from my doctor. The situation is still basically the same, though.

Garrison Lovely is a freelance journalist and the author of Obsolete, an online publication and forthcoming book on the discourse, economics, and geopolitics of the race to build machine superintelligence (out spring 2026). His writing on AI has appeared in the New York Times, Nature, Bloomberg, Time, the Guardian, The Verge, and elsewhere.