Audio Processing is one of the most important application domains of digital signal processing (DSP) and machine learning. Modeling acoustic environments is an essential step in developing digital audio processing systems such as: speech recognition, speech enhancement, acoustic echo cancellation, etc.

Acoustic environments are filled with background noise that can have multiple sources. For example, when sitting in a coffee shop, walking down the street, or driving your car, you hear sounds that can be considered as interference or background noise. Such interferences do not necessarily follow the same statistical model, and hence, a mixture of models can be useful in modeling them.

Those statistical models can also be useful in classifying acoustic environments into different categories, e.g., a quiet auditorium (class 1), or a slightly noisier room with closed windows (class 2), and a third option with windows open (class 3). In each case, the level of background noise can be modeled using a mixture of noise sources, each happening with a different probability and with a different acoustic level.

Another application of such models is in the simulation of acoustic noise in different environments based on which DSP and machine learning solutions can be designed to solve specific acoustic problems in practical audio systems such as interference cancellation, echo cancellation, speech recognition, speech enhancement, etc.

A simple statistical model that can be useful in such scenarios is the Gaussian Mixture Model (GMM) in which each of the different noise sources is assumed to follow a specific Gaussian distribution with a certain variance. All the distributions can be assumed to have zero mean while still being sufficiently accurate for this application, as also shown in this article.

Each of the GMM distributions has its own probability of contributing to the background noise. For example, there could be a consistent background noise that occurs most of the time, while other sources can be intermittent, such as the noise coming through windows, etc. All this has to be considered in our statistical model.

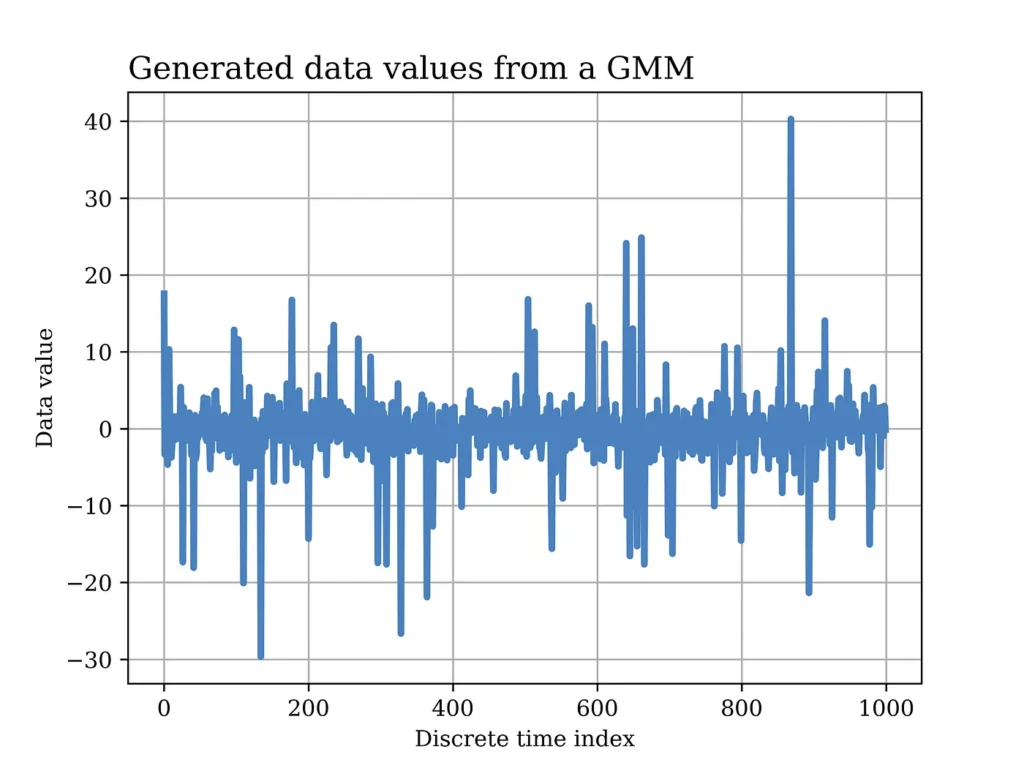

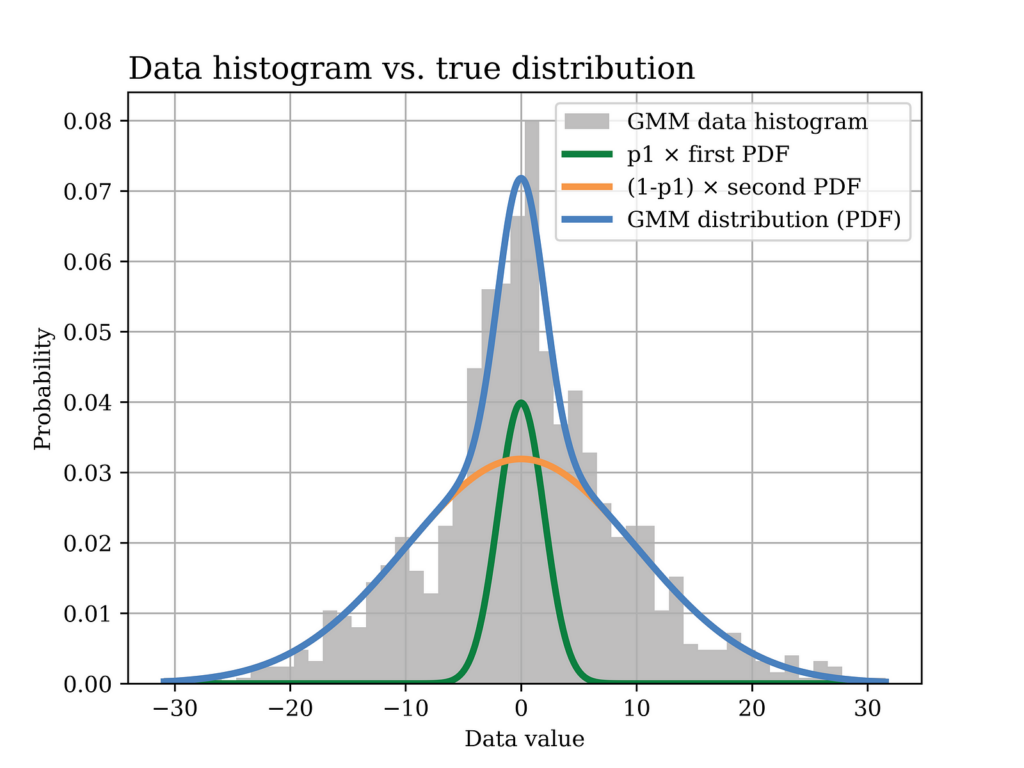

An example of simulated GMM data over time (normalized to the sampling time) is shown in the figure below in which there are two Gaussian noise sources, both of zero mean but with two different variances. In this example, the lower variance signal occurs more often with 90% probability hence the intermittent spikes in the generated data representing the signal with higher variance.

In other scenarios and depending on the application, it could be the other way around in which the high variance noise signal occurs more often (as will be shown in a later example in this article). Python code used to generate and analyze GMM data will also be shown later in this article.

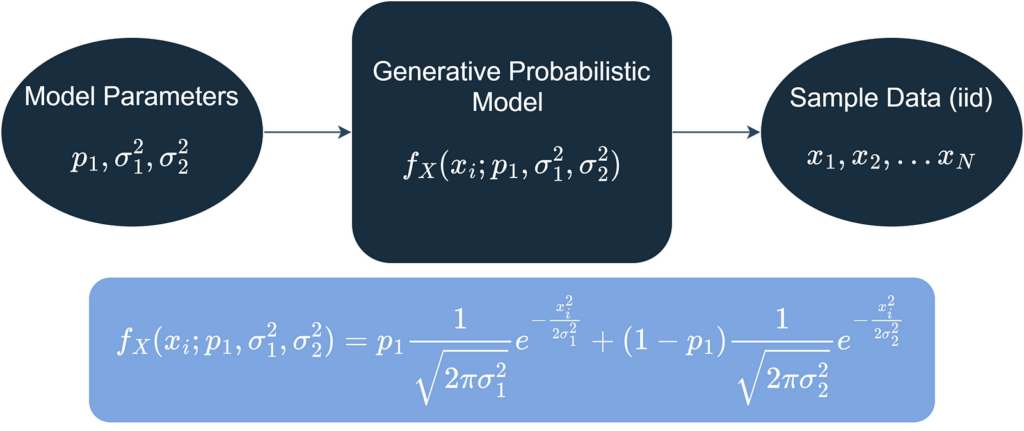

Turning to a more formal modelling language, let’s assume that the background noise signal that is collected (using a high-quality microphone for example) is modeled as realizations of independent and identically distributed (iid) random variables that follow a GMM as shown below.

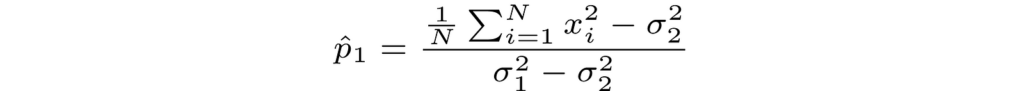

The modeling problem thus boils down to estimating the model parameters (i.e., p1, σ²1, and σ²2) using the observed data (iid). In this article, we will be using the method of moments (MoM) estimator for such purpose.

To simplify things further, we can assume that the noise variances (σ²1 and σ²2) are known and that only the mixing parameter (p1) is to be estimated. The MoM estimator can be used to estimate more than one parameter (i.e., p1, σ²1, and σ²2) as shown in Chapter 9 of the book: “Statistical Signal Processing: Estimation Theory”, by Steven Kay. However, in this example, we will assume that only p1 is unknown and to be estimated.

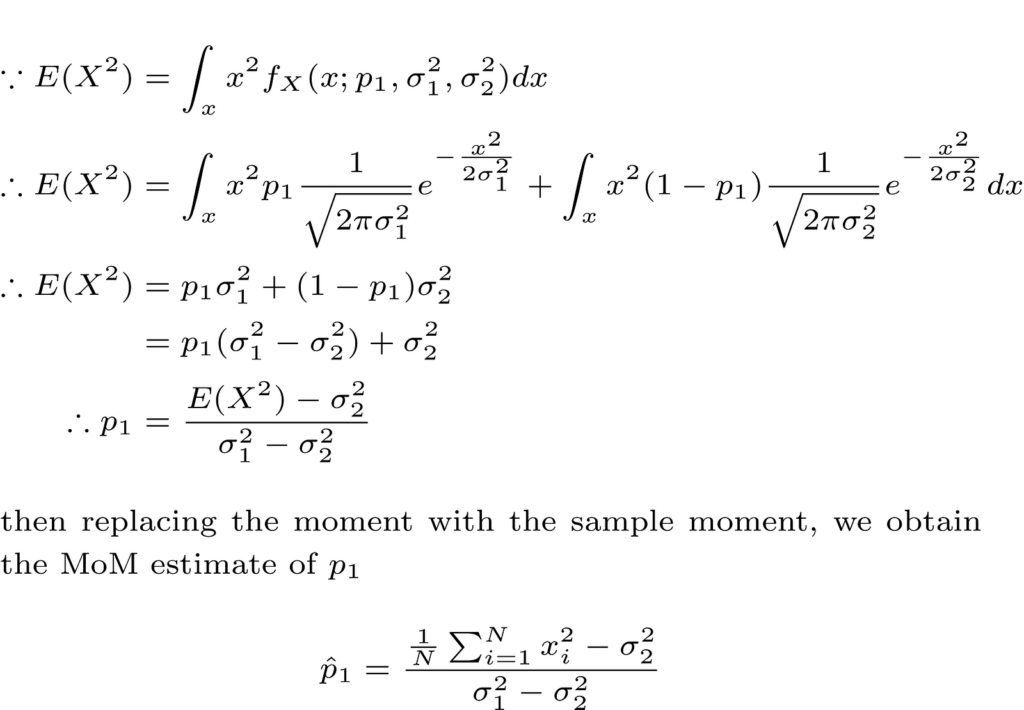

Since both gaussians in the GMM are zero mean, we will start with the second moment and try to obtain the unknown parameter p1 as a function of the second moment as follows.

Note that another simple method to obtain the moments of a random variable (e.g., second moment or higher) is by using the moment generating function (MGF). A good textbook in probability theory that covers such topics, and more is: “Introduction to Probability for Data Science”, by Stanley H. Chan.

Before proceeding any further, we would like to quantify this estimator in terms of the fundamental properties of estimators such as bias, variance, consistency, etc. We will verify this later numerically with a Python example.

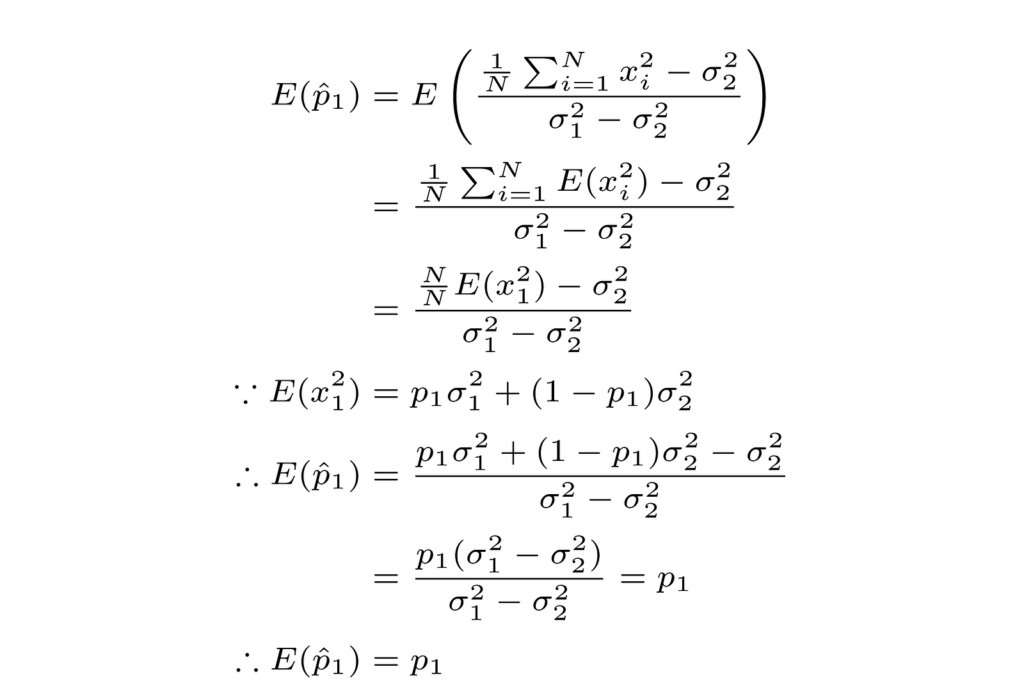

Starting with the estimator bias, we can show that the above estimator of p1 is indeed unbiased as follows.

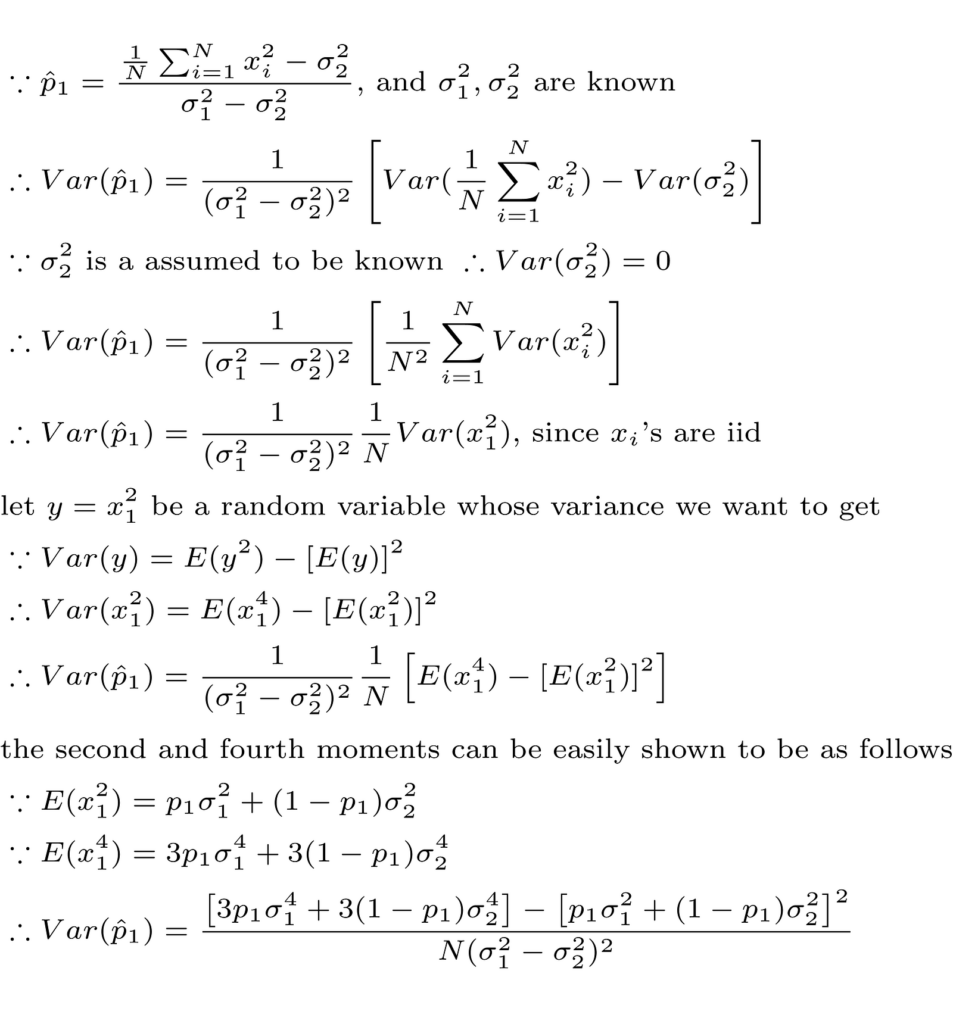

We can then proceed to derive the variance of our estimator as follows.

It is also clear from the above analysis that the estimator is consistent since it is unbiased and also its variance decreases when the sample size (N) increases. We will also use the above formula of the p1 estimator variance in our Python numerical example (shown in detail later in this article) when comparing theory with practical numerical results.

Now let’s introduce some Python code and do some fun stuff!

First, we generate our data that follows a GMM with zero means and standard deviations equal to 2 and 10, respectively, as shown in the code below. In this example, the mixing parameter p1 = 0.2, and the sample size of the data equals 1000.

# Import the Python libraries that we will need in this GMM example

import matplotlib.pyplot as plt

import numpy as np

from scipy import stats

# GMM data generation

mu = 0 # both gaussians in GMM are zero mean

sigma_1 = 2 # std dev of the first gaussian

sigma_2 = 10 # std dev of the second gaussian

norm_params = np.array([[mu, sigma_1],

[mu, sigma_2]])

sample_size = 1000

p1 = 0.2 # probability that the data point comes from first gaussian

mixing_prob = [p1, (1-p1)]

# A stream of indices from which to choose the component

GMM_idx = np.random.choice(len(mixing_prob), size=sample_size, replace=True,

p=mixing_prob)

# GMM_data is the GMM sample data

GMM_data = np.fromiter((stats.norm.rvs(*(norm_params[i])) for i in GMM_idx),

dtype=np.float64)Then we plot the histogram of the generated data versus the probability density function as shown below. The figure shows the contribution of both Gaussian densities in the overall GMM, with each density scaled by its corresponding factor.

The Python code used to generate the above figure is shown below.

x1 = np.linspace(GMM_data.min(), GMM_data.max(), sample_size)

y1 = np.zeros_like(x1)

# GMM probability distribution

for (l, s), w in zip(norm_params, mixing_prob):

y1 += stats.norm.pdf(x1, loc=l, scale=s) * w

# Plot the GMM probability distribution versus the data histogram

fig1, ax = plt.subplots()

ax.hist(GMM_data, bins=50, density=True, label="GMM data histogram",

color = GRAY9)

ax.plot(x1, p1*stats.norm(loc=mu, scale=sigma_1).pdf(x1),

label="p1 × first PDF",color = GREEN1,linewidth=3.0)

ax.plot(x1, (1-p1)*stats.norm(loc=mu, scale=sigma_2).pdf(x1),

label="(1-p1) × second PDF",color = ORANGE1,linewidth=3.0)

ax.plot(x1, y1, label="GMM distribution (PDF)",color = BLUE2,linewidth=3.0)

ax.set_title("Data histogram vs. true distribution", fontsize=14, loc='left')

ax.set_xlabel('Data value')

ax.set_ylabel('Probability')

ax.legend()

ax.grid()After that, we compute the estimate of the mixing parameter p1 that we derived earlier using MoM and which is shown here again below for reference.

The Python code used to compute the above equation using our GMM sample data is shown below.

# Estimate the mixing parameter p1 from the sample data using MoM estimator

p1_hat = (sum(pow(x,2) for x in GMM_data) / len(GMM_data) - pow(sigma_2,2))

/(pow(sigma_1,2) - pow(sigma_2,2))In order to properly assess this estimator, we use Monte Carlo simulation by generating multiple realizations of the GMM data and estimate p1 for each realization as shown in the Python code below.

# Monte Carlo simulation of the MoM estimator

num_monte_carlo_iterations = 500

p1_est = np.zeros((num_monte_carlo_iterations,1))

sample_size = 1000

p1 = 0.2 # probability that the data point comes from first gaussian

mixing_prob = [p1, (1-p1)]

# A stream of indices from which to choose the component

GMM_idx = np.random.choice(len(mixing_prob), size=sample_size, replace=True,

p=mixing_prob)

for iteration in range(num_monte_carlo_iterations):

sample_data = np.fromiter((stats.norm.rvs(*(norm_params[i])) for i in GMM_idx))

p1_est[iteration] = (sum(pow(x,2) for x in sample_data)/len(sample_data)

- pow(sigma_2,2))/(pow(sigma_1,2) - pow(sigma_2,2))Then, we check for the bias and variance of our estimator and compare to the theoretical results that we derived earlier as shown below.

p1_est_mean = np.mean(p1_est)

p1_est_var = np.sum((p1_est-p1_est_mean)**2)/num_monte_carlo_iterations

p1_theoritical_var_num = 3*p1*pow(sigma_1,4) + 3*(1-p1)*pow(sigma_2,4)

- pow(p1*pow(sigma_1,2) + (1-p1)*pow(sigma_2,2),2)

p1_theoritical_var_den = sample_size*pow(sigma_1**2-sigma_2**2,2)

p1_theoritical_var = p1_theoritical_var_num/p1_theoritical_var_den

print('Sample variance of MoM estimator of p1 = %.6f' % p1_est_var)

print('Theoretical variance of MoM estimator of p1 = %.6f' % p1_theoritical_var)

print('Mean of MoM estimator of p1 = %.6f' % p1_est_mean)

# Below are the results of the above code

Sample variance of MoM estimator of p1 = 0.001876

Theoretical variance of MoM estimator of p1 = 0.001897

Mean of MoM estimator of p1 = 0.205141We can observe from the above results that the mean of the p1 estimate equals 0.2051 which is very close to the true parameter p1 = 0.2. This mean gets even closer to the true parameter as the sample size increases. Thus, we have numerically shown that the estimator is unbiased as confirmed by the theoretical results done earlier.

Moreover, the sample variance of the p1 estimator (0.001876) is almost identical to the theoretical variance (0.001897) which is beautiful.

It is always a happy moment when theory matches practice!

All images in this article, unless otherwise noted, are by the author.