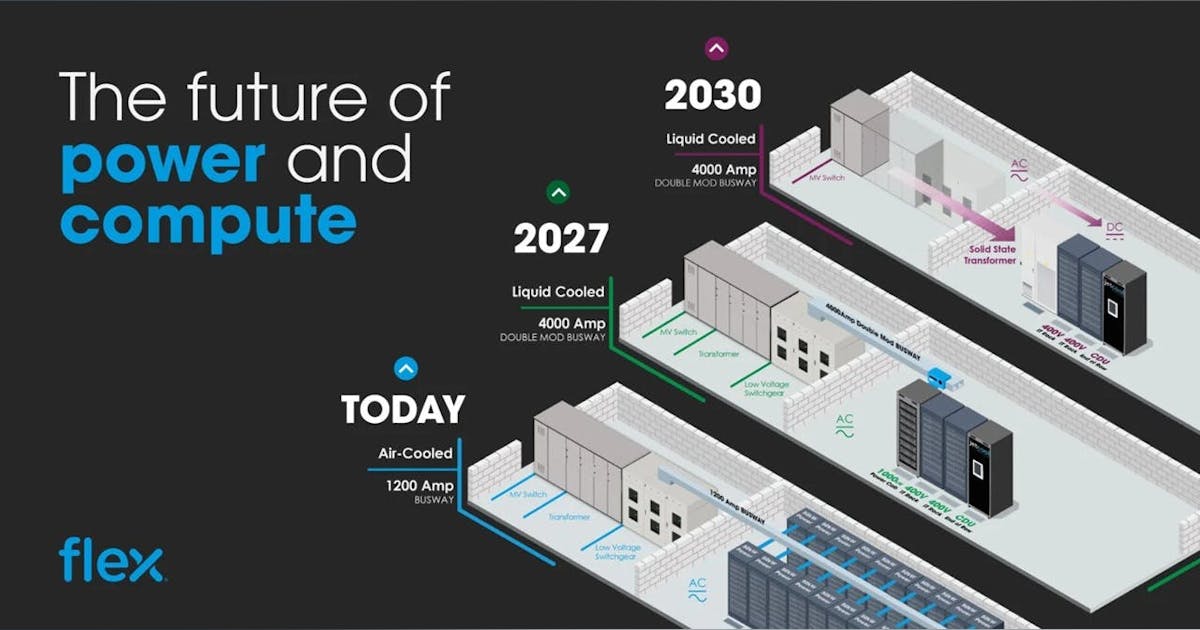

Over the last 18 months or so, the AI data center boom has significantly affected the energy generation industry and its public utilities. Experience across North America has shown that growth in power demand from hyperscale and AI data centers greatly exceeds the industry's ability to generate and deliver that power.

We have covered many of the efforts being made to manage the availability of power. Utilities and regulators have begun rethinking how to manage it through measures such as temporary moratoriums on new data center interconnections, new rate classes, cogeneration and load-sharing agreements, renewable integration, and power-driven site selection strategies.

But the bottom line is that in many locations utilities will need to change the way they work and how and where they spend their CAPEX budgets. Most utilities now acknowledge that their demand forecasting models have fallen behind reality, triggering revisions to Integrated Resource Plans (IRPs) and transmission buildouts nationwide. This mismatch between forecast and actual demand is forcing a fundamental rethink of capital expenditure priorities and long-term grid planning.

Spend More, Build Faster

Utilities are sharply increasing CAPEX and rebalancing their resource portfolios—not just for decarbonization, but to keep pace with multi-hundred-megawatt data center interconnects. This trend is spreading across the industry, not confined to a few isolated utilities. Notable examples include:

- Duke Energy raised its five-year CAPEX plan to $83 billion (a 13.7% increase) and plans to add roughly 5 GW of natural gas capacity by 2029 to meet accelerating data center and industrial load growth.
- Dominion Energy lifted its five-year capital plan to $50.1 billion, citing data centers as a primary driver while warning of potential rate pressure from grid modernization and capacity expansion.
- NV Energy’s 2024 Integrated Resource Plan explicitly models about 5.9 GW of bundled-service data center requests by 2033, supported by new gas, storage, and renewable additions.
- Salt River Project (Phoenix) is advancing a mixed portfolio of peaking gas and clean capacity, including the Coolidge Generating Station Expansion (a 575-MW project approved and now under construction), with additional peak resources under review.
- Tennessee Valley Authority (TVA) is moving forward on advanced nuclear for long-duration firming, including a BWRX-300 small modular reactor application for the Clinch River site, and a power purchase agreement with Kairos Power’s Hermes-2 test reactor expected to support Google data centers around 2030.

These CAPEX expansions fund both new generation capacity (modern combined-cycle and combustion-turbine plants, nuclear pilots, and renewables) and grid-side investments to move bulk power to where data centers are locating. Data center demand is now an explicit planning driver in utility integrated resource plans (IRPs) and board approvals.

New Rate Designs and Risk-Transfer Contracts Shift More Responsibility to Data Centers

Utilities are retooling tariffs to protect existing ratepayers and reduce the risk of serving megawatt-scale customers, and a number of different tariff designs have been put forth.

The goal is to ensure that the cost of new capacity and infrastructure upgrades falls primarily on large commercial users, particularly data centers, rather than being spread across residential and small-business customers. Several new tariff structures explicitly define data center capacity obligations and cost recovery terms. Examples include:

- New data center rate class: Dominion Energy has proposed a dedicated rate category for customers ≥25 MW with ≥75% load factor, featuring 14-year take-or-pay–style commitments to cover stranded-cost risk and ensure “full cost of service.”
- Take-or-pay / minimum-take provisions: Duke Energy is pursuing minimum-take contracts (requiring payment regardless of utilization) and up-front contributions for network upgrades tied to data center interconnections, part of a broader shift toward “contribution in aid of construction” (CIAC) requirements.
- Standby and curtailable service riders: Large-load customers are increasingly directed toward standby-generator and curtailable-service schedules to formalize flexibility. Dominion’s Schedule SG and Schedule CS are examples under their targeted sector programs.
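The eligibility thresholds and minimum-take idea above can be illustrated with a little arithmetic. The following is a minimal sketch, not any utility's actual tariff formula. The 25 MW and 75% thresholds come from the Dominion proposal described above. The assumptions that load factor is measured as average demand over peak demand, and that a minimum-take floor is a fixed fraction of contracted capacity, are illustrative, as are the names `BillingPeriod`, `meets_rate_class_thresholds`, `billed_energy_mwh`, and every number in the example.

```python
from dataclasses import dataclass


@dataclass
class BillingPeriod:
    """Hypothetical metering summary for one large-load customer."""
    peak_demand_mw: float   # highest demand observed in the period
    energy_mwh: float       # energy actually consumed in the period
    hours: float            # length of the period, e.g. 720 for 30 days


def load_factor(p: BillingPeriod) -> float:
    """Average demand divided by peak demand over the period (assumed definition)."""
    return (p.energy_mwh / p.hours) / p.peak_demand_mw


def meets_rate_class_thresholds(
    p: BillingPeriod, min_mw: float = 25.0, min_load_factor: float = 0.75
) -> bool:
    """Check the size and load-factor thresholds cited for the proposed rate class."""
    return p.peak_demand_mw >= min_mw and load_factor(p) >= min_load_factor


def billed_energy_mwh(
    p: BillingPeriod, contracted_mw: float, minimum_take_fraction: float
) -> float:
    """Bill the larger of actual use and an assumed minimum-take floor."""
    floor_mwh = contracted_mw * p.hours * minimum_take_fraction
    return max(p.energy_mwh, floor_mwh)


# Illustrative customers: one running steadily, one still ramping up.
steady = BillingPeriod(peak_demand_mw=100.0, energy_mwh=57_600.0, hours=720.0)
ramping = BillingPeriod(peak_demand_mw=100.0, energy_mwh=36_000.0, hours=720.0)

print(load_factor(steady), meets_rate_class_thresholds(steady))
print(load_factor(ramping), meets_rate_class_thresholds(ramping))
print(billed_energy_mwh(ramping, contracted_mw=100.0, minimum_take_fraction=0.75))
```

In this example the ramping customer uses 36,000 MWh but is billed for 54,000 MWh, which is the mechanism by which a minimum-take provision moves utilization risk from other ratepayers to the large-load customer.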

The net effect of these evolving tariffs is that utilities are socializing less risk from speculative or timing-uncertain AI loads, while providing greater queue certainty for data center developers willing to shoulder their share of capital costs.

Changing the Relationship Between Data Centers and Utilities

A major shift is underway: load flexibility is becoming a formal part of interconnection agreements. New legislation and regulatory frameworks across multiple regions are requiring data centers to demonstrate the ability to adjust or curtail power consumption during grid stress events.

From on-site cogeneration and load balancing to demand-response participation and remote-curtailment capabilities, utilities and regulators are increasingly embedding flexibility requirements into contracts and permitting. Examples include:

- Texas SB 6 (signed into law in June 2025): Beginning December 31, 2025, new large-load customers (≥75 MW) must be able to remotely curtail load or switch to on-site generation during emergencies. ERCOT will also begin procuring demand reductions from major customers. Interconnection agreements are expected to include fees, disclosure of backup power systems, and cost-sharing provisions as standard components.
- PJM and SPP: Both grid operators are fast-tracking policies to curtail or manage large loads during scarcity events. The SPP “HILL/CHILL” framework establishes a 90-day expedited interconnection path when large-load customers pair with generation resources, defining curtailable service classes for planning and market participation.
- Voluntary demand-response partnerships: To preempt mandatory curtailment mandates, some hyperscalers are signing voluntary flexibility agreements. Google, for instance, has reached demand-flexibility deals with Indiana Michigan Power and the Tennessee Valley Authority (TVA) to shift or reduce AI workloads during peak grid demand, an emerging model for bridging near-term capacity gaps.
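To make the flexibility requirement concrete, here is a minimal sketch of how a site might plan its response to a curtailment request. It is an illustration only and does not reflect ERCOT, PJM, or SPP procedures. The 75 MW threshold is the SB 6 figure cited above. The split of site load into deferrable work and everything else, the rule of shedding deferrable load before starting on-site generation, and the function names `needs_curtailment_capability` and `plan_grid_event_response` are all assumptions.

```python
def needs_curtailment_capability(peak_demand_mw: float, threshold_mw: float = 75.0) -> bool:
    """True if a new load meets the size threshold cited for Texas SB 6."""
    return peak_demand_mw >= threshold_mw


def plan_grid_event_response(
    site_load_mw: float,
    deferrable_mw: float,
    onsite_gen_mw: float,
    requested_reduction_mw: float,
) -> dict:
    """Split a requested grid-draw reduction between load shedding and on-site generation.

    Assumed policy: shed deferrable load first (e.g. batch workloads),
    then cover the remainder with on-site generation.
    """
    # A site cannot reduce its grid draw by more than it is drawing.
    requested = min(requested_reduction_mw, site_load_mw)
    shed = min(deferrable_mw, requested)
    onsite = min(onsite_gen_mw, requested - shed)
    return {
        "shed_mw": shed,
        "onsite_mw": onsite,
        "shortfall_mw": requested - shed - onsite,   # reduction the site cannot deliver
        "grid_draw_mw": site_load_mw - shed - onsite,
    }


# Illustrative numbers only.
plan = plan_grid_event_response(
    site_load_mw=120, deferrable_mw=30, onsite_gen_mw=40, requested_reduction_mw=50
)
print(needs_curtailment_capability(120), plan)
```

With these inputs the site sheds 30 MW of deferrable load, covers the remaining 20 MW with on-site generation, and drops its grid draw from 120 MW to 70 MW. A nonzero `shortfall_mw` would indicate that the site cannot meet the request with the flexibility it has declared.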

Collectively, these developments signal a new era of active coordination between utilities and data centers, in which grid reliability, flexibility, and cost allocation are shared operational priorities.

Upgrading Power Transmission: Accelerate, Reconductor, Right-Size

Serving today’s multi-gigawatt data centers and campus-scale developments requires both new transmission lines and rapid capacity upgrades along existing corridors. With data center interconnection queues expanding across nearly every regional transmission organization (RTO), utilities and regulators are under pressure to streamline approvals and unlock capacity faster.

- PJM approved $5.9–$6.7 billion in near-term transmission projects in 2025, explicitly citing data center–driven load growth and resource shifts as key justifications.
- Advanced conductors: Google and CTC Global have partnered with utilities to reconductor existing lines using ACCC® (Aluminum Conductor Composite Core) technology, boosting transmission capacity in months rather than years. Priority is being given to reconductoring lines that unlock capacity for new data center sites.
- Aggressive planning upgrades: PJM’s evolving Regional Transmission Expansion Plan (RTEP) process aims to “right-size” near-term fixes for long-term needs. The DOE and NREL have identified Grid-Enhancing Technologies (GETs) such as Dynamic Line Rating (DLR) and topology optimization as practical, near-term pathways for unlocking transmission capacity without lengthy new-build timelines.
- Federal rulemaking: Two FERC orders are reshaping how utilities and RTOs plan for both new generation and surging load growth:
  - FERC Order 2023 (2023): Requires cluster-based interconnection studies with enforceable deadlines and penalties, designed to reduce the interconnection backlog and expedite capacity additions.
  - FERC Order 1920/A/B (2024–2025): Mandates 20-year regional transmission plans, state involvement in cost allocation, and greater transparency, giving utilities a framework to plan around structural load growth driven by AI and industrial electrification.
- Process innovation: SPP is implementing a 90-day fast-track path for “high-impact large loads” paired with new generation under its HILL/CHILL policy framework.

Together, these measures reflect a system-wide acceleration of transmission modernization, combining new lines, smarter planning, and digital tools to move power where AI and hyperscale demand is rising fastest.

Green Tariffs and “Bring-Your-Own-Clean-Power” Programs Are Changing How Data Centers Source Energy

Utilities are expanding subscription-based renewable programs and custom clean-energy constructs that enable data centers to meet sustainability and carbon-reduction goals within regulated tariffs. For large-scale operators, the challenge lies in navigating a fragmented landscape: each utility administers its own program, requiring project-specific power purchase agreements (PPAs) and regional tailoring to reflect local policy and grid conditions.

Examples of key programs include:

- Duke Energy – Green Source Advantage (GSA / GSA Choice, North and South Carolina): Allows large customers to contract utility-backed renewable projects through long-term agreements. The program was expanded in 2024 to add new capacity and greater flexibility for participants.
- Georgia Power – CRSP / CARES: The Clean and Renewable Energy Subscription Program offers 1,000–2,100 MW of utility-procured renewables for large-customer subscription. QTS Data Centers subscribed to approximately 350 MW under CRSP for its Atlanta-area facilities.
- Dominion Energy – Schedule RG / Schedule CFG: Provides utility-sourced carbon-free and renewable energy options for large commercial and industrial (C&I) customers. Schedule RG (approved in 2018) and the newer Schedule CFG (for projects ≥1 MW) are voluntary programs that allow customers to directly procure renewable generation through Dominion-backed assets.

A growing number of utilities are evolving from pass-through energy suppliers to active counterparties, developing or contracting clean-energy assets that are directly matched to data center load profiles. This integrated approach (where power buyers participate in generation planning) aims to improve efficiency, transparency, and reliability in how large loads source renewable energy.

Regional Utility Responses Are Diverging

Not all power generation, and not all regulatory response, is created equal. Across the U.S., states and regional transmission organizations (RTOs) are adapting to data center–driven load growth in markedly different ways, reflecting local politics, resource mixes, and grid constraints.

- Virginia (PJM): Data centers now account for approximately 25% of statewide electricity consumption, according to Dominion’s filings and state reports. Dominion’s Integrated Resource Plan (IRP) and new large-load rate class proposals formally recognize data centers as the primary driver of load growth and cost allocation debates. Transmission capacity remains the chokepoint, making advanced conductor pilots and PJM’s RTEP expansion windows key to sustaining growth.
- Texas (ERCOT): Senate Bill 6 (2025) effectively resets the social contract for large loads, requiring operators to flex or face emergency curtailment, and is expected to push wider adoption of on-site generation, energy storage, and hybrid PPAs. Other utilities and cooperatives across the state are expected to mirror ERCOT’s flexibility protocols in their own tariffs.
- Arizona (SRP / APS): Rapid regional growth is driving a buildout of gas peaker plants paired with battery storage, alongside stricter queue management and prioritization. Salt River Project’s Coolidge Expansion exemplifies a reliability-first strategy, with clean-energy components layered in to support long-term decarbonization goals.
- Midwest (MISO / Indiana): The Indiana Utility Regulatory Commission (IURC) has approved new large-load interconnection rules, while utilities such as Indiana Michigan Power (I&M) are signing demand-response agreements, including with Google, to mitigate peak load. Policymakers are advancing cost-sharing requirements for new generation supporting big-load customers.
- SPP (Plains states): The Southwest Power Pool (SPP) is actively marketing the region to High Impact Large Load (HILL) customers through accelerated interconnection studies and firm/curtailable service classes, aiming to attract data center development away from saturated hubs like Virginia and northern Texas.

Regional differences in regulation and resource strategy are now shaping the geography of new data center investment, rewarding jurisdictions that can align policy agility, grid capacity, and clean-power availability.

What This Means for Data Center Developers

Over the next several years, the structure of power procurement deals for data centers is likely to evolve significantly. Utilities will increasingly require greater up-front financial participation from customers, shifting capital and risk onto large-load developers. Expect to see:

- Contribution-in-aid-of-construction (CIAC) payments or similar up-front contributions to fund new substations, network upgrades, and capacity expansions.
- Minimum-take or take-or-pay obligations to guarantee long-term revenue recovery for utilities.
- Extended contract terms (typically 10 to 15 years) to secure capacity and protect other ratepayers from stranded-cost exposure.

Flexibility will become a baseline requirement. Interconnection agreements may now mandate curtailment capabilities, demand-response participation, and certified backup generation. In ERCOT, for example, new large loads ≥75 MW must be equipped for remote disconnection or on-site generation under Senate Bill 6 (2025).

Transmission-friendly regions will rise in priority for new site development. Locations within PJM subregions or along SPP’s HILL corridor, where reconductoring and RTEP upgrades can unlock capacity quickly, will gain competitive advantage as interconnection bottlenecks persist elsewhere.

Data center developers will also need to maintain ongoing visibility into utility and regulatory planning cycles, tracking updates from individual utilities, regional transmission organizations (RTOs), and federal agencies such as FERC. Understanding how generation mix, transmission planning, and tariff reform interact will be essential to long-term siting and investment strategy.

In short, the path to power is becoming more capital-intensive, flexible, and policy-dependent, requiring data center developers to act as active partners in the evolving utility ecosystem.