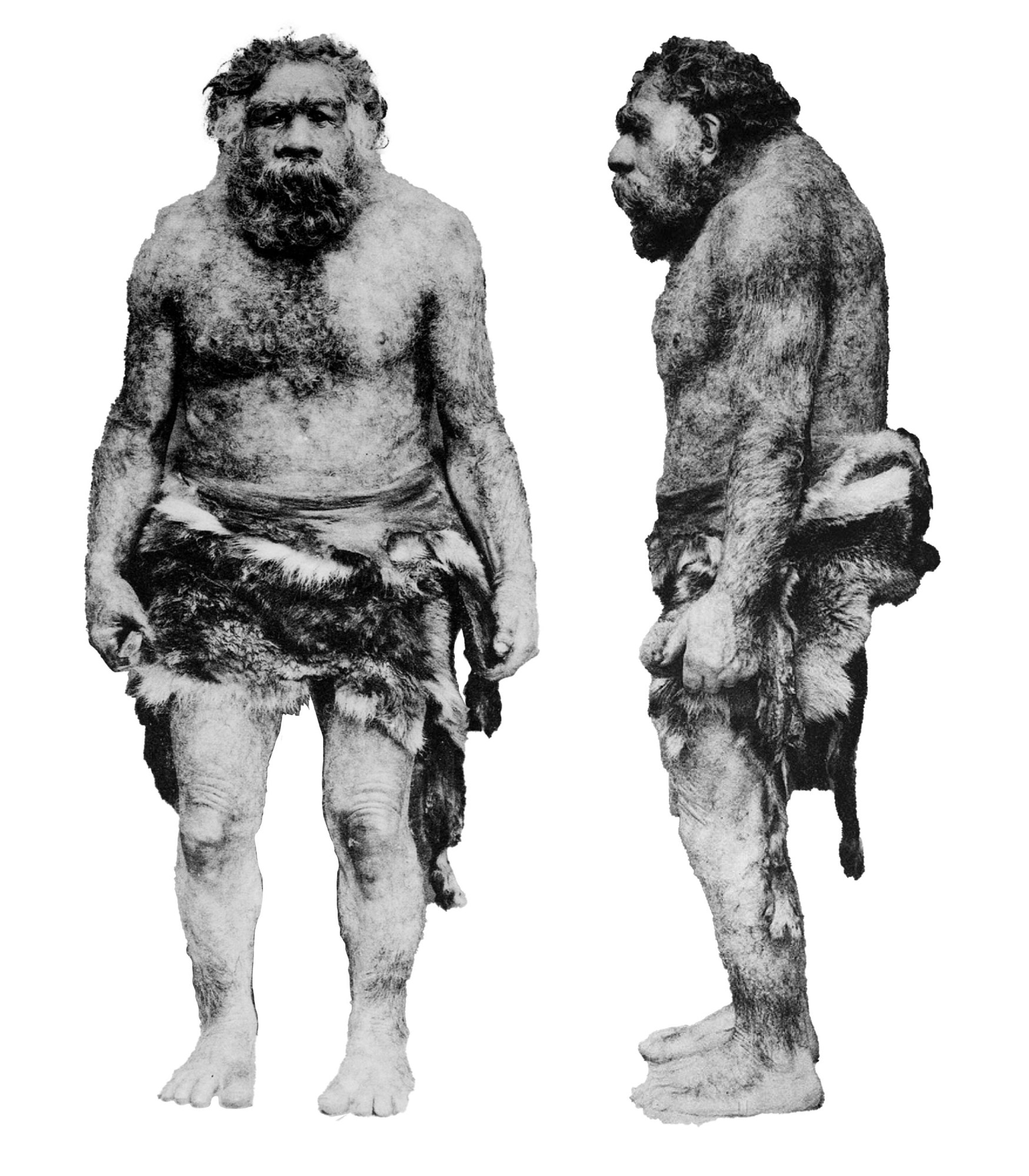

You’ve probably heard some version of this idea before: that many of us have an “inner Neanderthal.” That is to say, around 45,000 years ago, when Homo sapiens first arrived in Europe, they met members of a cousin species—the broad-browed, heavier-set Neanderthals—and, well, one thing led to another, which is why some people now carry a small amount of Neanderthal DNA.

This DNA is arguably the 21st century’s most celebrated discovery in human evolution. It has been connected to all kinds of traits and health conditions, and it helped win the Swedish geneticist Svante Pääbo a Nobel Prize.

But in 2024, a pair of French population geneticists called into question the foundation of the popular and pervasive theory.

Lounès Chikhi and Rémi Tournebize, then colleagues at the Université de Toulouse, proposed an alternative explanation for the very same genomic patterns. The problem, they said, was that the original evidence for the inner Neanderthal was based on a statistical assumption: that humans, Neanderthals, and their ancestors all mated randomly in huge, continent-size populations. That meant a person in South Africa was just as likely to reproduce with a person in West Africa or East Africa as with someone from their own community.

Archaeological, genetic, and fossil evidence all shows, though, that Homo sapiens evolved in Africa in smaller groups, cut off from one another by deserts, mountains, and cultural divides. People sometimes crossed those barriers, but more often they partnered up within them.

In the terminology of the field, this dynamic is called population structure. Because of structure, genes do not spread evenly through a population but can concentrate in some places and be totally absent from others. The human gene pool is not so much an Olympic-size swimming pool as a complex network of tidal pools whose connectivity ebbs and flows over time.

This dynamic greatly complicates the math at the heart of evolutionary biology, which long relied on assumptions like randomly mating populations to extract general principles from limited data. If you take structure into account, Chikhi told me recently, then there are other ways to explain the DNA that some living people share with Neanderthals—ways that don’t require any interspecies sex at all.

“I believe most species are spatially organized and structured in different, complex ways,” says Chikhi, who has researched population structure for more than two decades and has also studied lemurs, orangutans, and island birds. “It’s a general failure of our field that we do not compare our results in a clear way with alternative scenarios.” (Pääbo did not respond to multiple requests for comment.)

The inner Neanderthal became a story we could tell ourselves about our flaws and genetic destiny: Don’t blame me; blame the prognathic caveman hiding in my cells.

Chikhi and Tournebize’s argument is about population structure, yes, but at heart, it is actually one about methods—how modern evolutionary science deploys computer models and statistical techniques to make sense of mountains upon mountains of genetic data.

They’re not the only scientists who are worried. “People think we really understand how genomes evolve and can write sophisticated algorithms for saying what happened,” says William Amos, a University of Cambridge population geneticist who has been critical of the “inner Neanderthal” theory. But, he adds, those models are “based on simple assumptions that are often wrong.”

And if they’re wrong, what’s at stake is far more than a single evolutionary mystery.

A captivating story of interspecies passion

Back in 2010, Pääbo’s lab pulled off something of a miracle. The researchers were able to extract DNA from nuclei in the cells of 40,000-year-old Neanderthal bones. DNA breaks down quickly after death, but the group got enough of it from three different individuals to produce a draft sequence of the entire Neanderthal genome, with 4 billion base pairs.

As part of their study, they performed a statistical test comparing their Neanderthal genome with the genomes of five present-day people from different parts of the world. That’s how they discovered that modern humans of non-African ancestry had a small amount of DNA in common with Neanderthals, a species that diverged from the Homo sapiens line more than 400,000 years ago, that they did not share with either modern humans of African ancestry or our closest living relative, the chimpanzee.

Pääbo’s team interpreted this as evidence of sexual reproduction between ancient Homo sapiens and the Neanderthals they encountered after they expanded out of Africa. “Neanderthals are not totally extinct,” Pääbo said to the BBC in 2010. “In some of us, they live on a little bit.”

The discovery was monumental on its own—but even more so because it reversed a previous consensus. More than a decade earlier, in 1997, Pääbo had sequenced a much smaller amount of Neanderthal DNA, in that case from a cell structure called a mitochondrion. It was different enough from Homo sapiens mitochondrial DNA for his team to cautiously conclude there had been “little or no interbreeding” between the two species.

After 2010, though, the idea of hybridization, also called admixture, effectively became canon. Top journals like Science and Nature published study after study on the inner Neanderthal. Some scientists have argued that Homo sapiens would never have adapted to colder habitats in Europe and Asia without an infusion of Neanderthal DNA. Other research teams used Pääbo’s techniques to find genetic traces of interbreeding with an extinct group of hominins in Asia, called the Denisovans, and a mysterious “ghost lineage” in Africa. Biologists used similar tests to find evidence of interbreeding between chimpanzees and bonobos, polar and brown bears, and all kinds of other animals.

The inner-Neanderthal hypothesis also took a turn for the personal. Various studies linked Neanderthal DNA to a head-spinning range of conditions: alcoholism, asthma, autism, ADHD, depression, diabetes, heart disease, skin cancer, and severe covid-19. Some researchers suggested that Neanderthal DNA had an impact on hair and skin color, while others assigned individuals a “NeanderScore” that was correlated with skull shape and prevalence of schizophrenia markers. Commercial genetic testing companies like 23andMe started offering customers Neanderthal ancestry reports.

The inner Neanderthal became a story we could tell ourselves about our flaws and genetic destiny: Don’t blame me; blame the prognathic caveman hiding in my cells. Or as Latif Nasser, a host of the popular-science program Radiolab, put it when he was hospitalized with Crohn’s disease, another Neanderthal-associated condition: “I just keep imagining these tiny Neanderthals … just, like, stabbing me and drawing these little droplets of blood out of me.”

“These things become meaningful to people,” Chikhi says. “What we say will be important to how people view themselves.”

The pitfalls of simplistic solutions

When population geneticists built the theoretical framework for evolutionary biology in the early 20th century, genes were only abstract units of heredity inferred from experiments with peas and fruit flies. Population genetics developed theory far more quickly than it accumulated data. As a result, many data-driven scientists dismissed the study of evolution as a form of storytelling based on unexamined assumptions and preconceived ideas.

By the ’90s, though, genes were no longer abstractions but sequenced segments of DNA. Genomic sequencing grounded evolutionary studies in the kind of hard data that a chemist or physicist could respect.

Yet biologists could not simply read evolutionary history from genomes as though they were books. They were trying to determine which of a nearly infinite number of plausible histories was the most likely to have created the patterns they observed in a small sample of genomes. For that, they needed simplified, algorithmic models of evolution. The study of evolution shifted from storytelling to statistics, and from biology to computer science.

That suited Chikhi, who as a child was drawn to the predictable laws and numerical precision of math and science. He entered the field in the mid-’90s just as the first big studies of human DNA were settling old debates about human origins. DNA showed that Africa harbored far more genetic diversity than the entire rest of the planet. The new evidence supported the idea that modern humans evolved for hundreds of thousands of years in Africa and expanded to the other continents only in the last 100,000 years. For Chikhi, whose parents were Algerian immigrants, this discovery was a powerful challenge to the way some archaeologists and biologists talked about race. DNA could be used to deconstruct rather than encourage the pernicious idea that human races had deep-seated evolutionary differences based on their places of origin.

At the same time, though, he was wary of the tendency to treat DNA as the final verdict on open questions in evolution. Chikhi had been surprised when, back in 1997, Pääbo and his team used that small amount of mitochondrial DNA to rule out hybridization between Homo sapiens and Neanderthals. He didn’t think that the absence of Neanderthal DNA there necessarily meant it wouldn’t be found elsewhere in the Homo sapiens genome.

Chikhi’s own research in the aughts opened his eyes to the gaps between historical reality and models of evolution. For one, despite the assumption of random mating, none of the animals Chikhi studied actually mated randomly. Orangutans lived in highly fragmented habitats, which restricted their pool of potential mates, and female birds were often extremely picky about their male partners.

These factors could confound an evolutionary biologist’s traditional statistical tool kit. Scientists were starting to apply a mathematical technique to estimate historical population sizes for a species from the genome of just a single individual. This method showed sharp population declines in the histories of many different species. Chikhi realized, though, that the apparent declines could be an artifact of treating a structured population as one that evolved with random mating; in that case, the technique could indicate a bottleneck even if all the subgroups were actually growing in size. “This is completely counterintuitive,” he says.

That’s at least partly why, when Pääbo’s 2010 Neanderthal genome came out, Chikhi was impressed with the sheer technical accomplishment but also leery of the findings about hybridization. “It was the type of thing we conclude too quickly based on genetic data,” he says. Pääbo’s work mentioned population structure as a possible alternative explanation—but didn’t follow up.

Just a couple of years later, a pair of independent scientists named Anders Eriksson and Andrea Manica picked up the idea, building a model with simple population structure that explicitly excluded admixture. They simulated human evolution starting from 500,000 years ago and found that their model produced the same genomic patterns Pääbo’s group had interpreted as evidence of hybridization.

“Working with structured models is really out of the comfort zone of a lot of population geneticists,” says Eriksson, now a professor at the University of Tartu in Estonia.

Their research impressed Chikhi. “At the time, I thought people would focus on population structure in the evolution of humans,” he says. Instead, he watched as the inner-Neanderthal hypothesis took on a life of its own. Scientists produced new methods to quantify hybridization but rarely examined whether population structure would yield the same results. To Chikhi, this wasn’t science; it was storytelling, like some of the old narratives about the evolution of racial differences.

Chikhi and Tournebize decided to take a crack at the problem themselves. “I’ve always been very skeptical about science, and population genetics in particular,” says Tournebize, now a researcher at the French National Research Institute for Sustainable Development. “We make a lot of assumptions, and the models we use are very simplistic.” As detailed in a 2024 paper published in Nature Ecology & Evolution, they built a model of human evolution that replaced randomly mating continent-wide populations with many smaller populations linked by occasional migration. Then they let it run—a million times.

At the end of the simulation, they kept the 20 scenarios that produced genomes most similar to the ones in a sample of actual Homo sapiens and Neanderthals. Many of these scenarios produced long segments of DNA like the ones their peers argued could only have been inherited from Neanderthals. They showed that several statistics, which other scientists had proposed as measurements of Neanderthal DNA, couldn’t actually distinguish between hybridization and population structure. What’s more, they showed that many of the models that supported hybridization failed to accurately predict other known features of human evolution.

“A model will say there was admixture but then predict diversity that is totally incompatible with what we actually know of human diversity,” Chikhi says. “Nobody seems to care.”

So how did Neanderthal DNA wind up in living people if not via interspecies passion? Chikhi and Tournebize think it’s more likely that it was inherited by both Neanderthals and some sapiens groups in Africa from a common ancestor living at least half a million years ago. If the sapiens groups carrying those genetic variants included the people who migrated out of Africa, then the two human species would have already had the DNA in common when they came into contact in Europe and Asia—no sex required.

“The interpretation of genetic data is not straightforward,” Chikhi says. “We always have to make assumptions. Nobody takes data and magically comes up with a solution.”

Embracing the uncertainty

Most of the half-dozen population geneticists I spoke with praised Chikhi and Tournebize’s ingenuity and appreciated the spirit of their critique. “Their paper forces us to think more critically about the model we use for inference and consider alternatives,” says Aaron Ragsdale, a population geneticist at the University of Wisconsin–Madison. His own work likewise suggests that the earliest Homo sapiens populations in Africa were probably structured—and that this is the likely reason for genomic patterns that other research groups had attributed to hybridization with a mysterious “ghost lineage” of hominins in Africa.

Yet most researchers still believe that modern humans and Neanderthals did probably have children with each other tens of thousands of years ago. Several pointed to the fact that fossil DNA of Homo sapiens who died thousands of years ago had longer chunks of apparent Neanderthal DNA than living people, which is exactly what you would expect if they had a more recent Neanderthal ancestor. (To address this possibility, Chikhi and Tournebize included DNA from 10 ancient humans in their study and found that most of them fit the structured model.) And while the Harvard population geneticist David Reich, who helped design the statistical test from Pääbo’s 2010 study, declined an interview, he did say he thought Chikhi and Tournebize’s model was “weak” and “very contrived,” adding that “there are multiple lines of evidence for Neanderthal admixture into modern humans that make the evidence for this overwhelming.” (Two other authors of that study, Richard Green and Nick Patterson, did not respond to requests for comment.)

Nevertheless, most scientists these days welcome the development of structured, or “spatially explicit,” models that account for the fact that any given member of a population is usually more closely related to individuals living nearby than to those living far away.

Loosening our attachment to certain narratives of evolution can create space for wonder at the sheer complexity of life’s history.

Other scientists also say that random mating isn’t the only assumption in population genetics that merits scrutiny. Models rarely factor in natural selection, which can also create genetic patterns that look like hybridization. Another common assumption is that everyone’s DNA mutates at the same, constant rate. “All the theory says the mutation rate is fixed,” says Amos, the Cambridge population geneticist. But he thinks that rate would have slowed drastically in the group of Homo sapiens that expanded to Europe around 45,000 years ago. This, too, could have created genomic patterns that other scientists interpret as evidence of interbreeding with Neanderthals.

The point here isn’t that a complex model of evolution with many moving pieces is necessarily better than a simple one. Scientists need to reduce complexity in order to see the underlying processes more clearly. But simple models require assumptions, and scientists need to reevaluate those assumptions in light of what they learn. “As you get more data, you can justify more complex models of the world,” says Mark Thomas, a population geneticist at University College London, who wrote a history of random mating in population genetics that highlighted how the field was starting to see it as “a limiting assumption as opposed to a simplifying one.”

It can feel discouraging to couch conversations about the past in confusing terms like “population structure” and “mutation rates.” It seems almost antithetical to the spirit of science to talk more about uncertainty at the same time we are developing powerful technologies and enormous data sets for analyzing evolution. These tools often yield novel answers, but they can also limit the questions we ask. The French archaeologist Ludovic Slimak, for example, has complained that the idea of the inner Neanderthal has domesticated our image of Neanderthals and made it difficult to imagine their humanity as distinct from our own. Investigating Neanderthal DNA is sexier to many young researchers than searching for archaeological and fossil evidence of how Neanderthals actually lived.

Loosening our attachment to certain narratives of evolution can create space for wonder at the sheer complexity of life’s history. Ultimately, that’s what Chikhi and Tournebize hope to do. After all, they don’t believe the question of population structure versus hybridization is either-or. It’s possible, and even likely, that both played a role in human evolution. “Our structured model does not necessarily mean that no admixture ever took place,” Chikhi and Tournebize wrote in their study. “What our results suggest is that, if admixture ever occurred, it is currently hard to identify using existing methods.”

Future methods might disentangle the different factors, but it’s just as important, Chikhi says, for scientists to be up-front about their assumptions and test alternatives. “There’s still so much uncertainty on so many aspects of the demographic history of Neanderthals and Homo sapiens,” he notes.

Keep that in mind the next time you read about your inner Neanderthal. The association between this DNA and some diseases may be real, of course—but would journals publish these studies without the additional claim that the DNA is from Neanderthals? Any good storyteller knows that sex sells, even in science.

Ben Crair is a science and travel writer based in Berlin.