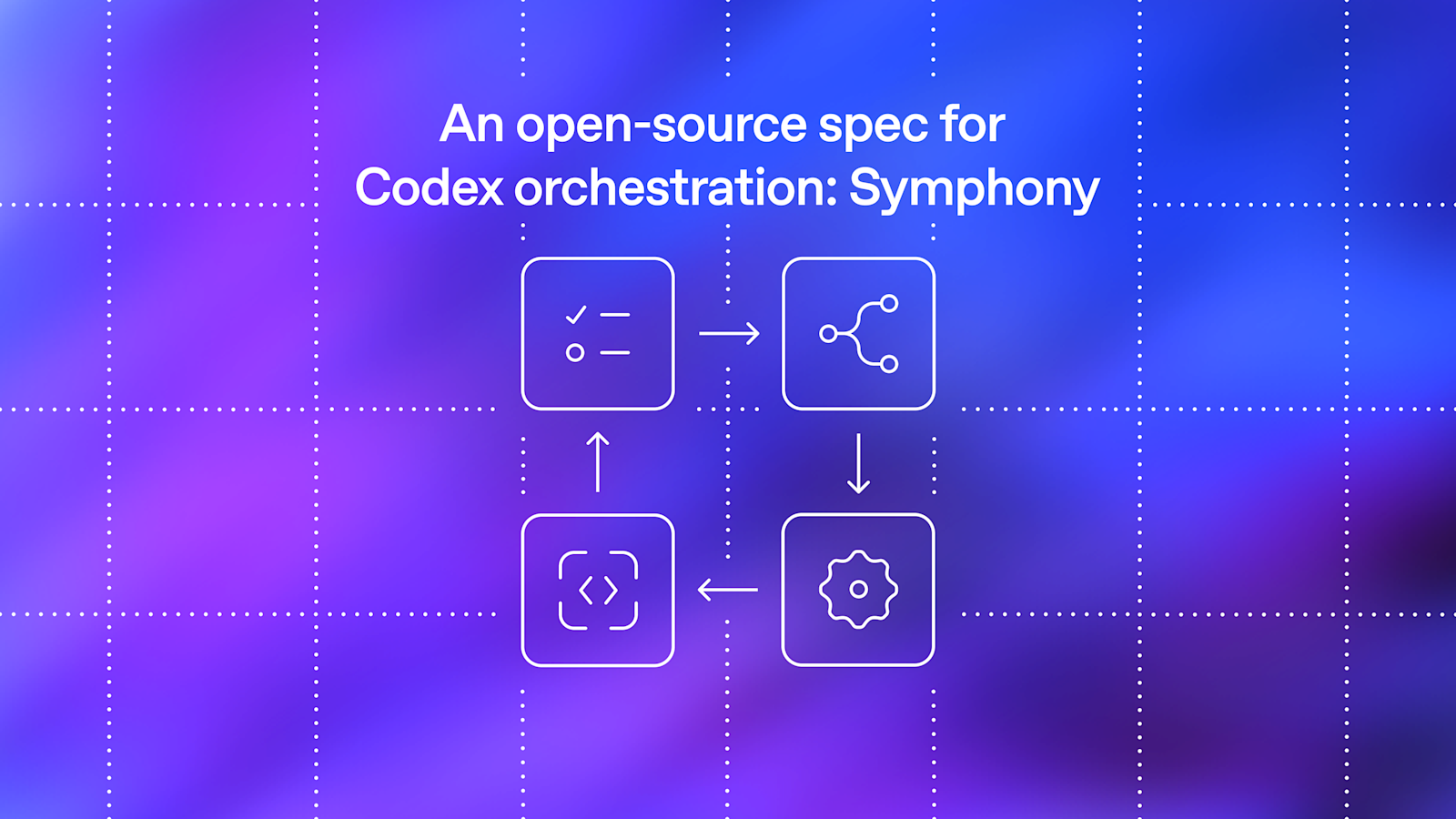

# Symphony Service Specification

Status: Draft v1 (language-agnostic)

Purpose: Define a service that orchestrates coding agents to get project work done.

## 1. Problem Statement

Symphony is a long-running automation service that continuously reads work from an issue tracker
(Linear in this specification version), creates an isolated workspace for each issue, and runs a
coding agent session for that issue inside the workspace.

The service solves four operational problems:

– It turns issue execution into a repeatable daemon workflow instead of manual scripts.

– It isolates agent execution so that agent commands run only inside per-issue workspace
directories.

– It keeps the workflow policy in-repo (`WORKFLOW.md`) so teams version the agent prompt and runtime
settings with their code.

– It provides enough observability to operate and debug multiple concurrent agent runs.

Implementations are expected to document their trust and safety posture explicitly. This
specification does not require a single approval, sandbox, or operator-confirmation policy; some
implementations may target trusted environments with a high-trust configuration, while others may
require stricter approvals or sandboxing.

Important boundary:

– Symphony is a scheduler/runner and tracker reader.

– Ticket writes (state transitions, comments, PR links) are typically performed by the coding agent
using tools available in the workflow/runtime environment.

– A successful run may end at a workflow-defined handoff state (for example `Human Review`), not
necessarily `Done`.

## 2. Goals and Non-Goals

### 2.1 Goals

– Poll the issue tracker on a fixed cadence and dispatch work with bounded concurrency.

– Maintain a single authoritative orchestrator state for dispatch, retries, and reconciliation.

– Create deterministic per-issue workspaces and preserve them across runs.

– Stop active runs when issue state changes make them ineligible.

– Recover from transient failures with exponential backoff.

– Load runtime behavior from a repository-owned `WORKFLOW.md` contract.

– Expose operator-visible observability (at minimum structured logs).

– Support restart recovery without requiring a persistent database.

### 2.2 Non-Goals

– Rich web UI or multi-tenant control plane.

– Prescribing a specific dashboard or terminal UI implementation.

– General-purpose workflow engine or distributed job scheduler.

– Built-in business logic for how to edit tickets, PRs, or comments. (That logic lives in the
workflow prompt and agent tooling.)

– Mandating strong sandbox controls beyond what the coding agent and host OS provide.

– Mandating a single default approval, sandbox, or operator-confirmation posture for all
implementations.

## 3. System Overview

### 3.1 Main Components

1. `Workflow Loader`

– Reads `WORKFLOW.md`.

– Parses YAML front matter and prompt body.

– Returns `{config, prompt_template}`.

2. `Config Layer`

– Exposes typed getters for workflow config values.

– Applies defaults and environment variable indirection.

– Performs validation used by the orchestrator before dispatch.

3. `Issue Tracker Client`

– Fetches candidate issues in active states.

– Fetches current states for specific issue IDs (reconciliation).

– Fetches terminal-state issues during startup cleanup.

– Normalizes tracker payloads into a stable issue model.

4. `Orchestrator`

– Owns the poll tick.

– Owns the in-memory runtime state.

– Decides which issues to dispatch, retry, stop, or release.

– Tracks session metrics and retry queue state.

5. `Workspace Manager`

– Maps issue identifiers to workspace paths.

– Ensures per-issue workspace directories exist.

– Runs workspace lifecycle hooks.

– Cleans workspaces for terminal issues.

6. `Agent Runner`

– Creates workspace.

– Builds prompt from issue + workflow template.

– Launches the coding agent app-server client.

– Streams agent updates back to the orchestrator.

7. `Status Surface` (optional)

– Presents human-readable runtime status (for example terminal output, dashboard, or other
operator-facing view).

8. `Logging`

– Emits structured runtime logs to one or more configured sinks.

### 3.2 Abstraction Levels

Symphony is easiest to port when kept in these layers:

1. `Policy Layer` (repo-defined)

– `WORKFLOW.md` prompt body.

– Team-specific rules for ticket handling, validation, and handoff.

2. `Configuration Layer` (typed getters)

– Parses front matter into typed runtime settings.

– Handles defaults, environment tokens, and path normalization.

3. `Coordination Layer` (orchestrator)

– Polling loop, issue eligibility, concurrency, retries, reconciliation.

4. `Execution Layer` (workspace + agent subprocess)

– Filesystem lifecycle, workspace preparation, coding-agent protocol.

5. `Integration Layer` (Linear adapter)

– API calls and normalization for tracker data.

6. `Observability Layer` (logs + optional status surface)

– Operator visibility into orchestrator and agent behavior.

### 3.3 External Dependencies

– Issue tracker API (Linear for `tracker.kind: linear` in this specification version).

– Local filesystem for workspaces and logs.

– Optional workspace population tooling (for example Git CLI, if used).

– Coding-agent executable that supports JSON-RPC-like app-server mode over stdio.

– Host environment authentication for the issue tracker and coding agent.

## 4. Core Domain Model

### 4.1 Entities

#### 4.1.1 Issue

Normalized issue record used by orchestration, prompt rendering, and observability output.

Fields:

– `id` (string)

– Stable tracker-internal ID.

– `identifier` (string)

– Human-readable ticket key (example: `ABC-123`).

– `title` (string)

– `description` (string or null)

– `priority` (integer or null)

– Lower numbers are higher priority in dispatch sorting.

– `state` (string)

– Current tracker state name.

– `branch_name` (string or null)

– Tracker-provided branch metadata if available.

– `url` (string or null)

– `labels` (list of strings)

– Normalized to lowercase.

– `blocked_by` (list of blocker refs)

– Each blocker ref contains:

– `id` (string or null)

– `identifier` (string or null)

– `state` (string or null)

– `created_at` (timestamp or null)

– `updated_at` (timestamp or null)

#### 4.1.2 Workflow Definition

Parsed `WORKFLOW.md` payload:

– `config` (map)

– YAML front matter root object.

– `prompt_template` (string)

– Markdown body after front matter, trimmed.

#### 4.1.3 Service Config (Typed View)

Typed runtime values derived from `WorkflowDefinition.config` plus environment resolution.

Examples:

– poll interval

– workspace root

– active and terminal issue states

– concurrency limits

– coding-agent executable/args/timeouts

– workspace hooks

#### 4.1.4 Workspace

Filesystem workspace assigned to one issue identifier.

Fields (logical):

– `path` (workspace path; current runtime typically uses absolute paths, but relative roots are
possible if configured without path separators)

– `workspace_key` (sanitized issue identifier)

– `created_now` (boolean, used to gate `after_create` hook)

#### 4.1.5 Run Attempt

One execution attempt for one issue.

Fields (logical):

– `issue_id`

– `issue_identifier`

– `attempt` (integer or null, `null` for first run, `>=1` for retries/continuation)

– `workspace_path`

– `started_at`

– `status`

– `error` (optional)

#### 4.1.6 Live Session (Agent Session Metadata)

State tracked while a coding-agent subprocess is running.

Fields:

– `session_id` (string, `<thread_id>-<turn_id>`)

– `thread_id` (string)

– `turn_id` (string)

– `codex_app_server_pid` (string or null)

– `last_codex_event` (string/enum or null)

– `last_codex_timestamp` (timestamp or null)

– `last_codex_message` (summarized payload)

– `codex_input_tokens` (integer)

– `codex_output_tokens` (integer)

– `codex_total_tokens` (integer)

– `last_reported_input_tokens` (integer)

– `last_reported_output_tokens` (integer)

– `last_reported_total_tokens` (integer)

– `turn_count` (integer)

– Number of coding-agent turns started within the current worker lifetime.

#### 4.1.7 Retry Entry

Scheduled retry state for an issue.

Fields:

– `issue_id`

– `identifier` (best-effort human ID for status surfaces/logs)

– `attempt` (integer, 1-based for retry queue)

– `due_at_ms` (monotonic clock timestamp)

– `timer_handle` (runtime-specific timer reference)

– `error` (string or null)

#### 4.1.8 Orchestrator Runtime State

Single authoritative in-memory state owned by the orchestrator.

Fields:

– `poll_interval_ms` (current effective poll interval)

– `max_concurrent_agents` (current effective global concurrency limit)

– `running` (map `issue_id -> running entry`)

– `claimed` (set of issue IDs reserved/running/retrying)

– `retry_attempts` (map `issue_id -> RetryEntry`)

– `completed` (set of issue IDs; bookkeeping only, not dispatch gating)

– `codex_totals` (aggregate tokens + runtime seconds)

– `codex_rate_limits` (latest rate-limit snapshot from agent events)

### 4.2 Stable Identifiers and Normalization Rules

– `Issue ID`

– Use for tracker lookups and internal map keys.

– `Issue Identifier`

– Use for human-readable logs and workspace naming.

– `Workspace Key`

– Derive from `issue.identifier` by replacing any character not in `[A-Za-z0-9._-]` with `_`.

– Use the sanitized value for the workspace directory name.

– `Normalized Issue State`

– Compare states after `lowercase`.

– `Session ID`

– Compose from coding-agent `thread_id` and `turn_id` as `<thread_id>-<turn_id>`.
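The normalization rules above can be sketched in a few lines. This is a minimal illustration, not a required implementation; the function names `workspace_key`, `normalize_state`, and `session_id` are hypothetical helpers introduced here.

```python
import re

# Any character outside the allowed set [A-Za-z0-9._-] is replaced with "_".
_UNSAFE_CHARS = re.compile(r"[^A-Za-z0-9._-]")


def workspace_key(identifier: str) -> str:
    """Derive the workspace directory name from an issue identifier."""
    return _UNSAFE_CHARS.sub("_", identifier)


def normalize_state(state: str) -> str:
    """Normalize a tracker state name so comparisons ignore case."""
    return state.lower()


def session_id(thread_id: str, turn_id: str) -> str:
    """Compose a session ID from the thread and turn IDs, joined by a hyphen."""
    return f"{thread_id}-{turn_id}"
```

For example, `workspace_key("ABC-123")` is unchanged, while an identifier containing a slash or a space has those characters replaced by underscores.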

## 5. Workflow Specification (Repository Contract)

### 5.1 File Discovery and Path Resolution

Workflow file path precedence:

1. Explicit application/runtime setting (set by CLI startup path).

2. Default: `WORKFLOW.md` in the current process working directory.

Loader behavior:

– If the file cannot be read, return `missing_workflow_file` error.

– The workflow file is expected to be repository-owned and version-controlled.

### 5.2 File Format

`WORKFLOW.md` is a Markdown file with optional YAML front matter.

Design note:

– `WORKFLOW.md` should be self-contained enough to describe and run different workflows (prompt,
runtime settings, hooks, and tracker selection/config) without requiring out-of-band
service-specific configuration.

Parsing rules:

– If the file starts with `---`, parse lines until the next `---` as YAML front matter.

– Remaining lines become the prompt body.

– If front matter is absent, treat the entire file as prompt body and use an empty config map.

– YAML front matter must decode to a map/object; non-map YAML is an error.

– Prompt body is trimmed before use.

Returned workflow object:

– `config`: front matter root object (not nested under a `config` key).

– `prompt_template`: trimmed Markdown body.
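The splitting step of these parsing rules might look like the sketch below. It assumes the front matter fence is a line consisting of three hyphens (the usual YAML front matter convention) and leaves YAML decoding to whatever YAML library the implementation uses. The name `split_workflow` is hypothetical, and treating an unterminated fence as a parse error is an assumption of this sketch rather than something the rules above state.

```python
from typing import Optional, Tuple

FENCE = "---"  # assumed front matter delimiter line


def split_workflow(text: str) -> Tuple[Optional[str], str]:
    """Split WORKFLOW.md text into (front_matter_text, prompt_body).

    front_matter_text is None when the file has no front matter.
    The prompt body is trimmed before it is returned.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != FENCE:
        # No front matter: the whole file is the prompt body.
        return None, text.strip()
    for index in range(1, len(lines)):
        if lines[index].strip() == FENCE:
            front_matter = "\n".join(lines[1:index])
            body = "\n".join(lines[index + 1:]).strip()
            return front_matter, body
    # Assumption: an opening fence without a closing fence is a parse error.
    raise ValueError("workflow_parse_error: unterminated front matter")
```

The caller would then decode `front_matter` as YAML, reject any non-map result, and use an empty config map when `front_matter` is `None`.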

### 5.3 Front Matter Schema

Top-level keys:

– `tracker`

– `polling`

– `workspace`

– `hooks`

– `agent`

– `codex`

Unknown keys should be ignored for forward compatibility.

– The workflow front matter is extensible. Optional extensions may define additional top-level keys
(for example `server`) without changing the core schema above.

– Extensions should document their field schema, defaults, validation rules, and whether changes
apply dynamically or require restart.

– Common extension: `server.port` (integer) enables the optional HTTP server described in Section

#### 5.3.1 `tracker` (object)

Fields:

– `kind` (string)

– Required for dispatch.

– Current supported value: `linear`

– `endpoint` (string)

– Default for `tracker.kind == "linear"`: `https://api.linear.app/graphql`

– `api_key` (string)

– May be a literal token or `$VAR_NAME`.

– Canonical environment variable for `tracker.kind == "linear"`: `LINEAR_API_KEY`.

– If `$VAR_NAME` resolves to an empty string, treat the key as missing.

– `project_slug` (string)

– Required for dispatch when `tracker.kind == "linear"`.

– `active_states` (list of strings)

– Default: `Todo`, `In Progress`

– `terminal_states` (list of strings)

– Default: `Closed`, `Cancelled`, `Canceled`, `Duplicate`, `Done`
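The `api_key` resolution rule can be illustrated as follows. This is a sketch under stated assumptions: `resolve_secret` is a hypothetical helper, and the pattern used to recognize `$VAR_NAME` (a dollar sign followed by a conventional shell variable name, spanning the whole value) is an assumption about what counts as an indirection token.

```python
import os
import re
from typing import Mapping, Optional

# Assumed token shape: the entire value is "$" followed by a variable name.
_ENV_TOKEN = re.compile(r"^\$([A-Za-z_][A-Za-z0-9_]*)$")


def resolve_secret(value: Optional[str],
                   env: Mapping[str, str] = os.environ) -> Optional[str]:
    """Return the effective secret, or None when it should count as missing."""
    if value is None:
        return None
    match = _ENV_TOKEN.match(value)
    if match:
        # Indirection: look the variable up; unset behaves like empty.
        value = env.get(match.group(1), "")
    # An empty string is treated as a missing key.
    return value or None
```

Passing the environment as a parameter keeps the function easy to test, for example `resolve_secret("$LINEAR_API_KEY", {"LINEAR_API_KEY": ""})` yields `None`.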

#### 5.3.2 `polling` (object)

Fields:

– `interval_ms` (integer or string integer)

– Default: `30000`

– Changes should be re-applied at runtime and affect future tick scheduling without restart.

#### 5.3.3 `workspace` (object)

Fields:

– `root` (path string or `$VAR`)

– Default: `/symphony_workspaces`

– `~` and strings containing path separators are expanded.

– Bare strings without path separators are preserved as-is (relative roots are allowed but
discouraged).
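One possible reading of the `workspace.root` rules is sketched below. The spec says path-like values are "expanded" without defining the result precisely, so this sketch assumes expansion means home-directory expansion followed by conversion to an absolute path. `resolve_workspace_root` is a hypothetical name, and the `$VAR` token shape is an assumption.

```python
import os
import re
from typing import Mapping

_ENV_TOKEN = re.compile(r"^\$([A-Za-z_][A-Za-z0-9_]*)$")  # assumed token shape


def resolve_workspace_root(value: str,
                           env: Mapping[str, str] = os.environ) -> str:
    """Resolve a configured workspace root to the path the runtime will use."""
    match = _ENV_TOKEN.match(value)
    if match:
        # Environment indirection for env-backed path values.
        value = env.get(match.group(1), "")
    looks_like_path = value.startswith("~") or "/" in value or os.sep in value
    if looks_like_path:
        # Assumption: "expanded" means ~ expansion plus an absolute path.
        return os.path.abspath(os.path.expanduser(value))
    # Bare names without separators are preserved as-is.
    return value
```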

#### 5.3.4 `hooks` (object)

Fields:

– `after_create` (multiline shell script string, optional)

– Runs only when a workspace directory is newly created.

– Failure aborts workspace creation.

– `before_run` (multiline shell script string, optional)

– Runs before each agent attempt after workspace preparation and before launching the coding
agent.

– Failure aborts the current attempt.

– `after_run` (multiline shell script string, optional)

– Runs after each agent attempt (success, failure, timeout, or cancellation) once the workspace
exists.

– Failure is logged but ignored.

– `before_remove` (multiline shell script string, optional)

– Runs before workspace deletion if the directory exists.

– Failure is logged but ignored; cleanup still proceeds.

– `timeout_ms` (integer, optional)

– Default: `60000`

– Applies to all workspace hooks.

– Non-positive values should be treated as invalid and fall back to the default.

– Changes should be re-applied at runtime for future hook executions.

#### 5.3.5 `agent` (object)

Fields:

– `max_concurrent_agents` (integer or string integer)

– Default: `10`

– Changes should be re-applied at runtime and affect subsequent dispatch decisions.

– `max_retry_backoff_ms` (integer or string integer)

– Default: `300000` (5 minutes)

– Changes should be re-applied at runtime and affect future retry scheduling.

– `max_concurrent_agents_by_state` (map `state_name -> positive integer`)

– Default: empty map.

– State keys are normalized (`lowercase`) for lookup.

– Invalid entries (non-positive or non-numeric) are ignored.
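Normalizing `max_concurrent_agents_by_state` could be done roughly as shown here. The helper name `normalize_state_limits` is hypothetical. This sketch accepts only integer values; whether a string such as `"3"` counts as numeric is left to the implementation.

```python
from typing import Any, Dict, Mapping


def normalize_state_limits(raw: Mapping[str, Any]) -> Dict[str, int]:
    """Lowercase state keys and drop entries that are not positive integers."""
    limits: Dict[str, int] = {}
    for state, value in raw.items():
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, int):
            continue
        if value <= 0:
            continue
        limits[str(state).lower()] = value
    return limits
```

With input `{"In Progress": 3, "Todo": 0, "Review": "x"}` only the `in progress` entry survives.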

#### 5.3.6 `codex` (object)

Fields:

For Codex-owned config values such as `approval_policy`, `thread_sandbox`, and
`turn_sandbox_policy`, supported values are defined by the targeted Codex app-server version.
Implementors should treat them as pass-through Codex config values rather than relying on a
hand-maintained enum in this spec. To inspect the installed Codex schema, run
`codex app-server generate-json-schema --out <dir>` and inspect the relevant definitions referenced
by `v2/ThreadStartParams.json` and `v2/TurnStartParams.json`. Implementations may validate these
fields locally if they want stricter startup checks.

– `command` (string shell command)

– Default: `codex app-server`

– The runtime launches this command via `bash -lc` in the workspace directory.

– The launched process must speak a compatible app-server protocol over stdio.

– `approval_policy` (Codex `AskForApproval` value)

– Default: implementation-defined.

– `thread_sandbox` (Codex `SandboxMode` value)

– Default: implementation-defined.

– `turn_sandbox_policy` (Codex `SandboxPolicy` value)

– Default: implementation-defined.

– `turn_timeout_ms` (integer)

– Default: `3600000` (1 hour)

– `read_timeout_ms` (integer)

– Default: `5000`

– `stall_timeout_ms` (integer)

– Default: `300000` (5 minutes)

– If `<= 0`, stall detection is disabled.

### 5.4 Prompt Template Contract

The Markdown body of `WORKFLOW.md` is the per-issue prompt template.

Rendering requirements:

– Use a strict template engine (Liquid-compatible semantics are sufficient).

– Unknown variables must fail rendering.

– Unknown filters must fail rendering.

Template input variables:

– `issue` (object)

– Includes all normalized issue fields, including labels and blockers.

– `attempt` (integer or null)

– `null`/absent on first attempt.

– Integer on retry or continuation run.

Fallback prompt behavior:

– If the workflow prompt body is empty, the runtime may use a minimal default prompt
(`You are working on an issue from Linear.`).

– Workflow file read/parse failures are configuration/validation errors and should not silently fall
back to a prompt.

### 5.5 Workflow Validation and Error Surface

Error classes:

– `missing_workflow_file`

– `workflow_parse_error`

– `workflow_front_matter_not_a_map`

– `template_parse_error` (during prompt rendering)

– `template_render_error` (unknown variable/filter, invalid interpolation)

Dispatch gating behavior:

– Workflow file read/YAML errors block new dispatches until fixed.

– Template errors fail only the affected run attempt.

## 6. Configuration Specification

### 6.1 Source Precedence and Resolution Semantics

Configuration precedence:

1. Workflow file path selection (runtime setting -> cwd default).

2. YAML front matter values.

3. Environment indirection via `$VAR_NAME` inside selected YAML values.

4. Built-in defaults.

Value coercion semantics:

– Path/command fields support:

– `~` home expansion

– `$VAR` expansion for env-backed path values

– Apply expansion only to values intended to be local filesystem paths; do not rewrite URIs or
arbitrary shell command strings.

### 6.2 Dynamic Reload Semantics

Dynamic reload is required:

– The software should watch `WORKFLOW.md` for changes.

– On change, it should re-read and re-apply workflow config and prompt template without restart.

– The software should attempt to adjust live behavior to the new config (for example polling
cadence, concurrency limits, active/terminal states, codex settings, workspace paths/hooks, and
prompt content for future runs).

– Reloaded config applies to future dispatch, retry scheduling, reconciliation decisions, hook
execution, and agent launches.

– Implementations are not required to restart in-flight agent sessions automatically when config
changes.

– Extensions that manage their own listeners/resources (for example an HTTP server port change) may
require restart unless the implementation explicitly supports live rebind.

– Implementations should also re-validate/reload defensively during runtime operations (for example
before dispatch) in case filesystem watch events are missed.

– Invalid reloads should not crash the service; keep operating with the last known good effective
configuration and emit an operator-visible error.

### 6.3 Dispatch Preflight Validation

This validation is a scheduler preflight that runs before attempting to dispatch new work. It
validates the workflow/config needed to poll and launch workers; it is not a full audit of all
possible workflow behavior.

Startup validation:

– Validate configuration before starting the scheduling loop.

– If startup validation fails, fail startup and emit an operator-visible error.

Per-tick dispatch validation:

– Re-validate before each dispatch cycle.

– If validation fails, skip dispatch for that tick, keep reconciliation active, and emit an
operator-visible error.

Validation checks:

– Workflow file can be loaded and parsed.

– `tracker.kind` is present and supported.

– `tracker.api_key` is present after `$` resolution.

– `tracker.project_slug` is present when required by the selected tracker kind.

– `codex.command` is present and non-empty.
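The config-level checks above could be collected into a single preflight function, as in this sketch. It assumes the workflow file has already been loaded into a plain dictionary. The function name `preflight_errors` and the error strings are hypothetical, since the spec does not name error classes for these checks, and the sketch does not fall back to `LINEAR_API_KEY` when `tracker.api_key` is omitted.

```python
import os
from typing import Any, List, Mapping

SUPPORTED_TRACKERS = {"linear"}
DEFAULT_CODEX_COMMAND = "codex app-server"


def preflight_errors(config: Mapping[str, Any],
                     env: Mapping[str, str] = os.environ) -> List[str]:
    """Return a list of problems that should block dispatch (empty if none)."""
    errors: List[str] = []
    tracker = config.get("tracker") or {}
    codex = config.get("codex") or {}

    kind = tracker.get("kind")
    if kind not in SUPPORTED_TRACKERS:
        errors.append("unsupported_tracker_kind")  # hypothetical error name

    api_key = tracker.get("api_key") or ""
    if api_key.startswith("$"):
        # Resolve $VAR_NAME indirection; unset behaves like empty.
        api_key = env.get(api_key[1:], "")
    if not api_key:
        errors.append("missing_tracker_api_key")  # hypothetical error name

    if kind == "linear" and not tracker.get("project_slug"):
        errors.append("missing_tracker_project_slug")  # hypothetical error name

    command = codex.get("command")
    if command is None:
        command = DEFAULT_CODEX_COMMAND
    if not str(command).strip():
        errors.append("missing_codex_command")  # hypothetical error name
    return errors
```

Returning a list rather than raising on the first problem lets the operator-visible error report every failed check in one message.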

### 6.4 Config Fields Summary (Cheat Sheet)

This section is intentionally redundant so a coding agent can implement the config layer quickly.

– `tracker.kind`: string, required, currently `linear`

– `tracker.endpoint`: string, default `https://api.linear.app/graphql` when `tracker.kind=linear`

– `tracker.api_key`: string or `$VAR`, canonical env `LINEAR_API_KEY` when `tracker.kind=linear`

– `tracker.project_slug`: string, required when `tracker.kind=linear`

– `tracker.active_states`: list of strings, default `["Todo", "In Progress"]`

– `tracker.terminal_states`: list of strings, default `["Closed", "Cancelled", "Canceled", "Duplicate", "Done"]`

– `polling.interval_ms`: integer, default `30000`

– `workspace.root`: path, default `/symphony_workspaces`

– `worker.ssh_hosts` (extension): list of SSH host strings, optional; when omitted, work runs
locally

– `worker.max_concurrent_agents_per_host` (extension): positive integer, optional; shared per-host
cap applied across configured SSH hosts

– `hooks.after_create`: shell script or null

– `hooks.before_run`: shell script or null

– `hooks.after_run`: shell script or null

– `hooks.before_remove`: shell script or null

– `hooks.timeout_ms`: integer, default `60000`

– `agent.max_concurrent_agents`: integer, default `10`

– `agent.max_turns`: integer, default `20`

– `agent.max_retry_backoff_ms`: integer, default `300000` (5m)

– `agent.max_concurrent_agents_by_state`: map of positive integers, default `{}`

– `codex.command`: shell command string, default `codex app-server`

– `codex.approval_policy`: Codex `AskForApproval` value, default implementation-defined

– `codex.thread_sandbox`: Codex `SandboxMode` value, default implementation-defined

– `codex.turn_sandbox_policy`: Codex `SandboxPolicy` value, default implementation-defined

– `codex.turn_timeout_ms`: integer, default `3600000`

– `codex.read_timeout_ms`: integer, default `5000`

– `codex.stall_timeout_ms`: integer, default `300000`

– `server.port` (extension): integer, optional; enables the optional HTTP server, `0` may be used
for ephemeral local bind, and CLI `--port` overrides it

## 7. Orchestration State Machine

The orchestrator is the only component that mutates scheduling state. All worker outcomes are
reported back to it and converted into explicit state transitions.

### 7.1 Issue Orchestration States

These states are not the same as tracker states (`Todo`, `In Progress`, etc.). They describe the
service’s internal claim state for an issue.

1. `Unclaimed`

– Issue is not running and has no retry scheduled.

2. `Claimed`

– Orchestrator has reserved the issue to prevent duplicate dispatch.

– In practice, claimed issues are either `Running` or `RetryQueued`.

3. `Running`

– Worker task exists and the issue is tracked in `running` map.

4. `RetryQueued`

– Worker is not running, but a retry timer exists in `retry_attempts`.

5. `Released`

– Claim removed because issue is terminal, non-active, missing, or retry path completed without
re-dispatch.

Important nuance:

– A successful worker exit does not mean the issue is done forever.

– The worker may continue through multiple back-to-back coding-agent turns before it exits.

– After each normal turn completion, the worker re-checks the tracker issue state.

– If the issue is still in an active state, the worker should start another turn on the same live
coding-agent thread in the same workspace, up to `agent.max_turns`.

– The first turn should use the full rendered task prompt.

– Continuation turns should send only continuation guidance to the existing thread, not resend the
original task prompt that is already present in thread history.

– Once the worker exits normally, the orchestrator still schedules a short continuation retry
(about 1 second) so it can re-check whether the issue remains active and needs another worker
session.

### 7.2 Run Attempt Lifecycle

A run attempt transitions through these phases:

1. `PreparingWorkspace`

2. `BuildingPrompt`

3. `LaunchingAgentProcess`

4. `InitializingSession`

5. `StreamingTurn`

6. `Finishing`

7. `Succeeded`

8. `Failed`

9. `TimedOut`

10. `Stalled`

11. `CanceledByReconciliation`

Distinct terminal reasons are important because retry logic and logs differ.

### 7.3 Transition Triggers

– `Poll Tick`

– Reconcile active runs.

– Validate config.

– Fetch candidate issues.

– Dispatch until slots are exhausted.

– `Worker Exit (normal)`

– Remove running entry.

– Update aggregate runtime totals.

– Schedule continuation retry (attempt `1`) after the worker exhausts or finishes its in-process
turn loop.

– `Worker Exit (abnormal)`

– Remove running entry.

– Update aggregate runtime totals.

– Schedule exponential-backoff retry.

– `Codex Update Event`

– Update live session fields, token counters, and rate limits.

– `Retry Timer Fired`

– Re-fetch active candidates and attempt re-dispatch, or release claim if no longer eligible.

– `Reconciliation State Refresh`

– Stop runs whose issue states are terminal or no longer active.

– `Stall Timeout`

– Kill worker and schedule retry.

### 7.4 Idempotency and Recovery Rules

– The orchestrator serializes state mutations through one authority to avoid duplicate dispatch.

– `claimed` and `running` checks are required before launching any worker.

– Reconciliation runs before dispatch on every tick.

– Restart recovery is tracker-driven and filesystem-driven (no durable orchestrator DB required).

– Startup terminal cleanup removes stale workspaces for issues already in terminal states.

## 8. Polling, Scheduling, and Reconciliation

### 8.1 Poll Loop

At startup, the service validates config, performs startup cleanup, schedules an immediate tick, and
then repeats every `polling.interval_ms`.

The effective poll interval should be updated when workflow config changes are re-applied.

Tick sequence:

1. Reconcile running issues.

2. Run dispatch preflight validation.

3. Fetch candidate issues from tracker using active states.

4. Sort issues by dispatch priority.

5. Dispatch eligible issues while slots remain.

6. Notify observability/status consumers of state changes.

If per-tick validation fails, dispatch is skipped for that tick, but reconciliation still happens.

### 8.2 Candidate Selection Rules

An issue is dispatch-eligible only if all are true:

– It has `id`, `identifier`, `title`, and `state`.

– Its state is in `active_states` and not in `terminal_states`.

– It is not already in `running`.

– It is not already in `claimed`.

– Global concurrency slots are available.

– Per-state concurrency slots are available.

– Blocker rule for `Todo` state passes:

– If the issue state is `Todo`, do not dispatch when any blocker is non-terminal.

Sorting order (stable intent):

1. `priority` ascending (1..4 are preferred; null/unknown sorts last)

2. `created_at` oldest first

3. `identifier` lexicographic tie-breaker
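The blocker rule and the sorting order can be expressed compactly. The sketch below uses a reduced, hypothetical `Issue` record with only the fields these two rules need. It makes three assumptions that the rules above leave open: only a null priority sorts last, a missing `created_at` sorts after known timestamps, and a blocker with an unknown state counts as non-terminal.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Iterable, List, Optional


@dataclass
class Issue:  # reduced, illustrative record
    identifier: str
    state: str
    priority: Optional[int] = None
    created_at: Optional[datetime] = None
    blocker_states: List[Optional[str]] = field(default_factory=list)


def is_blocked(issue: Issue, terminal_states: Iterable[str]) -> bool:
    """Apply the Todo blocker rule: any non-terminal blocker blocks dispatch."""
    if issue.state.lower() != "todo":
        return False
    terminal = {state.lower() for state in terminal_states}
    # Assumption: a blocker whose state is unknown (None) is non-terminal.
    return any((state or "").lower() not in terminal
               for state in issue.blocker_states)


def dispatch_sort_key(issue: Issue):
    """Sort by priority ascending, then oldest created_at, then identifier."""
    return (
        issue.priority is None,              # null priority sorts last
        issue.priority or 0,
        issue.created_at is None,            # assumption: missing dates last
        issue.created_at or datetime.min,
        issue.identifier,
    )
```

Candidates would be ordered with `sorted(issues, key=dispatch_sort_key)` after filtering out blocked and already-claimed issues.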

### 8.3 Concurrency Control

Global limit:

– `available_slots = max(max_concurrent_agents - running_count, 0)`

Per-state limit:

– `max_concurrent_agents_by_state[state]` if present (state key normalized)

– otherwise fallback to global limit

The runtime counts issues by their current tracked state in the `running` map.

Optional SSH host limit:

– When `worker.max_concurrent_agents_per_host` is set, each configured SSH host may run at most
that many agents concurrently.

– Hosts at that cap are skipped for new dispatch until capacity frees up.
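The global and per-state limits can be checked with two small functions. This is an illustrative sketch; `available_global_slots` and `has_state_slot` are hypothetical names, and `running_states` stands for the tracked states of the entries in the `running` map. The optional SSH host cap is not covered here.

```python
from collections import Counter
from typing import Iterable, Mapping


def available_global_slots(max_concurrent_agents: int, running_count: int) -> int:
    """Global limit: never negative, even if the limit was lowered at runtime."""
    return max(max_concurrent_agents - running_count, 0)


def has_state_slot(state: str,
                   running_states: Iterable[str],
                   max_concurrent_agents: int,
                   by_state: Mapping[str, int]) -> bool:
    """Per-state limit, falling back to the global limit when none is set.

    by_state is expected to hold lowercase state keys.
    """
    key = state.lower()
    limit = by_state.get(key, max_concurrent_agents)
    running_in_state = Counter(s.lower() for s in running_states)[key]
    return running_in_state < limit
```

An issue is dispatched only when `available_global_slots(...) > 0` and `has_state_slot(...)` is true for its state.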

### 8.4 Retry and Backoff

Retry entry creation:

– Cancel any existing retry timer for the same issue.

– Store `attempt`, `identifier`, `error`, `due_at_ms`, and new timer handle.

Backoff formula:

– Normal continuation retries after a clean worker exit use a short fixed delay of `1000` ms.

– Failure-driven retries use `delay = min(10000 * 2^(attempt - 1), agent.max_retry_backoff_ms)`.

– The delay is capped by the configured max retry backoff (default `300000` / 5m).
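As a worked example of the failure backoff, the sketch below computes the delay for a 1-based retry attempt. The function and constant names are hypothetical; the numeric values come from the formula and defaults above.

```python
CONTINUATION_DELAY_MS = 1000   # fixed delay after a clean worker exit
BASE_FAILURE_DELAY_MS = 10000  # first failure-driven retry delay


def failure_backoff_ms(attempt: int, max_retry_backoff_ms: int = 300000) -> int:
    """Exponential backoff for failure-driven retries (attempt is 1-based)."""
    return min(BASE_FAILURE_DELAY_MS * 2 ** (attempt - 1), max_retry_backoff_ms)
```

With the default cap, attempts 1 through 5 wait 10 s, 20 s, 40 s, 80 s, and 160 s; attempt 6 would be 320 s uncapped, so it and all later attempts wait the 300 s maximum.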

Retry handling behavior:

1. Fetch active candidate issues (not all issues).

2. Find the specific issue by `issue_id`.

3. If not found, release claim.

4. If found and still candidate-eligible:

– Dispatch if slots are available.

– Otherwise requeue with error `no available orchestrator slots`.

5. If found but no longer active, release claim.

– Terminal-state workspace cleanup is handled by startup cleanup and active-run reconciliation
(including terminal transitions for currently running issues).

– Retry handling mainly operates on active candidates and releases claims when the issue is absent,
rather than performing terminal cleanup itself.

### 8.5 Active Run Reconciliation

Reconciliation runs every tick and has two parts.

Part A: Stall detection

– For each running issue, compute `elapsed_ms` since:

– `last_codex_timestamp` if any event has been seen, else

– `started_at`

– If `elapsed_ms > codex.stall_timeout_ms`, terminate the worker and queue a retry.

– If `stall_timeout_ms <= 0`, skip stall detection entirely.

Part B: Tracker state refresh

– Fetch current issue states for all running issue IDs.

– For each running issue:

– If tracker state is terminal: terminate worker and clean workspace.

– If tracker state is still active: update the in-memory issue snapshot.

– If tracker state is neither active nor terminal: terminate worker without workspace cleanup.

– If state refresh fails, keep workers running and try again on the next tick.
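Both reconciliation parts reduce to small pure decisions, sketched below. The names `is_stalled`, `reconcile_action`, and the `Action` enum are hypothetical. Timestamps are assumed to be milliseconds from the same monotonic clock.

```python
from enum import Enum
from typing import Iterable, Optional


class Action(Enum):
    KEEP = "keep"                      # still active: refresh the snapshot
    STOP_AND_CLEAN = "stop_and_clean"  # terminal: stop worker, clean workspace
    STOP = "stop"                      # neither: stop worker, keep workspace


def is_stalled(now_ms: int, started_at_ms: int,
               last_event_ms: Optional[int], stall_timeout_ms: int) -> bool:
    """Part A: compare time since the last event (or start) to the timeout."""
    if stall_timeout_ms <= 0:
        return False  # stall detection disabled
    reference = last_event_ms if last_event_ms is not None else started_at_ms
    return now_ms - reference > stall_timeout_ms


def reconcile_action(state: str, active_states: Iterable[str],
                     terminal_states: Iterable[str]) -> Action:
    """Part B: decide what to do with a running issue given its tracker state."""
    normalized = state.lower()
    if normalized in {s.lower() for s in terminal_states}:
        return Action.STOP_AND_CLEAN
    if normalized in {s.lower() for s in active_states}:
        return Action.KEEP
    return Action.STOP
```

If the tracker refresh itself fails, neither function is called for that tick and the workers are left running.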

### 8.6 Startup Terminal Workspace Cleanup

When the service starts:

1. Query tracker for issues in terminal states.

2. For each returned issue identifier, remove the corresponding workspace directory.

3. If the terminal-issues fetch fails, log a warning and continue startup.

This prevents stale terminal workspaces from accumulating after restarts.

## 9. Workspace Management and Safety

### 9.1 Workspace Layout

Workspace root:

– `workspace.root` (normalized path; the current config layer expands path-like values and preserves
bare relative names)

Per-issue workspace path:

– `<workspace.root>/<workspace_key>`

Workspace persistence:

– Workspaces are reused across runs for the same issue.

– Successful runs do not auto-delete workspaces.

### 9.2 Workspace Creation and Reuse

Input: `issue.identifier`

Algorithm summary:

1. Sanitize identifier to `workspace_key`.

2. Compute workspace path under workspace root.

3. Ensure the workspace path exists as a directory.

4. Mark `created_now=true` only if the directory was created during this call; otherwise
`created_now=false`.

5. If `created_now=true`, run `after_create` hook if configured.

– This section does not assume any specific repository/VCS workflow.

– Workspace preparation beyond directory creation (for example dependency bootstrap, checkout/sync,
code generation) is implementation-defined and is typically handled via hooks.
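Steps 1 through 4 of the algorithm can be sketched with the standard library alone. `ensure_workspace` and the `Workspace` record are hypothetical names; running the `after_create` hook (step 5) is left to the caller, which would check `created_now`. The sketch assumes the workspace path is the sanitized key joined under the workspace root.

```python
import os
import re
import tempfile
from dataclasses import dataclass


@dataclass
class Workspace:
    path: str
    workspace_key: str
    created_now: bool


def ensure_workspace(root: str, identifier: str) -> Workspace:
    """Create the per-issue directory if needed and report whether it is new."""
    key = re.sub(r"[^A-Za-z0-9._-]", "_", identifier)
    path = os.path.join(root, key)
    try:
        # Without exist_ok, makedirs raises if the path already exists,
        # which tells us whether this call created the directory.
        os.makedirs(path)
        created_now = True
    except FileExistsError:
        if not os.path.isdir(path):
            raise  # a non-directory is in the way; surface the error
        created_now = False
    return Workspace(path=path, workspace_key=key, created_now=created_now)


# Usage: the second call reuses the directory and reports created_now=False.
with tempfile.TemporaryDirectory() as demo_root:
    first = ensure_workspace(demo_root, "ABC/123")
    second = ensure_workspace(demo_root, "ABC/123")
```

Detecting creation through the `FileExistsError` path avoids a separate existence check that could race with another process creating the same directory.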

### 9.3 Optional Workspace Population (Implementation-Defined)

The spec does not require any built-in VCS or repository bootstrap behavior.

Implementations may populate or synchronize the workspace using implementation-defined logic and/or
hooks (for example `after_create` and/or `before_run`).

Failure handling:

– Workspace population/synchronization failures return an error for the current attempt.

– If failure happens while creating a brand-new workspace, implementations may remove the partially
prepared directory.

– Reused workspaces should not be destructively reset on population failure unless that policy is
explicitly chosen and documented.

### 9.4 Workspace Hooks

Supported hooks:

– `hooks.after_create`

– `hooks.before_run`

– `hooks.after_run`

– `hooks.before_remove`

Execution contract:

– Execute in a local shell context appropriate to the host OS, with the workspace directory as
`cwd`.

– On POSIX systems, `sh -lc