Your Gateway to Power, Energy, Datacenters, Bitcoin and AI

Dive into the latest industry updates, our exclusive Paperboy Newsletter, and curated insights designed to keep you informed. Stay ahead with minimal time spent.

Discover What Matters Most to You

AI:


Bitcoin:


Datacenter:


Energy:


Featured Articles

Flawed Cisco update threatens to stop APs from getting further patches

Johannes Ullrich, dean of research at the SANS Institute, called this particular problem uncommon, although he acknowledged flash memory space in IoT devices like access points is limited and may fill up from time to time. “But,” he added, “there is a bigger issue: A competent [vendor] vulnerability management program must always include verification that the patch was indeed applied as expected. There are many reasons why a patch may not be applied correctly, and this is just one way a patch may fail to apply.” Kellman Meghu, CTO of incident response firm DeepCove Cybersecurity, said overflowing a fixed device’s memory due to a bug “would have me rather annoyed with this vendor. This is very rare in my experience, and something that was an issue way back when storage costs were a factor. I would expect my vendor to be able to clean and manage storage for fixed devices. If this device is supported, this would be an RMA [return merchandise authorization] or fix issue, and expectation [for vendor action] would be right away/proactive.” Affected are access points running IOS XE versions 17.12.4, 17.12.5, 17.12.6, and 17.12.6a. These include Cisco Catalyst 9130AX series APs, as well as 9130AX models with a Stadium Antenna, Catalyst 9136I, 9162I, 9163E, 9164I, 9166D1, and IW9167 series APs, and Wi-Fi 6 Outdoor APs. There are two ways for admins to solve the problem: Download a Cisco tool called WLANPoller, which automates execution of a fix across multiple APs, or manually use the show boot command on each device to look into the boot partition and see if it has enough space for an upgrade. Greater detail on the necessary action is in the Cisco advisory.

Energy Department Awards New Contracts from Strategic Petroleum Reserve, Advancing Emergency Exchange

WASHINGTON—The U.S. Department of Energy’s (DOE) Hydrocarbons and Geothermal Energy Office (HGEO) today announced awards of contracts to exchange 26 million barrels of crude oil from the Strategic Petroleum Reserve (SPR) at the West Hackberry site, marking the next phase of DOE’s execution of the United States’ 172-million-barrel contribution to the International Energy Agency’s collective action to stabilize global oil supply. These awards follow DOE’s recent Request for Proposal (RFP) for this portion of the emergency exchange, with deliveries beginning immediately as the Department continues to move quickly to address short-term supply disruptions and strengthen energy security for the United States. “Through this emergency exchange, the Department is taking swift action to support near‑term supply needs while strengthening the Strategic Petroleum Reserve for the long term,” said Kyle Haustveit, Assistant Secretary of the Hydrocarbons and Geothermal Energy Office. “By returning additional premium barrels at no cost to taxpayers, this exchange reinforces market reliability today and delivers meaningful value to the American people when those barrels are returned.” Under these awards, DOE will move forward with an exchange of 26 million barrels of crude oil, which will be returned with additional premium barrels by next year—supporting energy security and delivering value for the American people at no cost to taxpayers. This action builds on earlier exchange actions, which have already awarded approximately 55 million barrels from the Bayou Choctaw, Bryan Mound, and West Hackberry sites, demonstrating the reserve’s ability to deliver crude efficiently under emergency conditions. To date, more than 10 million barrels have already been delivered to market. The exchange also allows participating companies to take advantage of the President’s limited Jones Act waiver, helping accelerate critical near-term oil flows into the market. 
Companies can begin scheduling deliveries immediately. DOE will continue to evaluate market conditions and operational capacity as it advances

IPv6 may briefly have accounted for more than half of internet traffic

Has IPv6 finally reached its day of glory? It’s fair to say that IPv6 has not had the level of take-up expected when the Internet Engineering Task Force (IETF) ratified it back in 1998. Take-up has been agonizingly slow, not reaching 5% of traffic until 2014. However, the use of IPv6 has been slowly climbing since and, according to Google statistics, it briefly accounted on March 28 for 50.1% of the internet traffic Google sees. Technology publication The Register, which spotted the tiny but significant blip in Google’s traffic graphic, quoted two other sources, Cloudflare and APNIC Labs, as stating that IPv6 had yet to reach such an exalted level: Cloudflare tracked it at a high of 43%, while APNIC registered that 43.13% of network hosts across the world were IPv6 capable.

Broadcom’s Facebook friend will help train it to accelerate AI workloads

As part of the agreement, Broadcom is deploying its Ethernet-based backbone to scale up compute within MTIA racks. Its enhanced network capacity is designed to eliminate network congestion, even under the most intensive AI workloads. “This initial MTIA deployment is just the beginning of a sustained, multi-generation roadmap to serve the trajectory of massive growth over the next few years highlighting Broadcom’s unmatched leadership in AI networking and the power of our foundational XPU custom accelerator platform,” Broadcom CEO Hock Tan said, according to a company news release. Broadcom has been busy polishing its AI credentials. Just last week, it signed agreements with Anthropic to develop a new generation of AI chips, and with Google to boost its infrastructure for AI development.

Data centers are costing local governments billions

Tax benefits for hyperscalers and other data center operators are costing local administrations billions of dollars. In the US, three states are already giving away more than $1 billion in potential tax revenue, while 14 are failing to declare how much data center subsidies are costing taxpayers, according to Good Jobs First. The campaign group said the failure to declare the tax subsidies goes against US Generally Accepted Accounting Principles (GAAP) and that they should, since 2017, be declared as lost revenue. “Tax-abatement laws written long ago for much smaller data centers, predating massive artificial intelligence (AI) facilities, are now unexpectedly costing governments billions of dollars in lost tax revenue,” Good Jobs First said. “Three states, Georgia, Virginia, and Texas, already lose $1 billion or more per year,” it reported in its new study, “Data Center Tax Abatements: Why States and Localities Must Disclose These Soaring Revenue Losses.”

Equinix offering targets automated AI-centric network operations

Another component, Fabric Application Connect, functions as a private, dedicated connectivity marketplace for AI services. It lets enterprises access inference, training, storage, and security providers over private connections, bypassing the public Internet and limiting data exposure during AI development and deployment. Operational visibility is provided through Fabric Insights, an AI-powered monitoring layer that analyzes real-time network telemetry to detect anomalies and predict potential issues before they impact workloads. Fabric Insights integrates with security information and event management (SIEM) platforms such as Splunk and Datadog and feeds data directly into Fabric Super-Agent to support automated remediation. Fabric Intelligence operates on top of Equinix’s global infrastructure footprint, which includes hundreds of data centers across dozens of metropolitan markets. The platform is positioned as part of Equinix Fabric, a connectivity portfolio used by thousands of customers worldwide to link cloud providers, enterprises, and network services. Fabric Intelligence is available now to preview.

Apply Now: 2026 Waste to Energy and Materials Technical Assistance for State, Local, and Tribal Governments

The U.S. Department of Energy’s Alternative Fuels and Feedstocks Office (AFFO), formerly known as the Bioenergy Technologies Office, and the National Laboratory of the Rockies (NLR) are launching the 2026 Waste to Energy and Materials Technical Assistance Program for state, local, and Tribal governments. The scope of this year’s program has been expanded to include additional municipal solid waste materials such as electronics, industrial wastewater, and other byproducts.

U.S. waste streams present significant logistical and economic challenges for states, counties, municipalities, and Tribal governments. However, waste is also a resource that can be used as an unconventional additional source of energy, advanced materials, and critical minerals. This program provides no-cost technical assistance to states, counties, municipalities, and Tribal governments with the most relevant data to guide decision-making, providing local solutions to the various aspects of waste management, taking into consideration current handling practices, costs, and infrastructure. It is designed to help officials evaluate the most sensible end uses for their waste, whether repurposing it for on-site heat and power, upgrading it into transportation fuels, or using it for material and mineral recovery.

Program technical assistance includes:

Waste resource information
Infrastructure considerations
Techno-economic comparison of energy, material, and mineral recovery options
Evaluation and sharing of case studies (to the extent possible) from similar communities/projects

The 2026 Waste to Energy and Materials Technical Assistance application portal is now open and applications will be accepted through May 30, 2026. For information on applicant eligibility and how to apply, please visit NLR’s technical assistance webpage.

Timeline for Technical Assistance Opportunity

April 15, 2026: Application Portal Opens
May 30, 2026: Application Portal Closes
July – August 2026: Selections Made and Recipients Informed

Learn more about AFFO-supported waste to energy and materials technical assistance. If you have further questions, please see frequently asked questions or contact the Waste to

Energy Deputy Secretary Danly Commends FERC Action on Large Load Interconnection Reform

WASHINGTON—U.S. Deputy Secretary of Energy James P. Danly issued the following statement after the Federal Energy Regulatory Commission (FERC or Commission) announced it will take action by June 2026 on the large load interconnection proceeding initiated at the direction of U.S. Secretary of Energy Chris Wright:

“FERC’s announcement today demonstrates Chairman Swett’s commitment to implement Secretary Wright’s directive that the Commission ensure the timely and orderly integration of large electric loads that deliver on President Trump’s goal of American energy dominance.

“I expect that the Commission will act quickly and decisively to improve interconnection processes, support the co-location of load and generation, and accelerate the addition of new generation to ensure that supply is built alongside demand—delivering affordable, reliable, and secure energy for all Americans.

“Having served at FERC as commissioner and chairman, I understand FERC’s role in ensuring the reliability of the nation’s bulk power system, and I commend Chairman Swett for focusing on affordability and reliability.”

Petrobras discovers hydrocarbons in Campos basin presalt offshore Brazil

Petrobras has discovered the presence of hydrocarbons in the Campos basin presalt offshore Brazil during exploration in sector SC-AP4, block CM-477. Samples taken from the well, 1-BRSA-1404DC-RJS, will be sent for laboratory analysis with the aim of characterizing the conditions of the reservoirs and fluids found to enable continued evaluation of the area’s potential, the company said in a release Apr. 13. The discovery well was drilled 201 km off the coast of the state of Rio de Janeiro in water depth of 2,984 m. The hydrocarbon-bearing interval was confirmed through electrical profiles, gas evidence, and fluid sampling. Petrobras is the operator of block CM-477 with 70% interest. bp plc holds the remaining 30%.

bp to operate blocks offshore Namibia through acquisition

bp plc aims to become operator of three exploration blocks offshore Namibia through acquisition of a 60% interest from Eco Atlantic Oil & Gas. Subject to Namibian government and joint venture partner approvals, bp will operate blocks PEL97, PEL99, and PEL100 in Walvis basin. In a release Apr. 13, bp said entering the blocks builds on its recent exploration successes in Namibia through Azule Energy, a 50-50 joint venture between bp and Eni. Eco Atlantic will remain a partner, along with Namibia’s national oil company NAMCOR, following the deal’s closing, which is subject to closing conditions.

ConocoPhillips sends team to Venezuela to evaluate oil, gas opportunities

ConocoPhillips sent a team to Venezuela to evaluate oil and gas opportunities, the company confirmed to Oil & Gas Journal Apr. 13. In an email to OGJ, a company spokesperson said “ConocoPhillips can confirm that we sent a small evaluation team to Venezuela during the week of Apr. 6 to better understand the potential for in-country oil and gas opportunities.” Asked what clarity the company seeks, the spokesperson said the team “will evaluate Venezuela against other international opportunities as part of our disciplined investment framework.” The operator left Venezuela in 2007 after then-President Hugo Chavez’s government reverted privately run oil fields to state control. ConocoPhillips, along with ExxonMobil, refused the government’s terms and took claims to the World Bank’s International Centre for the Settlement of Investment Disputes (ICSID). ConocoPhillips is owed about $12 billion following two judgements, an amount still sought by the company, which, prior to the expropriation of its interests, held a 50.1% interest in Petrozuata, a 40% interest in Hamaca, and a 32.5% interest in Corocoro heavy oil projects in Venezuela. In January, following the removal of Venezuela’s leader Nicolas Maduro, US President Donald Trump urged oil and gas companies to spend billions to rebuild Venezuela’s energy sector. ExxonMobil, which also exited the country in 2007, sent a technical team to Venezuela in March to evaluate the infrastructure and investment opportunities. In a discussion at CERAWeek by S&P Global in Houston in March, ConocoPhillips’ chief executive officer, Ryan Lance, said Venezuela needs to “completely rewire” its fiscal system to attract new investment. The South American country holds a large cache of proven oil reserves, but has faced decades of production challenges due to mismanagement, underinvestment, and sanctions.

Microsoft will invest $80B in AI data centers in fiscal 2025

And Microsoft isn’t the only one that is ramping up its investments into AI-enabled data centers. Rival cloud service providers are all investing in either upgrading or opening new data centers to capture a larger chunk of business from developers and users of large language models (LLMs). In a report published in October 2024, Bloomberg Intelligence estimated that demand for generative AI would push Microsoft, AWS, Google, Oracle, Meta, and Apple to devote, between them, $200 billion to capex in 2025, up from $110 billion in 2023. Microsoft is one of the biggest spenders, followed closely by Google and AWS, Bloomberg Intelligence said. Its estimate of Microsoft’s capital spending on AI, at $62.4 billion for calendar 2025, is lower than Smith’s claim that the company will invest $80 billion in the fiscal year to June 30, 2025. Both figures, though, are way higher than Microsoft’s 2020 capital expenditure of “just” $17.6 billion. The majority of the increased spending is tied to cloud services and the expansion of AI infrastructure needed to provide compute capacity for OpenAI workloads. Separately, last October Amazon CEO Andy Jassy said his company planned total capex spend of $75 billion in 2024 and even more in 2025, with much of it going to AWS, its cloud computing division.

John Deere unveils more autonomous farm machines to address skilled labor shortage

Self-driving tractors might be the path to self-driving cars. John Deere has revealed a new line of autonomous machines and tech across agriculture, construction and commercial landscaping. The Moline, Illinois-based John Deere has been in business for 187 years, yet it has been a regular non-tech company showing off technology at the big tech trade show in Las Vegas, and it is back at CES 2025 with more autonomous tractors and other vehicles. This is not something we usually cover, but John Deere has a lot of data that is interesting in the big picture of tech. The message from the company is that there aren’t enough skilled farm laborers to do the work that its customers need. It’s been a challenge for most of the last two decades, said Jahmy Hindman, CTO at John Deere, in a briefing. Much of the tech will come this fall and after that. He noted that the average farmer in the U.S. is over 58 and works 12 to 18 hours a day to grow food for us. And he said the American Farm Bureau Federation estimates there are roughly 2.4 million farm jobs that need to be filled annually, and the agricultural work force continues to shrink. (This is my hint to the anti-immigration crowd). While each of these industries experiences its own set of challenges, a commonality across all is skilled labor availability. In construction, about 80% of contractors struggle to find skilled labor. And in commercial landscaping, 86% of landscaping business owners can’t find labor to fill open positions, he said. “They have to figure out how to do

2025 playbook for enterprise AI success, from agents to evals

2025 is poised to be a pivotal year for enterprise AI. The past year has seen rapid innovation, and this year will see the same. This has made it more critical than ever to revisit your AI strategy to stay competitive and create value for your customers. From scaling AI agents to optimizing costs, here are the five critical areas enterprises should prioritize for their AI strategy this year.

1. Agents: the next generation of automation

AI agents are no longer theoretical. In 2025, they’re indispensable tools for enterprises looking to streamline operations and enhance customer interactions. Unlike traditional software, agents powered by large language models (LLMs) can make nuanced decisions, navigate complex multi-step tasks, and integrate seamlessly with tools and APIs. At the start of 2024, agents were not ready for prime time, making frustrating mistakes like hallucinating URLs. They started getting better as frontier large language models themselves improved. “Let me put it this way,” said Sam Witteveen, cofounder of Red Dragon, a company that develops agents for companies, and that recently reviewed the 48 agents it built last year. “Interestingly, the ones that we built at the start of the year, a lot of those worked way better at the end of the year just because the models got better.” Witteveen shared this in the video podcast we filmed to discuss these five big trends in detail. Models are getting better and hallucinating less, and they’re also being trained to do agentic tasks. Another feature that the model providers are researching is a way to use the LLM as a judge, and as models get cheaper (something we’ll cover below), companies can use three or more models to

OpenAI’s red teaming innovations define new essentials for security leaders in the AI era

OpenAI has taken a more aggressive approach to red teaming than its AI competitors, demonstrating its security teams’ advanced capabilities in two areas: multi-step reinforcement and external red teaming. OpenAI recently released two papers that set a new competitive standard for improving the quality, reliability and safety of AI models in these two techniques and more. The first paper, “OpenAI’s Approach to External Red Teaming for AI Models and Systems,” reports that specialized teams outside the company have proven effective in uncovering vulnerabilities that might otherwise have made it into a released model because in-house testing techniques may have missed them. In the second paper, “Diverse and Effective Red Teaming with Auto-Generated Rewards and Multi-Step Reinforcement Learning,” OpenAI introduces an automated framework that relies on iterative reinforcement learning to generate a broad spectrum of novel, wide-ranging attacks. Going all-in on red teaming pays practical, competitive dividends It’s encouraging to see competitive intensity in red teaming growing among AI companies. When Anthropic released its AI red team guidelines in June of last year, it joined AI providers including Google, Microsoft, Nvidia, OpenAI, and even the U.S.’s National Institute of Standards and Technology (NIST), which all had released red teaming frameworks. Investing heavily in red teaming yields tangible benefits for security leaders in any organization. OpenAI’s paper on external red teaming provides a detailed analysis of how the company strives to create specialized external teams that include cybersecurity and subject matter experts. The goal is to see if knowledgeable external teams can defeat models’ security perimeters and find gaps in their security, biases and controls that prompt-based testing couldn’t find. 
What makes OpenAI’s recent papers noteworthy is how well they define using human-in-the-middle

Three Aberdeen oil company headquarters sell for £45m

Three Aberdeen oil company headquarters have been sold in a deal worth £45 million. The CNOOC, Apache and Taqa buildings at the Prime Four business park in Kingswells have been acquired by EEH Ventures. The trio of buildings, totalling 275,000 sq ft, were previously owned by Canadian firm BMO. The financial services powerhouse first bought the buildings in 2014 but took the decision to sell them as part of a “long-standing strategy to reduce their office exposure across the UK”. The deal was the largest to take place in Scotland during the last quarter of 2024.

Trio of buildings snapped up

London-headquartered EEH Ventures was founded in 2013 and owns a number of residential properties, offices, shopping centres and hotels throughout the UK. All three Kingswells-based buildings were pre-let, designed and constructed by Aberdeen property developer Drum in 2012 on a 15-year lease.

[Image: The Aberdeen headquarters of Taqa. Image: CBRE]

The North Sea headquarters of Middle-East oil firm Taqa has previously been described as “an amazing success story in the Granite City”. Taqa announced in 2023 that it intends to cease production from all of its UK North Sea platforms by the end of 2027. Meanwhile, Apache revealed at the end of last year it is planning to exit the North Sea by the end of 2029, blaming the windfall tax. The US firm first entered the North Sea in 2003 but will wrap up all of its UK operations by 2030.

Aberdeen big deals

The Prime Four acquisition wasn’t the biggest Granite City commercial property sale of 2024. American private equity firm Lone Star bought Union Square shopping centre from Hammerson for £111m.

[Image: Aberdeen city centre. Image: Shutterstock]

Hammerson, which also built the property, had originally been seeking £150m. BP’s North Sea headquarters in Stoneywood, Aberdeen, was also sold. Manchester-based

2025 ransomware predictions, trends, and how to prepare

The Zscaler ThreatLabz research team has revealed critical insights and predictions on ransomware trends for 2025. The latest Ransomware Report uncovered a surge in sophisticated tactics and extortion attacks. As ransomware remains a key concern for CISOs and CIOs, the report sheds light on actionable strategies to mitigate risks.

Top Ransomware Predictions for 2025:

● AI-Powered Social Engineering: In 2025, GenAI will fuel voice phishing (vishing) attacks. With the proliferation of GenAI-based tooling, initial access broker groups will increasingly leverage AI-generated voices, which sound more and more realistic by adopting local accents and dialects, to enhance credibility and success rates.
● The Trifecta of Social Engineering Attacks: Vishing, Ransomware and Data Exfiltration. Additionally, sophisticated ransomware groups, like the Dark Angels, will continue the trend of low-volume, high-impact attacks, preferring to focus on an individual company, stealing vast amounts of data without encrypting files, and evading media and law enforcement scrutiny.
● Targeted Industries Under Siege: Manufacturing, healthcare, education and energy will remain primary targets, with no slowdown in attacks expected.
● New SEC Regulations Drive Increased Transparency: 2025 will see an uptick in reported ransomware attacks and payouts due to new, tighter SEC requirements mandating that public companies report material incidents within four business days.
● Ransomware Payouts Are on the Rise: In 2025, ransom demands will most likely increase due to an evolving ecosystem of cybercrime groups specializing in designated attack tactics, and to collaboration among groups that have entered a sophisticated profit-sharing model using Ransomware-as-a-Service.

To combat damaging ransomware attacks, Zscaler ThreatLabz recommends the following strategies:

● Fighting AI with AI: As threat actors use AI to identify vulnerabilities, organizations must counter with AI-powered zero trust security systems that detect and mitigate new threats.
● Advantages of adopting a Zero Trust architecture: A Zero Trust cloud security platform stops

The Download: bad news for inner Neanderthals, and AI warfare’s human illusion

This is today’s edition of The Download, our weekday newsletter that provides a daily dose of what’s going on in the world of technology. The problem with thinking you’re part Neanderthal There’s a theory that many of us have an “inner Neanderthal.” The idea is that Homo sapiens and a cousin species once bred, leaving some people today with a trace of Neanderthal DNA. This DNA is arguably the 21st century’s most celebrated discovery in human evolution. But in 2024, a pair of French geneticists called into question the theory’s very foundations. They proposed that what scientists interpret as interbreeding could instead be explained by population structure—the way genes concentrate in smaller, isolated groups.

Find out what it all means for human evolution. —Ben Crair

This story is from the next issue of our print magazine, which is all about nature. Subscribe now to read it when it lands on Wednesday, April 22.

Why having “humans in the loop” in an AI war is an illusion

—Uri Maoz

AI is starting to shape real wars. It’s at the center of a legal battle between Anthropic and the Pentagon, playing a growing role in the conflict with Iran, and raising questions about how much humans should remain “in the loop.” Under Pentagon guidelines, human oversight is meant to provide accountability, context, and security. But the idea of “humans in the loop” is a comforting distraction. The real danger isn’t that machines will act without oversight; it’s that human overseers have no idea what the machines are actually “thinking.” Thankfully, science may offer a way forward. Read the full op-ed on the urgent need for new safeguards around AI warfare.

The must-reads

I’ve combed the internet to find you today’s most fun/important/scary/fascinating stories about technology.

1 Despite blacklisting Anthropic, the White House wants its new model
Trump officials are negotiating access to Mythos. (Axios)
+ Anthropic said it was too dangerous for a public release. (Bloomberg $)
+ Finance ministers are alarmed about the security risks. (BBC)
+ Anthropic just rolled out a model that’s less risky than Mythos. (CNBC)
+ The Pentagon has pursued a culture war against the company. (MIT Technology Review)

2 Sam Altman’s side hustles have raised conflict-of-interest concerns
His opaque investments could influence decisions at OpenAI. (WSJ $)
+ A jury will soon decide if OpenAI abandoned its founding mission. (Wired $)
+ The company is making a big play for science. (MIT Technology Review)

3 A Starlink outage during drone tests exposed the Pentagon’s SpaceX reliance
It was one of several Navy test disruptions linked to Starlink. (Reuters $)
+ The DoD is also tapping Ford and GM for military innovations. (NYT $)

4 Data center delays threaten to choke AI expansion
40% of this year’s projects are at risk of falling behind schedule. (FT $)
+ Partly because no one wants a data center in their backyard. (MIT Technology Review)

5 Alibaba just released its own version of a world model
Happy Oyster is the latest attempt to extend AI’s ability to comprehend physical reality. (SCMP)
+ But they still need to understand cause and effect. (FT $)

6 Google’s Gemini is now generating AI images tailored to personal data
By analyzing users’ Google services and data. (Quartz)
+ Google says it will cut the need for detailed prompts. (TechCrunch)

7 OpenAI is beefing up its agentic coding and development system
Its Codex update is a direct shot at Claude Code. (The Verge)
+ But not everyone is convinced about AI coding. (MIT Technology Review)

8 Europe’s online age verification app is here
It’s available for free to any company that wants it. (Wired $)

9 Smartglasses are giving Korean theaters hope of a K-Pop moment
Their AI-powered translations are taking the shows to the world. (NYT $)

10 Global voice actors are fighting Hollywood’s AI push
Their voices are training the models that are replacing them. (Rest of World)

Quote of the day

“There’s this dark period between now and some time in the future where the advantage is very much offensive AI.”

—Rob Joyce, former director of cybersecurity at the National Security Agency, tells Bloomberg how AI is creating new hacking threats.

One More Thing

[Image courtesy of Noveon Magnetics]

The race to produce rare earth elements

Access to rare earth elements will determine which countries meet their goals for lowering emissions or generating energy from non-fossil-fuel sources. But some nations, including the US, are worried about the supply of these elements. China dominates the market, while extraction in the US is limited. As a result, scientists and companies are exploring unconventional sources. Read the full story on their search for critical minerals.

—Mureji Fatunde

We can still have nice things

A place for comfort, fun and distraction to brighten up your day. (Got any ideas? Drop me a line.)
+ This ska cover of Rage Against the Machine is an upbeat way to start a revolution.
+ We finally know how far Stretch Armstrong can really stretch.
+ Customize these ambient sounds to wash away disruptive thoughts.
+ Here’s proof childhood dreams can come true: a girl guiding a seal to perform tricks.

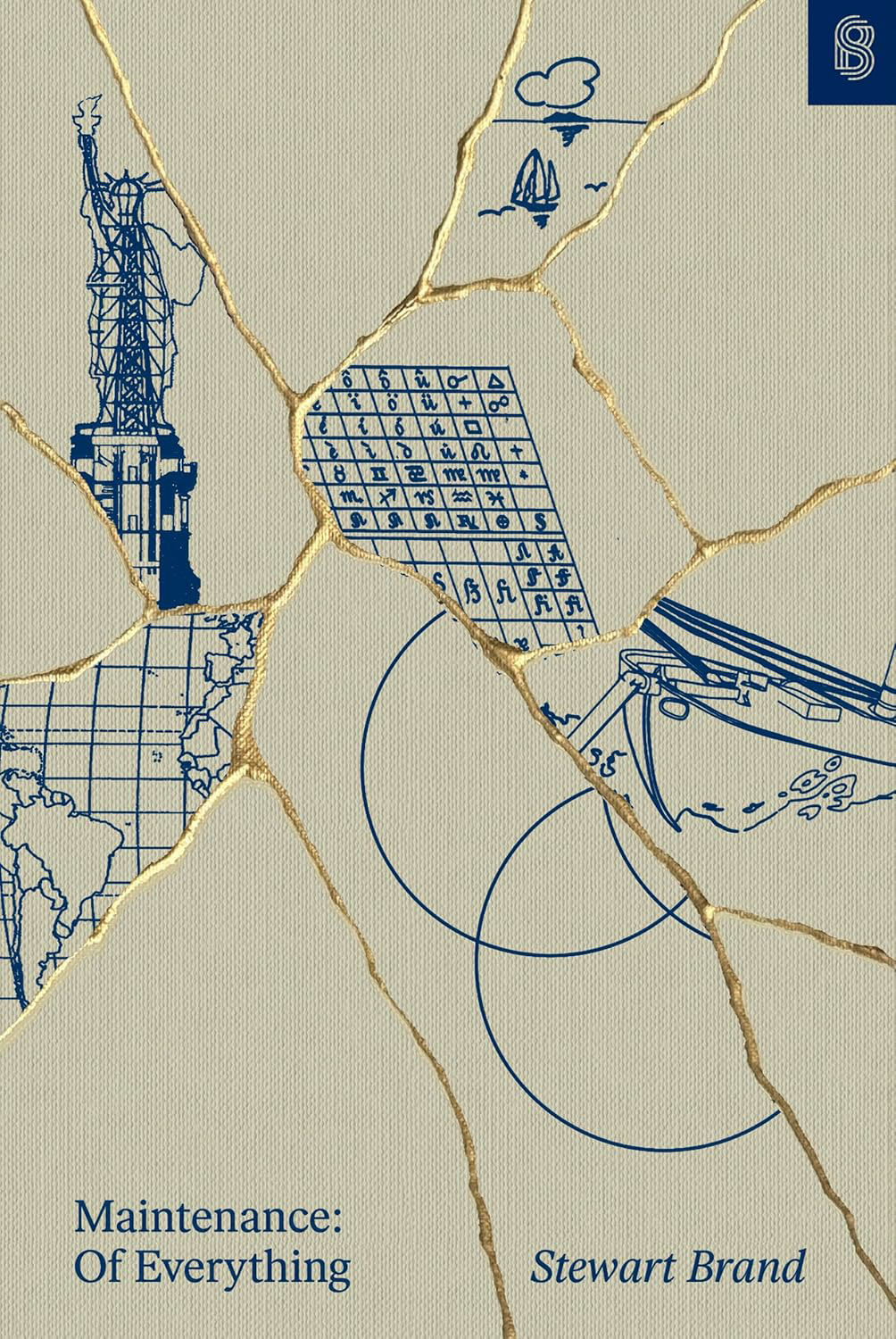

The case for fixing everything

The handsome new book Maintenance: Of Everything, Part One, by the tech industry legend Stewart Brand, promises to be the first in a series offering “a comprehensive overview of the civilizational importance of maintenance.” One of Brand’s several biographers described him as a mainstay of both counterculture and cyberculture, and with Maintenance, Brand wants us to understand that the upkeep and repair of tools and systems has profound impact on daily life. As he puts it, “Taking responsibility for maintaining something—whether a motorcycle, a monument, or our planet—can be a radical act.” Radical how? This volume doesn’t say. In an outline for the overall work, Brand says his goal is to “end with the nature of maintainers and the honor owed them.” The idea that maintainers are owed anything, much less honor, might surprise some readers. Actually, maintenance and repair have been hot topics in academia since the mid-2010s. I played some role in that movement as a cofounder of the Maintainers, a global, interdisciplinary network dedicated to the study of maintenance, repair, care, and all the work that goes into keeping the world going. Brand is right, too, that maintainers haven’t gotten the laurels they deserve. Over the past few decades, scholars have shown that work from oiling tools to replacing worn parts to updating code bases all tends to be lower in status than “innovation.” Maintenance gets neglected in many organizational and social settings. (Just look at some American infrastructure!) And as the right-to-repair movement has shown, companies in pursuit of greater profits have frequently locked us out of being able to do repairs or greatly reduced the maintainable life of their products. It’s hard to think of any other reason to put a computer in the door of a refrigerator.

Some of Brand’s earlier work helped inspire those insights. But his new book makes me think he doesn’t see things that way. For Brand, maintenance seems to be a solitary act, profound but more about personal success and fulfillment than tending to a shared world or making it better. Born in 1938, Brand is 87 years old. A sense hangs over the book—with its battles against corrosion, rust, and decay, with its attempts to keep things going even as they inevitably falter—of someone looking over life and pondering its end. Maintenance: Of Everything connects to every stage of Brand’s life. It’s worth reviewing where it falls in that arc. Brand has always been interested in tools and fixing things, but rarely has he focused on the systems that need the most care.

More than a half-century ago, Brand was a member of the Merry Pranksters, a countercultural, LSD-centered hippie collective famously led by Ken Kesey, the author of One Flew Over the Cuckoo’s Nest. In 1966, Brand co-produced the Trips Festival, where bands like the Grateful Dead and Big Brother and the Holding Company performed for thousands amid psychedelic light shows. In some ways, the Trips Festival set a paradigm for the rest of his life’s work. Brand’s biographers have described him as a network celebrity—someone who got ahead by bringing people together, building coalitions of influential figures who could boost his signal. As Kesey put it in 1980, “Stewart recognizes power. And cleaves to it.” Brand applied this network logic to the undertaking he will always be best remembered for: the Whole Earth Catalog. First published in 1968 and aimed at hippies and members of the nascent back-to-the-land movement, the publication had the motto “Access to tools.” Its pages were full of Quonset huts, geodesic domes, solar panels, well pumps, water filters, and other technologies for life off the grid. It was a vision that might feel progressive or left-leaning, but the libertarian, rugged-individualist philosophy of eschewing corrupt systems and remaking civilization alone stood in contrast to the more collective movements pushing for deep social change at the time—like civil rights, feminism, and environmentalism. That vision also led straight to the empowerment that came with new digital tools, and to Silicon Valley. 
In 1985, Brand published the Whole Earth Software Catalog, the last of the series, and also cofounded the WELL—the Whole Earth ’Lectronic Link, a pioneering online community famous for, among other things, facilitating the trade of Grateful Dead bootlegs. He also wrote a hagiographic book about the MIT Media Lab, known for its corporate-sponsored research into new communications tech. “The Lab would cure the pathologies of technology not with economics or politics but with technology,” Brand wrote. Again, not collective action, not policymaking: tools. And Brand then cofounded the Global Business Network, a group of pricey consulting futurists that further connected him to MIT, Stanford, and the Valley. Brand had literally helped bring about the modern digital revolution. His attention then turned toward its upkeep. Brand’s 1994 book, How Buildings Learn: What Happens After They’re Built, argued against high-modernist architectural ideas. Nearly all buildings eventually get remade, he argued, but he especially favored cheap, simple structures that inhabitants could easily retool to suit changing needs. In some ways, Brand was recapitulating the liberated—or libertarian—philosophy of the Whole Earth Catalog: People can remake their world, if they have access to tools. In a chapter titled “The Romance of Maintenance,” he asked readers to see the beauty, value, and occasional pleasures of fixer-uppers of all kinds. This chapter was a touchstone for many of us in the academic subfield of maintenance studies. Researchers in disciplines like history, sociology, and anthropology, as well as artists and practitioners in fields like libraries, IT, and engineering, all started trying to understand the realities and, yes, romance of maintenance and repair. Brand joined and contributed to Listservs, attended conferences, chatted with intellectual leaders. 
So it’s a bit uncharitable when he writes that his new book is “the first to look at maintenance in general.” He knows better. The real question, though, is what his work has to teach us that others have not said before. In this first volume, the answer is unclear. Maintenance: Of Everything, Part One is an odd book. If so much of Brand’s thinking has been about access to tools, he now asks, in a more extended way: How are our tools maintained? But where Brand began his career with a catalogue, in this volume we get … what? A digest? An almanac? An encyclopedia? Its form and riotous variety fit no genre easily. The book has two chapters. The first, “The Maintenance Race,” recounts the story of three men who took part in the Golden Globe, a round-the-world race for solo sailors held in 1968. Each of the sailors, Brand explains, had a different philosophy of maintenance. One neglected it and hoped for the best. He died. Another thought of and prepared for everything in advance, and while he didn’t win the race, he completed it and once held the record for the “world’s longest recorded nonstop solo sailing voyage.” The final sailor won and did so through heroic acts of perseverance; his style was “Whatever comes, deal with it,” Brand explains. Structured like a fairy tale and unremittingly romantic, the story—like most of the anecdotes in the book—focuses on the derring-do of vigorous white guys. The strategy is no secret. Brand’s outline explains: “Start with a dramatic contest of maintenance styles under life-critical conditions—a true story told as a fable.” This myth is meant to inspire.

The second chapter, “Vehicles (and Weapons),” is over 150 pages long. It has five sections, multiple subsections, five subsections designated “digressions,” one called a “subdigression,” two “postscripts,” and several “footnotes” that are not footnotes in a formal sense but, rather, further addenda. At times, it all feels like notes for a future work. Brand makes no apology for the book’s woolliness. “All I can offer here,” he writes, “is to muse across a representative of maintenance domains and see what emerges.” Perhaps the most charitable reading of the potpourri is that it represents the return of a Merry Prankster, offering us a riotous varied light show. It’s a good book to leave on a table and occasionally open to a random page for entertainment. But it often seems as if it does not know what it wants to say or be. “Vehicles (and Weapons)” begins by paraphrasing two famous works of maintenance philosophy, Robert M. Pirsig’s Zen and the Art of Motorcycle Maintenance and Matthew B. Crawford’s Shop Class as Soulcraft. Maintenance involves both “problem finding” and “problem solving.” While much repair work is marked by anxiety, impatience, and boredom, it also offers positive values and outcomes. “Motorcycle maintainers take heart from what they repair for—the glory of the ride,” Brand writes. The beauty and triumph of cheapness is a running theme throughout the work, harking back to How Buildings Learn. Henry Ford’s Model T won out over early electric vehicles and hugely expensive luxury vehicles like Rolls-Royce’s Silver Ghost because it was cheap and easier to maintain. The three most popular cars in human history—the Ford Model T, the Volkswagen Bug, and the Lada “Classic” from Russia—all privileged cheapness, “retained their basic design for decades, and … invited repair by the owner.” Or, to be fair, maybe demanded it? 
For every hobbyist who delighted in being able to self-reliantly keep a VW running, there must have been thousands who appreciated how cheap it was and hated that it broke a lot. Brand never points to social research, like surveys, that might help us know people’s feelings on such matters. Other sections recount how Americans created interchangeable parts (enabling not only cheap mass production but also easy maintenance), examine how maintenance works with assault rifles and in war, and track the history of technical manuals from the early modern period to the age of YouTube. These stories are solid, but they’re also well known to students of technology, and nearly all are recycled from the work of others, featuring many large block quotes. The volume breaks little new ground. Brand treats maintenance as an unalloyed good. But the field of maintenance studies has moved on, burrowing into the domain’s ironies, complexities, and difficulties. A simple example: In most cases, it is environmentally far better to retire and recycle an internal-combustion vehicle and buy an electric one than to keep the polluting beast going forever. Maintaining a gas-guzzler or a coal-burning power plant isn’t a radical act but a regressive one. Also, maintenance can become a life-breaking burden on the poor, and it falls inequitably on the shoulders of women and people of color. Keeping existing systems going can be a way of avoiding tough, necessary change—like making technological systems more accessible for people with disabilities. In this volume, Brand is uninterested in such difficult trade-offs. He avoids any question of how politics shapes these issues, or how they shape politics. This avoidance comes out most clearly in a section of “Vehicles (and Weapons)” that talks about Elon Musk—a character of “unique mastery,” Brand informs us. He tells us that Bill Gates once shorted Tesla’s stock, only to lose $1.5 billion. The lesson is clear: Elon won. 
In what political and social vision is money the best way to keep the score? Brand rightly points out that electric vehicles have fewer moving parts and, in that sense, are more maintainable than internal-combustion vehicles. He celebrates Musk most of all because his products “have all proven to be game changers in part because they combine ingenious design with surprisingly low cost.” Again, it’s Brand’s “cheap, available tools” hypothesis. But there’s a real superficiality and lack of follow-through in thinking here: Teslas remain luxury vehicles whose sales have slumped since federal tax subsidies disappeared. The company has faced several right-to-repair lawsuits; there’s even a law review article on the topic. Musk is in no sense a maintenance hero. Yet Brand writes that with his companies, “Musk may have done more practical world saving than any other business leader of his time.” By the time Brand was writing this book, the controversies surrounding Musk for at least flirting with antisemitism, racism, sexism, authoritarianism, and more were quite clear. About this, the book says not a word.

Maintenance: Of Everything, Part One, by Stewart Brand (Stripe Press, 2026)

For sure, Brand needn’t agree with Musk’s critics, but failing to even broach the subject is tone-deaf and out of touch. Others have argued that Silicon Valley’s “Move fast and break things” mentality undermines healthy maintenance. Brand doesn’t raise the idea—even to dismiss it. It could be that with Maintenance: Of Everything, Part One Brand is just getting going; that in subsequent volumes he’ll have something more coherent to say; that he’ll raise really hard questions and try to answer them. But given his track record, we might reasonably doubt it. Kesey said Brand cleaves to power; he certainly doesn’t question it.

Lee Vinsel is an associate professor of science, technology, and society at Virginia Tech and host of Peoples & Things, a podcast about human life with technology.

How robots learn: A brief, contemporary history

Roboticists used to dream big but build small. They’d hope to match or exceed the extraordinary complexity of the human body, and then they’d spend their career refining robotic arms for auto plants. Aim for C-3PO; end up with the Roomba. The real ambition for many of these researchers was the robot of science fiction—one that could move through the world, adapt to different environments, and interact safely and helpfully with people. For the socially minded, such a machine could help those with mobility issues, ease loneliness, or do work too dangerous for humans. For the more financially inclined, it would mean a bottomless source of wage-free labor. Either way, a long history of failure left most of Silicon Valley hesitant to bet on helpful robots. That has changed. The machines are yet unbuilt, but the money is flowing: Companies and investors put $6.1 billion into humanoid robots in 2025 alone, four times what was invested in 2024. What happened? A revolution in how machines have learned to interact with the world. Imagine you’d like a pair of robot arms installed in your home purely to do one thing: fold clothes. How would it learn to do that? You could start by writing rules. Check the fabric to figure out how much deformation it can tolerate before tearing. Identify a shirt’s collar. Move the gripper to the left sleeve, lift it, and fold it inward by exactly this distance. Repeat for the right sleeve. If the shirt is rotated, turn the plan accordingly. If the sleeve is twisted, correct it. Very quickly the number of rules explodes, but a complete accounting of them could produce reliable results. This was the original craft of robotics: anticipating every possibility and encoding it in advance. Around 2015, the cutting edge started to do things differently: Build a digital simulation of the robotic arms and the clothes, and give the program a reward signal every time it folds successfully and a ding every time it fails. 
This way, it gets better by trying all sorts of techniques through trial and error, with millions of iterations—the same way AI got good at playing games.
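For readers who want to see the reward-and-ding loop in miniature, here is a toy Python sketch. It is an illustration under loud assumptions, not how any lab's system works: the technique names and success rates are invented, the "simulation" is a coin flip, and a simple epsilon-greedy rule stands in for the much larger simulators and learning algorithms used in practice.

```python
import random

# Hypothetical folding techniques with made-up hidden success rates.
# The learner never sees these numbers; it only sees rewards.
SUCCESS_RATE = {"sleeves_first": 0.9, "fold_in_half": 0.5, "roll_up": 0.2}


def attempt_fold(technique, rng):
    """Simulated trial: +1 reward for a successful fold, -1 (the "ding") for a failure."""
    return 1.0 if rng.random() < SUCCESS_RATE[technique] else -1.0


def learn_by_trial_and_error(trials=5000, explore=0.1, seed=0):
    """Epsilon-greedy learning: mostly repeat what has worked, sometimes try something else."""
    rng = random.Random(seed)
    techniques = list(SUCCESS_RATE)
    value = {t: 0.0 for t in techniques}  # running average reward per technique
    count = {t: 0 for t in techniques}    # how often each technique was tried
    for _ in range(trials):
        if rng.random() < explore:
            choice = rng.choice(techniques)          # explore: pick at random
        else:
            choice = max(techniques, key=value.get)  # exploit: best estimate so far
        reward = attempt_fold(choice, rng)
        count[choice] += 1
        # Incremental update of the running average reward.
        value[choice] += (reward - value[choice]) / count[choice]
    return value


if __name__ == "__main__":
    learned = learn_by_trial_and_error()
    for technique, estimate in learned.items():
        print(f"{technique}: estimated reward {estimate:+.2f}")
```

After enough trials, the estimates should roughly track how often each technique succeeds, which is the whole point: nobody wrote a rule saying which technique is best.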

The arrival of ChatGPT in 2022 catalyzed the current boom. Trained on vast amounts of text, large language models work not through trial and error but by learning to predict what word should come next in a sentence. Similar models adapted to robotics were soon able to absorb pictures, sensor readings, and the position of a robot’s joints and predict the next action the machine should take, issuing dozens of motor commands every second. This conceptual shift—to reliance on AI models that ingest large amounts of data—seems to work whether that helpful robot is supposed to talk to people, move through an environment, or even do complicated tasks. And it was paired with other ideas about how to accomplish this new way of learning, like deploying robots even if they aren’t yet perfect so they can learn from the environment they’re meant to work in. Today, Silicon Valley roboticists are dreaming big again. Here’s how that happened.

Jibo

A movable social robot carried out conversations long before the age of LLMs.

An MIT robotics researcher named Cynthia Breazeal introduced an armless, legless, faceless robot called Jibo to the world in 2014. It looked, in fact, like a lamp. Breazeal’s aim was to create a social robot for families, and the idea pulled in $3.7 million in a crowdsourced funding campaign. Early preorders cost $749. The early Jibo could introduce itself and dance to entertain kids, but that was about it. The vision was always for it to become a sort of embodied assistant that could handle everything from scheduling and emails to telling stories. It earned a number of devoted users, but ultimately the company shut down in 2019.

[Image: A crowdfunding campaign started in 2014 and drew 4,800 Jibo preorders. Courtesy of MIT Media Lab]

In retrospect, one thing that Jibo really needed was better language capabilities. It was competing against Apple’s Siri and Amazon’s Alexa, and all those technologies at the time relied on heavy scripting. In broad terms, when you spoke to them, software would translate your speech into text, analyze what you wanted, and create a response pulled from preapproved snippets. Those snippets could be charming, but they were also repetitive and simply boring—downright robotic. That was especially a challenge for a robot that was supposed to be social and family oriented. What has happened since, of course, is a revolution in how machines can generate language. Voice mode from any leading AI provider is now engaging and impressive, and multiple hardware startups are trying (and failing) to build products that take advantage of it. But that comes with a new risk: While scripted conversations can’t really go off the rails, ones generated by AI certainly can. Some popular AI toys have, for example, talked to kids about how to find matches and knives.
OpenAI Dactyl

A robot hand trained with simulations tries to model the unpredictability and variation of the real world.

By 2018, every leading robotics lab was trying to scrap the old scripted rules and train robots through trial and error. OpenAI tried to train its robotic hand, Dactyl, virtually—with digital models of the hand and of the palm-size cubes Dactyl was supposed to manipulate. The cubes had letters and numbers on their faces; the model might set a task like “Rotate the cube so the red side with the letter O faces upward.”

Here’s the problem: A robotic hand might get really good at doing this in its simulated world, but when you take that program and ask it to work on a real version in the real world, the slight differences between the two can cause things to go awry. Colors might be slightly different, or the deformable rubber in the robot’s fingertips could turn out to be stretchier than it was in simulation.

[Image: Dactyl, part of OpenAI’s first attempt at robotics, was trained in simulation to solve Rubik’s Cubes. Courtesy of OpenAI]

The solution is called domain randomization. You essentially create millions of simulated worlds that all vary slightly and randomly from one another. In each one the friction might be less, or the lighting more harsh, or the colors darkened. Exposure to enough of this variation means the robots will be better able to manipulate the cube in the real world. The approach worked on Dactyl, and one year later it was able to use the same core techniques to do something harder: solving Rubik’s Cubes (though it worked only 60% of the time, and just 20% when the scrambles were particularly hard). Still, the limits of simulation mean that this technique plays a far smaller role today than it did in 2018. OpenAI shuttered its robotics effort in 2021 but has recently started the division up again—reportedly focusing on humanoids.

Google DeepMind RT-2

Training on images from across the internet helps robots translate language into action.

Around 2022, Google’s robotics team was up to some strange things. It spent 17 months handing people robot controllers and filming them doing everything from picking up bags of chips to opening jars. The team ended up cataloguing 700 different tasks. The point was to build and test one of the first large-scale foundation models for robotics. As with large language models, the idea was to input lots of text, tokenize it into a format an algorithm could work with, and then generate an output.
Google’s RT-1 received input about what the robot was looking at and how the many parts of the robotic arm were positioned; then it took an instruction and translated it into motor commands to move the robot. When it had seen tasks before, it carried out 97% of them successfully; it succeeded at 76% of the instructions it hadn’t seen before.

The model RT-2, for Robotic Transformer 2, incorporated internet data to help robots process what they were seeing. COURTESY OF GOOGLE DEEPMIND

The second iteration, RT-2, came out the following year and went even further. Instead of training on data specific to robotics, it went broad: It trained on more general images from across the internet, like the vision-language models lots of researchers were working on at the time. That allowed the robot to interpret where certain objects were in the scene. “All these other things were unlocked,” says Kanishka Rao, a roboticist at Google DeepMind who led work on both iterations. “We could do things now like ‘Put the Coke can near the picture of Taylor Swift.’”

In 2025, Google DeepMind further fused the worlds of large language models and robotics, releasing a Gemini Robotics model with improved ability to understand commands in natural language.

Covariant RFM-1

An AI model that allows robotic arms to act like coworkers.

In 2017, before OpenAI shuttered its first robotics team, a group of its engineers spun out a project called Covariant, aiming to build not sci-fi humanoids but the most pragmatic of all robots: an arm that could pick up and move things in warehouses. After building a system based on foundation models similar to Google’s, Covariant deployed this platform in warehouses like those operated by Crate & Barrel and treated it as a data collection pipeline.

By 2024, Covariant had released a robotics model, RFM-1, that you could interact with like a coworker. If you showed an arm many sleeves of tennis balls, for example, you could then instruct it to move each sleeve to a separate area. And the robot could respond—perhaps predicting that it wouldn’t be able to get a good grip on the item and then asking for advice on which particular suction cups it should use. This sort of thing had been done in experiments, but Covariant was launching it at significant scale. The company now had cameras and data collection machines in every customer location, feeding back even more data for the model to train on.

A Covariant robot demonstrates “induction”—the common warehouse task of placing objects on sorters or conveyors. COURTESY OF COVARIANT

It wasn’t perfect. In a demo in March 2024 with an array of kitchen items, the robot struggled when it was asked to “return the banana” to its original location. It picked up a sponge, then an apple, then a host of other items before it finally accomplished the task. It “doesn’t understand the new concept” of retracing its steps, cofounder Peter Chen told me at the time. “But it’s a good example—it might not work well yet in the places where you don’t have good training data.”

Chen and fellow founder Pieter Abbeel were soon hired by Amazon, which is currently licensing Covariant’s robotics model (Amazon did not respond to questions about how it’s being used, but the company runs an estimated 1,300 warehouses in the US alone).

Agility Robotics Digit

Companies are putting this humanoid to the test in real-world settings.

The new investment dollars flowing to robotics startups are aimed largely at robots shaped not like lamps or arms but like people. Humanoid robots are supposed to be able to seamlessly enter the spaces and jobs where humans currently work, avoiding the need to retool assembly lines to accommodate new shapes such as giant arms. It’s easier said than done. In the rare cases where humanoids appear in real warehouses, they’re often confined to test zones and pilot programs.

Amazon and other companies are using Digit to help move shipping totes. COURTESY OF AGILITY ROBOTICS

That said, Agility’s humanoid Digit appears to be doing some real work. The design—with exposed joints and a distinctly unhuman head—is driven more by function than by sci-fi aesthetics. Amazon, Toyota, and GXO (a logistics giant with customers like Apple and Nike) have all deployed it—making it one of the first examples of a humanoid robot that companies see as providing actual cost savings rather than novelty. Their Digits spend their days picking up, moving, and stacking shipping totes.

The current Digit is still a long way from the humanlike helper Silicon Valley is betting on, though. It can lift only 35 pounds, for example—and every time Agility makes Digit stronger, its battery gets heavier and it has to recharge more often. And standards organizations say humanoids need stricter safety rules than most industrial robots, because they’re designed to be mobile and spend time in proximity to people.

But Digit shows that this revolution in robot training isn’t converging on a single method. Agility relies on simulation techniques like those OpenAI used to train its hand, and the company has worked with Google’s Gemini models to help its robots adapt to new environments. That’s where more than a decade of experiments have gotten the industry: Now it’s building big.

Making AI operational in constrained public sector environments

In partnership with Elastic

The AI boom has hit across industries, and public sector organizations are facing pressure to accelerate adoption. At the same time, government institutions face distinct constraints around security, governance, and operations that set them apart from their business counterparts. For this reason, purpose-built small language models (SLMs) offer a promising path to operationalize AI in these environments.

A Capgemini study found that 79 percent of public sector executives globally are wary about AI’s data security, an understandable figure given the heightened sensitivity of government data and the legal obligations surrounding its use. As Han Xiao, vice president of AI at Elastic, says, “Government agencies must be very restricted about what kind of data they send to the network. This sets a lot of boundaries on how they think about and manage their data.” The fundamental need for control over sensitive information is one of many factors complicating AI deployment, particularly when compared against the private sector’s standard operational assumptions.

Unique operational challenges

When private-sector entities expand AI, they typically assume certain conditions will be in place, including continuous connectivity to the cloud, reliance on centralized infrastructure, acceptance of incomplete model transparency, and limited restrictions on data movement. For many state institutions, however, accepting these conditions could be anything from dangerous to impossible. Government agencies must ensure that their data stays under their control, that information can be checked and verified, and that operational disruptions are kept to an absolute minimum. At the same time, they often have to run their systems in environments where internet connectivity is limited, unreliable, or unavailable. These complexities prevent many promising public sector AI pilots from moving beyond experimentation. “Many people undervalue the operating challenge of AI,” Xiao says. “The public sector needs AI to perform reliably on all kinds of data, and then to be able to grow without breaking. Continuity of operations is often underestimated.” An Elastic survey of public sector leaders found that 65 percent struggle to use data continuously in real time and at scale.

Infrastructure constraints compound the problem. Government organizations may also struggle to obtain the graphics processing units (GPUs) used to train and access complex AI models. As Xiao points out, “Government doesn’t often purchase GPUs, unlike the private sector—they’re not used to managing GPU infrastructure. So accessing a GPU to run the model is a bottleneck for much of the public sector.”

A smaller, more practical model

The many nonnegotiable requirements in the public sector make large language models (LLMs) untenable. But SLMs can be housed locally, offering greater security and control. SLMs are specialized AI models that typically use billions rather than hundreds of billions of parameters, making them far less computationally demanding than the largest LLMs. The public sector does not need to build ever-larger models housed in offsite, centralized locations. An empirical study found that SLMs performed as well as or better than LLMs. SLMs allow sensitive information to be used effectively and efficiently while avoiding the operational complexity of maintaining large models. Xiao puts it this way: “It is easy to use ChatGPT to do proofreading. It’s very difficult to run your own large language models just as smoothly in an environment with no network access.”

SLMs are purpose-built for the needs of the department or agency that will use them. The data is stored securely outside the model, and is only accessed when queried. Carefully engineered prompts ensure that only the most relevant information is retrieved, providing more accurate responses. Using methods such as smart retrieval, vector search, and verifiable source grounding, AI systems can be built that cater to public sector needs. Thus, the next phase of AI adoption in the public sector may be to bring the AI tool to the data, rather than sending the data out into the cloud. Gartner predicts that by 2027, small, specialized AI models will be used three times more than LLMs.
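To make the idea of retrieval with source grounding concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a description of Elastic's products or of any agency's system: the document IDs and texts are invented, the `embed` function is a toy word-count stand-in for a learned embedding model, and the `min_score` threshold is an arbitrary choice. The point it shows is the pattern described above: data stays in a local store outside the model, only the most relevant passages are pulled in when a query arrives, and each passage carries an ID that lets a reader verify the source.

```python
import math
import re
from collections import Counter

# Hypothetical locally stored documents; IDs and text are invented for illustration.
DOCUMENTS = {
    "procurement-001": "Laptop procurement requires three vendor quotes above 5000 euros.",
    "minutes-017": "The council approved the road maintenance budget for next year.",
    "invoice-204": "Invoice for office furniture delivered to the finance department.",
}


def embed(text):
    """Toy embedding (assumption): lowercase word counts instead of a learned vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# The index is built once and kept on the local machine.
INDEX = {doc_id: embed(text) for doc_id, text in DOCUMENTS.items()}


def retrieve(query, k=2, min_score=0.2):
    """Return up to k (doc_id, score) pairs whose similarity reaches min_score."""
    q = embed(query)
    scored = [(doc_id, cosine(q, vec)) for doc_id, vec in INDEX.items()]
    hits = [hit for hit in scored if hit[1] >= min_score]
    return sorted(hits, key=lambda hit: hit[1], reverse=True)[:k]


def grounded_context(query):
    """Build the context a model would be restricted to, with source IDs attached.

    Returns None when nothing relevant is found, so the caller can decline
    to answer instead of letting a model guess.
    """
    hits = retrieve(query)
    if not hits:
        return None
    return "\n".join(f"[{doc_id}] {DOCUMENTS[doc_id]}" for doc_id, _ in hits)


if __name__ == "__main__":
    print(grounded_context("laptop vendor quotes"))
    print(grounded_context("weather forecast"))
```

In a real deployment the word-count vectors would be replaced by embeddings from a locally hosted model and the dictionary by a proper search index. The structure stays the same: retrieve first, attach sources, and decline to answer when nothing relevant is found.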
Superior search capabilities

“When people in the public sector hear AI, they probably think about ChatGPT. But we can be much more ambitious,” says Xiao. “AI can revolutionize how the government searches and manages the large amounts of data they have.”

Looking beyond chatbots reveals one of AI’s most immediate opportunities: dramatically improved search. Like many organizations, the public sector has mountains of unstructured data—including technical reports, procurement documents, minutes, and invoices. Today’s AI, however, can deliver results sourced from mixed media, like readable PDFs, scans, images, spreadsheets, and recordings, and in multiple languages. All of this can be indexed by SLM-powered systems to provide tailored responses and to draft complex texts in any language, while ensuring outputs are legally compliant.

“The public sector has a lot of data, and they don’t always know how to use this data. They don’t know what the possibilities are,” says Xiao. Even more powerful, AI can help government employees interpret the data they access. “Today’s AI can provide you with a completely new view of how to harness that data,” says Xiao. A well-trained SLM can interpret legal norms, extract insights from public consultations, support data-driven executive decision-making, and improve public access to services and administrative information. This can contribute to dramatic improvements in how the public sector conducts its operations.

The small-language promise

Focusing on SLMs shifts the conversation from how comprehensive the model can be to how efficient it is. LLMs incur significant performance and computational costs and require specialized hardware that many public entities cannot afford. Despite requiring some capital expenses, SLMs are less resource-intensive than LLMs, so they tend to be cheaper and reduce environmental impact. Public sector agencies often face stringent audit requirements, and SLM algorithms can be documented and certified as transparent. Some countries, particularly in Europe, also have privacy regulations such as GDPR that SLMs can be designed to meet. Tailored training data produces more targeted results, reducing errors, bias, and hallucinations that AI is prone to.
As Xiao puts it, “Large language models generate text based on what they were trained on, so there is a cut-off date when they were trained. If you ask about anything after that, it will hallucinate. We can solve this by forcing the model to work from verified sources.” Risks are also minimized by keeping data on local servers, or even on a specific device.

This isn’t about isolation but about strategic autonomy to enable trust, resilience, and relevance. By prioritizing task-specific models designed for environments that process data locally, and by continuously monitoring performance and impact, public sector organizations can build lasting AI capabilities that support real-world decisions. “Do not start with a chatbot; start with search,” Xiao advises. “Much of what we think of as AI intelligence is really about finding the right information.”

This content was produced by Insights, the custom content arm of MIT Technology Review. It was not written by MIT Technology Review’s editorial staff. It was researched, designed, and written by human writers, editors, analysts, and illustrators. This includes the writing of surveys and collection of data for surveys. AI tools that may have been used were limited to secondary production processes that passed thorough human review.

Treating enterprise AI as an operating layer