Your Gateway to Power, Energy, Datacenters, Bitcoin and AI

Dive into the latest industry updates, our exclusive Paperboy Newsletter, and curated insights designed to keep you informed. Stay ahead with minimal time spent.

Featured Articles

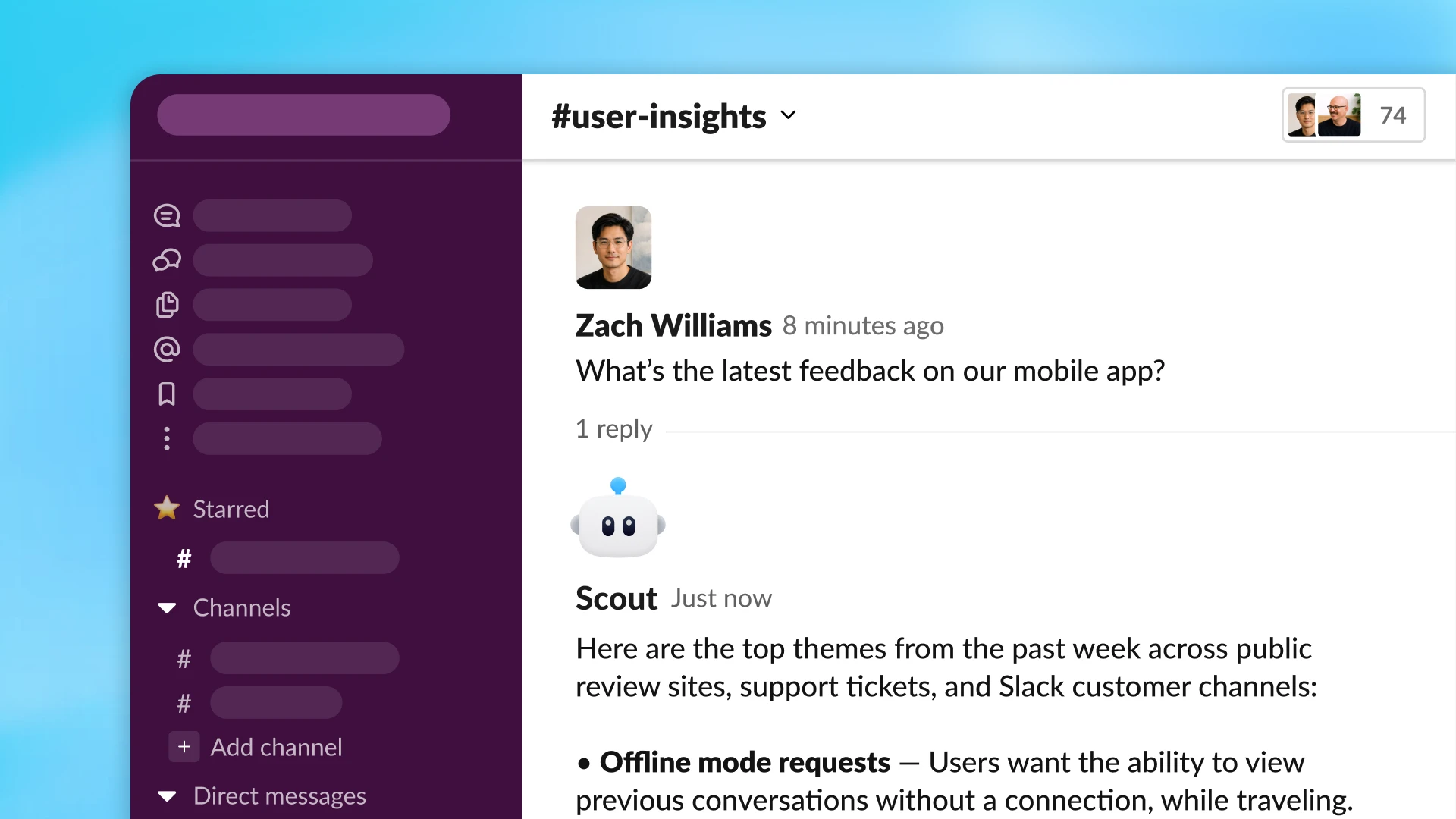

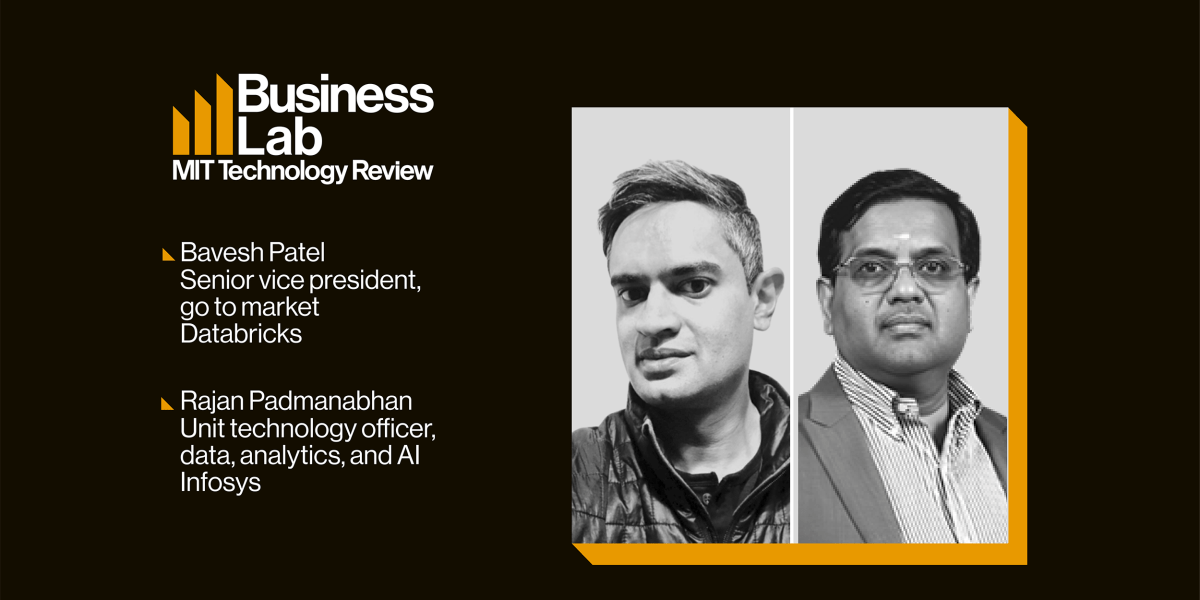

Rebuilding the data stack for AI

In partnership with Infosys Topaz

Artificial intelligence may be dominating boardroom agendas, but many enterprises are discovering that the biggest obstacle to meaningful adoption is the state of their data. While consumer-facing AI tools have dazzled users with speed and ease, enterprise leaders are discovering that deploying AI at scale requires something far less glamorous but far more consequential: data infrastructure that is unified, governed, and fit for purpose.

That gap between AI ambition and enterprise readiness is becoming one of the defining challenges of this next phase of digital transformation. As Bavesh Patel, senior vice president of Databricks, puts it, “the quality of that AI and how effective that AI is, is really dependent on information in your organization.” Yet in many companies, that information remains fragmented across legacy systems, siloed applications, and disconnected formats, making it nearly impossible for AI systems to generate trustworthy, context-rich outputs.

“Really, the big competitive differentiator for most organizations is their own data and then their third-party data that they can add to it,” says Patel. For enterprise AI to deliver value, data must be consolidated into open formats, governed with precision, and made accessible across functions. Without that foundation, businesses risk “terrible AI,” as Patel bluntly describes it.

That means moving beyond siloed SaaS platforms and disconnected dashboards toward a unified, open data architecture capable of combining structured and unstructured data, preserving real-time context, and enforcing rigorous access controls. When the groundwork is laid correctly, organizations can move toward measurable outcomes, unlocking efficiencies, automating complex workflows, and even launching entirely new lines of business.

That value focus is critical, says Rajan Padmanabhan, unit technology officer at Infosys, especially as enterprises seek precision in the outputs driving business decisions. Rather than treating AI initiatives as isolated innovation projects, leading companies are tying AI deployment directly to business metrics, using governance frameworks to determine what delivers results and what should be abandoned quickly. “We see this big opportunity just with AI literacy with business users, where they’re very eager to understand how they should be thinking about AI,” adds Patel. “What does AI mean when you peel the covers? What are the pieces and the building blocks that you need to put in place, both from a technology and a training and an enablement standpoint?”

The possibilities ahead are substantial. As AI agents evolve from copilots into autonomous operators capable of managing workflows and transactions, the organizations that win will be those that build the right foundation now. “What we are seeing as a new way of thinking is moving from a system of execution or a system of engagement to a system of action,” notes Padmanabhan. “That is the new way we see the road ahead.” The future of AI in the enterprise will be determined by whether businesses can turn fragmented information into a strategic asset capable of powering both smarter decisions and entirely new ways of operating.

This episode of Business Lab is produced in partnership with Infosys Topaz.

Full Transcript:

Megan Tatum: From MIT Technology Review, I’m Megan Tatum, and this is Business Lab, the show that helps business leaders make sense of new technologies coming out of the lab and into the marketplace. This episode is produced in partnership with Infosys Topaz.

Now, recent advancements in AI may have unlocked some compelling new industrial applications, but a reliance on inadequate data models means that many enterprises are hitting a brick wall. AI and agentic AI in particular place a whole new set of demands on data. The technology requires greater access, context, and guardrails to operate effectively. Existing data models often fall short. They’re too fragmented or siloed. Data itself often lacks quality. To bridge the gap, they require an AI-ready upgrade. Two words for you: data reconfigured.

My guests today are Bavesh Patel, senior vice president for Go-to-Market at Databricks, and Rajan Padmanabhan, unit technology officer for data analytics and AI at Infosys.

Welcome, Bavesh and Rajan. Rajan Padmanabhan: Thank you. Thanks for having us. Bavesh Patel: Thanks for having us. Megan: Fantastic. Thank you both so much for joining us today. Bavesh, if I could come to you first, when we talk about AI-ready data, what exactly do we mean? What new demands does AI place on data, and how does this impact the way it needs to be structured and used? Bavesh: Yeah. Great question. Appreciate you hosting us today. I think that obviously the whole world is enamored with AI because of all of the power that we can all see as users. AI is now democratized across hundreds of millions of users. And when we think about enterprises and businesses using AI, the quality of that AI and how effective that AI is really dependent on information in your organization, and that’s data. And what we found is that most enterprises, their data is kind of locked away in these different applications and different systems. And it’s very difficult to get a good view of, what is all my data? How trustworthy is it? How recent and fresh is it? And all of that is being injected into the AI. Unless you have a proper understanding of your data, the ability to ensure that it’s data that’s accurate and that can be used so that the AI can take advantage of it, you’re actually going to end up having terrible AI. We see a lot of customers spend time on cleansing their data, organizing their data, making sure it’s access controlled correctly, and that tends to be the fuel of good AI. Megan: Yeah. It’s such a foundational thing, isn’t it? But it can be missed, I think, quite easily. Rajan, what difference can having AI-ready data really make for enterprises as they unlock that full potential of AI and its applications? Rajan: First and foremost, thanks for having us. It’s a pleasure. I think in continuation of what Bavesh talked about, see, data and AI is pretty synonymous. 
And similarly, the consumer AI and enterprise AI and enterprise agentic AI are different because first and foremost, the business needs to have the context. That context from your enterprise information, which is not only structured, both structured and unstructured and user-generated contents and all forms of data is going to be very, very critical to really get the context right, and really get any model that you pick. That’s where the platforms like Databricks really help with the plethora of models or whether you want to build your own models or whether you want to ground the model based on your data. That is going to be very, very critical. That is where getting the data for AI is going to be very, very critical.

The third critical part, and this actually will be one of the roadblocks for adoption of AI. That’s why if you see the AI adoption on the consumer side is skyrocketing, but on the enterprise side, the enterprises are struggling is primarily around the precision of their output, because you are taking a business decisions where you are taking a buy decision, you are taking a sell decision, or you are trying to recommend something, recommend the content. It could be 20 different use cases. For that, the precision is going to be very critical. We are seeing our customers, the successful customers, definitely for the precision to be more than 92% is not aspiration, that is a must-have. If you have that, definitely being that AI data is going to be the entrepreneur right now for that. Megan: And I suppose if we’ve outlined there how critical this is, where should enterprises start then, professional perhaps, the level, what are the foundations when it comes to building an AI-ready data model?

Bavesh: Yeah. And I think Rajan hit the nail on the head. I mean, enterprises are grappling with a different set of problems than consumer AI. The first thing is that you’ve got to get a handle on your data. As I mentioned, a lot of the data is locked in. Ensuring that you have ability to put your data in a place where you can understand the holistic view of as much of your data as possible. That kind of starts with putting your data in open formats. A lot of the valuable data today in an organization is locked away in some proprietary SaaS app or some system, and all the datasets aren’t connected together to form that context. The first step is to really do an analysis of what is your data estate? What are the critical pieces of data that need to be put into a place where you can start to understand them and how they’re connected to one another? Thinking about how do you set up your data catalog, thinking about how do the relationships between the data assets work, putting data governance around it, that seems to be the first step. And if you think about how ChatGPT was built, it took all the data on the internet and then aggregated it, synthesized it, and then built these transformer models, while enterprises, they don’t really have a handle of all their data within the organization. That’s the first foundation that you really want to think about. The second thing is that you don’t want to just go ad hoc, go and do random AI projects. You really need to be thinking about business value. A lot of our customers are looking at AI much more strategically in that they want to be able to get projects on the board with wins and then generate business value. Building an AI value roadmap, which is connected to how well your data is organized, those two things seem to be foundational to how do you launch AI successfully in your organization. Megan: That value piece is so important, isn’t it? 
And as I understand it, Infosys and Databricks have worked closely together to guide organizations through this transformation. I wondered, can you share some examples of the impact you’ve seen enterprises you’ve worked with, Rajan, what difference has it made to the ways in which they can integrate more sophisticated AI and agentic AI applications? Rajan: Well, that’s a very, very good question. What both Databricks and Infosys has done is we have come up with, a kind of a framework first. First and foremost, it all needs to start with the value. One of the largest food products company where we collaborated together, what we have done is we have applied this framework. The framework consists of six different things. First and foremost, very critical is the value management, which Bavesh touched upon. We have worked together to come up with a 3M measurement framework, what we call adaptability, business value, and then responsible. You can’t just go and do a garage project. It has to be measurable. It should be responsible, follow all those things. That is going to be very critical. And we helped this client to prioritize, which will give them the most value for money, the investments that they are making. The second critical part here is it is not like most of the enterprises today are not everybody’s AI-born companies. Most of them were born during analog days; most of them were born in digital days. There are companies which are applying AI for modernization, because a lot of your historical information, which is actually helping you to build that long-term context. And that is where we have worked closely with some of the native tools of Databricks, like Lakebridge or the AI assistants that are there, and then create composable services on top of it to help the clients unlock the value bringing into Databricks. And then the second part where we help the client is exactly to the point, the readying of data. 
Now you brought in the data, now you have to bring both the structured, unstructured, analytical and all these aspects.

And that is where the third layer, we closely work with the Databricks, which is part of leveraging all the great capabilities within the Databricks, be it Unity Catalog, be it the open formats, or be it the gateways and other aspects. We were able to make the data available for this client. What has really helped our client, the third part, is Agent Bricks, which is one of the differentiators. It gives you the flavor for the enterprise. That is where we have closely worked, and we built some of our industry-specific agents, be it CPG, be it energy, be it FS. And for this client, what we have done is we have taken some of those CPG-specific use cases. Either it could be on the HR space or the procurement space or on the marketing space. And this has really helped our client be able to build a business capability surrounding this and unlock eight to nine use cases, we call them products, agentic AI products, which can really drive more value for them, solving the real business problems. And this kind of a comprehensive set of frameworks plus set of suites of services, plus our solution assets, Infosys solution assets, as well as unlocking the value from Databricks has really helped these clients. And we see similar patterns for a lot of these successful engagements where we were able to continuously drive the value by applying this framework actually. Megan: Right. Sounds like it made a real material difference. Rajan mentioned a few of the tools in Databricks catalog there, Bavesh. I know you’ve recently worked to launch an operational database for AI agents and apps. I wonder how does a platform like that help organizations in this journey? What makes it different from some of the other platforms out there right now? Bavesh: Databricks has come to market with a new offering called Lakebase, which is really an OLTP database where you can build your AI apps. And if you think about it, there’s really two main types of data in an enterprise. 
There’s all the historical data, which is all the things that have happened, and that’s really what your analytics is based on. You have an OLAP system where you have put all your historical data and Databricks has come to market with what we call the Lakehouse, which is essentially a data warehouse with all of your data that is not operational in nature. It’s historical data. And I think that Lakehouse concept is really pushing forward with AI because a lot of our customers have thousands of users within their business and they need to get data. And what they’ve done is they’ve actually gone down the BI route, which is really building a dashboard or a report.

Most organizations have had thousands of these dashboards and reports proliferate across the organization and then they need to be customized. It just takes a long time for users inside of the business to actually get access to the data. AI now is really making that a lot easier from just the analytics perspective where we can now democratize access to the data, which has really been the holy grail for most data teams. They really want to get out of the way and just give the right data to the right people inside of the business with the right access. With a product like Genie at Databricks, you can just use English language or whatever your language is to ask questions of the data. And it’ll give you back data that answers your questions in context. It’ll give you not just what ChatGPT will give you, which is information about a topic that’s on the internet, but it will actually tell you, “Well, why did my sales numbers not reflect what I expected in the month of April?” It’ll give you some root cause analysis based on your enterprise data. Genie is going to be one of these things that’s really important where it’s going to truly kind of democratize data inside of the business. That’s kind of this OLAP world, which is what the Lakehouse is. More recently, we’ve come to market with what we call the Lakebase, which is the OLTP world. What we’re finding is that agents are now being deployed in these organizations, and those agents need a place to keep all of their orchestration, all of the context of what’s happening in that particular workflow. On the one hand, you’ve got users just asking questions. On the other hand, the next chapter is going to be around automating an entire business process. If you’re taking a function like generating a campaign in marketing, right? There are a lot of tools you use and a lot of steps you use. An agent can come in and really automate a lot of that. 
But on the back end of that agent, you’re going to need to stand up a real-time database to keep track of all the things that the agent is doing. That’s what Databricks has brought to market, which is this OLTP Lakebase solution. The innovation that we have brought to market is that it’s a modern kind of Postgres database where we have separated the compute and storage, very much like what we did with the data Lakehouse with the data warehouse. But on the Lakebase, the data is on one copy inside of your cloud storage, and then the compute is separated and it’s serverless. You can do things like branching and you can start up the OLTP database really quickly. What we found is that agents are actually starting these Lakebases because they can very quickly go start one up, keep it running, put it down when it needs to, make a copy of it. Agents are doing this, then they need the velocity, they need a cost-effective solution. And the beauty of all this is when you take the OLTP, which is all around the Lakebase and the real time, and you take the OLAP, you now have one system for all your data. You don’t have to copy the data around, you don’t have to manage all the permissions, you can set the context against it. We see these AI apps being really the future of how businesses run, where they’re going to take away all of the bottlenecks that humans are having to do repetitive work and automate these using LLMs and all these new technologies. We want to be the default for powering all that because we believe that our Lakebase technology is going to be faster, cheaper, and more secure for an AI database. Megan: Sounds like a real game changer. And we’ve touched on this a couple of times already, I mean, this idea of value. We know that gauging the commercial value of investments into AI is really high on the priorities right now for senior leaders. How important is this value measure piece when it comes to creating AI-ready data systems, Rajan? 
How can organizations ensure they’re monitoring what is delivering and what isn’t? Rajan: This is of paramount importance and most of the successful AI implementations or agentic AI implementations really required this value measurement. I’ll just extend the client example that I talked about, the large food products company, the global products company, to explain this question. I just want to create a metaphor. When the initial digital world came, we have a lot of these analytics primarily around defining those performance management KPIs, fact-based decisioning and other things were evolving over a period of time. Typically, a lot of these metrics are going to be very critical for them to measure how a function, how a business is doing. On a similar line for the value measurement, if I take the same example of the client, what is very critical for an organization is actually to map your outcome that you are expecting. In this case, how do I optimize my spend on direct and indirect purchases? So by applying AI, I would like to identify the areas where I can optimize the spend. That means one of the critical measures that you have is, what is your indirect expense classification and what spends you have been classified and how much you are able to reduce by bringing in this. Establishing these measures and the metrics is going to be very, very critical. And once you establish these base metrics and the measurement, and the beauty of it is some of these metrics, to just extend what Bavesh was talking about, the capabilities that Databricks gives you, like metrics view, features, tools, and other things would actually help you to translate those AI telemetries, business telemetries that is coming from your applications into measurable metrics in terms of an outcome, which you can actually measure using the Genie room for value management measurement. 
Then what happens is two things that you can take, the use case, the products that as I said for this client, the products that we build either on the procurement side or on the marketing research side, if you find there is a value either because of VAC, they identify that they’re able to optimize or it is able to reachability, what is the reach, you can either accelerate that use case and further fine tune that product to expand it. Or there are, if you find it is not really driving the value or I’m not able to see the value that it is going to deliver, you can very well do a fast failure method rather than trying to make it work, you can understand and then you can take a call to pivot it to something else different. There are three aspects here. What we see from our experience, not only with this client across some of our other clients from industrial manufacturing or FS or in the energy, is by setting up this metrics-driven valuation method upfront and then leveraging the capabilities to establish, transform these telemetries, signals into a measurement, what we call an AI compass room so that you really measure the business stakeholders, whether it is coming from a marketing office or whether it is coming from supply chain office or whether it is coming from a CFO office where they can say, “Hey, this is what it is intended to do, this is what the current measurement, and this is where it’s failing that can help them to pivot.” And this will actually drive and democratize AI, all the agent decay across the enterprise, and that really drives the value. This is going to be one of the critical part that enterprise needs to do it. And that is where the six part framework that I talked about, applying that framework like value office, applying the ready for AI, applying the transformation fabric. Then the third part is the governance, which is going to be the entrepreneur of this. 
Then running your operations, not based on SLA, based on the experience level agreements and business metrics for you to continually measure, bringing all these six layers is going to be very critical. That’s when we see the organizations are very successful, and some of our proven examples exactly do the same that this is going to be very critical for organizations from a measurement standpoint. Megan: Lots of tangible ways there that you can actually gauge value here. And you touched on governance and the impact of AI on governance is another huge talking point among senior leaders and interactions with data are a core part of that. To what extent is having the right governance and security protocols an integral part of having AI-ready data? To Bavesh, what scenarios do these systems need to handle? What does that mean for data models? Bavesh: This is becoming kind of the prerequisite to deploying a successful AI project. I think MIT produced a report that said 95% of these new AI projects fail to actually generate business value. A big reason for that is you can go and prototype and stand up and vibe code a pilot, but when you’re actually moving a workload into production, you realize that governance becomes so critical. So what do we really mean by governance? I think the first thing is getting your data in order, like I said, in open formats. Most companies realize now that the way they engage with their customers, the way they develop a drug, the way they approve a person for a credit limit increase, all of that enterprise information is actually their competitive advantage. Because you can go and use a frontier model like ChatGPT or Claude that everybody has access to. Really the big competitive differentiator for most organizations is their own data and then their third-party data that they can add to it. Getting your data into an open format so you can understand your data and understanding your data is where governance comes in. 
Because when you think about governance, you really want to be able to find the data. If I’m an end user or if I’m building an AI product, I want to know what data’s available to me. Can I trust the data? How fresh is the data? Is it coming from my analytics world or do I need a real-time system like a OLTP system? I need to find the data. I also need to make sure that access is controlled in a way that doesn’t cause any huge headaches from my organization. This becomes critical. If I have a whole bunch of PDFs that have purchase orders in them, who actually has access to all that data? In a clinical trial, for example, in healthcare, you really want to ensure that people across trials don’t have visibility to patient data. Maybe the model that was used to build that was running across trial. Who has access to all the data? Who has access to only parts of the data? You really have to think about this. We also look at semantics of the data. Rajan brought this up right at the beginning of this, which is what is the context? How do we think about the metrics and all the things that the business users know in their head? We need to start codifying that somewhere. We have a product at Databricks called Unity Catalog where you can do the discovery, the access and the business semantics. You also want to share the data. And in the world of agents, what we see is something called agent sprawl. In a very short order, just like how SaaS applications became very prevalent within any organization where they really solved a business problem. You go to a line of business and you say, “I need to be able to do credit underwriting” or “I am doing a prior authorization use case or pick thousands of use cases.” There’s a SaaS app for that. Much like that, there’s going to be this world in which agents are going to come into play, and most organizations are going to have lots of agents running all the time, but the reality of it is that how did that agent perform? 
What was the feedback loop from the user? What was the cost of running that workload and is it going up dramatically? And if you don’t have a way to monitor, to understand, and trace all the questions and answers and responses at scale, you’re going to find yourself in a big pickle. This actually could hurt your organization because users will be very confused about what to do. When you look at governance, most organizations are recognizing that they have to start to understand what is it that they have put in place from a systems, from a process, from a tooling standpoint, focus on one use case, build out the governance for that, but build it in a way that’s going to allow you to become repeatable. AI is not going to be about one use case or two use cases. It’s whoever builds the flywheel of building many use cases in a safe, secure way, in a cost-effective way that’s driving a business outcome. If you don’t apply governance, it’s going to be very hard. At Databricks, we made a big bet on governance four or five years ago. This is one of the main reasons our company’s growing right now because we can ensure that there’s quality data that’s going into all of your AI. You can use things like Genie and you can use things like Agent Bricks and you can build apps using Lakebase. None of that really works without governance. It’s really what we call the brain inside of Databricks. Most of our customers spend a lot of time inside of Unity Catalog. And the great news is that AI is helping governance get set up much more quickly. We have a customer that three years ago, they were trying to get all of the data assets across all their domains from the customer, from the loyalty app, from the e-commerce engine. They had to go and map out all this data assets. AI is now doing a lot of their work for them. The human in the loop is just checking things. We’ve made this much easier with AI. 
We always think about AI as a business use case and an outcome, which I think is going to be where the biggest value is. But at Databricks, we’re using AI inside of our platform to make it much easier to operate and to make it much easier to provide all the right things for your business. This is a super critical part of how we plan to innovate as AI takes fruition in the market. Megan: And Rajan, Bavesh touched on this a little bit there, but does the integration of Agentic AI add another layer of complexity here too? What new consideration around governance does that raise? Rajan: That’s a very, very valid question. I would like to take a metaphor to really explain. We are getting into the world of self-driving cars, robotaxis, and other things. While that takes us to the autonomous world, but still there are rules that you need to adhere to when you are driving on a road. The reason I’m bringing this metaphor is because what is actually required is actually adhering to the rules and different topographies, different things, depends upon where you are driving is going to be very, very critical. The complexity that agents are going to add is basically how you operate with those constraints. For example, as a UTO, I can do 10 things, but say if I cannot approve a discount for more than 70% or I cannot give something as a bonus for someone because that is a part of the CFO, which an agent should be aware of. That is one aspect, applying the constraints around it and making sure that the agents are adhering to the constraints. The second set of complexity that it builds is the tools to access. As a business, in today’s world, when you define a process, certain processes need a certain set of tools to really actionize it. There are certain entitlements, only people entitled to do certain things based on their identity, based on the need or the situation need, you need to govern. The third is information sharing. 
While MCP and other aspects are great, UCP and other aspects are great, but one critical thing is what you need to share, what you don’t need to share. And those are the critical considerations. The last part is learning and relearning. Sometimes when you learn good things, you should keep something. Sometimes it is better for you to completely remove it and reevaluate in a newer way, relearn it in a newer way. These are all the critical things that are required. On the similar line for agents, it is going to be paramount, because when you are operating agents for an enterprise, you need to know, learn, and adhere to certain compliance related rules, business related constraints, and then the entitlement identity, and then sharing whatever that apply to a physical human will also start applying to an agent. That is where this is going to be very critical. This requires a new set of operating systems. That doesn’t really mean now get out of a new thing. That is where I’m just interpreting how Bavesh touched upon the Unity Catalog. The best part that which we see and some of our clients that which are implementing is extending the Unity Catalog and the capabilities like now you can catalog the tools, catalog the MCP as well as catalog these agents, and then govern those agents based on the constraints, ground them based on the constraints. It’s going to be very, very critical. Doing it not later, but starting that as part of your strategy and enforcing this as one of the critical dimensions of when you measure the value is also going to be very critical for an organization. It is like making sure that not only building the autonomous car, but as well as making sure that the car drives as per the rules of the road, not going rogue. Megan: Lots to think about there. Fascinating stuff. Thank you. Just to close, with a quick look ahead, we all know the pace of development in AI and Agentic AI is so rapid. 
For those organizations that can prioritize AI-ready data now, what are the most compelling use cases for the technology that you can see coming to the fore in the next few years, Bavesh?

Bavesh: I think the excitement level is at its peak. We’ve seen so much investment in AI. I think the reason why there’s a lot of excitement is because you can look at the early adopters and you can see massive amounts of gains that these organizations are seeing. The one thing I will tell you is that the companies that there’s really three categories and the companies that I think are doing well, a lot of them started out with just copilots and things that are just giving people quick answers. Think about it as making an individual productive. That is the first phase. And the ROI on that has been somewhat questionable. With something like Genie, it makes it a lot more effective because it’s actually on your data and your data is contextualized in your organization. I think that’s one level of area that we’re going to see a lot of innovation. We’ll see most organizations just start to get the right information to the right person at the right time. And that has been a dream for a lot of organizations. The second one is around automating entire business processes. We see functions within marketing, like I described earlier, or whether you’re going through a process of rebates for a company. There’s a whole bunch of steps involved where you have to go into three different apps and export data from Excel and put it over here. There’s thousands of people doing very laborious, monotonous, repeatable work. These agents are really going to help get an immense amount of not only productivity for the business process, but it’s just going to make things faster. Processes that took weeks are now going to take days. Processes that took days are going to take hours and minutes now. One trend we’ve seen is that the AI world is so dynamic.
In a world where you got lots of different players, you want to think about first principles, what are the foundations? You want to think about owning your data, making sure you have a handle on your structured and unstructured data. You want to put governance on that. But the other thing that you want to make sure that you don’t do is lock yourself in. Today, if you think about it, Gemini is really good with multimodal. Anytime you have pictures or videos or things like that, Gemini just is super good. Whereas if you’re writing code, Claude is really good. If you’re just doing certain types of questions around introspection, ChatGPT is really good. What you really want is an open data platform where you can build your open AI on multiple clouds, which is what we built at Databricks. I think that’ll help with the second piece, which is you can pick and choose because when you build these agents, you don’t have to be locked into just one. You should be picking the best quality and the best security and the best ROI and cost for a particular workload. One workload may use multiple of these models, and they might be even specific industry models. You need a system and a platform that can really handle this complexity. I think the third category is business reimagination. A lot of people talk about this where, yes, you’re going to go and take the data and make it available and give everybody access to the data. You’re going to make existing processes much more efficient. But the third thing is there’s going to be brand new things that come out of it. We have a very large customer who’s a bank and they have built a product that they didn’t have a year ago. Essentially, it’s machine learning and LLMs helping treasury departments forecast what their balances are going to be because they have more data at their fingertips. Historically, it took a long time for the data to get to the bankers. 
They were not able to really predict what a balance would be for a treasury department. Think about this for a big enterprise company, they have now built a brand new data AI solution that they’re monetizing and it’s generated hundreds of millions of dollars in the first six months. We’re seeing brand new lines of business open up and that is going to be really exciting because that’s where a lot of the transformation is going to happen. There’s going to be productivity. There’s going to be kind of automation at the business process level. Then there’s going to be these big new things that we didn’t even imagine that people are going to come up with. We are actually seeing the early signals of this in every industry. We see retailers getting data at the hourly and the minute level so that they can integrate much more closely with their supply chains. We’re seeing much more targeted customer 360-degree use cases where as retailers or as consumers, we get annoyed by ads, but now it’s so contextualized and you have so much information about what really matters to your target customer, you’re giving them value added kind of information and that’s engaging them more. There’s a whole bunch of innovation happening with agentic commerce and things like concierge and virtualized shopping. You look at any industry, there’s definitely new ways of doing things. This is what’s really exciting about AI, but you really have to not get too far ahead without thinking about what are the foundational things. You mentioned this earlier, which is open data platform, making sure you have governance correctly, making sure you think about your historical analytical data and your application data that’s going to be real time, having a good foundation to build on, that’s going to allow you to scale and move more quickly and compete in this new world. We’re very excited about what we’re seeing with our customers and what they’re building. 
And honestly, that’s the best part about being in my role at Databricks, which is our teams really go to customers and say, “What are the outcomes you’re driving?” The early signals have been super positive. We’re seeing companies that get serious about all the foundational elements and really are methodical about building really outcome-based AI solutions, that 5% of projects that are being successful, those are wildly successful. That’s why we’re growing as a company because once you get a good project under your belt, that gets visibility within executives. The last thing is that historically, a lot of tech has been in the IT department. You get the business designing how they want to go to market and how they’re going to compete and what products and services they want to offer. IT was the enabler and in many cases became the cost center and was relegated to rationalizing the portfolio of spend and tools. But now we’re seeing the business kind of take the lead with AI where they want to understand, they want to know, “Hey, what can I be doing now that was not possible before?” We see this big opportunity just with AI literacy with business users where they’re very eager to understand how they should be thinking about AI. What does AI mean when you peel the covers? What are the pieces and the building blocks that you need to put in place, both from a technology and a training and an enablement standpoint? We’re spending a lot of time with executives helping them along this journey. We definitely see a lot of amazing opportunities ahead.

Megan: Yeah. So much innovation going on. And finally, how about yourself, Rajan? What on the horizon is exciting you the most?

Rajan: I think Bavesh covered quite a bit, but I think the way I’m seeing is today predominantly we are talking about labor shift. That means unlocking the potential of human or shifting the current way of working to the new way of working with the more efficiency game.
It’s predominantly more of an efficiency game. I think that is what we are seeing now and the majority of the successful use cases around the labor shift. But what is pretty promising is the two kinds of shift, the business shifts. What we are seeing as a new way of thinking or the new thing that is coming up is moving from system of execution or a system of engagement to system of action. That is the new way we see the road ahead. That is where some of the points that I touched upon. The business wants to have access to it, but how does it really make the real difference for it? One classical example that I could clearly see which we have implemented for one of our customers primarily in the manufacturing space, is around the lifecycle of creation of a product and then publishing the content around the product in line with their different B2B marketplaces. Some of those, you are not just talking about recommending, creating, but actually you are able to reimagine this process, which used to involve five different departments, now can be done much faster, but at the same time gives you that veracity in terms of the decisioning that you are able to do and as far as how you’re able to actionize. That is the second thing which we are seeing. The third part I think is also going to be is the way how the commerce has evolved. There is also not beyond that agentic commerce, but I think what we are seeing is that agent to agent commerce, agent to human commerce and agent to agent payments, agent to human payments, and then the content monetization. These are the new set of business opportunities like building new business agentic products. It could be for family techs, it could be for on the consumer side, or it could be on the industrial technology side. 
These are going to be what I’m calling the economy shift, labor shift, business shift, because that is going to bring a new set of system of actions, moving them from the system of executions or the typical SaaS application with the bolt-on agentic, the so called agentic application. That is going to be a major transformation, and we are underway. But on the technology side, what is very critical for entrepreneuring is in today’s world you have data, analytical data, operational data, and then there is intelligence, there are different facets of it. I think both this analytical core and operational core is going to really come into one. That’s why we are so gung-ho about the releases of Lakebase and other things because that is the way the future is going to drive. When they are really thinking about being ready for AI technology use cases, they should really think, how do you really create this unified core for the newer world? The second part is people have to reimagine today, if I take SAP as an example, you do hundreds of edge applications, business applications needed to integrate another thing. Typically, we create sprawl of these integrations. One technology use case, people can say, “Hey, how do I really create a domain-based service mesh on top of this unified core and how do I make it more agentic integration ready?” That is one of the technology use cases that we are advising to the client. I think now with a lot of the new areas that are coming around SAP, BDC with the Databricks, and this zero-based integration, that makes them rethink the way they need to integrate, the way they need to do things. The third part, I think from a technology investment and technology, the use cases that most come for the technology that I would talk about is don’t just talk about now. This is the time that you have to, the way you own the people, the FTEs for your organizations. Agents are going to be your new FTEs. 
That means that some of the new technology paradigm is going to be you will end up creating these co-intellects within your organization. That means you need to invest on what we call this agentic grid, where it becomes like a unified agentic fabric where every other agents can really collaborate and integrate and building on top of the same, the unified operational analytical core, the unified agentic integration on top of it, which is going to create a new set of experiences, agentic experiences rather than the traditional experiences or conversational experiences. Then the new collaboration methods are going to be some of the critical aspects from a technology side that people have to really think from a technology standpoint. To start with, I would say you start looking at it from a data standpoint, building that unified core, building that unified integration and building that collaboration layer for both sharing and collaborating with intelligence as well as the agentic collaboration all governed under single umbrella. That is going to be the one critical use case which no one will feel bad about, and they are going to get really a 100X of their investments out of it.

Megan: Certainly no shortage of exciting developments on the horizon. Thank you both so much for that conversation. That was Bavesh Patel, senior vice president for Go-to-Market at Databricks and Rajan Padmanabhan, unit technology officer for data analytics and AI at Infosys, whom I spoke with from Brighton, England.

That’s it for this episode of Business Lab. I’m your host, Megan Tatum. I’m a contributing editor and host for Insights, the custom publishing division of MIT Technology Review. We were founded in 1899 at the Massachusetts Institute of Technology, and you can find us in print, on the web and at events each year around the world. For more information about us and the show, please check out our website at technologyreview.com.

This show is available wherever you get your podcasts, and if you enjoyed this episode, we hope you’ll take a moment to rate and review us. Business Lab is a production of MIT Technology Review, and this episode was produced by Giro Studios. Thanks for listening.

This content was produced by Insights, the custom content arm of MIT Technology Review. It was not written by MIT Technology Review’s editorial staff. It was researched, designed, and written by human writers, editors, analysts, and illustrators. This includes the writing of surveys and collection of data for surveys. AI tools that may have been used were limited to secondary production processes that passed thorough human review.

The Download: DeepSeek’s latest AI breakthrough, and the race to build world models

This is today’s edition of The Download, our weekday newsletter that provides a daily dose of what’s going on in the world of technology.

Three reasons why DeepSeek’s new model matters

On Friday, Chinese AI firm DeepSeek released a preview of V4, its long-awaited new flagship model. Notably, the model can process much longer prompts than its last generation, thanks to a new design that handles large amounts of text more efficiently. While the model remains open source, its performance matches leading closed-source rivals from Anthropic, OpenAI, and Google. It is also DeepSeek’s first release optimized for Huawei’s Ascend chips, a key test of China’s dependence on Nvidia. Here are three ways V4 could shake up AI.

By Caiwei Chen

The rise of world models

AI systems have already gained impressive mastery over the digital world, but the physical world remains humanity’s domain. As it turns out, building an AI that composes novels or codes apps is far easier than developing one to fold laundry or navigate city streets. To bridge this gap, many researchers believe you need something called a world model.

Proponents like Stanford professor Fei-Fei Li and AMI Labs founder Yann LeCun argue these models can overcome the well-known limitations of LLMs and realize AI’s promise for robotics. Find out why they’ve brought world models to the forefront of the field.

By Grace Huckins

World models are on our list of the 10 Things That Matter in AI Right Now, our essential guide to what’s really worth your attention in the field. Subscribers can watch an exclusive roundtable unveiling the technologies and trends on the list, with analysis from MIT Technology Review’s AI reporter Grace Huckins and executive editors Amy Nordrum and Niall Firth.

The must-reads

I’ve combed the internet to find you today’s most fun/important/scary/fascinating stories about technology.

1 China has blocked Meta’s $2 billion acquisition of AI startup Manus
Regulators cited national security grounds. (WSJ $)
+ Beijing called the deal a “conspiratorial” attempt to hollow out its tech base. (FT $)
+ The country is tightening its grip on AI firms that try to leave. (TechCrunch)
+ The decision escalates China’s AI rivalry with the US. (Bloomberg $)
+ But there will be no winners in their competition. (MIT Technology Review)

2 Google is investing up to $40 billion in Anthropic
In a deal valuing the AI firm at $350 billion. (CNBC)
+ The funding will support the firm’s growing computing needs. (TechCrunch)
+ Anthropic and OpenAI are fighting for compute capacity. (Axios)

3 President Trump just fired the entire National Science Board
The NSF has played a crucial role in developing technology. (The Verge)
+ The move heightens fears over political interference in US science. (Nature)

4 Conspiracy theories about the Washington shooting are proliferating online
Over 300,000 posts appeared on X using the keyword “staged.” (NYT $)
+ The theories are also swirling on Bluesky and Instagram. (Wired)

5 The AI compute crunch is starting to hit the broader economy
It’s affecting jobs, gadgets, and electricity prices. (404 Media)
+ The AI compute explosion is the tech story of our time. (MIT Technology Review)

6 Elon Musk says a new banking tool brings X close to a “super app”
He’s pledged to launch the tool this month. (Bloomberg)

7 AI optimism is surging across Asia while US sentiment cools
The divide could shape where adoption happens fastest. (Rest of World)

8 Apple is tying its new CEO’s ascent to its first foldable iPhone
It wants to build the buzz around John Ternus. (Gizmodo)

9 Twelve firms are developing the Golden Dome’s space-based interceptors
They’ve won contracts worth up to $3.2 billion. (Ars Technica)

10 NASA has shared promising results from Artemis II
The spacecraft and rocket fared well. (Engadget)

Quote of the day

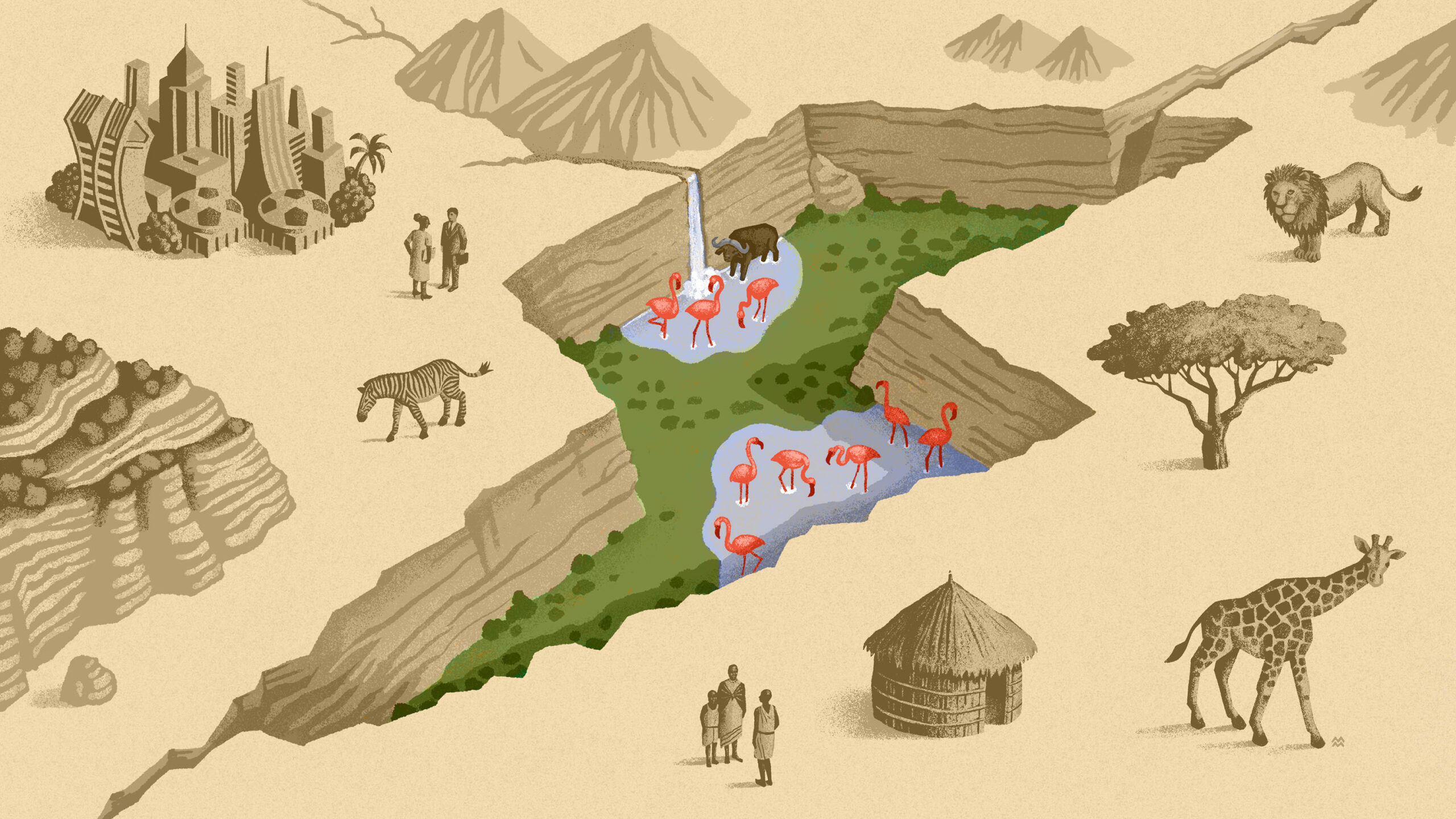

“Getting out the truth and establishing facts and reliable information takes time. But our audiences really don’t have that kind of patience.”

Amanda Crawford, associate professor at the University of Connecticut, tells the NYT why conspiracy theories are gaining traction online.

One More Thing

MIRIAM MARTINCIC

Welcome to Kenya’s Great Carbon Valley: a bold new gamble to fight climate change

Kenya’s Great Rift Valley is home to five geothermal power stations, which harness clouds of steam to generate about a quarter of the country’s electricity. But some of the energy escapes into the atmosphere, while even more remains underground for lack of demand. That’s what brought Octavia Carbon here. Last year, the startup began harnessing some of that excess energy to remove CO2 from the air. The company says the method is efficient, affordable, and, crucially, scalable. But the project also faces fierce opposition.

Announcing our partnership with the Republic of Korea

Bringing frontier AI models to Korea’s scientific community

Korea’s Ministry of Science and ICT (MSIT) has recently launched the K-Moonshot Missions, an initiative aimed at unlocking step-change improvements in research productivity and addressing national grand challenges.

Helping make this vision a reality, Google will establish an AI Campus in the Republic of Korea, an AI-focused facility within its Seoul offices.

The AI Campus will be a hub for Korean academia and research institutions to collaborate with Google’s world-leading AI experts to accelerate scientific breakthroughs through research and access to our most advanced AI for Science models, programs and events. We will begin by exploring collaborations with research-oriented institutions including Seoul National University (SNU), Korea Advanced Institute of Science and Technology (KAIST) and the Ministry’s three AI Bio Innovation Hubs, leveraging our models in fields such as life sciences, energy, weather and climate, for example:

AlphaEvolve – a Gemini-powered coding agent for designing and optimising advanced algorithms. This has shown beneficial impact across many areas in computing and math, and we are seeing similar examples emerge in drug discovery and energy.

AlphaGenome – an AI model to help scientists better understand how mutations in human DNA sequences impact a wide range of gene functions, speeding up research on genome biology and helping to improve disease understanding.

AlphaFold – already used by more than 85,000 researchers in Korea, we will explore accelerating AI-enabled predictions for proteins, DNA and RNA.

AI co-scientist – a multi-agent AI system that acts as a virtual scientific collaborator to help researchers brainstorm and verify hypotheses. This is showing promising benefits in a range of biomedical applications and we look forward to collaborating through joint research exploration and technical advisory to support the Ministry’s AI Scientist Project on ways to best integrate the system.

WeatherNext – we will explore collaborations to support Korea’s energy and sustainability goals in predicting and analyzing the impacts of extreme weather events and optimizing renewable energy on grids.

Cultivating AI talent and partnering on safety

Realizing the full potential of AI requires investing in people and building responsibly. To support the next generation of Korean AI talent, we are opening doors to forge connections with Google DeepMind, including exploring internship opportunities for Korean students. This builds on Google’s broader commitment to the region, including the recent milestone of providing 50,000 AI Essentials scholarships to help job seekers gain foundational skills.

Finally, following our Frontier AI Safety Commitments made at the AI Seoul Summit, we will collaborate with the Korean AI Safety Institute (AISI) on research and best practices.

Building on the AlphaGo legacy

As we look back on the legacy of AlphaGo, we are incredibly excited for what lies ahead. We look forward to collaborating with the government as they invest in important local AI infrastructure, such as a new National AI for Science Center (NAIS), due to open in May.

By combining Google DeepMind’s frontier AI models with the brilliant scientific minds in Korea, we believe we can unlock scientific discoveries that will benefit society for generations to come.

Data Center World 2026: Innovation Spotlight

Belden + OptiCool: Modular Cooling for the AI Middle Market

At Data Center World 2026, company representatives from Belden and OptiCool described a joint push into integrated rack-level infrastructure, pairing connectivity, power, and modular cooling into a single deployable system aimed squarely at enterprise and mid-market colocation providers. The partnership reflects a shift already underway inside Belden itself. Long known as a manufacturer of wire, cable, and connectivity products, the company said it has spent the last several years evolving into a solutions provider, leveraging a broader portfolio that spans industrial networking, automation, and control systems. That repositioning is now extending into AI infrastructure.

From Components to Fully Integrated Systems

Rather than selling discrete products into bid cycles, Belden is now packaging racks, PDUs, cable management, and cooling into a unified offering, delivered as a manufacturer-backed system rather than a third-party integration. “We can bring a full solution to the table now,” a company representative said, emphasizing that the company is “standing behind the solution as a manufacturer, not as a system integrator.” The cooling layer comes via OptiCool, whose rear-door heat exchanger (RDHx) technology is designed to scale alongside uncertain AI workloads.

Two-Phase Rear Door Cooling at Rack Scale

OptiCool’s approach centers on two-phase cooling applied at the rear door, combining the non-invasive characteristics of RDHx with the efficiency gains typically associated with direct-to-chip liquid cooling. According to company representatives, the system:

Supports up to 120 kW per rack (with 60 kW demonstrated on the show floor)
Delivers up to 10x cooling capacity compared to traditional approaches
Operates at roughly one-third the energy consumption of comparable single-phase systems

Instead of injecting cold air, the system extracts heat using refrigerant as the heat sink, reducing demand on CRAC units and broader facility cooling infrastructure.

Designing for Uncertainty: Modular, Swappable Capacity

The defining feature, and

The Trillion-Dollar AIDC Boom Gets Real: Omdia Maps the Path From Megaclusters to Microgrids

The AI data center buildout is getting bigger, denser, and more electrically complex than even many bullish observers expected. That was the core message from Omdia’s Data Center World analyst summit, where Senior Director Vlad Galabov and Practice Lead Shen Wang laid out a view of the market that has grown more expansive in just the past year. What had been a large-scale infrastructure story is now, in Omdia’s telling, something closer to a full-stack industrial transition: hyperscalers are still leading, but enterprises, second-tier cloud providers, and new AI use cases are beginning to add demand on top of demand.

Omdia’s updated forecast reflects that shift. Galabov said the firm has now raised its 2030 projection for data center investment beyond the $1.6 trillion figure it showed a year ago, arguing that surging AI usage, expanding buyer classes, and the emergence of new power infrastructure categories have all forced a rethink. “One of the reasons why we raised it is that people keep using more AI,” Galabov said. “And that just means more money, because we need to buy more GPUs to run the AI.”

That is the simple version. The more consequential one is that AI is no longer behaving like a contained technology cycle. It is spilling outward into adjacent infrastructure markets, including batteries, gas-fired onsite generation, and high-voltage DC power architectures that until recently sat well outside the mainstream data center conversation.

A Market Moving Faster Than the Forecasts

Galabov opened by revisiting the predictions Omdia made last year for 2030. On several fronts, he said, the market is already validating them faster than expected. AI applications are becoming commonplace. AI has become the dominant driver of data center investment. Self-generation is no longer a fringe strategy. Even some of the rack-scale architecture concepts that once looked

AI’s Execution Era: Aligned and Netrality on Power, Speed, and the New Data Center Reality

At Data Center World 2026, the industry didn’t need convincing that something fundamental has shifted. “This feels different,” said Bill Kleyman as he opened a keynote fireside with Phill Lawson-Shanks and Amber Caramella. “In the past 24 months, we’ve seen more evolution… than in the two decades before.” What followed was less a forecast than a field report from the front lines of the AI infrastructure buildout, where demand is immediate, power is decisive, and execution is everything.

A Different Kind of Growth Cycle

For Caramella, the shift starts with scale, and speed. “What feels fundamentally different is just the sheer pace and breadth of the demand combined with a real shift in architecture,” she said. Vacancy rates have collapsed even as capacity expands. AI workloads are not just additive; they are redefining absorption curves across the market. But the deeper change is behavioral. “Over 75% of people are using AI in their day-to-day business… and now the conversation is shifting to agentic AI,” Caramella noted. That shift, from tools to delegated workflows, points to a second wave of infrastructure demand that has not yet fully materialized.

Lawson-Shanks framed the transformation in more structural terms. The industry, he said, has always followed a predictable chain: workload → software → hardware → facility → location. That chain has broken. “We had a very predictable industry… prior to Covid. And Covid changed everything,” he said, describing how hyperscale demand compressed deployment cycles overnight. What followed was a surge that utilities, and supply chains, were not prepared to meet.

From Capacity to Constraint: Power Becomes Strategy

If AI has a gating factor, it is no longer compute. It is power. “Before it used to be an operational convenience,” Caramella said. “Now it’s a strategic advantage, or constraint if you don’t have it.” That shift is reshaping executive decision-making. Power is no

Rebuilding the data stack for AI

In partnership with Infosys Topaz

Artificial intelligence may be dominating boardroom agendas, but many enterprises are discovering that the biggest obstacle to meaningful adoption is the state of their data. While consumer-facing AI tools have dazzled users with speed and ease, enterprise leaders are discovering that deploying AI at scale requires something far less glamorous but far more consequential: data infrastructure that is unified, governed, and fit for purpose. That gap between AI ambition and enterprise readiness is becoming one of the defining challenges of this next phase of digital transformation. As Bavesh Patel, senior vice president of Databricks, puts it, “the quality of that AI and how effective that AI is, is really dependent on information in your organization.” Yet in many companies, that information remains fragmented across legacy systems, siloed applications, and disconnected formats, making it nearly impossible for AI systems to generate trustworthy, context-rich outputs. “Really, the big competitive differentiator for most organizations is their own data and then their third-party data that they can add to it,” says Patel. For enterprise AI to deliver value, data must be consolidated into open formats, governed with precision, and made accessible across functions. Without that foundation, businesses risk “terrible AI,” as Patel bluntly describes it. That means moving beyond siloed SaaS platforms and disconnected dashboards toward a unified, open data architecture capable of combining structured and unstructured data, preserving real-time context, and enforcing rigorous access controls. When the groundwork is laid correctly, organizations can move toward measurable outcomes, unlocking efficiencies, automating complex workflows, and even launching entirely new lines of business.

That value focus is critical, says Rajan Padmanabhan, unit technology officer at Infosys, especially as enterprises seek precision in the outputs driving business decisions. Rather than treating AI initiatives as isolated innovation projects, leading companies are tying AI deployment directly to business metrics, using governance frameworks to determine what delivers results and what should be abandoned quickly. “We see this big opportunity just with AI literacy with business users, where they’re very eager to understand how they should be thinking about AI,” adds Patel. “What does AI mean when you peel the covers? What are the pieces and the building blocks that you need to put in place, both from a technology and a training and an enablement standpoint?”

The possibilities ahead are substantial. As AI agents evolve from copilots into autonomous operators capable of managing workflows and transactions, the organizations that win will be those that build the right foundation now. “What we are seeing as a new way of thinking is moving from a system of execution or a system of engagement to a system of action,” notes Padmanabhan. “That is the new way we see the road ahead.” The future of AI in the enterprise will be determined by whether businesses can turn fragmented information into a strategic asset capable of powering both smarter decisions and entirely new ways of operating.

This episode of Business Lab is produced in partnership with Infosys Topaz.

Full Transcript:

Megan Tatum: From MIT Technology Review, I’m Megan Tatum, and this is Business Lab, the show that helps business leaders make sense of new technologies coming out of the lab and into the marketplace. This episode is produced in partnership with Infosys Topaz.

Now, recent advancements in AI may have unlocked some compelling new industrial applications, but a reliance on inadequate data models means that many enterprises are hitting a brick wall. AI and agentic AI in particular place a whole new set of demands on data. The technology requires greater access, context, and guardrails to operate effectively. Existing data models often fall short. They’re too fragmented or siloed. Data itself often lacks quality. To bridge the gap, they require an AI-ready upgrade. Two words for you: data reconfigured.

My guests today are Bavesh Patel, senior vice president for Go-to-Market at Databricks, and Rajan Padmanabhan, unit technology officer for data analytics and AI at Infosys.

Welcome, Bavesh and Rajan.

Rajan Padmanabhan: Thank you. Thanks for having us.

Bavesh Patel: Thanks for having us.

Megan: Fantastic. Thank you both so much for joining us today. Bavesh, if I could come to you first, when we talk about AI-ready data, what exactly do we mean? What new demands does AI place on data, and how does this impact the way it needs to be structured and used?

Bavesh: Yeah. Great question. Appreciate you hosting us today. I think that obviously the whole world is enamored with AI because of all of the power that we can all see as users. AI is now democratized across hundreds of millions of users. And when we think about enterprises and businesses using AI, the quality of that AI and how effective that AI is, is really dependent on information in your organization, and that’s data. And what we found is that most enterprises, their data is kind of locked away in these different applications and different systems. And it’s very difficult to get a good view of, what is all my data? How trustworthy is it? How recent and fresh is it? And all of that is being injected into the AI. Unless you have a proper understanding of your data, the ability to ensure that it’s data that’s accurate and that can be used so that the AI can take advantage of it, you’re actually going to end up having terrible AI. We see a lot of customers spend time on cleansing their data, organizing their data, making sure it’s access controlled correctly, and that tends to be the fuel of good AI.

Megan: Yeah. It’s such a foundational thing, isn’t it? But it can be missed, I think, quite easily. Rajan, what difference can having AI-ready data really make for enterprises as they unlock that full potential of AI and its applications?

Rajan: First and foremost, thanks for having us. It’s a pleasure. I think in continuation of what Bavesh talked about, see, data and AI are pretty much synonymous.
And similarly, consumer AI, enterprise AI, and enterprise agentic AI are different because, first and foremost, the business needs to have the context. That context from your enterprise information, which is not only structured but both structured and unstructured, user-generated content, and all forms of data, is going to be very, very critical to really get the context right, and really get any model that you pick. That’s where platforms like Databricks really help with the plethora of models, whether you want to build your own models or whether you want to ground the model based on your data. That is going to be very, very critical. That is where getting the data for AI is going to be very, very critical.

The third critical part, and this actually will be one of the roadblocks for adoption of AI. That’s why, if you see, AI adoption on the consumer side is skyrocketing, but on the enterprise side, enterprises are struggling, primarily around the precision of their output, because you are taking business decisions, where you are taking a buy decision, you are taking a sell decision, or you are trying to recommend something, recommend the content. It could be 20 different use cases. For that, the precision is going to be very critical. For our customers, the successful customers, precision of more than 92% is definitely not an aspiration; it is a must-have. If you have that, definitely being that AI data is going to be the entrepreneur right now for that.

Megan: And I suppose if we’ve outlined there how critical this is, where should enterprises start then, professional perhaps, the level, what are the foundations when it comes to building an AI-ready data model?

Bavesh: Yeah. And I think Rajan hit the nail on the head. I mean, enterprises are grappling with a different set of problems than consumer AI. The first thing is that you’ve got to get a handle on your data. As I mentioned, a lot of the data is locked in. Ensuring that you have the ability to put your data in a place where you can understand the holistic view of as much of your data as possible. That kind of starts with putting your data in open formats. A lot of the valuable data today in an organization is locked away in some proprietary SaaS app or some system, and all the datasets aren’t connected together to form that context. The first step is to really do an analysis of what is your data estate? What are the critical pieces of data that need to be put into a place where you can start to understand them and how they’re connected to one another? Thinking about how do you set up your data catalog, thinking about how do the relationships between the data assets work, putting data governance around it, that seems to be the first step. And if you think about how ChatGPT was built, it took all the data on the internet and then aggregated it, synthesized it, and then built these transformer models, while enterprises, they don’t really have a handle on all their data within the organization. That’s the first foundation that you really want to think about. The second thing is that you don’t want to just go ad hoc, go and do random AI projects. You really need to be thinking about business value. A lot of our customers are looking at AI much more strategically in that they want to be able to get projects on the board with wins and then generate business value. Building an AI value roadmap, which is connected to how well your data is organized, those two things seem to be foundational to how do you launch AI successfully in your organization.

Megan: That value piece is so important, isn’t it?
And as I understand it, Infosys and Databricks have worked closely together to guide organizations through this transformation. I wondered, can you share some examples of the impact you’ve seen in enterprises you’ve worked with? Rajan, what difference has it made to the ways in which they can integrate more sophisticated AI and agentic AI applications?

Rajan: Well, that’s a very, very good question. What both Databricks and Infosys have done is we have come up with a kind of framework first. First and foremost, it all needs to start with the value. With one of the largest food products companies, where we collaborated together, what we have done is we have applied this framework. The framework consists of six different things. First and foremost, very critical is the value management, which Bavesh touched upon. We have worked together to come up with a 3M measurement framework, what we call adaptability, business value, and then responsible. You can’t just go and do a garage project. It has to be measurable. It should be responsible, follow all those things. That is going to be very critical. And we helped this client to prioritize which use cases will give them the most value for money for the investments that they are making. The second critical part here is that most of the enterprises today are not AI-born companies. Most of them were born during analog days; most of them were born in digital days. There are companies which are applying AI for modernization, because a lot of your historical information is actually helping you to build that long-term context. And that is where we have worked closely with some of the native tools of Databricks, like Lakebridge or the AI assistants that are there, and then created composable services on top of them to help the clients unlock the value of bringing data into Databricks. And then the second part where we help the client is exactly to the point, the readying of data.
Now you brought in the data, now you have to bring both the structured, unstructured, analytical and all these aspects.

And that is where the third layer comes in. We work closely with Databricks, leveraging all the great capabilities within Databricks, be it Unity Catalog, be it the open formats, or be it the gateways and other aspects. We were able to make the data available for this client. What has really helped our client, the third part, is Agent Bricks, which is one of the differentiators. It gives you the flavor for the enterprise. That is where we have closely worked, and we built some of our industry-specific agents, be it CPG, be it energy, be it FS. And for this client, what we have done is we have taken some of those CPG-specific use cases. It could be in the HR space or the procurement space or the marketing space. And this has really helped our client build a business capability surrounding this and unlock eight to nine use cases, which we call products, agentic AI products, which can really drive more value for them, solving the real business problems. And this kind of comprehensive set of frameworks, plus a suite of services, plus our solution assets, Infosys solution assets, as well as unlocking the value from Databricks, has really helped these clients. And we see similar patterns for a lot of these successful engagements, where we were able to continuously drive the value by applying this framework.

Megan: Right. Sounds like it made a real material difference. Rajan mentioned a few of the tools in Databricks’ catalog there, Bavesh. I know you’ve recently worked to launch an operational database for AI agents and apps. I wonder, how does a platform like that help organizations in this journey? What makes it different from some of the other platforms out there right now?

Bavesh: Databricks has come to market with a new offering called Lakebase, which is really an OLTP database where you can build your AI apps. And if you think about it, there are really two main types of data in an enterprise.
There’s all the historical data, which is all the things that have happened, and that’s really what your analytics is based on. You have an OLAP system where you have put all your historical data, and Databricks has come to market with what we call the Lakehouse, which is essentially a data warehouse with all of your data that is not operational in nature. It’s historical data. And I think that Lakehouse concept is really pushing forward with AI because a lot of our customers have thousands of users within their business and they need to get data. And what they’ve done is they’ve actually gone down the BI route, which is really building a dashboard or a report.