Your Gateway to Power, Energy, Datacenters, Bitcoin and AI

Dive into the latest industry updates, our exclusive Paperboy Newsletter, and curated insights designed to keep you informed. Stay ahead with minimal time spent.

Discover What Matters Most to You

AI

Bitcoin

Datacenter

Energy

Featured Articles

Microsoft Builds for Two Worlds: Sovereign Cloud and AI Factories

So far in 2026, across the United States and overseas, Microsoft is building an infrastructure portfolio at full hyperscale. The strategy runs on two tracks. The first is familiar: sovereign cloud expansion involving new regions, local data residency, and compliance-driven enterprise infrastructure. The second is larger and more consequential: purpose-built AI factory campuses designed for dense GPU clusters, liquid cooling, private fiber, and power acquisition at a scale that extends far beyond traditional cloud infrastructure.

Despite reports last year that Microsoft was pulling back on data center development, the company is accelerating. It is not only advancing its own large-scale campuses, but also absorbing premium AI capacity originally aligned with OpenAI. In Texas and Norway, projects tied to OpenAI’s infrastructure plans have shifted back into Microsoft’s orbit. Even after contractual changes gave OpenAI greater flexibility to source compute elsewhere, Microsoft remains the market’s most reliable backstop buyer for top-tier AI infrastructure. It no longer needs to control every OpenAI build to maintain its position. In 2026, Microsoft is still the company best positioned to turn uncertain AI demand into deployed capacity: concrete, steel, power, and silicon at scale.

Building at Industrial Scale

The clearest indicator of Microsoft’s intent is its capital spending. In its January 2026 earnings cycle, Reuters reported that Microsoft’s quarterly capital expenditures reached a record $37.5 billion, up nearly 66% year over year. The company’s cloud backlog rose to $625 billion, with roughly 45% of remaining performance obligations tied to OpenAI. About two-thirds of that quarterly capex was directed toward compute chips. To be clear: this is no speculative buildout. Microsoft is deploying capital against a massive, committed demand pipeline, even as it maintains significant exposure to OpenAI-driven workloads. The company is solving two infrastructure problems at once: supporting broad Azure and Copilot growth, while ensuring

BYOP Moves to the Center of Data Center Strategy

Self-Sufficiency Becomes a Feature, Not a Risk

Consider Wyoming’s Project Jade, where county commissioners approved an AI campus tied to 2.7 GW of new natural gas-fired generation being developed by Tallgrass Energy. Reporting from POWER described the project as a “bring your own power” model designed for a high degree of self-sufficiency, with a mix of natural gas generation and Bloom fuel cells. The campus is expected to scale significantly over time. What stands out is not only the size, but the positioning. Self-sufficiency is becoming a selling point both for developers seeking to de-risk timelines, and for local stakeholders wary of overloading existing utility infrastructure.

Fuel Cells and Nuclear: The Middle Ground and the Long Game

Fuel cells occupy an important middle ground in this shift. Bloom Energy’s 2026 report positions fuel cells as a leading onsite option due to shorter lead times, modular deployment, and lower local emissions. Market activity suggests that interest is real. For developers, fuel cells can be easier to permit than large turbine installations and can be deployed incrementally. That makes them effective as bridge-to-grid solutions or as permanent components of hybrid architectures.

Advanced nuclear remains the most strategically significant, but least immediate, BYOP pathway. Companies including Switch and other data center operators have explored partnerships with Oklo around its Aurora small modular reactor design. Nuclear holds long-term appeal because it offers firm, low-carbon power at scale. But for current AI buildouts, it remains a future option rather than a near-term construction solution. The immediate reality is that gas and modular onsite systems are closing the time-to-power gap, while nuclear is being positioned as a longer-duration successor as licensing and deployment timelines evolve.

The model itself is also evolving. BYOP is beginning to blur the line between developer, energy provider, and compute customer.
Reuters

Meta’s compute grab continues with agreement to deploy tens of millions of AWS Graviton cores

“This is really about control of the AI system, not just scale,” said Kimball. As AI evolves toward persistent, agentic workloads, the role of the CPU becomes “quite meaningful”; it serves as the control plane, handling orchestration, managing memory, scheduling, and other intensive tasks across accelerators. “This is especially true in agentic environments, where the workloads will be less linear and more stateful,” he pointed out. So, ensuring a supply of these resources just makes sense.

The agreement builds on Meta’s long-standing partnership with AWS, but also reflects what the company calls its “diversified approach” to infrastructure. “No single chip architecture can efficiently serve every workload,” the company emphasized. Proving the point, Meta recently announced four new generations of its MTIA training and inference accelerator chip and signed a massive deal with AMD to tap into 6 GW worth of CPUs and AI accelerators. It also entered into a multi-year partnership with Nvidia to access millions of Blackwell and Rubin GPUs and to integrate Nvidia Spectrum-X Ethernet switches into its platform, and it was one of Arm’s first major CPU customers.

In the wake of all this, Nabeel Sherif, a principal advisory director at Info-Tech Research Group, posed the burning question: “What are they going to do with all this capacity?” Primarily it will support Meta’s internal experimentation and innovation, he said, but it also lays the groundwork and provides the capacity for Meta to offer its own agentic AI services to the market, for instance its Llama AI model as an API.

Three reasons why DeepSeek’s new model V4 matters

On Friday, Chinese AI firm DeepSeek released a preview of V4, its long-awaited new flagship model. Notably, the model can process much longer prompts than its last generation, thanks to a new design that helps it handle large amounts of text more efficiently. Like DeepSeek’s previous models, V4 is open source, meaning it is available for anyone to download, use, and modify.

V4 marks DeepSeek’s most significant release since R1, the reasoning model it launched in January 2025. R1, which was trained on limited computing resources, stunned the global AI industry with its strong performance and efficiency, turning DeepSeek from a little-known research team into China’s best-known AI company almost overnight. It also helped set off a wave of open-weight model releases from other Chinese AI firms.

DeepSeek has kept a relatively low profile since then, but earlier this month it effectively teased V4’s release when it added “expert” and “flash” modes to the online version of its model, prompting speculation that the updates were tied to a bigger upcoming release. While the company has become a powerful symbol of China’s AI ambitions, its big return to cutting-edge frontier models comes after months of pressure, including major personnel departures, delays to previous model launches, and growing scrutiny from both the US and Chinese governments.

So, will V4 shake the AI field the way R1 did? Almost certainly not, but here are three big reasons why this release matters.

1. It breaks new ground for an open-source model.

As with R1 before it, DeepSeek claims that V4’s performance rivals the best models available at a fraction of the price. This is great news for developers and for companies using the tech, because it means they can access frontier AI capabilities on their own terms, and without worrying about skyrocketing costs.

The new model comes in two versions, both of which are available on DeepSeek’s website and in its app, with API access also open to developers. V4-Pro is a larger model built for coding and complex agent tasks, and V4-Flash is a smaller version designed to be faster and cheaper to run. Both versions offer reasoning modes, in which the model can carefully parse a user’s prompt and show each step as it works through the problem.

For V4-Pro, DeepSeek charges $1.74 per million input tokens and $3.48 per million output tokens, a fraction of the cost of comparable models from OpenAI and Anthropic. V4-Flash is even cheaper, at about $0.14 per million input tokens and about $0.28 per million output tokens, making it one of the cheapest top-tier models available. This would make it a very appealing model to build applications on.

In terms of performance, V4 is, perhaps unsurprisingly, a huge jump from R1, and it seems to be a strong alternative to just about all the latest big AI models. On the major benchmarks, according to results shared by the company, DeepSeek V4-Pro competes with leading closed-source models, matching the performance of Anthropic’s Claude-Opus-4.6, OpenAI’s GPT-5.4, and Google’s Gemini-3.1. And compared to other open-source models, such as Alibaba’s Qwen-3.5 or Z.ai’s GLM-5.1, DeepSeek V4 exceeds them all on coding, math, and STEM problems, making it one of the strongest open-source models ever released.

DeepSeek also says that V4-Pro now ranks among the strongest open-source models on benchmarks for agentic coding tasks and performs well on other tests that measure ability to carry out multistep problems. Its writing ability and world knowledge also lead the field, according to benchmarking results shared by the company. In a technical report released alongside the model, DeepSeek shared results from an internal survey of 85 experienced developers: More than 90% included V4-Pro among their top model choices for coding tasks. DeepSeek says it has specifically optimized V4 for popular agent frameworks such as Claude Code, OpenClaw, and CodeBuddy.
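To make the per-token prices quoted for V4-Pro and V4-Flash concrete, here is a minimal sketch of the arithmetic in Python. The prices are the ones quoted in this article (the V4-Flash figures are approximate). The dictionary keys are plain labels rather than official API model identifiers, and the request size of 200,000 input tokens and 10,000 output tokens is a hypothetical workload chosen only for illustration.

```python
# Prices in US dollars per one million tokens, as quoted in the article.
# Keys are descriptive labels, NOT official API model identifiers.
PRICES_USD_PER_MILLION = {
    "V4-Pro": (1.74, 3.48),    # (input, output)
    "V4-Flash": (0.14, 0.28),  # approximate figures
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD of a single request."""
    in_price, out_price = PRICES_USD_PER_MILLION[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: a long prompt with a short answer.
    for model in PRICES_USD_PER_MILLION:
        cost = request_cost(model, input_tokens=200_000, output_tokens=10_000)
        print(f"{model}: ${cost:.4f}")
    # V4-Pro: $0.3828
    # V4-Flash: $0.0308
```

Under these assumptions, the same request costs roughly 38 cents on V4-Pro and about 3 cents on V4-Flash, which is the kind of gap that matters for applications making many long-context calls.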

2. It delivers on a new approach to memory efficiency.

One of the key innovations of V4 is its long context window, the amount of text the model can process at once. Both versions can handle 1 million tokens, which is large enough to fit all three volumes of The Lord of the Rings and The Hobbit combined. The company says this context window size is now the default across all DeepSeek services and it matches what is offered by cutting-edge versions of models like Gemini and Claude.

But it’s important to know not just that DeepSeek has made this leap, but how it did so. V4 makes significant architectural changes to the company’s former models, especially in the attention mechanism, which is the feature of AI models that helps them understand each part of a prompt in relation to the rest. As the prompt text gets longer, these comparisons become much more costly, making attention one of the main bottlenecks for long-context models.

DeepSeek’s innovation was to make the model more selective about what it pays attention to. Instead of treating all earlier text as equally important, V4 compresses older information and focuses on the parts most likely to matter in the present moment, while still keeping nearby text in full so it does not miss important details.

DeepSeek says this sharply reduces the cost of using long context. In a 1-million-token context, V4-Pro uses only 27% of the computing power required by its previous model, V3.2, while cutting memory use to 10%. The reduction in V4-Flash is even larger, using just 10% of the computing power and 7% of the memory. In practice, this could make it cheaper to build tools that need to work across huge amounts of material, such as an AI coding assistant that can read an entire codebase or a research agent that can analyze a long archive of documents without constantly forgetting what came before.

DeepSeek’s interest in long context windows didn’t start with V4. Over the past year and a half, the company has quietly published a series of papers on how AI models “remember” information, experimenting with compression and mathematical techniques to extend what AI models could realistically handle.
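The general idea of keeping nearby text in full while compressing older text can be illustrated with a toy count of how many comparisons attention has to make. The sketch below is not DeepSeek’s actual mechanism, which the article does not describe in detail. It assumes a hypothetical scheme in which each token sees the most recent `window` tokens individually, plus one summary entry for every `block` older tokens. The function names and parameters are illustrative only.

```python
def full_attention_pairs(n_tokens: int) -> int:
    """Comparisons for ordinary causal attention: token i looks at tokens 0..i."""
    return n_tokens * (n_tokens + 1) // 2


def compressed_attention_pairs(n_tokens: int, window: int, block: int) -> int:
    """Comparisons under a hypothetical local-window-plus-summaries scheme.

    Each token attends to the last `window` tokens in full and to one
    summary entry per `block` older tokens (rounded up).
    """
    total = 0
    for i in range(n_tokens):
        visible = i + 1                  # tokens up to and including token i
        local = min(visible, window)     # nearby tokens kept in full
        older = visible - local          # tokens eligible for compression
        summaries = -(-older // block)   # ceiling division
        total += local + summaries
    return total


if __name__ == "__main__":
    print(full_attention_pairs(8))              # 36
    print(compressed_attention_pairs(8, 2, 2))  # 27
```

With full attention the count grows with the square of the prompt length, while in this toy scheme it grows roughly in proportion to the prompt length times the window size plus the number of summaries. That is why selective attention helps most on very long prompts. The sketch makes no attempt to reproduce the specific savings DeepSeek reports.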

3. It marks the first steps on the hard road away from Nvidia.

V4 is DeepSeek’s first model optimized for domestic Chinese chips, such as Huawei’s Ascend, a move that has turned the launch into something of a test of whether China’s homegrown AI industry can begin to loosen its dependence on US chip giant Nvidia.

This was largely expected, since The Information reported earlier this month that DeepSeek did not give American chipmakers like Nvidia and AMD early access to V4, though prerelease access is common to allow chipmakers to optimize support of the new model ahead of a launch. Instead, the company reportedly gave early access only to Chinese chipmakers. On Friday, Huawei said its Ascend supernode products, based on the Ascend 950 series, would support DeepSeek V4. This means that companies and individuals who want to run their own modified version of DeepSeek V4 will be able to use Huawei chips easily.

Reuters previously reported that Chinese government officials recommended that DeepSeek integrate Huawei chips in its training process. And this pressure fits a broader pattern in China’s industrial policy: Strategic sectors are often pushed, and sometimes effectively required, to align with national self-reliance goals.

But there’s a particular urgency when it comes to AI. Since 2022, US export controls have cut Chinese firms off from Nvidia’s most powerful chips, and they later also restricted access to downgraded China-market versions. Beijing’s response has been to accelerate the push for a domestic AI stack, from chips to software frameworks to data centers. Chinese authorities have reportedly been pushing data centers and public computing projects to use more domestic chips, including through reported bans on foreign-made chips, sourcing quotas, and requirements to pair Nvidia chips with Chinese alternatives from companies such as Huawei and Cambricon.

Still, replacing Nvidia is not as simple as swapping one chip for another. Nvidia’s advantage lies not only in its chips, but in the software ecosystem developers have spent years building around them. Moving to Huawei’s Ascend chips means adapting model code, rebuilding tools, and proving that systems built around those chips are stable enough for serious use.

To be clear, DeepSeek does not appear to have fully moved beyond Nvidia. The company’s technical report reveals that it is using Chinese chips to run the model for inference, that is, when someone asks the model to complete a task. But Liu Zhiyuan, a computer science professor at Tsinghua University, told MIT Technology Review that DeepSeek appears to have adapted only part of V4’s training process for Chinese chips. The report does not say whether some key long-context features were adapted to domestic chips, so Liu says V4 may still have been trained mainly on Nvidia chips. Multiple sources who spoke on the condition of anonymity, due to political sensitivity around these issues, told MIT Technology Review that Chinese chips still don’t perform as well as Nvidia chips but are better suited for inference than training.

DeepSeek is also tying the future costs of V4 to this hardware shift. The company says V4-Pro prices could fall significantly after Huawei’s Ascend 950 supernodes begin shipping at scale in the second half of this year. If that works, V4 could be an early sign that China is successfully building a parallel AI infrastructure.

Cirrascale to offer on-prem Google Gemini models

Google Distributed Cloud can be deployed in customer-controlled environments, including installations that are disconnected from the Internet, which is a key requirement for some government and critical-infrastructure users.

One of the big challenges is that these models are incredibly valuable and they need to be delivered in a trusted, secure environment, said Driggers. “That’s what’s really the most important thing to Google, is this model. So they need to be delivered in a confidential compute manner,” he said. The model is not stored on a hard drive; it is stored in memory. If there is any intrusion into the machine, the machine basically turns itself off and the model is gone, so it cannot be stolen, according to Cirrascale.

Cirrascale said it will provide the hardware configurations, performance tuning and support needed to run Gemini inference at scale as part of its Cirrascale Inference Platform. The company said the service is aimed at customers that want a production environment without rebuilding existing infrastructure and includes what it described as optimized systems for Gemini inference and ongoing operational support.

“It’s Google’s model. Our secret sauce is being a trusted partner to be able to deliver that model to the clients,” said Driggers. “It’s part of our inference as a service offering. So for our customers, we have a software layer on top of the model that allows them to tailor how they use it, so they can set user queues up and set user limitations.”

Space data-center news: Roundup of extraterrestrial AI endeavors

Orbital is betting that distributed inference can scale as a constellation, with each satellite handling workloads in parallel. The company is also filing with the FCC for a larger constellation.

Lonestar announces first commercial space data storage service

April 2026: Lonestar Data Holdings announced StarVault, which it’s calling “the world’s first commercially operational space-based sovereign data storage platform.” The service launches in October 2026 aboard Sidus Space’s LizzieSat-4 mission. StarVault isn’t a full data center; it’s data storage with “advanced cryptographic key escrow capabilities,” according to the announcement. But it’s the first commercial space data service that enterprises can actually buy. Lonestar says demand from governments, financial institutions, and critical infrastructure operators has already exceeded expectations, and the company has ordered a second payload for launch next year. Lonestar has already flown four proof-of-concept data centers to space, including two to the Moon, according to the announcement. This is different because it’s the first one designed for paying customers.

Atomic-6 launches a marketplace for buying orbital capacity

April 2026: Atomic-6, a space systems company in Marietta, Georgia, has launched ODC.space, basically a marketplace where you spec, price, and order orbital data center capacity the way you’d order a rack from a colo provider. You can buy either a sovereign satellite, where you get the whole thing, or colocated, where you rent space on someone else’s capacity, according to the announcement. Atomic-6 handles spacecraft build, launch, licensing, and operations through a partner network. You just supply the processors and the workload. Delivery runs two to three years, which Atomic-6 is carefully positioning against terrestrial data center timelines that now routinely run five-plus. Base configurations start with 1U nodes on satellites rated up to 100 kW. Connectivity starts at 1 Gbps. A sovereign rack runs $3.5 million a month, Atomic-6

Microsoft Builds for Two Worlds: Sovereign Cloud and AI Factories

So far in 2026, across the United States and overseas, Microsoft is building an infrastructure portfolio at full hyperscale. The strategy runs on two tracks. The first is familiar: sovereign cloud expansion involving new regions, local data residency, and compliance-driven enterprise infrastructure. The second is larger and more consequential: purpose-built AI factory campuses designed for dense GPU clusters, liquid cooling, private fiber, and power acquisition at a scale that extends far beyond traditional cloud infrastructure. Despite reports last year that Microsoft was pulling back on data center development, the company is accelerating. It is not only advancing its own large-scale campuses, but also absorbing premium AI capacity originally aligned with OpenAI. In Texas and Norway, projects tied to OpenAI’s infrastructure plans have shifted back into Microsoft’s orbit. Even after contractual changes gave OpenAI greater flexibility to source compute elsewhere, Microsoft remains the market’s most reliable backstop buyer for top-tier AI infrastructure. It no longer needs to control every OpenAI build to maintain its position. In 2026, Microsoft is still the company best positioned to turn uncertain AI demand into deployed capacity, e.g. concrete, steel, power, and silicon at scale. Building at Industrial Scale The clearest indicator of Microsoft’s intent is its capital spending. In its January 2026 earnings cycle, Reuters reported that Microsoft’s quarterly capital expenditures reached a record $37.5 billion, up nearly 66% year over year. The company’s cloud backlog rose to $625 billion, with roughly 45% of remaining performance obligations tied to OpenAI. About two-thirds of that quarterly capex was directed toward compute chips. To be clear: this is no speculative buildout. Microsoft is deploying capital against a massive, committed demand pipeline, even as it maintains significant exposure to OpenAI-driven workloads. 
The company is solving two infrastructure problems at once: supporting broad Azure and Copilot growth, while ensuring

BYOP Moves to the Center of Data Center Strategy

Self-Sufficiency Becomes a Feature, Not a Risk Consider Wyoming’s Project Jade, where county commissioners approved an AI campus tied to 2.7 GW of new natural gas-fired generation being developed by Tallgrass Energy. Reporting from POWER described the project as a “bring your own power” model designed for a high degree of self-sufficiency, with a mix of natural gas generation and Bloom fuel cells. The campus is expected to scale significantly over time. What stands out is not only the size, but the positioning. Self-sufficiency is becoming a selling point both for developers seeking to de-risk timelines, and for local stakeholders wary of overloading existing utility infrastructure. Fuel Cells and Nuclear: The Middle Ground and the Long Game Fuel cells occupy an important middle ground in this shift. Bloom Energy’s 2026 report positions fuel cells as a leading onsite option due to shorter lead times, modular deployment, and lower local emissions. Market activity suggests that interest is real. For developers, fuel cells can be easier to permit than large turbine installations and can be deployed incrementally. That makes them effective as bridge-to-grid solutions or as permanent components of hybrid architectures. Advanced nuclear remains the most strategically significant, but least immediate, BYOP pathway. Companies including Switch and other data center operators have explored partnerships with Oklo around its Aurora small modular reactor design. Nuclear holds long-term appeal because it offers firm, low-carbon power at scale. But for current AI buildouts, it remains a future option rather than a near-term construction solution. The immediate reality is that gas and modular onsite systems are closing the time-to-power gap, while nuclear is being positioned as a longer-duration successor as licensing and deployment timelines evolve. The model itself is also evolving. BYOP is beginning to blur the line between developer, energy provider, and compute customer. 
Reuters

Meta’s compute grab continues with agreement to deploy tens of millions of AWS Graviton cores

“This is really about control of the AI system, not just scale,” said Kimball. As AI evolves toward persistent, agentic workloads, the role of the CPU becomes “quite meaningful;” it serves as the control plane, handling orchestration, managing memory, scheduling, and other intensive tasks across accelerators. “This is especially true in agentic environments, where the workloads will be less linear and more stateful,” he pointed out. So, ensuring a supply of these resources just makes sense. The agreement builds on Meta’s long-standing partnership with AWS, but also reflects what the company calls its “diversified approach” to infrastructure. “No single chip architecture can efficiently serve every workload,” the company emphasized. Proving the point, Meta recently announced four new generations of its MTIA training and inference accelerator chip and signed a massive deal with AMD to tap into 6GW worth of CPUs and AI accelerators. It also entered into a multi-year partnership with Nvidia to access millions of Blackwell and Rubin GPUs and to integrate Nvidia Spectrum-X Ethernet switches into its platform, and was also one of Arm’s first major CPU customers. In the wake of all this, Nabeel Sherif, a principal advisory director at Info-Tech Research Group, posed the burning question: “What are they going to do with all this capacity?” Primarily it will support Meta’s internal experimentation and innovation, he said, but it also lays the groundwork and provides the capacity for Meta to offer its own agentic AI services, for instance, its Llama AI model as an API, to the market.

Three reasons why DeepSeek’s new model V4 matters

On Friday, Chinese AI firm DeepSeek released a preview of V4, its long-awaited new flagship model. Notably, the model can process much longer prompts than its last generation, thanks to a new design that helps it handle large amounts of text more efficiently. Like DeepSeek’s previous models, V4 is open source, meaning it is available for anyone to download, use, and modify. V4 marks DeepSeek’s most significant release since R1, the reasoning model it launched in January 2025. R1, which was trained on limited computing resources, stunned the global AI industry with its strong performance and efficiency, turning DeepSeek from a little-known research team into China’s best-known AI company almost overnight. It also helped set off a wave of open-weight model releases from other Chinese AI firms. DeepSeek has kept a relatively low profile since then—but earlier this month, it effectively teased V4’s release when it added “expert” and “flash” modes to the online version of its model, prompting speculation that the updates were tied to a bigger upcoming release. While the company has become a powerful symbol of China’s AI ambitions, its big return to cutting-edge frontier models comes after months of scrutiny—including major personnel departures, delays to previous model launches, and growing scrutiny from both the US and Chinese governments.

So, will V4 shake the AI field the way R1 did? Almost certainly not, but here are three big reasons why this release matters.

1. It breaks new ground for an open-source model. As with R1 before it, DeepSeek claims that V4’s performance rivals the best models available at a fraction of the price. This is great news for developers and for companies using the tech, because it means they can access frontier AI capabilities on their own terms, and without worrying about skyrocketing costs. The new model comes in two versions, both of which are available on DeepSeek’s website and in its app, with API access also open to developers. V4-Pro is a larger model built for coding and complex agent tasks, and V4-Flash is a smaller version designed to be faster and cheaper to run. Both versions offer reasoning modes, in which the model can carefully parse a user’s prompt and show each step as it works through the problem. For V4-Pro, DeepSeek charges $1.74 per million input tokens and $3.48 per million output tokens, a fraction of the cost of comparable models from OpenAI and Anthropic. V4-Flash is even cheaper, at about $0.14 per million input tokens and about $0.28 per million output tokens, making it one of the cheapest top-tier models available. This would make it a very appealing model to build applications on. In terms of performance, V4 is, perhaps unsurprisingly, a huge jump from R1—and it seems to be a strong alternative to just about all the latest big AI models. On the major benchmarks, according to results shared by the company, DeepSeek V4-Pro competes with leading closed-source models, matching the performance of Anthropic’s Claude-Opus-4.6, OpenAI’s GPT-5.4, and Google’s Gemini-3.1. And compared to other open-source models, such as Alibaba’s Qwen-3.5 or Z.ai’s GLM-5.1, DeepSeek V4 exceeds them all on coding, math, and STEM problems, making it one of the strongest open-source models ever released. 
DeepSeek also says that V4-Pro now ranks among the strongest open-source models on benchmarks for agentic coding tasks and performs well on other tests that measure ability to carry out multistep problems. Its writing ability and world knowledge also leads the field, according to benchmarking results shared by the company. In a technical report released alongside the model, DeepSeek shared results from an internal survey of 85 experienced developers: More than 90% included V4-Pro among their top model choices for coding tasks. DeepSeek says it has specifically optimized V4 for popular agent frameworks such as Claude Code, OpenClaw, and CodeBuddy.

2. It delivers on a new approach to memory efficiency. One of the key innovations of V4 is its long context window—the amount of text the model can process at once. Both versions can handle 1 million tokens, which is large enough to fit all three volumes of The Lord of the Rings and The Hobbit combined. The company says this context window size is now the default across all DeepSeek services and it matches what is offered by cutting-edge versions of models like Gemini and Claude. But it’s important to know not just that DeepSeek has made this leap, but how it did so. V4 makes significant architectural changes to the company’s former models—especially in the attention mechanism, which is the feature of AI models that helps them understand each part of a prompt in relation to the rest. As the prompt text gets longer, these comparisons become much more costly, making attention one of the main bottlenecks for long-context models. DeepSeek’s innovation was to make the model more selective about what it pays attention to. Instead of treating all earlier text as equally important, V4 compresses older information and focuses on the parts most likely to matter in the present moment, while still keeping nearby text in full so it does not miss important details. DeepSeek says this sharply reduces the cost of using long context. In a 1-million-token context, V4-Pro uses only 27% of the computing power required by its previous model, V3.2, while cutting memory use to 10%. The reduction in V4-Flash is even larger, using just 10% of the computing power and 7% of the memory. In practice, this could make it cheaper to build tools that need to work across huge amounts of material, such as an AI coding assistant that can read an entire codebase or a research agent that can analyze a long archive of documents without constantly forgetting what came before. DeepSeek’s interest in long context windows didn’t start with V4. 
Over the past year and a half, the company has quietly published a series of papers on how AI models “remember” information, experimenting with compression and mathematical techniques to extend what AI models could realistically handle.

3. It marks the first steps on the hard road away from Nvidia. V4 is DeepSeek’s first model optimized for domestic Chinese chips, such as Huawei’s Ascend—a move that has turned the launch into something of a test of whether China’s homegrown AI industry can begin to loosen its dependence on US chip giant Nvidia.

This was largely expected, since The Information reported earlier this month that DeepSeek did not give American chipmakers like Nvidia and AMD early access to V4, though prerelease access is common to allow chipmakers to optimize support for the new model ahead of a launch. Instead, the company reportedly gave early access only to Chinese chipmakers. On Friday, Huawei said its Ascend supernode products, based on the Ascend 950 series, would support DeepSeek V4. This means that companies and individuals who want to run their own modified version of DeepSeek V4 will be able to use Huawei chips easily. Reuters previously reported that Chinese government officials recommended that DeepSeek integrate Huawei chips in its training process. And this pressure fits a broader pattern in China’s industrial policy: Strategic sectors are often pushed, and sometimes effectively required, to align with national self-reliance goals. But there’s a particular urgency when it comes to AI. Since 2022, US export controls have cut Chinese firms off from Nvidia’s most powerful chips, and they later also restricted access to downgraded China-market versions. Beijing’s response has been to accelerate the push for a domestic AI stack, from chips to software frameworks to data centers. Chinese authorities have reportedly been pushing data centers and public computing projects to use more domestic chips, including through reported bans on foreign-made chips, sourcing quotas, and requirements to pair Nvidia chips with Chinese alternatives from companies such as Huawei and Cambricon. Still, replacing Nvidia is not as simple as swapping one chip for another. Nvidia’s advantage lies not only in its chips, but in the software ecosystem developers have spent years building around them. Moving to Huawei’s Ascend chips means adapting model code, rebuilding tools, and proving that systems built around those chips are stable enough for serious use. 
To be clear, DeepSeek does not appear to have fully moved beyond Nvidia. The company’s technical report reveals that it is using Chinese chips to run the model for inference, that is, when someone asks the model to complete a task. But Liu Zhiyuan, a computer science professor at Tsinghua University, told MIT Technology Review that DeepSeek appears to have adapted only part of V4’s training process for Chinese chips. The report does not say whether some key long-context features were adapted to domestic chips, so Liu says V4 may still have been trained mainly on Nvidia chips. Multiple sources who spoke on the condition of anonymity, due to political sensitivity around these issues, told MIT Technology Review that Chinese chips still don’t perform as well as Nvidia chips but are better suited for inference than training. DeepSeek is also tying the future costs of V4 to this hardware shift. The company says V4-Pro prices could fall significantly after Huawei’s Ascend 950 supernodes begin shipping at scale in the second half of this year. If that works, V4 could be an early sign that China is successfully building a parallel AI infrastructure.

Cirrascale to offer on-prem Google Gemini models

Google Distributed Cloud can be deployed in customer-controlled environments, including installations that are disconnected from the Internet, which is a key requirement for some government and critical-infrastructure users. One of the big challenges is that these models are incredibly valuable and they need to be delivered in a trusted, secure environment, said Driggers. “That’s what’s really the most important thing to Google, is this model. So they need to be delivered in a confidential compute manner,” he said. The model is not stored on a hard drive; it is stored in memory. If there’s any intrusion to the machine, the machine basically turns itself off, and the model is gone, so it cannot be stolen, according to Cirrascale. Cirrascale said it will provide the hardware configurations, performance tuning and support needed to run Gemini inference at scale as part of its Cirrascale Inference Platform. The company said the service is aimed at customers that want a production environment without rebuilding existing infrastructure and includes what it described as optimized systems for Gemini inference and ongoing operational support. “It’s Google’s model. Our secret sauce is being a trusted partner to be able to deliver that model to the clients,” said Driggers. “It’s part of our inference as a service offering. So for our customers, we have a software layer on top of the model that allows them to tailor how they use it, so they can set user queues up and set user limitations.”

Space data-center news: Roundup of extraterrestrial AI endeavors

Orbital is betting that distributed inference can scale as a constellation, with each satellite handling workloads in parallel. The company is also filing with the FCC for a larger constellation.

Lonestar announces first commercial space data storage service

April 2026: Lonestar Data Holdings announced StarVault, which it’s calling “the world’s first commercially operational space-based sovereign data storage platform.” The service launches in October 2026 aboard Sidus Space’s LizzieSat-4 mission. StarVault isn’t a full data center; it’s data storage with “advanced cryptographic key escrow capabilities,” according to the announcement. But it’s the first commercial space data service that enterprises can actually buy. Lonestar says demand from governments, financial institutions, and critical infrastructure operators has already exceeded expectations, and the company has ordered a second payload for launch next year. Lonestar has already flown four proof-of-concept data centers to space, including two to the Moon, according to the announcement. This is different because it’s the first one designed for paying customers.

Atomic-6 launches a marketplace for buying orbital capacity

April 2026: Atomic-6, a space systems company in Marietta, Georgia, has launched ODC.space, basically a marketplace where you spec, price, and order orbital data center capacity the way you’d order a rack from a colo provider. You can buy either a sovereign satellite, where you get the whole thing, or colocated, where you rent space on someone else’s capacity, according to the announcement. Atomic-6 handles spacecraft build, launch, licensing, and operations through a partner network. You just supply the processors and the workload. Delivery runs two to three years, which Atomic-6 is carefully positioning against terrestrial data center timelines that now routinely run five-plus. Base configurations start with 1U nodes on satellites rated up to 100 kW. Connectivity starts at 1 Gbps. 
A sovereign rack runs $3.5 million a month, Atomic-6

Valero progresses Port Arthur refinery restart after March fire

Valero Energy Corp. has partially restarted operations at its 380,000-b/d refinery in Port Arthur, Tex., after a late-March explosion and subsequent fire at the site, according to a Reuters report citing sources familiar with plant operations. One crude distillation unit (CDU), the 115,000-b/d AVU-147, has resumed production, with the second CDU, the 210,000-b/d AVU-146, still offline as of Apr. 16 amid ongoing repairs to a damaged heater tube identified during post-incident inspections, according to Reuters. The Mar. 23 incident, which occurred “after an unforeseeable release of process fluid in the [refinery’s] diesel hydrotreater (DHT-243) resulted in an ignition event and multiple process unit upsets,” resulted in the shutdown of multiple processing units at the site, according to a final air emission event report issued by the Texas Commission on Environmental Quality (TCEQ) on Apr. 4. To date, Valero has remained silent publicly on the late-March explosion and operational status of the refinery, but an update on the Port Arthur situation will likely be forthcoming when the company announces first-quarter 2026 earnings results on Apr. 30. As of early April, a formal investigation into the incident was still ongoing, TCEQ said. In a sobering coincidence, the Mar. 23 explosion at Valero’s Port Arthur complex happened to occur on the 21st anniversary of the BP Texas City refinery disaster in 2005 that killed 15 workers and injured more than 170 others.

Associated litigation

While the explosion and accompanying fire at the Port Arthur refinery did not result in any officially reported injuries, at least one lawsuit has been filed against Valero and its operating subsidiary alleging negligence, gross negligence, nuisance, and trespass in the wake of the blast. On Apr. 20, Brent Coon & Associates (BC&A), a Beaumont-based law firm representing residents and property owners affected by the explosion, confirmed via an e-mailed

Ceasefire extension, Hormuz risk drive volatile crude trading

Global crude markets traded in a volatile range Wednesday, Apr. 22, as geopolitical signals from Washington and renewed security concerns in the Strait of Hormuz kept traders on edge. US President Donald Trump announced he would extend the existing ceasefire with Iran past its original deadline, conditioning its longevity on tangible progress in parallel peace negotiations. The announcement initially acted as a pressure valve for elevated risk premiums, pulling Brent benchmark crude lower to $97/bbl in early trading, as market participants unwound some of the geopolitical hedges built up in recent sessions. Stay updated on oil price volatility, shipping disruptions, LNG market analysis, and production output at OGJ’s Iran war content hub. The relief rally, however, proved short-lived. Reports emerged of attacks targeting commercial vessels transiting the Strait of Hormuz—the narrow Persian Gulf waterway through which around one-fifth of the world’s oil supply flows daily—reigniting fears of a broader supply disruption. Prices rebounded swiftly, surging back above the $100/bbl threshold. Adding a further layer of complexity, the Trump administration doubled down on its existing sanctions posture, reaffirming that US restrictions on Iranian port access would remain firmly in place until Tehran tables what the President characterized as a “unified proposal.” The statement underscored Washington’s dual-track approach: dangling a diplomatic off-ramp while simultaneously maintaining maximum economic pressure on Iranian export channels—a combination that markets are likely to interpret as a sustained source of supply-side uncertainty in the near term. Separately, the European Union (EU) is considering requiring member states to build up jet fuel reserves and may introduce mechanisms to reallocate supplies based on regional demand and shortages, amid mounting concerns that the Iran war could disrupt fuel availability. 
China is likely to resume large-scale crude purchases within weeks, according to trading house Mercuria, after drawing down inventories during the height

Equinor plugs dry well east of Visund field in North Sea

Equinor Energy AS has plugged a dry well in the Skoll and Hati prospects, east of Visund field in the North Sea, the Norwegian Offshore Directorate (NOD) noted in a release Apr. 22. Wildcat well 34/8-A-37 H was drilled in production license 120, 140 km west of Florø and 4 km east of the Visund A platform. The well was drilled to a vertical depth of 3,081 m and a measured depth of 6,662 m subsea. It was terminated in the Lunde Formation in the Upper Triassic. Water depth at the site is 335 m. The well was drilled from Visund A.

Geological information

The well’s primary exploration target was to prove petroleum in reservoir rocks in the Statfjord Group in the Lower Jurassic, NOD said. The secondary exploration target was to prove petroleum in reservoir rocks in the Lunde Formation in the Upper Triassic. The well encountered around 112 m of the Statfjord Group, a total of 53 m of which had good reservoir quality. The sandstone layers in the Statfjord Group were aquiferous with hydrocarbon shows. The well also encountered 150 m of the Lunde Formation, 50 m of which were sandstone layers with moderate to good reservoir quality. There were hydrocarbon shows in the Lunde Formation. The well is classified as dry. Equinor is operator of the license with 53.2% interest. Partners are Petoro AS (30%), ConocoPhillips Skandinavia AS (9.1%), and Repsol Norge AS (7.7%).

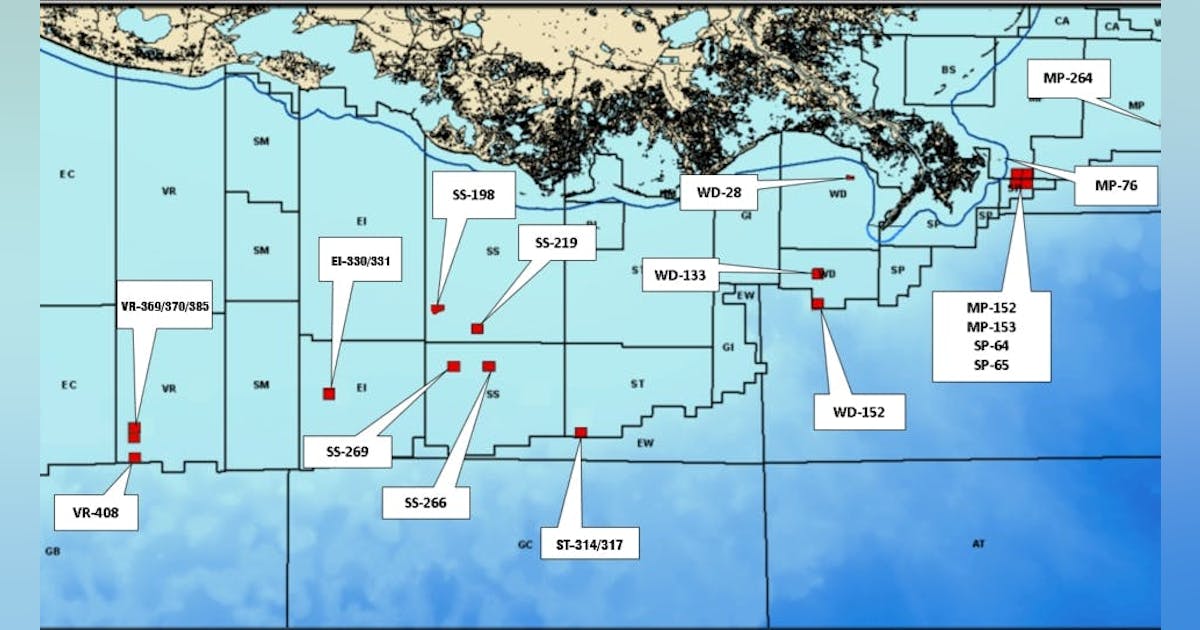

1947 Oil & Gas acquires Renaissance Offshore, plans Gulf of Mexico asset build

Newly formed 1947 Oil & Gas plc signed a term sheet to acquire Renaissance Offshore LLC in a deal the company said provides “immediate oil-weighted cash flows” and a platform to build its US oil and gas production business. The company has named Talos Energy founder Tim Duncan as executive chairman. Renaissance Offshore, Houston, currently produces 3,000 boe/d in shallow waters in the Gulf of Mexico and has targeted a production increase to more than 4,000 boe/d in 2027. Duncan previously founded and led multiple exploration and production companies, including Talos Energy. He also currently serves on the board of Expand Energy Corp. In a release Apr. 17, Duncan said “[t]here is an enormous opportunity to roll-up mature conventional oil-weighted assets with the goal of managing cost, executing low-risk development opportunities and distributing cash flows to investors specifically in the Gulf of America [US Gulf of Mexico] and along the US Gulf Coast. Our first acquisition gives us a strong operational base and a credible platform to grow and achieve those goals.” 1947 Oil & Gas was founded by Jeff Currie and Ivan Murphy. Currie is former global head of commodities research at Goldman Sachs and former managing director and chief strategy officer of Energy Pathways at The Carlyle Group. Murphy was a co-founder and director of Cove Energy plc and is currently executive chairman of Harena Rare Earths plc. They both will serve as directors of 1947. Brian Romere, current president, co-founder, and chief financial officer of Renaissance Offshore, will serve as president and chief financial officer of 1947 Oil & Gas.

Eni makes gas discovery with Geliga-1 well offshore Indonesia

Eni SPA has made a natural gas discovery with the Geliga‑1 exploration well in the Kutei basin, offshore Indonesia, about 70 km off East Kalimantan. The well, drilled in about 2,000 m of water to a total depth of roughly 5,100 m, encountered a ‘significant’ gas column in a Miocene reservoir with excellent petrophysical properties, Eni said in an Apr. 20 release. Preliminary estimates place in‑place resources at about 5 tcf of gas and 300 million bbl of condensate. A drillstem test (DST) is planned to evaluate reservoir productivity. Geliga‑1 lies in the Ganal production‑sharing contract, operated by Eni with 82% interest. Sinopec holds the remaining 18%. The natural gas discovery follows recent exploration successes in the basin, including the Geng North discovery in late 2023 and the Konta‑1 discovery in late 2025, confirming the scale of the gas play in the area, the company said. The new discovery lies adjacent to the undeveloped Gula gas discovery, estimated to contain 2 tcf of gas in place and 75 million bbl of condensate. Initial assessments indicate that combined Geliga and Gula resources could support production of about 1 bcfd of gas and 80,000 b/d of condensate.

Oil prices surge as Hormuz tensions reignite market volatility

Oil prices jumped sharply on Monday, Apr. 20, as renewed geopolitical tensions in the Middle East rattled markets and revived fears of major supply disruptions. Brent crude jumped about 5% to around $95/bbl, while US West Texas Intermediate (WTI) climbed into the high $80s. The latest price surge follows escalating tensions between the US and Iran, including the seizure of an Iranian cargo vessel and renewed attacks on commercial shipping. Oil markets have whipsawed in recent days. Oil prices dropped 10% last week on expectations that the strait would reopen, only to rebound sharply as tensions flared again. The rebound underscores the sharp swings currently driving the market. Crucially, uncertainty surrounding the Strait of Hormuz, a chokepoint responsible for roughly 20% of global oil flows, has returned to center stage. Disruptions or closures in the strait have immediate implications for global supply, prompting traders to bid prices higher. Mixed signals on ceasefire negotiations have added to market anxiety. While diplomatic talks have been discussed, recent incidents suggest the conflict could persist, keeping supply risks elevated. The next critical deadline is fast approaching, as the ceasefire agreement between the US and Iran is set to expire at 8 p.m. Eastern Time on Tuesday (early Wednesday morning in Tehran). In response to ongoing disruptions, countries have begun tapping emergency stockpiles. Members of the International Energy Agency (IEA) have coordinated releases totaling hundreds of millions of barrels to stabilize markets. Looking ahead, oil prices are expected to remain highly volatile, with key drivers including the pace of any full reopening of the Strait of Hormuz, the trajectory of US–Iran negotiations, and the degree of demand destruction triggered by sustained high price levels.

West of Orkney developers helped support 24 charities last year

The developers of the 2GW West of Orkney wind farm paid out a total of £18,000 to 24 organisations from their small donations fund in 2024. The money went to projects across Caithness, Sutherland and Orkney, including a mental health initiative in Thurso and a scheme by Dunnet Community Forest to improve the quality of meadows through the use of traditional scythes. Established in 2022, the fund offers up to £1,000 per project towards programmes in the far north. In addition to the small donations fund, the West of Orkney developers intend to follow other wind farms by establishing a community benefit fund once the project is operational. West of Orkney wind farm project director Stuart McAuley said: “Our donations programme is just one small way in which we can support some of the many valuable initiatives in Caithness, Sutherland and Orkney. “In every case we have been immensely impressed by the passion and professionalism each organisation brings, whether their focus is on sport, the arts, social care, education or the environment, and we hope the funds we provide help them achieve their goals.” In addition to the local donations scheme, the wind farm developers have helped fund a £1 million research and development programme led by EMEC in Orkney and a £1.2m education initiative led by UHI. They also provided £50,000 to support the FutureSkills apprenticeship programme in Caithness, with funds going to employment and training costs to help tackle skill shortages in the North of Scotland. The West of Orkney wind farm is being developed by Corio Generation, TotalEnergies and Renewable Infrastructure Development Group (RIDG). The project is among the leaders of the ScotWind cohort, having been the first to submit its offshore consent documents in late 2023. In addition, the project’s onshore plans were approved by the

Biden bans US offshore oil and gas drilling ahead of Trump’s return

US President Joe Biden has announced a ban on offshore oil and gas drilling across vast swathes of the country’s coastal waters. The decision comes just weeks before his successor Donald Trump, who has vowed to increase US fossil fuel production, takes office. The drilling ban will affect 625 million acres of federal waters across America’s eastern and western coasts, the eastern Gulf of Mexico and Alaska’s Northern Bering Sea. The decision does not affect the western Gulf of Mexico, where much of American offshore oil and gas production occurs and is set to continue. In a statement, President Biden said he is taking action to protect the regions “from oil and natural gas drilling and the harm it can cause”. “My decision reflects what coastal communities, businesses, and beachgoers have known for a long time: that drilling off these coasts could cause irreversible damage to places we hold dear and is unnecessary to meet our nation’s energy needs,” Biden said. “It is not worth the risks. “As the climate crisis continues to threaten communities across the country and we are transitioning to a clean energy economy, now is the time to protect these coasts for our children and grandchildren.” Offshore drilling ban The White House said Biden used his authority under the 1953 Outer Continental Shelf Lands Act, which allows presidents to withdraw areas from mineral leasing and drilling. However, the law does not give a president the right to unilaterally reverse a drilling ban without congressional approval. This means that Trump, who pledged to “unleash” US fossil fuel production during his re-election campaign, could find it difficult to overturn the ban after taking office. Trump

The Download: our 10 Breakthrough Technologies for 2025

This is today’s edition of The Download, our weekday newsletter that provides a daily dose of what’s going on in the world of technology.

Introducing: MIT Technology Review’s 10 Breakthrough Technologies for 2025

Each year, we spend months researching and discussing which technologies will make the cut for our 10 Breakthrough Technologies list. We try to highlight a mix of items that reflect innovations happening in various fields. We look at consumer technologies, large industrial-scale projects, biomedical advances, changes in computing, climate solutions, the latest in AI, and more.

We’ve been publishing this list every year since 2001 and, frankly, have a great track record of flagging things that are poised to hit a tipping point. It’s hard to think of another industry that has as much of a hype machine behind it as tech does, so the real secret of the TR10 is really what we choose to leave off the list.

Check out the full list of our 10 Breakthrough Technologies for 2025, which is front and center in our latest print issue. It’s all about the exciting innovations happening in the world right now, and includes some fascinating stories, such as:

+ How digital twins of human organs are set to transform medical treatment and shake up how we trial new drugs.
+ What will it take for us to fully trust robots? The answer is a complicated one.
+ Wind is an underutilized resource that has the potential to steer the notoriously dirty shipping industry toward a greener future. Read the full story.
+ After decades of frustration, machine-learning tools are helping ecologists to unlock a treasure trove of acoustic bird data, and to shed much-needed light on their migration habits. Read the full story.

+ How poop could help feed the planet—yes, really. Read the full story.

Roundtables: Unveiling the 10 Breakthrough Technologies of 2025

Last week, Amy Nordrum, our executive editor, joined our news editor Charlotte Jee to unveil our 10 Breakthrough Technologies of 2025 in an exclusive Roundtable discussion. Subscribers can watch their conversation back here. And, if you’re interested in previous discussions about topics ranging from mixed reality tech to gene editing to AI’s climate impact, check out some of the highlights from the past year’s events.

This international surveillance project aims to protect wheat from deadly diseases

For as long as there’s been domesticated wheat (about 8,000 years), there has been harvest-devastating rust. Breeding efforts in the mid-20th century led to rust-resistant wheat strains that boosted crop yields, and rust epidemics receded in much of the world.

But now, after decades, rusts are considered a reemerging disease in Europe, at least partly due to climate change. An international initiative hopes to turn the tide by scaling up a system to track wheat diseases and forecast potential outbreaks to governments and farmers in close to real time. And by doing so, they hope to protect a crop that supplies about one-fifth of the world’s calories. Read the full story.

– Shaoni Bhattacharya

The must-reads

I’ve combed the internet to find you today’s most fun/important/scary/fascinating stories about technology.

1 Meta has taken down its creepy AI profiles
Following a big backlash from unhappy users. (NBC News)
+ Many of the profiles were likely to have been live from as far back as 2023. (404 Media)
+ It also appears they were never very popular in the first place. (The Verge)

2 Uber and Lyft are racing to catch up with their robotaxi rivals
After abandoning their own self-driving projects years ago. (WSJ $)
+ China’s Pony.ai is gearing up to expand to Hong Kong. (Reuters)

3 Elon Musk is going after NASA
He’s largely veered away from criticising the space agency publicly, until now. (Wired $)
+ SpaceX’s Starship rocket has a legion of scientist fans. (The Guardian)
+ What’s next for NASA’s giant moon rocket? (MIT Technology Review)

4 How Sam Altman actually runs OpenAI
Featuring three-hour meetings and a whole lot of Slack messages. (Bloomberg $)
+ ChatGPT Pro is a pricey loss-maker, apparently. (MIT Technology Review)

5 The dangerous allure of TikTok
Migrants’ online portrayals of their experiences in America aren’t always reflective of their realities. (New Yorker $)

6 Demand for electricity is skyrocketing
And AI is only a part of it. (Economist $)
+ AI’s search for more energy is growing more urgent. (MIT Technology Review)

7 The messy ethics of writing religious sermons using AI
Skeptics aren’t convinced the technology should be used to channel spirituality. (NYT $)

8 How a wildlife app became an invaluable wildfire tracker
Watch Duty has become a safeguarding sensation across the US west. (The Guardian)
+ How AI can help spot wildfires. (MIT Technology Review)

9 Computer scientists just love oracles 🔮
Hypothetical devices are a surprisingly important part of computing. (Quanta Magazine)

10 Pet tech is booming 🐾
But not all gadgets are made equal. (FT $)
+ These scientists are working to extend the lifespan of pet dogs, and their owners. (MIT Technology Review)

Quote of the day

“The next kind of wave of this is like, well, what is AI doing for me right now other than telling me that I have AI?”

– Anshel Sag, principal analyst at Moor Insights and Strategy, tells Wired a lot of companies’ AI claims are overblown.

The big story

Broadband funding for Native communities could finally connect some of America’s most isolated places

September 2022

Rural and Native communities in the US have long had lower rates of cellular and broadband connectivity than urban areas, where four out of every five Americans live. Outside the cities and suburbs, which occupy barely 3% of US land, reliable internet service can still be hard to come by.

The covid-19 pandemic underscored the problem as Native communities locked down and moved school and other essential daily activities online. But it also kicked off an unprecedented surge of relief funding to solve it. Read the full story.

– Robert Chaney

We can still have nice things

A place for comfort, fun and distraction to brighten up your day. (Got any ideas? Drop me a line or skeet ’em at me.)

+ Rollerskating Spice Girls is exactly what your Monday morning needs.
+ It’s not just you, some people really do look like their dogs!
+ I’m not sure if this is actually the world’s healthiest meal, but it sure looks tasty.
+ Ah, the old “bitten by a rabid fox” chestnut.

Equinor Secures $3 Billion Financing for US Offshore Wind Project

Equinor ASA has announced a final investment decision on Empire Wind 1 and financial close for $3 billion in debt financing for the under-construction project offshore Long Island, expected to power 500,000 New York homes. The Norwegian majority state-owned energy major said in a statement it intends to farm down ownership “to further enhance value and reduce exposure”. Equinor has held full ownership of Empire Wind 1 and 2 since last year, following a swap transaction with 50 percent co-venturer BP PLC that allowed the former to exit the Beacon Wind lease, also a 50-50 venture between the two. Equinor has yet to complete a portion of the transaction under which it would also acquire BP’s 50 percent share in the South Brooklyn Marine Terminal lease, according to the latest transaction update on Equinor’s website. The lease involves a terminal conversion project that was intended to serve as an interconnection station for Beacon Wind and Empire Wind, as agreed on by the two companies and the state of New York in 2022. “The expected total capital investments, including fees for the use of the South Brooklyn Marine Terminal, are approximately $5 billion including the effect of expected future tax credits (ITCs)”, said the statement on Equinor’s website announcing financial close. Equinor did not disclose its backers, only saying, “The final group of lenders includes some of the most experienced lenders in the sector along with many of Equinor’s relationship banks”. “Empire Wind 1 will be the first offshore wind project to connect into the New York City grid”, the statement added. “The redevelopment of the South Brooklyn Marine Terminal and construction of Empire Wind 1 will create more than 1,000 union jobs in the construction phase”, Equinor said. On February 22, 2024, the Bureau of Ocean Energy Management (BOEM) announced

USA Crude Oil Stocks Drop Week on Week

U.S. commercial crude oil inventories, excluding those in the Strategic Petroleum Reserve (SPR), decreased by 1.2 million barrels from the week ending December 20 to the week ending December 27, the U.S. Energy Information Administration (EIA) highlighted in its latest weekly petroleum status report, which was released on January 2. Crude oil stocks, excluding the SPR, stood at 415.6 million barrels on December 27, 416.8 million barrels on December 20, and 431.1 million barrels on December 29, 2023, the report revealed. Crude oil in the SPR came in at 393.6 million barrels on December 27, 393.3 million barrels on December 20, and 354.4 million barrels on December 29, 2023, the report showed. Total petroleum stocks – including crude oil, total motor gasoline, fuel ethanol, kerosene type jet fuel, distillate fuel oil, residual fuel oil, propane/propylene, and other oils – stood at 1.623 billion barrels on December 27, the report revealed. This figure was up 9.6 million barrels week on week and up 17.8 million barrels year on year, the report outlined. “At 415.6 million barrels, U.S. crude oil inventories are about five percent below the five year average for this time of year,” the EIA said in its latest report. “Total motor gasoline inventories increased by 7.7 million barrels from last week and are slightly below the five year average for this time of year. Finished gasoline inventories decreased last week while blending components inventories increased last week,” it added. “Distillate fuel inventories increased by 6.4 million barrels last week and are about six percent below the five year average for this time of year. Propane/propylene inventories decreased by 0.6 million barrels from last week and are 10 percent above the five year average for this time of year,” it went on to state. In the report, the EIA noted

More telecom firms were breached by Chinese hackers than previously reported

Broader implications for US infrastructure

The Salt Typhoon revelations follow a broader pattern of state-sponsored cyber operations targeting the US technology ecosystem. The telecom sector, serving as a backbone for industries including finance, energy, and transportation, remains particularly vulnerable to such attacks. While Chinese officials have dismissed the accusations as disinformation, the recurring breaches underscore the pressing need for international collaboration and policy enforcement to deter future attacks. The Salt Typhoon campaign has uncovered alarming gaps in the cybersecurity of US telecommunications firms, with breaches now extending to over a dozen networks. Federal agencies and private firms must act swiftly to mitigate risks as adversaries continue to evolve their attack strategies. Strengthening oversight, fostering industry-wide collaboration, and investing in advanced defense mechanisms are essential steps toward safeguarding national security and public trust.

There is no nature anymore

When people talk about “nature,” they’re generally talking about things that aren’t made by human beings. Rocks. Reefs. Red wolves. But while there is plenty of God’s creation to go around, it is hard to think of anything on Earth that human hands haven’t affected. In the Brazilian rainforest, scientists have found microplastics in the bellies of animals ranging from red howler monkeys to manatees. In remotest Yakutia, where much of the earth remains untrodden by human feet, the carbon in the sky above melts the permafrost below. In the Arctic Ocean, artificial light from ship traffic—on the rise as the polar ice cap melts away—now disrupts the nightly journey of zooplankton to the ocean surface, one of the largest animal migrations on the planet. The remote mountain lakes of the Alps are contaminated with all kinds of synthetic chemicals. Polar bears are full of flame retardants. Cesium-137, fallout from nuclear bomb explosions, lightly rimes the entire planet. These examples are mostly pollution—nuclear, carbon, chemical, light—but I raise them not to highlight the ways human industry and technology degrade the environment but to note how the things humans build change it. Nobody really knows what the exact effects of all that will be, but my point is that no part of the globe is free of human fingerprints. We have literally changed the world. We’ve changed ourselves as well. Humans are especially adept at bending human nature. Everything about us is up for grabs—appearance, health, our very thoughts. Pharmaceuticals, surgeries, vaccines, and hormones give us longer lives, take away our pain, ease our anxiety and depression, make us faster, stronger, more resilient. We’re getting glimpses of technologies that will let us change who our children will become before they’re even born. Electrodes implanted in people’s brains let them control computers and translate thoughts into speech. 
Prosthetics and exoskeletons straight out of comic books restore and enhance physical abilities, while gene-editing technologies like CRISPR are rewriting our very DNA. And meanwhile, people have taken the sum total of all the information we have ever written down and poured it into vast calculating machines in an effort—at least by some—to build an intelligence greater than our own.

So what even is nature, or natural, in this context? Is it “environmentalist,” in the conventional sense, to try to preserve what one could argue no longer exists? Should we employ technology to try to make the world more “natural”? Those questions led us to approach this Nature issue with humility. We try to grapple with them all the time—MIT Technology Review is, after all, a review of how people have altered and built upon nature.

And it’s a place to think about how we might repair it. Take solar geoengineering, for example—a subject we have covered with increasing frequency over the past few years. The basic idea of geoengineering is to find a technological fix for a problem technology caused: Burning petrochemicals to fuel the Industrial Revolution turned Earth’s atmosphere into a heat sink, fundamentally breaking the climate. Some geoengineers think that releasing particulate matter into the stratosphere would reflect sunlight back into space, thus reducing global temperatures. After years of theoretical discussions, some companies have begun to actively experiment with such technologies. This might seem like a great way to restore the world to a more natural state. It’s also fraught with controversy and peril. It could, for example, benefit some nations while harming others. It may give us license to continue burning fossil fuels and releasing greenhouse gases. The list goes on. Nature isn’t easy. In our May/June issue, we have attempted to take a hard look at nature in our unnatural world. We have stories about birds that can’t sing, wolves that aren’t wolves, and grass that isn’t grass. We look for the meaning of life under Arctic ice and within ourselves—and in the far future, on a distant world, courtesy of new fiction by the renowned author Jeff VanderMeer. I don’t know if any of that will answer the questions I’ve been asking here—but we can’t help but try. It’s in our nature.

This tool could show how consciousness works

How does the physical matter in our brains translate into thoughts, sensations, and emotions? It’s hard to explore that question without neurosurgery. But in a recent paper, MIT philosopher Matthias Michel, Lincoln Lab researcher Daniel Freeman, and colleagues outline a strategy for doing so with an emerging tool called transcranial focused ultrasound. This noninvasive technology reaches deeper into the brain, with greater resolution, than techniques such as EEG and MRI. It works by sending acoustic waves through the skull to focus on an area of a few millimeters, allowing specific brain structures to be stimulated so the effects can be studied. The researchers lay out an experimental approach that would use the tool to help test two competing conceptions of consciousness. The “cognitivist” concept holds that brain activity generating conscious experience must involve higher-level processes such as reasoning or self-reflection, likely using the frontal cortex. The “non-cognitivist” idea is that specific patterns of neural activity—more localized in subcortical structures or at the back of the cortex—give rise to subjective experiences directly. “This is a tool that’s not just useful for medicine, or even basic science, but could also help address the hard problem of consciousness,” Freeman says. “It can probe where in the brain are the neural circuits that generate a sense of pain, a sense of vision, or even something as complex as human thought.”

Analog computing from waste heat

Heat generated by electronic devices is usually a problem, but a team led by Giuseppe Romano, a research scientist at MIT’s Institute for Soldier Nanotechnologies, has found a way to use it for data processing that doesn’t rely on electricity. In this analog computing method, input data is encoded not as binary 1s and 0s but as a set of temperatures based on the waste heat already present in a device. The flow and distribution of that heat through tiny silicon structures, designed by a physics-based optimization algorithm they developed, forms the basis of the calculation. Then the output is represented by the power collected at the other end. The researchers used these structures to perform a simple form of matrix vector multiplication, the fundamental mathematical technique machine-learning models like large language models use to process information and make predictions. The results were more than 99% accurate in many cases. The researchers still have to overcome many hurdles to scale up this computing method for modern deep-learning models, such as the challenges involved in tiling millions of these structures together. As the matrices become more complicated, the results also become less accurate, especially when there is a large distance between the input and output terminals. But the technique could also have a more immediate use: detecting problematic heat sources and measuring temperature changes in electronics without consuming extra energy. This would also eliminate the need for multiple temperature sensors that can currently take up space on a chip. “Most of the time, when you are performing computations in an electronic device, heat is the waste product,” says Caio Silva, an undergraduate student in the Department of Physics and lead author of a paper on the work. “You often want to get rid of as much heat as you can. But here, we’ve taken the opposite approach by using heat as a form of information itself.”
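For readers unfamiliar with the operation mentioned above, here is a minimal Python sketch of matrix vector multiplication and one possible way to score an approximate result against the exact one. The function names, the sample numbers, and the accuracy metric (one minus the relative error) are illustrative assumptions; the article does not say how the researchers measured accuracy, and nothing here models the thermal hardware itself.

```python
def matvec(matrix, vector):
    """Multiply a matrix (a list of rows) by a vector (a list of numbers)."""
    if any(len(row) != len(vector) for row in matrix):
        raise ValueError("each row must have as many entries as the vector")
    # Each output entry is the dot product of one row with the input vector.
    return [sum(a * x for a, x in zip(row, vector)) for row in matrix]


def relative_accuracy(exact, approx):
    """Hypothetical metric: 1 minus the relative Euclidean error."""
    err = sum((e - a) ** 2 for e, a in zip(exact, approx)) ** 0.5
    norm = sum(e ** 2 for e in exact) ** 0.5
    return 1.0 - err / norm


weights = [[1.0, 2.0], [3.0, 4.0]]   # made-up 2x2 matrix
inputs = [0.5, -1.0]                 # made-up input vector
exact = matvec(weights, inputs)      # [-1.5, -2.5]

# Pretend readout from an imperfect analog device (invented numbers).
analog_readout = [-1.49, -2.51]
print(exact, relative_accuracy(exact, analog_readout))
```

In this toy case the invented readout scores above 0.99 on the assumed metric, which is the kind of figure the phrase "more than 99% accurate" could refer to.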

Early life may have breathed oxygen earlier than believed

Around 2.3 billion years ago, a pivotal period known as the Great Oxidation Event set the evolutionary course for oxygen-breathing life on Earth. But MIT geobiologists and colleagues have found evidence that some early forms of life evolved the ability to use oxygen hundreds of millions of years before that. By mapping enzyme sequences from several thousand modern organisms onto an evolutionary tree of life, the researchers traced the origins of an enzyme that enables organisms to use oxygen to the Mesoarchean period, 3.2 to 2.8 billion years ago. The team’s results may help explain a longstanding puzzle in Earth’s history: Given that the first oxygen-producing microbes likely emerged before the Mesoarchean, why didn’t oxygen build up in the atmosphere until hundreds of millions of years later? Having evolved the key enzyme, organisms living near those microbes, called cyanobacteria, may have gobbled up the small amounts of oxygen they produced. “This does dramatically change the story of aerobic respiration,” says Fatima Husain, SM ’18, PhD ’25, a research scientist in MIT’s Department of Earth, Atmospheric, and Planetary Sciences (EAPS) and a coauthor with Gregory Fournier, an associate professor of geobiology, of a paper on the research. “It shows us how incredibly innovative life is at all periods in Earth’s history.”

Recent books from the MIT community

Priority Technologies: Ensuring US Security and Shared Prosperity
Edited by Elisabeth B. Reynolds, professor of the practice of urban studies and planning and former executive director of the MIT Task Force on the Work of the Future
MIT PRESS, 2026, $24.95

The Shape of Wonder: How Scientists Think, Work, and Live
By Alan Lightman, professor of the practice of the humanities, and Martin Rees
PENGUIN RANDOM HOUSE, 2025, $28

Spheres of Injustice: The Ethical Promise of Minority Presence
By Bruno Perreau, professor of French studies and language
MIT PRESS, 2025, $34

The Analytics Edge in Healthcare
By Dimitris Bertsimas, SM ’87, PhD ’88, professor of management and operations research and associate dean of online education and AI; Agni Orfanoudaki, PhD ’21; and Holly Wiberg
DYNAMIC IDEAS, 2025, $110

The Art of Monetary Policy: Lessons from Sun Tzu for Central Banks
By Kristin J. Forbes, PhD ’98, professor of management and global economics
MIT PRESS, 2026, $30

SuperShifts: Transforming How We Live, Learn, and Work in the Age of Intelligence
By Ja-Naé Duane, academic research fellow at MIT’s Center for Information Systems Research, and Steve Fisher
WILEY, 2025, $28

Send book news to [email protected] or 196 Broadway, 3rd Floor, Cambridge, MA 02139

Get ready for hotter, muggier, stormier summers

A long stretch of humid heat followed by a powerful thunderstorm is a familiar weather pattern in the tropics, but it’s also becoming more common in midlatitude regions such as the US Midwest. A recent study by two MIT scientists identifies a key atmospheric condition that determines how hot, humid, and stormy such a region can get: inversions, in which a layer of warm air settles over cooler air. Inversions were already known to act as an atmospheric blanket that traps pollutants at ground level. Now Funing Li, a postdoc in MIT’s Department of Earth, Atmospheric and Planetary Sciences (EAPS), and Talia Tamarin-Brodsky, an assistant professor of EAPS, have found that they also trap heat and moisture at the surface. The more persistent an inversion, the more heat and humidity a region can accumulate, which can lead to more oppressive, longer-lasting humid heat waves. And when an inversion eventually weakens, intense thunderstorms and heavy rainfall can be the result. In typical conditions, the atmosphere’s layers get colder with altitude, and a heat wave that warms the air at ground level will trigger convection: The warmer, lighter air will rise, prompting colder air to sink. When the warm air hits colder altitudes, it condenses into droplets that fall as rain, often cooling things down. Li and Tamarin-Brodsky found that when warm or light air has settled over colder or heavier ground-level air, more heat and moisture are needed for a given “parcel” of air to build up enough energy to rise through that inversion layer. The upper limit on how hot and humid it can get depends on how stable the inversion is. If a blanket of warm air parks over a region for a long time without moving, it allows more moisture and heat to build up, which also makes the eventual storm more intense when it finally happens.

Inversions often form at night, when surfaces that warmed during the day radiate heat to space so that the air in contact with them becomes cooler and denser than the air above. Or they can form when a shallow layer of cool marine air moves inland and slides beneath warmer air over the land. In some cases, however, persistent inversions can form when air heated over sun-warmed mountains is carried over low-lying regions. In the US, Li says, “the Great Plains and the Midwest have had many inversions historically due to the Rocky Mountains.” But global warming is likely to make the effect more pronounced. “Our analysis shows that the eastern and midwestern regions of the US and the eastern Asian regions may be new hot spots for humid heat,” he says. “As the climate warms, theoretically the atmosphere will be able to hold more moisture,” says Tamarin-Brodsky. And because inversions will likely intensify, “new regions in the midlatitudes could experience moist heat waves that will cause stress that they weren’t used to before.” She adds, “Our theory gives an understanding of the limit for humid heat and severe convection for these communities that will be future heat wave and thunderstorm hot spots.”
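To make the definition of an inversion concrete, the short Python sketch below scans a vertical temperature profile and reports the layers where temperature rises with height instead of falling. The function name and the sample soundings are made up for illustration; this is not the method Li and Tamarin-Brodsky used, only a restatement of "warm air over cooler air" in code.

```python
def find_inversions(profile):
    """Return (base_m, top_m) spans where temperature increases with altitude.

    profile: list of (altitude_m, temperature_c) pairs sorted by altitude.
    """
    layers = []
    start = None
    end = None
    for (z0, t0), (z1, t1) in zip(profile, profile[1:]):
        if t1 > t0:
            # Warmer air sits above cooler air: we are inside an inversion.
            if start is None:
                start = z0
            end = z1
        elif start is not None:
            # Temperature falls again, so the inversion layer has ended.
            layers.append((start, end))
            start = None
    if start is not None:
        layers.append((start, end))
    return layers


# Invented soundings: one with a warm layer aloft, one that cools steadily.
capped = [(0, 30.0), (500, 27.0), (1000, 29.0), (1500, 31.0), (2000, 25.0)]
typical = [(0, 30.0), (1000, 23.0), (2000, 17.0)]

print(find_inversions(capped))   # one layer between 500 m and 1500 m
print(find_inversions(typical))  # no inversion layers
```

A real analysis would also weigh how strong and how persistent each layer is, since the article ties the severity of humid heat to the stability of the inversion, not merely to its presence.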

Microsoft Builds for Two Worlds: Sovereign Cloud and AI Factories