Your Gateway to Power, Energy, Datacenters, Bitcoin and AI

Dive into the latest industry updates, our exclusive Paperboy Newsletter, and curated insights designed to keep you informed. Stay ahead with minimal time spent.

Discover What Matters Most to You

AI

Bitcoin

Datacenter

Energy

Featured Articles

AI Infrastructure Brief: Power, Capital, and the Feeling That Something Is Tightening

It was one of those weeks when the headlines kept coming: deals, campuses, gigawatts, billions. Taken together, they pointed to a quieter signal beneath the noise: the AI infrastructure buildout is accelerating, but the system supporting it is beginning to show its seams. Not cracking, not breaking. But tightening.

Power, Everywhere, All at Once

Start with power, because everything now starts with power. Bloom Energy and Oracle expanded their partnership toward 2.8 gigawatts of deployment – an almost casual number at this point, except it isn’t. It’s the kind of figure that used to define regional grids, now repurposed for compute.

Elsewhere:

And then there was the U.S. Air Force, quietly exploring Alaska bases as potential AI data center sites, because if the grid won’t come to you, you start looking for where it already exists. Even Microsoft’s expansion in Cheyenne fits the pattern: go where the power can be made to work.

At the same time, Maine’s legislature passed what’s being described as the first-in-the-nation ban on data centers, a move that may or may not hold, because it’s temporary and includes exemptions. But it doesn’t need to last forever. The signal for 2026 is enough: the social license layer is no longer hypothetical.

Capital Is Still Flowing But It’s Wearing a Suit Now

If power is the constraint, capital is still the accelerant, but it is becoming more self-aware:

And then the demand-side gravity:

These are not exploratory partnerships. They are pre-committed consumption curves, locked in ahead of capacity that is still being negotiated, permitted, and in some cases imagined. Capital hasn’t pulled back. But it has started asking quieter questions.

Speed Is the New Differentiator (or the New Risk)

AWS has reportedly launched something called “Project Houdini,” aimed at accelerating data center construction timelines, which

The Download: supercharged scams and studying AI healthcare

This is today’s edition of The Download, our weekday newsletter that provides a daily dose of what’s going on in the world of technology.

We’re in a new era of AI-driven scams

When ChatGPT was released in late 2022, it showed how easily generative AI could create human-like text. This quickly caught the eye of cybercriminals, who began using LLMs to compose malicious emails. Since then, they’ve adopted AI for everything from turbocharged phishing and hyperrealistic deepfakes to automated vulnerability scans.

Many organizations are now struggling to cope with the sheer volume of cyberattacks. AI is making them faster, cheaper, and easier to carry out, a problem set to worsen as more cybercriminals adopt these tools and their capabilities improve.

Read the full story on how AI is reshaping cybercrime.

– Rhiannon Williams

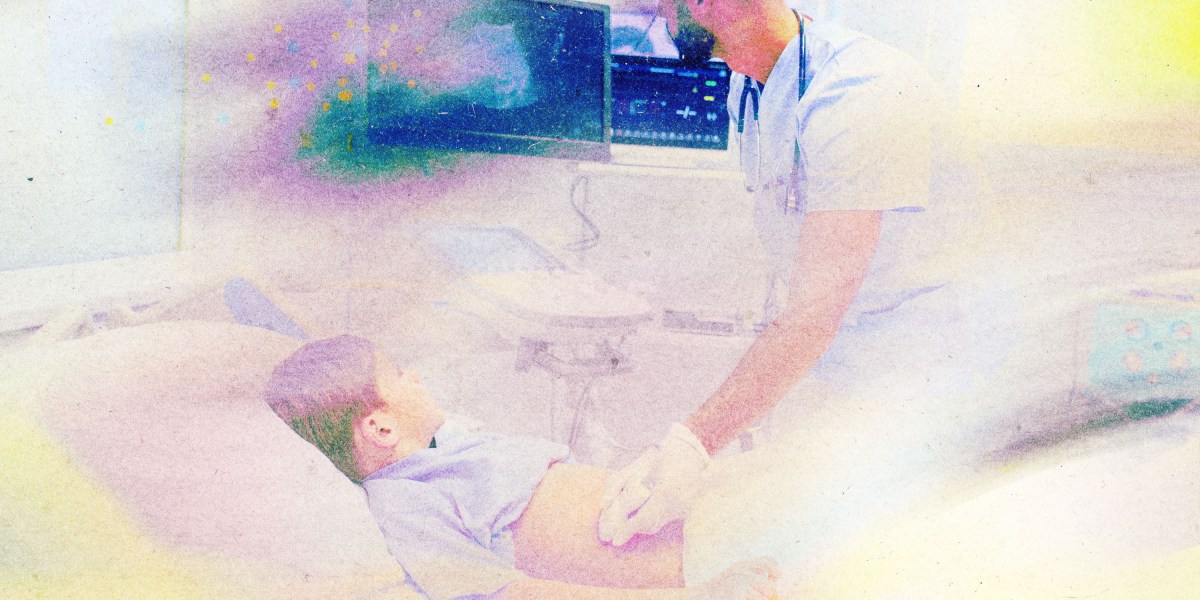

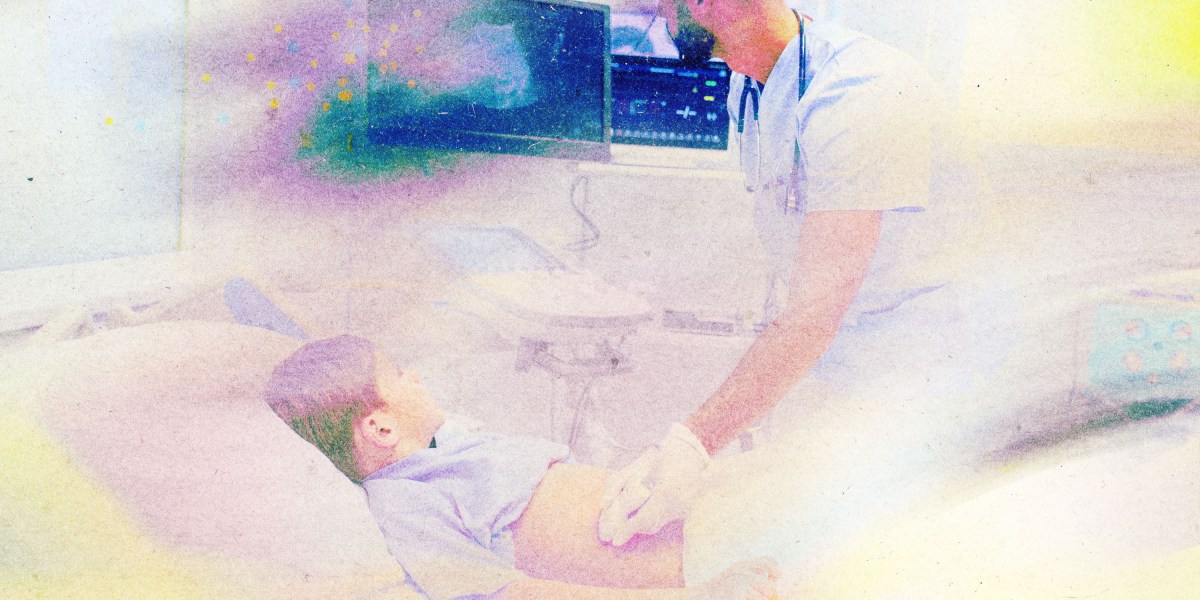

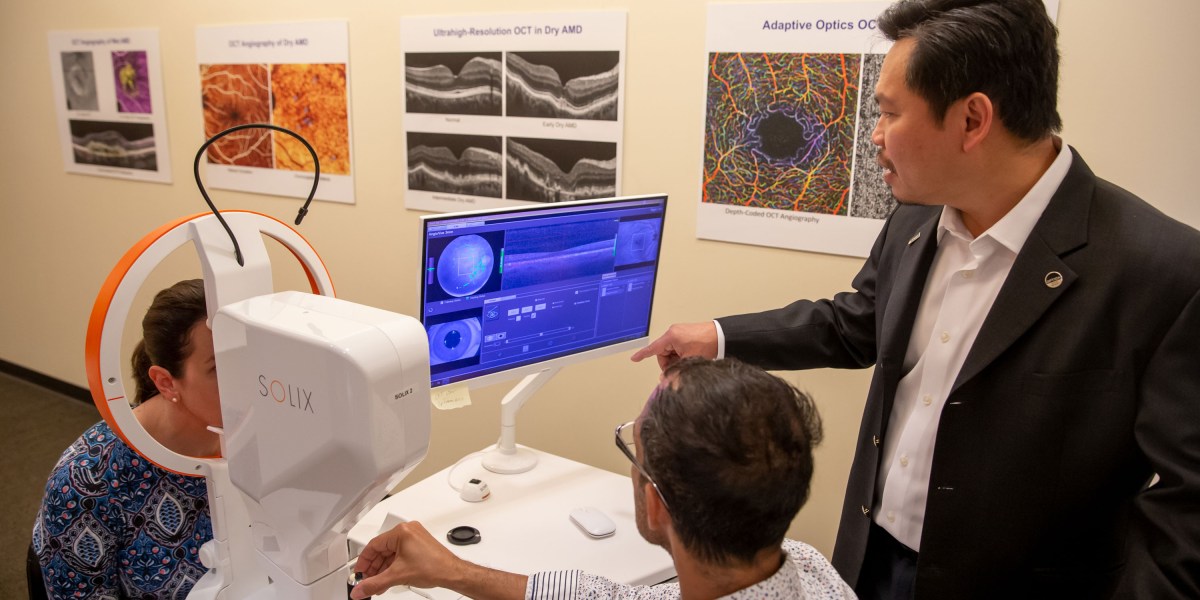

“Supercharged scams” is one of the 10 Things That Matter in AI Right Now, our essential guide to what’s really worth your attention in the field. Subscribers can watch an exclusive roundtable unveiling the technologies and trends on the list, with analysis from MIT Technology Review’s AI reporter Grace Huckins and executive editors Amy Nordrum and Niall Firth.

Healthcare AI is here. We don’t know if it actually helps patients.

Doctors are using AI to help them with note-taking. AI-based tools are trawling through patient records, flagging people who may require certain support or treatments. They are also used to interpret medical exam results and X-rays.

A growing number of studies suggest that many of these tools can deliver accurate results. But there’s a bigger question here: Does using them actually translate into better health outcomes for patients? We don’t yet have a good answer. Here’s why.

– Jessica Hamzelou

The story is from The Checkup, our weekly newsletter that gives you the latest from the worlds of health and biotech. Sign up to receive it in your inbox every Thursday.

The must-reads

I’ve combed the internet to find you today’s most fun/important/scary/fascinating stories about technology.

1 DeepSeek has unveiled its long-awaited new AI model
The Chinese company has just launched preview versions of DeepSeek-V4. (CNN)
+ It says V4 is the most powerful open-source platform. (Bloomberg $)
+ And rivals top closed-source models from OpenAI and DeepMind. (SCMP)
+ The model is adapted for Huawei chip technology. (Reuters $)

2 More countries are curbing children’s social media access
Norway is set to enforce the latest ban. (Reuters $)
+ The Philippines could follow soon. (Bloomberg $)
+ Americans are pushing to get AI out of schools. (The New Yorker)

3 The US has accused China of mass AI theft as tensions rise
A White House memo claims Chinese firms are exploiting American models. (BBC)
+ Beijing calls the accusations “slander.” (Ars Technica)

4 OpenAI set itself apart from Anthropic by widely releasing its new model
It’s releasing GPT-5.5 to all ChatGPT users, despite cybersecurity concerns. (NYT $)
+ OpenAI says the new model is better at coding and more efficient. (The Verge)

5 Meta is cutting 10% of jobs to offset AI spending
Roughly 8,000 layoffs are set to be announced on May 20. (QZ)
+ Anti-AI protests are growing. (MIT Technology Review)

6 Palantir is facing a backlash from employees
Thanks to its work with ICE and the Trump administration. (Wired $)
+ Surveillance tech is reshaping the fight for privacy. (MIT Technology Review)

7 The era of free access to advanced AI is coming to an end
AI labs are under mounting pressure to start turning profits. (The Verge)

8 Elon Musk’s feud with Sam Altman is heading to court
The case has already revealed several unflattering secrets. (WP $)

9 A new movement is encouraging people to ditch their smartphones for a month
“Month Offline” is like a Dry January for smartphones. (The Atlantic)

10 Spotify has revealed its most-streamed music of the last 20 years
Featuring Taylor Swift, Bad Bunny, and The Weeknd. (Gizmodo)

Quote of the day

“We want a childhood where children get to be children. Play, friendships, and everyday life must not be taken over by algorithms and screens.”

– Norwegian Prime Minister Jonas Gahr Støre announces age restrictions for social media.

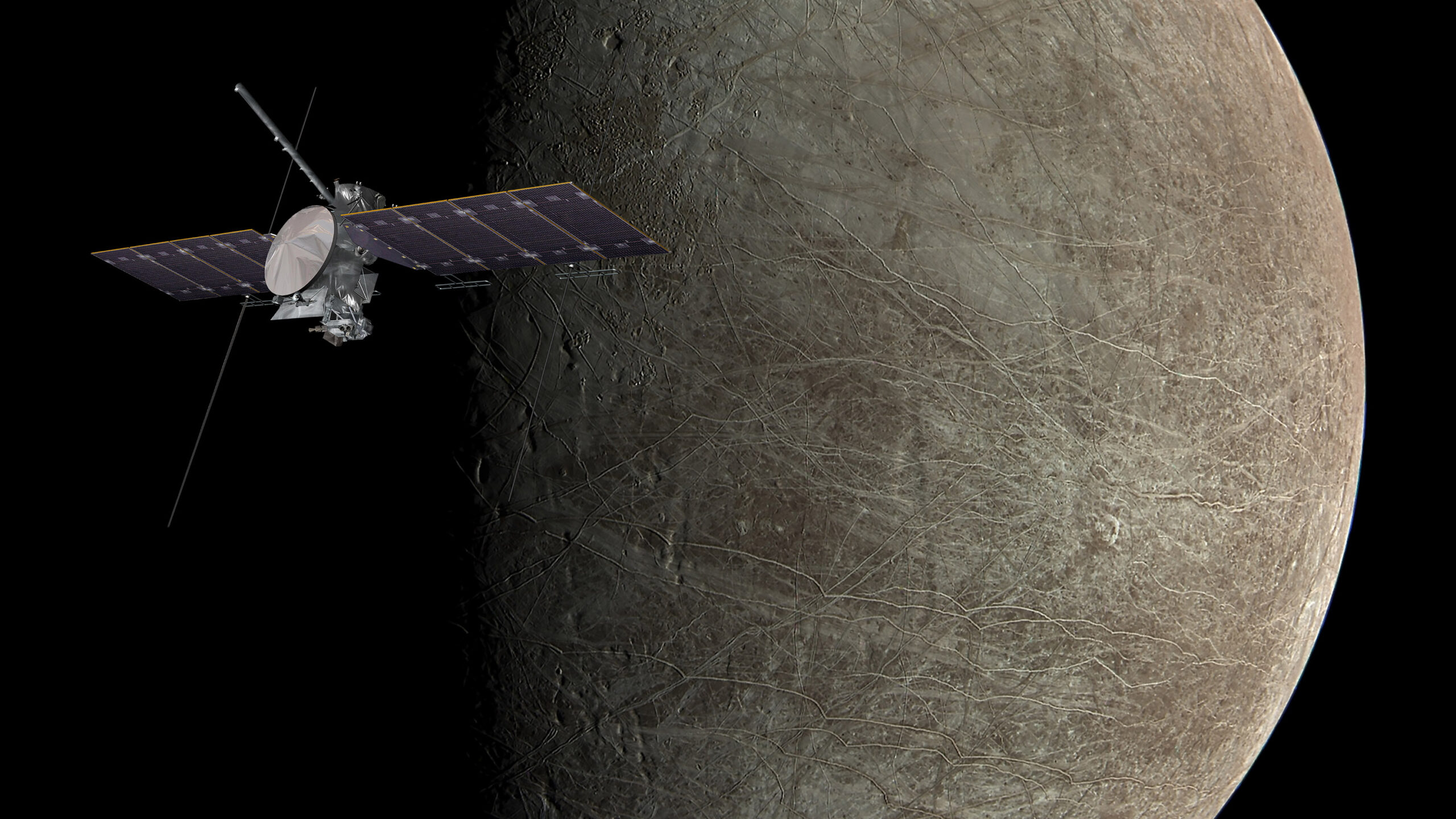

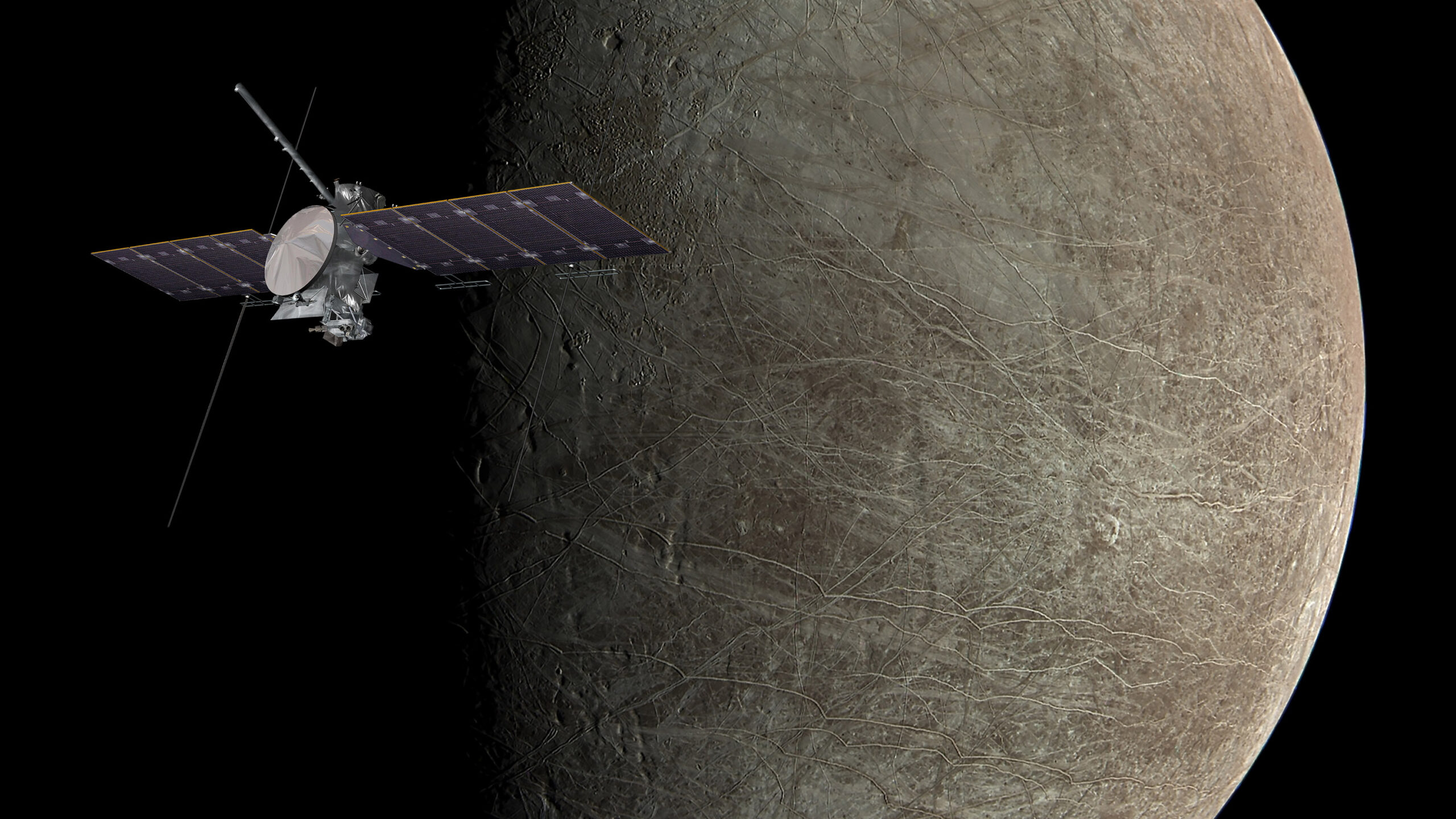

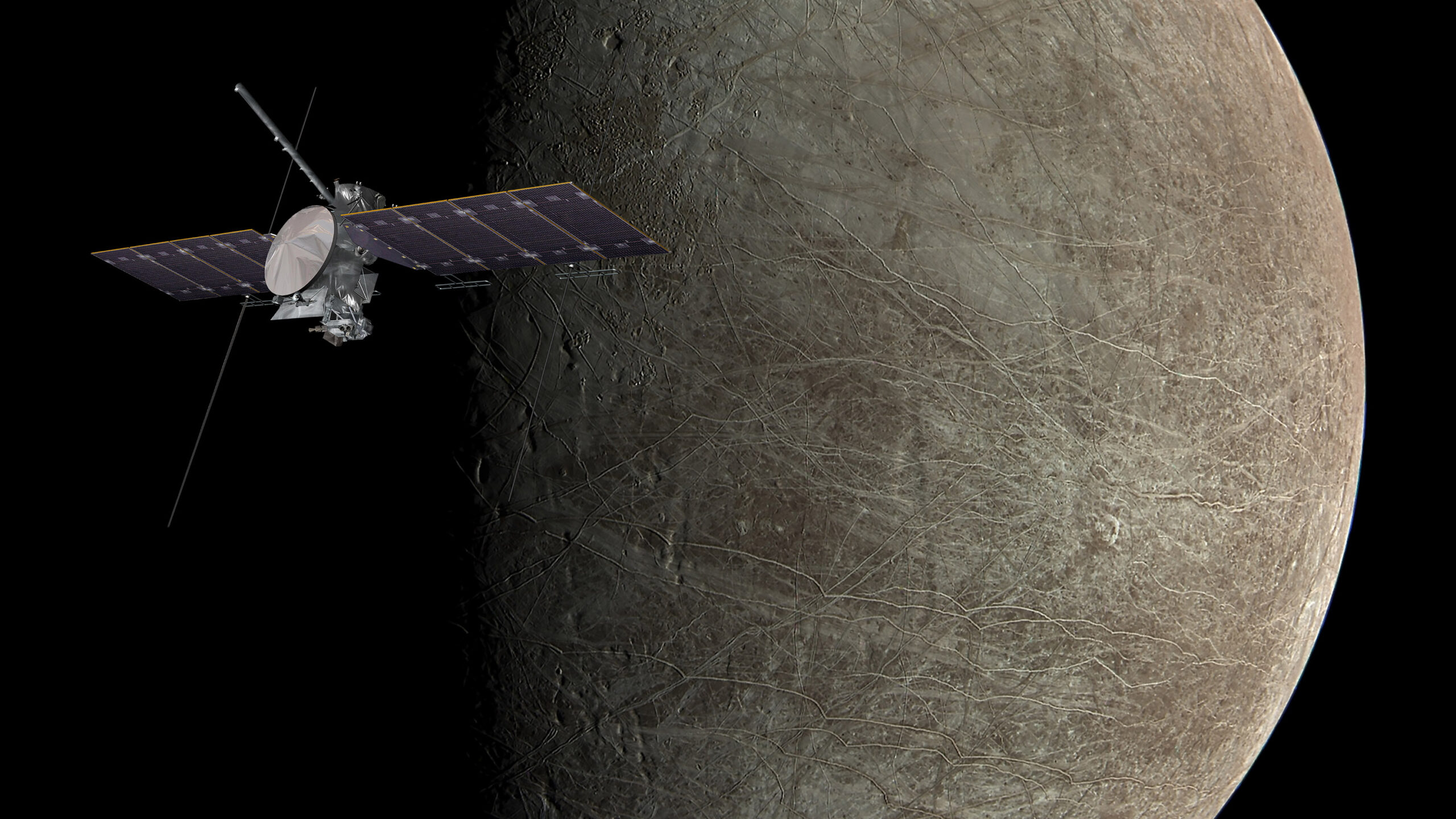

One More Thing

NASA/JPL-CALTECH VIA WIKIMEDIA COMMONS; CRAFT NASA/JPL-CALTECH/SWRI/MSSS; IMAGE PROCESSING: KEVIN M. GILL

The search for extraterrestrial life is targeting Jupiter’s icy moon Europa

As astronomers have discovered more about Europa over the past few decades, Jupiter’s fourth-largest moon has excited planetary scientists interested in the geophysics of alien worlds. All that water and energy, and hints of elements essential for building organic molecules, point to an extraordinary possibility. In the depths of its ocean, or perhaps crowded in subsurface lakes or below icy surface vents, Jupiter’s big, bright moon could host life.

To find further evidence, NASA is now searching for signs of alien existence on Europa. Read the full story on the mission.

Health-care AI is here. We don’t know if it actually helps patients.

I don’t need to tell you that AI is everywhere. Or that it is being used, increasingly, in hospitals. Doctors are using AI to help them with notetaking. AI-based tools are trawling through patient records, flagging people who may require certain support or treatments. They are also used to interpret medical exam results and X-rays. A growing number of studies suggest that many of these tools can deliver accurate results. But there’s a bigger question here: Does using them actually translate into better health outcomes for patients? We don’t yet have a good answer.

That’s what Jenna Wiens, a computer scientist at the University of Michigan, and Anna Goldenberg of the University of Toronto, argue in a paper published in the journal Nature Medicine this week. Wiens tells me she has spent years investigating how AI might benefit health care. For the first decade of her career she tried to pitch the technology to clinicians. Over the last few years, she says, it’s as though “a switch flipped.” Health-care providers not only appear much more interested in the promise of these technologies, they have also begun rapidly deploying them.

The problem is that many providers aren’t rigorously assessing how well they actually work. Take “ambient AI” tools, for example. Also known as AI scribes, they “listen” to conversations between doctors and patients, then transcribe and summarize them. Multiple tools are available, and they are already being widely adopted by health-care providers.

A few months ago, a staffer at a major New York medical center who develops AI tools for doctors told me that, anecdotally, medics are “overjoyed” by the technology: it allows them to focus all their attention on their patients during appointments, and it saves them from a lot of time-consuming paperwork. Early studies support these anecdotes and suggest that the tools can reduce clinician burnout.

That’s all well and good. But what about patient health outcomes? “[Researchers] have evaluated provider or clinician and patient satisfaction, but not really how these tools are affecting clinical decision-making,” says Wiens. “We just don’t know.”

The same holds true for other AI-based technologies used in health-care settings. Some are used to predict patients’ health trajectories, others to recommend treatments. They are designed to make health care more effective and efficient. But even a tool that is “accurate” won’t necessarily improve health outcomes.

AI might speed up the interpretation of a chest X-ray, for example. But how much will a doctor rely on its analysis? How will that tool affect the way a doctor interacts with patients or recommends treatment? And ultimately: What will this mean for those patients?

The answers to those questions might vary between hospitals or departments and could depend on clinical workflows, says Wiens. They might also differ between doctors at various stages of their careers.

Take the AI scribes, as another example. Some research on AI use in education suggests that such tools can impact the way people cognitively process information. Could they affect the way a doctor processes a patient’s information? Will the tools affect the way medical students think about patient data in a way that impacts care? These questions need to be explored, says Wiens. “We like things that save us time, but we have to think about the unintended consequences of this,” she says.

In a study published in January 2025, Paige Nong at the University of Minnesota and her colleagues found that around 65% of US hospitals used AI-assisted predictive tools. Only two-thirds of those hospitals evaluated their accuracy. Even fewer assessed them for bias.

The number of hospitals using these tools has probably increased since then, says Wiens. Those hospitals, or entities other than the companies developing the tools, need to evaluate how much they help in specific settings. There’s a possibility that they could leave patients worse off, although it’s more likely that AI tools just aren’t as beneficial as health-care providers might assume they are, says Wiens.

“I do believe in the potential of AI to really improve clinical care,” says Wiens, who stresses that she doesn’t want to stop the adoption of AI tools in health care. She just wants more information about how they are affecting people. “I have to believe that in the future it’s not all AI or no AI,” she says. “It’s somewhere in between.”

This article first appeared in The Checkup, MIT Technology Review’s weekly biotech newsletter.
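The two figures from the Nong study cited in this article (around 65% of US hospitals using AI-assisted predictive tools, and two-thirds of those evaluating accuracy) can be combined into shares of all US hospitals. The sketch below is only back-of-the-envelope arithmetic on those rounded numbers; the function name `split_adoption` is made up for illustration, and the results inherit the article's approximations.

```python
from fractions import Fraction


def split_adoption(use_share: Fraction, evaluated_share: Fraction):
    """Split all hospitals into (use and evaluate, use without evaluating).

    use_share: share of all hospitals that use the tools.
    evaluated_share: share of *those users* that evaluated accuracy.
    """
    use_and_evaluate = use_share * evaluated_share
    use_without_evaluating = use_share - use_and_evaluate
    return use_and_evaluate, use_without_evaluating


# Rounded figures quoted in the article, not the study's exact values.
evaluated, unevaluated = split_adoption(Fraction(65, 100), Fraction(2, 3))

print(f"use and evaluate accuracy: {float(evaluated):.1%}")    # 43.3%
print(f"use without evaluating:    {float(unevaluated):.1%}")  # 21.7%
```

On these rounded inputs, roughly one in five US hospitals would be using predictive AI tools without having evaluated their accuracy.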

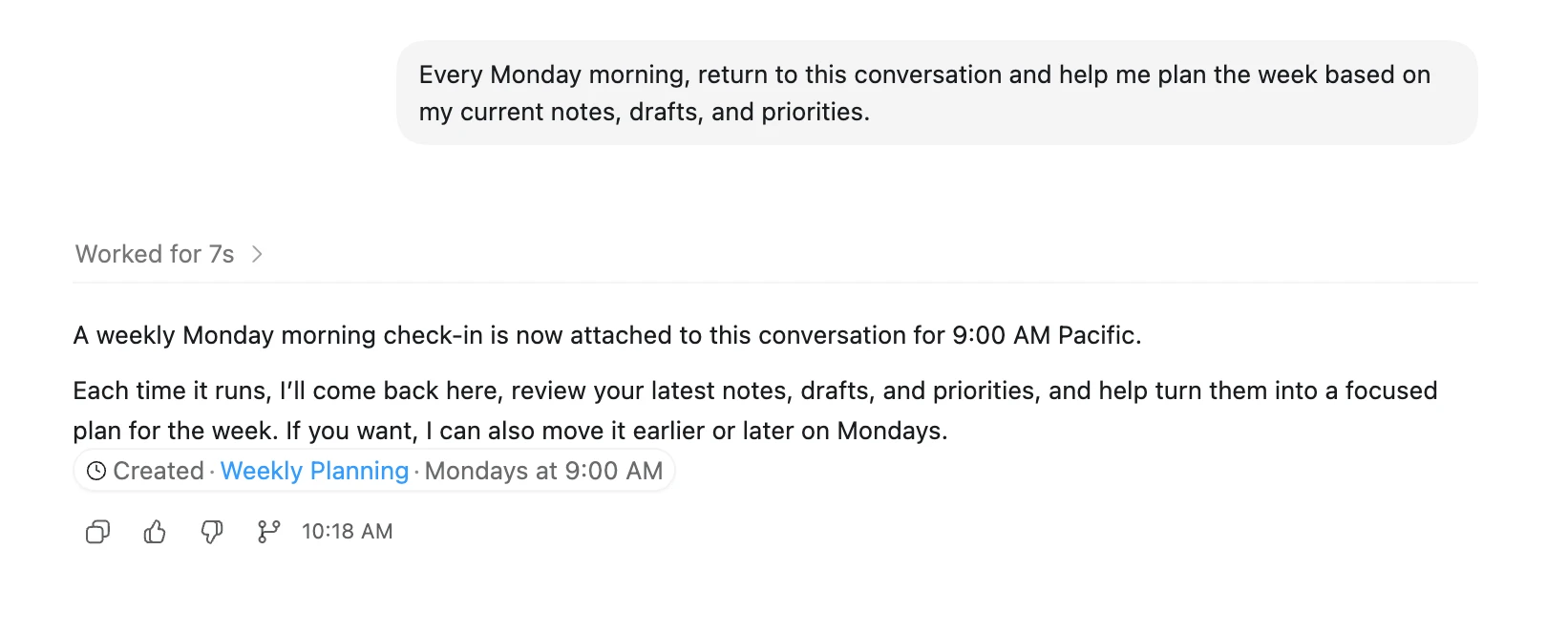

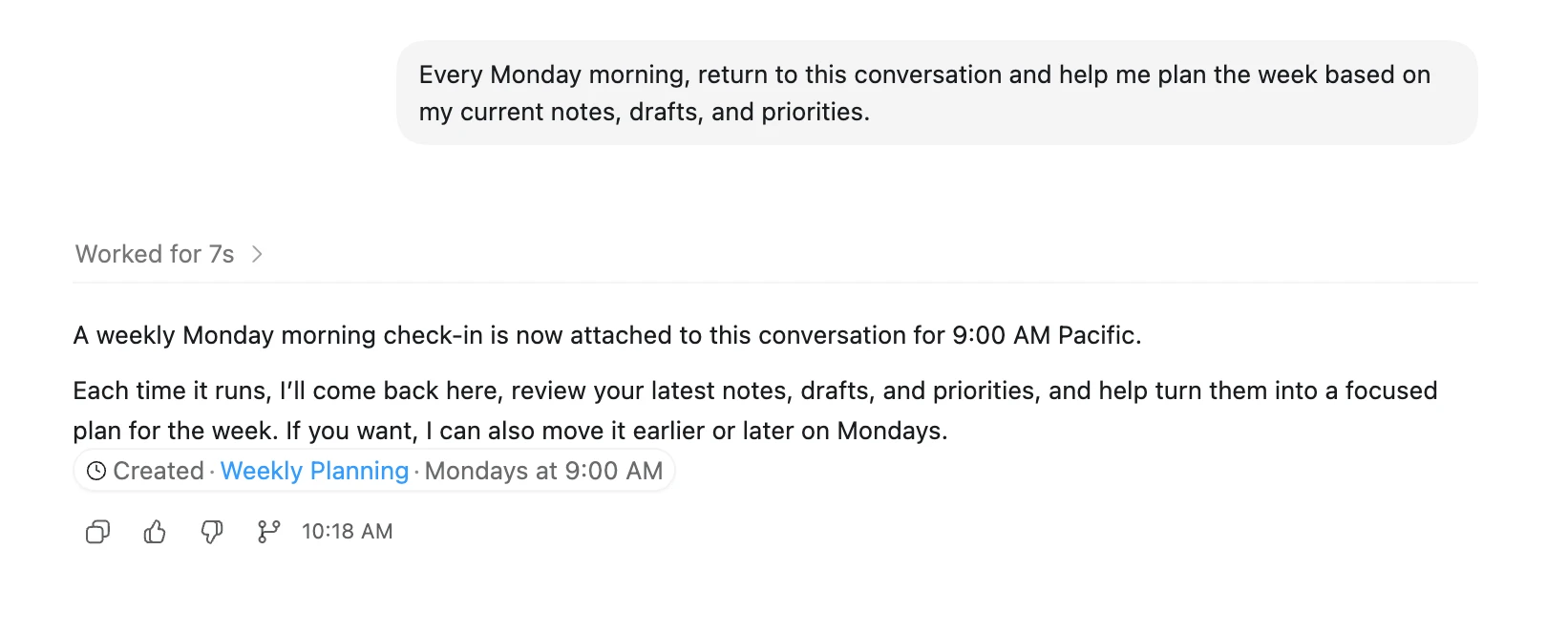

Automations

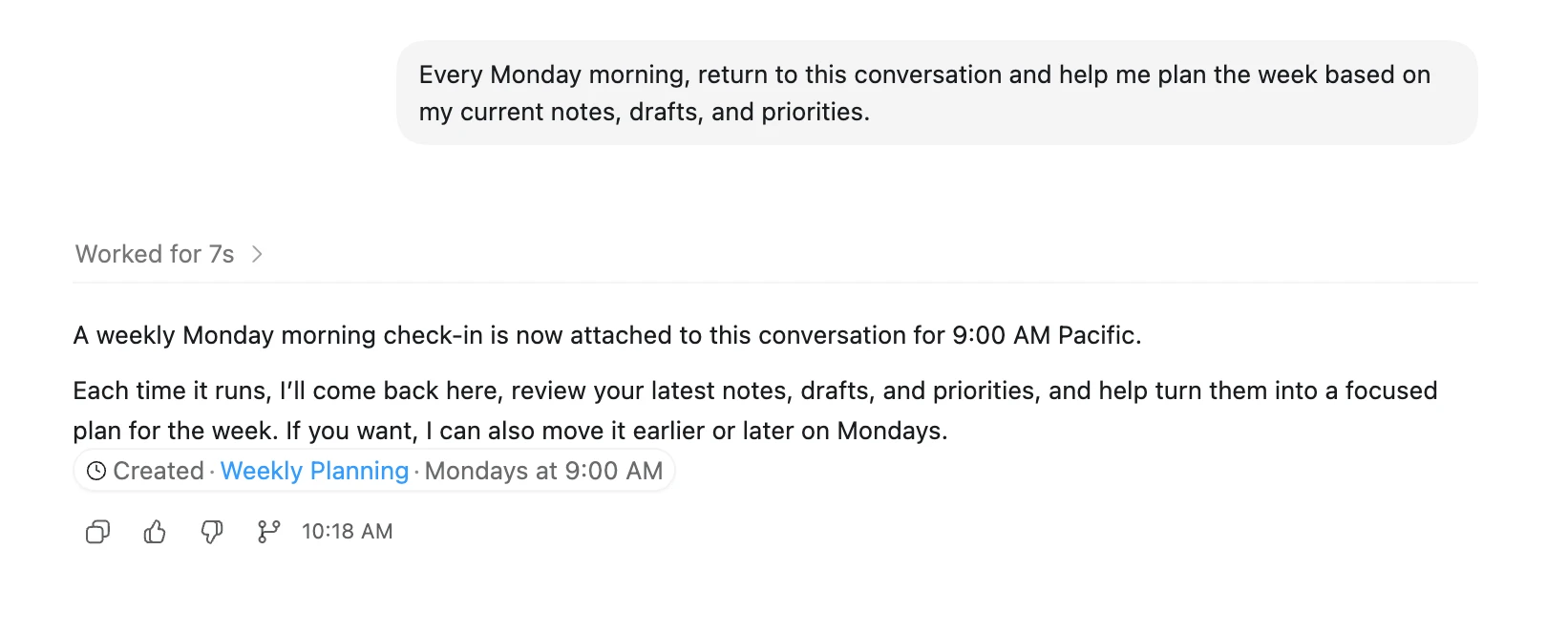

Codex can automatically run tasks on a schedule.

This makes Codex proactive. Instead of waiting for you to come back and ask for an update, Codex can return at the scheduled time, do the work, and surface the result for you to review.

This is useful for recurring work, like preparing for the day, reviewing what changed, checking for updates, summarizing recent activity, or creating a weekly report.

For example, you might use a thread automation to:

- Write a weekly review every Friday
- Create a morning brief from yesterday’s work
- Summarize new files added to a folder
- Clean up a weekly data export
- Check for missing or inconsistent information
- Create a recurring project status update

Some automations can also return to the same conversation and continue from the context already there. That is especially useful when you want Codex to pick up an ongoing task instead of starting fresh each time.

A good automation is specific, repeatable, and easy to review.

For example:

- Every Monday morning, return to this conversation and help me plan the week based on my current notes, drafts, and priorities.
- Every Friday, review my recent work and write a short summary of what I finished this week, what is still open, and what needs attention next.

Note: If you’re running Codex locally, automations work best when your laptop is awake and Codex is running.

Start by chatting back and forth with Codex to zero in on the exact kind of behavior and output you’re looking for. Once Codex understands exactly what you need, turn that task into an automation.
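To make "specific and repeatable" concrete, here is a minimal sketch of how a weekly schedule such as "every Friday" or "every Monday morning" resolves to a next run time. This is not Codex's API or how Codex schedules work internally; `next_weekly_run` and the chosen hours are hypothetical, and the sketch uses only Python's standard `datetime` module.

```python
from datetime import datetime, timedelta


def next_weekly_run(now: datetime, weekday: int, hour: int) -> datetime:
    """Return the first time strictly after `now` that falls on
    `weekday` (Monday=0 ... Sunday=6) at `hour`:00."""
    candidate = now.replace(hour=hour, minute=0, second=0, microsecond=0)
    candidate += timedelta(days=(weekday - now.weekday()) % 7)
    if candidate <= now:
        # Today's slot has already passed, so move to next week.
        candidate += timedelta(days=7)
    return candidate


now = datetime(2025, 1, 1, 12, 0)  # a Wednesday at noon

# "Every Friday, review my recent work" (17:00 is an assumed time)
print(next_weekly_run(now, weekday=4, hour=17))  # 2025-01-03 17:00:00

# "Every Monday morning, help me plan the week" (09:00 is an assumed time)
print(next_weekly_run(now, weekday=0, hour=9))   # 2025-01-06 09:00:00
```

A prompt that pins down both the cadence and the expected output, as in the two examples above, leaves nothing ambiguous about when the task runs or what it should produce.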

Decoupled DiLoCo: A new frontier for resilient, distributed AI training

Decoupled DiLoCo is not only more resilient to failures, but is also practical for executing production-level, fully distributed pre-training. We successfully trained a 12 billion parameter model across four separate U.S. regions using 2-5 Gbps of wide-area networking (a level relatively achievable using existing internet connectivity between datacenter facilities, rather than requiring new custom network infrastructure between facilities). Notably, the system achieved this training result more than 20 times faster than conventional synchronization methods. This is because our system incorporates required communication into longer periods of computation, avoiding the “blocking” bottlenecks where one part of the system must wait for another.

Driving the evolution of AI training infrastructure

At Google, we take a full-stack approach to AI training, spanning hardware, software infrastructure and research. Increasingly, gains are coming from rethinking how these layers fit together.

Decoupled DiLoCo is one example. By enabling training jobs at internet-scale bandwidth, it can tap any unused compute wherever it sits, turning stranded resources into useful capacity.

Beyond efficiency and resilience, this training paradigm also unlocks the ability to mix different hardware generations, such as TPU v6e and TPU v5p, in a single training run. This approach not only extends the useful life of existing hardware, but also increases the total compute available for model training. In our experiments, chips from different generations running at different speeds still matched the ML performance of single-chip-type training runs, ensuring that even older hardware can meaningfully accelerate AI training.

What’s more, because new generations of hardware don’t arrive everywhere all at once, being able to train across generations can alleviate recurring logistical and capacity bottlenecks.

As we push the frontiers of AI infrastructure today, we’re continuing to explore approaches to resilient systems needed to unlock the next generation of AI.
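The bandwidth figures quoted above (a 12 billion parameter model, 2-5 Gbps links) give a rough sense of why blocking on every exchange is costly and why hiding communication inside longer compute periods helps. The sketch below is illustrative arithmetic only, not Google's implementation. The 2 bytes per parameter, the 1-second step time, and the 500 steps between exchanges are all assumptions, and the model ignores compression, protocol overhead, and the actual traffic pattern.

```python
def transfer_seconds(params: float, bytes_per_param: int, gbps: float) -> float:
    """Seconds to send one full copy of the parameters over a `gbps` link."""
    bits = params * bytes_per_param * 8
    return bits / (gbps * 1e9)


def exposed_wait(transfer_s: float, compute_s: float, overlapped: bool) -> float:
    """Time the accelerators sit idle per exchange.

    Blocking: compute stops for the whole transfer.
    Overlapped: only the part of the transfer that outlasts the
    compute period it is hidden behind is exposed.
    """
    if overlapped:
        return max(0.0, transfer_s - compute_s)
    return transfer_s


PARAMS = 12e9          # from the text
BYTES_PER_PARAM = 2    # assumption: 16-bit values
STEP_SECONDS = 1.0     # assumption: hypothetical time per training step
STEPS_BETWEEN = 500    # assumption: hypothetical steps between exchanges

for gbps in (2.0, 5.0):
    t = transfer_seconds(PARAMS, BYTES_PER_PARAM, gbps)
    compute = STEP_SECONDS * STEPS_BETWEEN
    print(f"{gbps} Gbps: one exchange takes {t:.1f} s; "
          f"idle if blocking = {exposed_wait(t, compute, False):.1f} s, "
          f"idle if overlapped = {exposed_wait(t, compute, True):.1f} s")
```

Under these assumptions, a single full exchange takes about 96 seconds at 2 Gbps and about 38 seconds at 5 Gbps, far longer than one training step, so synchronizing every step would leave the hardware mostly waiting. Overlapping the same transfer with a long compute period removes the exposed wait entirely in this simplified model.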

Cisco switch aimed at building practical quantum networks

Cisco today unveiled a prototype switch it says will significantly accelerate the timeline for practical, distributed, quantum-computing-based networks. Cisco’s Universal Quantum Switch is designed to connect quantum systems from different vendors, such as IBM, IonQ, Google and Rigetti, in all major qubit encoding technologies, at room temperature, and over standard telecom fiber, according to Vijoy Pandey, senior vice president and general manager of Outshift, Cisco’s emerging technologies and incubation group. “Today we have quantum computers operating at roughly 100 to 1,000 qubits in size, and from the public roadmaps of leading quantum players, we believe this number [is] going up to 10,000 in the next three years,” Pandey said. “Actual quantum computers will get bigger over time, of course, but it creates a big scalability problem once you start connecting them. What the switch will do is effectively link smaller quantum computers and create a large, distributed quantum computer, allowing faster scaling than building one massive quantum computer alone.”

AI Infrastructure Brief: Power, Capital, and the Feeling That Something Is Tightening

It was one of those weeks where the headlines kept coming in terms of deals, campuses, gigawatts, billions. Taken together they indicated a quieter signal beneath the noise: the AI infrastructure buildout is accelerating, but the system supporting it is beginning to show its seams. Not cracking, not breaking. But tightening. Power, Everywhere, All at Once Start with power, because everything now starts with power. Bloom Energy and Oracle expanded their partnership toward 2.8 gigawatts of deployment – an almost casual number at this point, except it isn’t. It’s the kind of figure that used to define regional grids, now repurposed for compute. Elsewhere: And then there was the U.S. Air Force, quietly exploring Alaska bases as potential AI data center sites; because if the grid won’t come to you, you start looking for where it already exists? Even Microsoft’s expansion in Cheyenne fits the pattern: go where the power can be made to work. At the same time, Maine’s legislature passed what’s being described as the first-in-the-nation ban on data centers; a move that may or may not hold, because it’s temporary and includes exemptions. But doesn’t need to last forever. The signal for 2026 is enough: the social license layer is no longer hypothetical. Capital Is Still Flowing But It’s Wearing a Suit Now If power is the constraint, capital is still the accelerant; but it’s currently trending as more self-aware: And then the demand-side gravity: These are no exploratory partnerships. They are pre-committed consumption curves, locked in ahead of capacity that is still being negotiated, permitted, and in some cases imagined. Capital hasn’t pulled back. But it has started asking quieter questions. Speed Is the New Differentiator (or the New Risk) AWS has reportedly launched something called “Project Houdini,” aimed at accelerating data center construction timelines, which

The Download: supercharged scams and studying AI healthcare

This is today’s edition of The Download, our weekday newsletter that provides a daily dose of what’s going on in the world of technology. We’re in a new era of AI-driven scams When ChatGPT was released in late 2022, it showed how easily generative AI could create human-like text. This quickly caught the eye of cybercriminals, who began using LLMs to compose malicious emails. Since then, they’ve adopted AI for everything from turbocharged phishing and hyperrealistic deepfakes to automated vulnerability scans. Many organizations are now struggling to cope with the sheer volume of cyberattacks. AI is making them faster, cheaper, and easier to carry out, a problem set to worsen as more cybercriminals adopt these tools—and their capabilities improve. Read the full story on how AI is reshaping cybercrime. —Rhiannon Williams

“Supercharged scams” is one of the 10 Things That Matter in AI Right Now, our essential guide to what’s really worth your attention in the field. Subscribers can watch an exclusive roundtable unveiling the technologies and trends on the list, with analysis from MIT Technology Review’s AI reporter Grace Huckins and executive editors Amy Nordrum and Niall Firth. Healthcare AI is here. We don’t know if it actually helps patients. Doctors are using AI to help them with notetaking. AI-based tools are trawling through patient records, flagging people who may require certain support or treatments. They are also used to interpret medical exam results and X-rays.

A growing number of studies suggest that many of these tools can deliver accurate results. But there’s a bigger question here: Does using them actually translate into better health outcomes for patients? We don’t yet have a good answer—here’s why. —Jessica Hamzelou The story is from The Checkup, our weekly newsletter that gives you the latest from the worlds of health and biotech. Sign up to receive it in your inbox every Thursday. The must-reads I’ve combed the internet to find you today’s most fun/important/scary/fascinating stories about technology. 1 DeepSeek has unveiled its long-awaited new AI modelThe Chinese company has just launched preview versions of DeepSeek-V4. (CNN)+It says V4 is the most powerful open-source platform. (Bloomberg $) + And rivals top closed-source models from OpenAI and DeepMind. (SCMP)+ The model is adapted for Huawei chip technology. (Reuters $)2 More countries are curbing children’s social media accessNorway is set to enforce the latest ban. (Reuters $)+ The Philippines could follow soon. (Bloomberg $)+ Americans are pushing to get AI out of schools. (The New Yorker)3 The US has accused China of mass AI theft as tensions riseA White House memo claims Chinese firms are exploiting American models. (BBC)+ Beijing calls the accusations “slander.” (Ars Technica)4 OpenAI set itself apart from Anthropic by widely releasing its new modelIt’s releasing GPT-5.5 to all ChatGPT users, despite cybersecurity concerns. (NYT $)+ OpenAI says the new model is better at coding and more efficient. (The Verge)5 Meta is cutting 10% of jobs to offset AI spendingRoughly 8,000 layoffs are set to be announced on May 20. (QZ)+ Anti-AI protests are growing. (MIT Technology Review)6 Palantir is facing a backlash from employeesThanks to its work with ICE and the Trump administration. (Wired $)+ Surveillance tech is reshaping the fight for privacy. 
(MIT Technology Review)7 The era of free access to advanced AI is coming to an endAI labs are under mounting pressure to start turning profits. (The Verge)8 Elon Musk’s feud with Sam Altman is heading to court The case has already revealed several unflattering secrets. (WP $)9 A new movement is encouraging people to ditch their smartphones for a month“Month Offline” is like a Dry January for smartphones. (The Atlantic)10 Spotify has revealed its most-streamed music of the last 20 yearsFeaturing Taylor Swift, Bad Bunny, and The Weeknd. (Gizmodo) Quote of the day “We want a childhood where children get to be children. Play, friendships, and everyday life must not be taken over by algorithms and screens.” —Norwegian Prime Minister Jonas Gahr Store announces age restrictions for social media.

One More Thing NASA/JPL-CALTECH VIA WIKIMEDIA COMMONS; CRAFT NASA/JPL-CALTECH/SWRI/MSSS; IMAGE PROCESSING: KEVIN M. GILL The search for extraterrestrial life is targeting Jupiter’s icy moon Europa As astronomers have discovered more about Europa over the past few decades, Jupiter’s fourth-largest moon has excited planetary scientists interested in the geophysics of alien worlds. All that water and energy—and hints of elements essential for building organic molecules —point to an extraordinary possibility. In the depths of its ocean, or perhaps crowded in subsurface lakes or below icy surface vents, Jupiter’s big, bright moon could host life. To find further evidence, NASA is now searching for signs of alien existence on Europa. Read the full story on the mission.

Health-care AI is here. We don’t know if it actually helps patients.

I don’t need to tell you that AI is everywhere. Or that it is being used, increasingly, in hospitals. Doctors are using AI to help them with notetaking. AI-based tools are trawling through patient records, flagging people who may require certain support or treatments. They are also used to interpret medical exam results and X-rays. A growing number of studies suggest that many of these tools can deliver accurate results. But there’s a bigger question here: Does using them actually translate into better health outcomes for patients? We don’t yet have a good answer.

That’s what Jenna Wiens, a computer scientist at the University of Michigan, and Anna Goldenberg of the University of Toronto, argue in a paper published in the journal Nature Medicine this week. Wiens tells me she has spent years investigating how AI might benefit health care. For the first decade of her career she tried to pitch the technology to clinicians. Over the last few years, she says, it’s as though “a switch flipped.” Health-care providers not only appear much more interested in the promise of these technologies, they have also begun rapidly deploying them.

The problem is that many providers aren’t rigorously assessing how well they actually work. Take “ambient AI” tools, for example. Also known as AI scribes, they “listen” to conversations between doctors and patients, then transcribe and summarize them. Multiple tools are available, and they are already being widely adopted by health-care providers. A few months ago, a staffer at a major New York medical center who develops AI tools for doctors told me that, anecdotally, medics are “overjoyed” by the technology—it allows them to focus all their attention on their patients during appointments, and it saves them from a lot of time-consuming paperwork. Early studies support these anecdotes and suggest that the tools can reduce clinician burnout. That’s all well and good. But what about patient health outcomes? “[Researchers] have evaluated provider or clinician and patient satisfaction, but not really how these tools are affecting clinical decision-making,” says Wiens. “We just don’t know.” The same holds true for other AI-based technologies used in health-care settings. Some are used to predict patients’ health trajectories, others to recommend treatments. They are designed to make health care more effective and efficient. But even a tool that is “accurate” won’t necessarily improve health outcomes. AI might speed up the interpretation of a chest X-ray, for example. But how much will a doctor rely on its analysis? How will that tool affect the way a doctor interacts with patients or recommends treatment? And ultimately: What will this mean for those patients? The answers to those questions might vary between hospitals or departments and could depend on clinical workflows, says Wiens. They might also differ between doctors at various stages of their careers. Take the AI scribes, as another example. Some research on AI use in education suggests that such tools can impact the way people cognitively process information. 
Could they affect the way a doctor processes a patient’s information? Will the tools affect the way medical students think about patient data in a way that impacts care? These questions need to be explored, says Wiens. “We like things that save us time, but we have to think about the unintended consequences of this,” she says.

In a study published in January 2025, Paige Nong at the University of Minnesota and her colleagues found that around 65% of US hospitals used AI-assisted predictive tools. Only two-thirds of those hospitals evaluated their accuracy. Even fewer assessed them for bias. The number of hospitals using these tools has probably increased since then, says Wiens. Those hospitals, or entities other than the companies developing the tools, need to evaluate how much they help in specific settings. There’s a possibility that they could leave patients worse off, although it’s more likely that AI tools just aren’t as beneficial as health-care providers might assume they are, says Wiens. “I do believe in the potential of AI to really improve clinical care,” says Wiens, who stresses that she doesn’t want to stop the adoption of AI tools in health care. She just wants more information about how they are affecting people. “I have to believe that in the future it’s not all AI or no AI,” she says. “It’s somewhere in between.” This article first appeared in The Checkup, MIT Technology Review’s weekly biotech newsletter. To receive it in your inbox every Thursday, and read articles like this first, sign up here.

Automations

Codex can automatically run tasks on a schedule.This makes Codex proactive. Instead of waiting for you to come back and ask for an update, Codex can return at the scheduled time, do the work, and surface the result for you to review.This is useful for recurring work, like preparing for the day, reviewing what changed, checking for updates, summarizing recent activity, or creating a weekly report.For example, you might use a thread automation to:Write a weekly review every FridayCreate a morning brief from yesterday’s workSummarize new files added to a folderClean up a weekly data exportCheck for missing or inconsistent informationCreate a recurring project status updateSome automations can also return to the same conversation and continue from the context already there. That is especially useful when you want Codex to pick up an ongoing task instead of starting fresh each time.A good automation is specific, repeatable, and easy to review.For example:Try itEvery Monday morning, return to this conversation and help me plan the week based on my current notes, drafts, and priorities.Try itEvery Friday, review my recent work and write a short summary of what I finished this week, what is still open, and what needs attention next.Note: If you’re running Codex locally, automations work best when your laptop is awake and Codex is running.Start by chatting back and forth with Codex to zero in on the exact kind of behavior and output you’re looking for. Once Codex understands exactly what you need, turn that task into an automation.

Decoupled DiLoCo: A new frontier for resilient, distributed AI training

Decoupled DiLoCo is not only more resilient to failures, but is also practical for executing production-level, fully distributed pre-training. We successfully trained a 12 billion parameter model across four separate U.S. regions using 2-5 Gbps of wide-area networking (a level relatively achievable using existing internet connectivity between datacenter facilities, rather than requiring new custom network infrastructure between facilities). Notably, the system achieved this training result more than 20 times faster than conventional synchronization methods. This is because our system incorporates required communication into longer periods of computation, avoiding the “blocking” bottlenecks where one part of the system must wait for another.

Driving the evolution of AI training infrastructure

At Google, we take a full-stack approach to AI training, spanning hardware, software infrastructure and research. Increasingly, gains are coming from rethinking how these layers fit together.

Decoupled DiLoCo is one example. By enabling training jobs at internet-scale bandwidth, it can tap any unused compute wherever it sits, turning stranded resources into useful capacity.

Beyond efficiency and resilience, this training paradigm also unlocks the ability to mix different hardware generations, such as TPU v6e and TPU v5p, in a single training run. This approach not only extends the useful life of existing hardware, but also increases the total compute available for model training.

In our experiments, chips from different generations running at different speeds still matched the ML performance of single-chip-type training runs, ensuring that even older hardware can meaningfully accelerate AI training.

What’s more, because new generations of hardware don’t arrive everywhere all at once, being able to train across generations can alleviate recurring logistical and capacity bottlenecks.

As we push the frontiers of AI infrastructure today, we’re continuing to explore approaches to resilient systems needed to unlock the next generation of AI.
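To make the idea of folding communication into longer stretches of computation more concrete, here is a minimal Python sketch of a generic local-update training loop: each worker takes many gradient steps on its own data, and the workers exchange information only once per round. This is an illustration under stated assumptions, not Google's implementation. The toy one-parameter regression, the four hypothetical shards, the function names, and every hyperparameter are invented for the example, and the loop is synchronous, so it does not model the overlap of communication with computation or the failure handling described above.

```python
"""Toy local-update training: many local steps, one exchange per round."""

# Four hypothetical "regions", each holding its own shard of (x, y) pairs.
# All points satisfy y = 3 * x, so the ideal parameter value is 3.0.
SHARDS = [
    [(0.5, 1.5), (1.0, 3.0)],
    [(1.5, 4.5), (2.0, 6.0)],
    [(1.0, 3.0), (2.0, 6.0)],
    [(0.5, 1.5), (1.5, 4.5)],
]


def local_steps(theta, shard, steps, lr):
    """Run `steps` plain gradient steps on one shard, with no communication."""
    for step in range(steps):
        x, y = shard[step % len(shard)]
        grad = 2.0 * (theta * x - y) * x  # derivative of (theta * x - y) ** 2
        theta -= lr * grad
    return theta


def train(shards, rounds=20, inner_steps=10, inner_lr=0.05, outer_lr=1.0):
    """Alternate long local phases with a single averaging exchange per round."""
    theta = 0.0
    syncs = 0
    for _ in range(rounds):
        # Every worker starts from the shared value and trains independently.
        local = [local_steps(theta, shard, inner_steps, inner_lr) for shard in shards]
        # The only exchange in the round: average how far each worker moved.
        delta = sum(theta - t for t in local) / len(local)
        theta -= outer_lr * delta
        syncs += 1
    return theta, syncs


if __name__ == "__main__":
    theta, syncs = train(SHARDS)
    print(f"theta={theta:.6f} after {syncs} exchanges")
```

With these defaults each worker performs 200 gradient steps but takes part in only 20 exchanges; a scheme that synchronized after every step would need 200. That reduction in exchange frequency is what makes low-bandwidth links between sites plausible in this kind of design.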

Cisco switch aimed at building practical quantum networks

Cisco today unveiled a prototype switch it says will significantly accelerate the timeline for practical, distributed, quantum-computing-based networks. Cisco’s Universal Quantum Switch is designed to connect quantum systems from different vendors, such as IBM, IonQ, Google and Rigetti, in all major qubit encoding technologies, at room temperature, and over standard telecom fiber, according to Vijoy Pandey, senior vice president and general manager of Outshift, Cisco’s emerging technologies and incubation group. “Today we have quantum computers operating at roughly 100 to 1,000 qubits in size, and from the public roadmaps of leading quantum players, we believe this number [is] going up to 10,000 in the next three years,” Pandey said. “Actual quantum computers will get bigger over time, of course, but it creates a big scalability problem once you start connecting them. What the switch will do is effectively link smaller quantum computers and create a large, distributed quantum computer, allowing faster scaling than building one massive quantum computer alone.”

Fire hits Viva Energy’s Geelong refinery

Viva Energy Group Ltd.’s 120,000-b/d Geelong refinery adjacent to Corio Bay, about 50 km west of Melbourne, in Victoria, Australia, is running at reduced production rates following a fire at the site’s gasoline unit, the company said on Apr. 16. The incident occurred on the evening of Apr. 15 within the refinery’s gasoline complex. Emergency responders from Fire Rescue Victoria attended the site and brought the situation under control. According to Viva Energy, all personnel were accounted for and no injuries were reported. The refinery continues operating at reduced throughput while the extent of damage is assessed. The company said it expects production impacts to be concentrated on gasoline and aviation gasoline production, though a full evaluation will follow once it is safe to access affected units. Viva Energy said there is no immediate disruption to fuel supply, noting it will offset any production shortfall through its established fuel import program.

ULSG project startup

Confirmation of the fire follows startup of the refinery’s ultralow-sulfur gasoline (ULSG) project, which occurred in second-half 2025. The project enables production of gasoline with sulfur content of 10 ppm, aligning with new Australian fuel quality standards that took effect in late 2025. The ULSG upgrade is part of the operator’s broader strategy to modernize the Geelong refinery and support compliance with tightening emissions regulations while maintaining domestic refining capacity.

Gas terminal approval, energy hub plans

Separately, Viva Energy confirmed on Apr. 9 that its proposed LPG import terminal at Geelong received federal environmental approval under Australia’s Environment Protection and Biodiversity Conservation Act on Apr. 1. The approval follows a prior positive environmental assessment by the Victorian government and allows the project to proceed subject to conditions.
The planned terminal, to be located at the refinery’s existing pier infrastructure near Corio Bay, is intended to supply

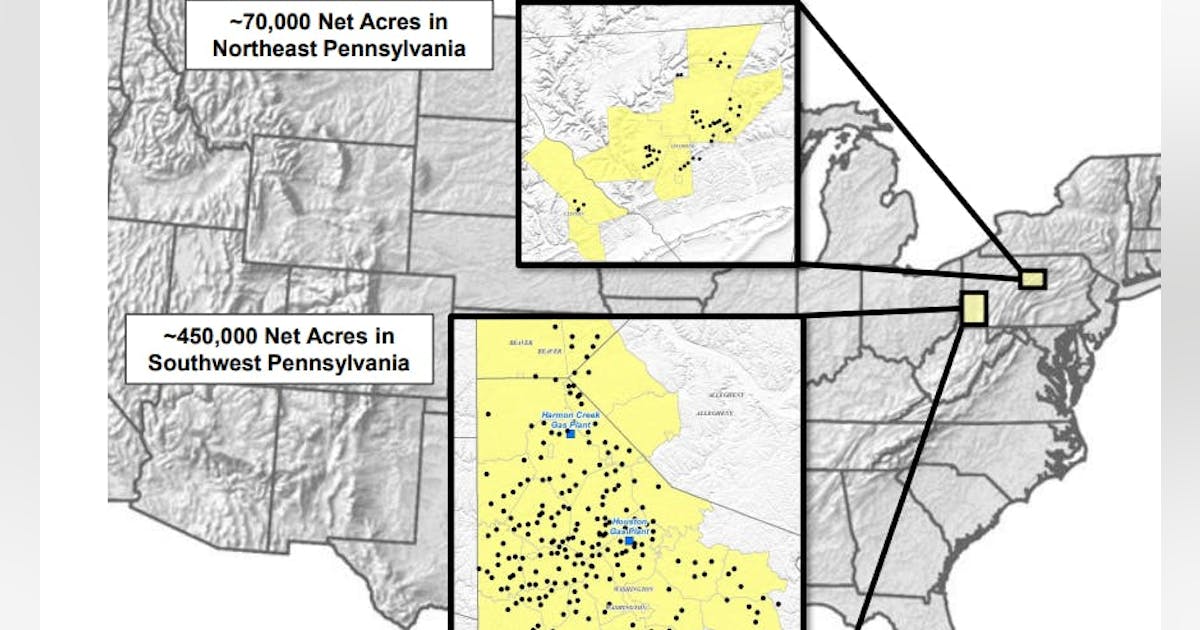

Range Resources sticking to measured 2026 growth plan

By year’s end, Degner told analysts on an Apr. 22 conference call, production from Range’s roughly 52,000 net acres in Pennsylvania should grow to 2.5 bcfed as unfinished wells start to be turned in line. Those additions, outlined by executives 2 months ago, will help Range’s output get to 2.6 bcfed in 2027 but near-term growth beyond that will need a strong market signal. “When we think about what’s beyond 2027, […] it’s going to start with a conversation around what kind of demand and opportunity further materializes,” Degner said. “We think there’s a really strong opportunity for that to take shape. But it’s got to take shape.” Degner and his team are sticking to their full-year production guidance of 2.35-2.40 bcfed and their capital spending forecast of $650-700 million. Should the Range team decide not to invest in more growth over the near term, Degner said the company’s capex budget could shrink to $570-600 million while maintaining production around 2.6 bcfed. Degner said Range executives expect to be able to invest in “another thoughtful wedge of growth” late this decade, when commodity prices are expected to be consistently higher. The first quarter’s rise in prices, first from harsh winter weather and then from the Iran war, boosted Range’s average price per mcfe to $4.84 from $4.02 early last year. Degner said the operator’s natural gas liquids premium over the benchmark Mont Belvieu Index was $4.41, the highest in Range’s history. That helped net income in the first 3 months of the year pop to $342 million from $97 million in first-quarter 2025 as revenues rose to $1.03 billion from $691 million. Shares of Range (Ticker: RRC) were up more than 4% to $43.55 in afternoon trading on Apr. 22. They have now climbed more than 20% over the past 6

Energy Department Awards New Contracts from Strategic Petroleum Reserve, Advancing Emergency Exchange

WASHINGTON—The U.S. Department of Energy’s (DOE) Hydrocarbons and Geothermal Energy Office (HGEO) today announced awards of contracts to exchange 26 million barrels of crude oil from the Strategic Petroleum Reserve (SPR) at the West Hackberry site, marking the next phase of DOE’s execution of the United States’ 172-million-barrel contribution to the International Energy Agency’s collective action to stabilize global oil supply. These awards follow DOE’s recent Request for Proposal (RFP) for this portion of the emergency exchange, with deliveries beginning immediately as the Department continues to move quickly to address short-term supply disruptions and strengthen energy security for the United States. “Through this emergency exchange, the Department is taking swift action to support near‑term supply needs while strengthening the Strategic Petroleum Reserve for the long term,” said Kyle Haustveit, Assistant Secretary of the Hydrocarbons and Geothermal Energy Office. “By returning additional premium barrels at no cost to taxpayers, this exchange reinforces market reliability today and delivers meaningful value to the American people when those barrels are returned.” Under these awards, DOE will move forward with an exchange of 26 million barrels of crude oil, which will be returned with additional premium barrels by next year—supporting energy security and delivering value for the American people at no cost to taxpayers. This action builds on earlier exchange actions, which have already awarded approximately 55 million barrels from the Bayou Choctaw, Bryan Mound, and West Hackberry sites, demonstrating the reserve’s ability to deliver crude efficiently under emergency conditions. To date, more than 10 million barrels have already been delivered to market. The exchange also allows participating companies to take advantage of the President’s limited Jones Act waiver, helping accelerate critical near-term oil flows into the market. 
Companies can begin scheduling deliveries immediately. DOE will continue to evaluate market conditions and operational capacity as it advances

Apply Now: 2026 Waste to Energy and Materials Technical Assistance for State, Local, and Tribal Governments

The U.S. Department of Energy’s Alternative Fuels and Feedstocks Office (AFFO), formerly known as the Bioenergy Technologies Office, and the National Laboratory of the Rockies (NLR) are launching the 2026 Waste to Energy and Materials Technical Assistance Program for state, local, and Tribal governments. The scope of this year’s program has been expanded to include additional municipal solid waste materials such as electronics, industrial wastewater, and other byproducts. U.S. waste streams present significant logistical and economic challenges for states, counties, municipalities, and Tribal governments. However, waste is also a resource that can be used as an unconventional additional source of energy, advanced materials, and critical minerals. This program provides no-cost technical assistance to states, counties, municipalities, and Tribal governments with the most relevant data to guide decision-making—providing local solutions to the various aspects of waste management, taking into consideration current handling practices, costs, and infrastructure. It is designed to help officials evaluate the most sensible end uses for their waste, whether repurposing it for on-site heat and power, upgrading it into transportation fuels, or using it for material and mineral recovery.

Program technical assistance includes:

- Waste resource information
- Infrastructure considerations
- Techno-economic comparison of energy, material, and mineral recovery options
- Evaluation and sharing of case studies (to the extent possible) from similar communities/projects

The 2026 Waste to Energy and Materials Technical Assistance application portal is now open and applications will be accepted through May 30, 2026. For information on applicant eligibility and how to apply, please visit NLR’s technical assistance webpage.

Timeline for Technical Assistance Opportunity

| Date | Action |
| --- | --- |
| April 15, 2026 | Application Portal Opens |
| May 30, 2026 | Application Portal Closes |
| July – August 2026 | Selections Made and Recipients Informed |

Learn more about AFFO-supported waste to energy and materials technical assistance. If you have further questions, please see frequently asked questions or contact the Waste to

Energy Deputy Secretary Danly Commends FERC Action on Large Load Interconnection Reform

WASHINGTON—U.S. Deputy Secretary of Energy James P. Danly issued the following statement after the Federal Energy Regulatory Commission (FERC or Commission) announced it will take action by June 2026 on the large load interconnection proceeding initiated at the direction of U.S. Secretary of Energy Chris Wright: “FERC’s announcement today demonstrates Chairman Swett’s commitment to implement Secretary Wright’s directive that the Commission ensure the timely and orderly integration of large electric loads that deliver on President Trump’s goal of American energy dominance. “I expect that the Commission will act quickly and decisively to improve interconnection processes, support the co-location of load and generation, and accelerate the addition of new generation to ensure that supply is built alongside demand—delivering affordable, reliable, and secure energy for all Americans. “Having served at FERC as commissioner and chairman, I understand FERC’s role in ensuring the reliability of the nation’s bulk power system, and I commend Chairman Swett for focusing on affordability and reliability.”

Petrobras discovers hydrocarbons in Campos basin presalt offshore Brazil

Petrobras has discovered the presence of hydrocarbons in the Campos basin presalt offshore Brazil during exploration in sector SC-AP4, block CM-477. Samples taken from the well, 1-BRSA-1404DC-RJS, will be sent for laboratory analysis with the aim of characterizing the conditions of the reservoirs and fluids found to enable continued evaluation of the area’s potential, the company said in a release Apr. 13. The discovery well was drilled 201 km off the coast of the state of Rio de Janeiro in water depth of 2,984 m. The hydrocarbon-bearing interval was confirmed through electrical profiles, gas evidence, and fluid sampling. Petrobras is the operator of block CM-477 with 70% interest. bp plc holds the remaining 30%.

AI means the end of internet search as we’ve known it

We all know what it means, colloquially, to google something. You pop a few relevant words in a search box and in return get a list of blue links to the most relevant results. Maybe some quick explanations up top. Maybe some maps or sports scores or a video. But fundamentally, it’s just fetching information that’s already out there on the internet and showing it to you, in some sort of structured way. But all that is up for grabs. We are at a new inflection point. The biggest change to the way search engines have delivered information to us since the 1990s is happening right now. No more keyword searching. No more sorting through links to click. Instead, we’re entering an era of conversational search. Which means instead of keywords, you use real questions, expressed in natural language. And instead of links, you’ll increasingly be met with answers, written by generative AI and based on live information from all across the internet, delivered the same way. Of course, Google—the company that has defined search for the past 25 years—is trying to be out front on this. In May of 2023, it began testing AI-generated responses to search queries, using its large language model (LLM) to deliver the kinds of answers you might expect from an expert source or trusted friend. It calls these AI Overviews. Google CEO Sundar Pichai described this to MIT Technology Review as “one of the most positive changes we’ve done to search in a long, long time.”

AI Overviews fundamentally change the kinds of queries Google can address. You can now ask it things like “I’m going to Japan for one week next month. I’ll be staying in Tokyo but would like to take some day trips. Are there any festivals happening nearby? How will the surfing be in Kamakura? Are there any good bands playing?” And you’ll get an answer—not just a link to Reddit, but a built-out answer with current results. More to the point, you can attempt searches that were once pretty much impossible, and get the right answer. You don’t have to be able to articulate what, precisely, you are looking for. You can describe what the bird in your yard looks like, or what the issue seems to be with your refrigerator, or that weird noise your car is making, and get an almost human explanation put together from sources previously siloed across the internet. It’s amazing, and once you start searching that way, it’s addictive.

And it’s not just Google. OpenAI’s ChatGPT now has access to the web, making it far better at finding up-to-date answers to your queries. Microsoft released generative search results for Bing in September. Meta has its own version. The startup Perplexity was doing the same, but with a “move fast, break things” ethos. Literal trillions of dollars are at stake in the outcome as these players jockey to become the next go-to source for information retrieval—the next Google. Not everyone is excited for the change. Publishers are completely freaked out. The shift has heightened fears of a “zero-click” future, where search referral traffic—a mainstay of the web since before Google existed—vanishes from the scene. I got a vision of that future last June, when I got a push alert from the Perplexity app on my phone. Perplexity is a startup trying to reinvent web search. But in addition to delivering deep answers to queries, it will create entire articles about the news of the day, cobbled together by AI from different sources. On that day, it pushed me a story about a new drone company from Eric Schmidt. I recognized the story. Forbes had reported it exclusively, earlier in the week, but it had been locked behind a paywall. The image on Perplexity’s story looked identical to one from Forbes. The language and structure were quite similar. It was effectively the same story, but freely available to anyone on the internet. I texted a friend who had edited the original story to ask if Forbes had a deal with the startup to republish its content. But there was no deal. He was shocked and furious and, well, perplexed. He wasn’t alone. Forbes, the New York Times, and Condé Nast have now all sent the company cease-and-desist orders. News Corp is suing for damages.
It was precisely the nightmare scenario publishers have been so afraid of: The AI was hoovering up their premium content, repackaging it, and promoting it to its audience in a way that didn’t really leave any reason to click through to the original. In fact, on Perplexity’s About page, the first reason it lists to choose the search engine is “Skip the links.” But this isn’t just about publishers (or my own self-interest). People are also worried about what these new LLM-powered results will mean for our fundamental shared reality. Language models have a tendency to make stuff up—they can hallucinate nonsense. Moreover, generative AI can serve up an entirely new answer to the same question every time, or provide different answers to different people on the basis of what it knows about them. It could spell the end of the canonical answer. But make no mistake: This is the future of search. Try it for a bit yourself, and you’ll see.

Sure, we will always want to use search engines to navigate the web and to discover new and interesting sources of information. But the links out are taking a back seat. The way AI can put together a well-reasoned answer to just about any kind of question, drawing on real-time data from across the web, just offers a better experience. That is especially true compared with what web search has become in recent years. If it’s not exactly broken (data shows more people are searching with Google more often than ever before), it’s at the very least increasingly cluttered and daunting to navigate. Who wants to have to speak the language of search engines to find what you need? Who wants to navigate links when you can have straight answers? And maybe: Who wants to have to learn when you can just know? In the beginning there was Archie. It was the first real internet search engine, and it crawled files previously hidden in the darkness of remote servers. It didn’t tell you what was in those files—just their names. It didn’t preview images; it didn’t have a hierarchy of results, or even much of an interface. But it was a start. And it was pretty good. Then Tim Berners-Lee created the World Wide Web, and all manner of web pages sprang forth. The Mosaic home page and the Internet Movie Database and Geocities and the Hampster Dance and web rings and Salon and eBay and CNN and federal government sites and some guy’s home page in Turkey. Until finally, there was too much web to even know where to start. We really needed a better way to navigate our way around, to actually find the things we needed. And so in 1994 Jerry Yang created Yahoo, a hierarchical directory of websites. It quickly became the home page for millions of people. And it was … well, it was okay. TBH, and with the benefit of hindsight, I think we all thought it was much better back then than it actually was. But the web continued to grow and sprawl and expand, every day bringing more information online. 
Rather than just a list of sites by category, we needed something that actually looked at all that content and indexed it. By the late ’90s that meant choosing from a variety of search engines: AltaVista and AlltheWeb and WebCrawler and HotBot. And they were good—a huge improvement. At least at first. But alongside the rise of search engines came the first attempts to exploit their ability to deliver traffic. Precious, valuable traffic, which web publishers rely on to sell ads and retailers use to get eyeballs on their goods. Sometimes this meant stuffing pages with keywords or nonsense text designed purely to push pages higher up in search results. It got pretty bad.

And then came Google. It’s hard to overstate how revolutionary Google was when it launched in 1998. Rather than just scanning the content, it also looked at the sources linking to a website, which helped evaluate its relevance. To oversimplify: The more something was cited elsewhere, the more reliable Google considered it, and the higher it would appear in results. This breakthrough made Google radically better at retrieving relevant results than anything that had come before. It was amazing. For 25 years, Google dominated search. Google was search, for most people. (The extent of that domination is currently the subject of multiple legal probes in the United States and the European Union.)
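The link-counting idea described above is widely known as PageRank. For readers who want to see the mechanics, here is a minimal Python sketch of the textbook power-iteration version. The four-page toy graph, the function name, and the damping value of 0.85 are illustrative assumptions; this is not a description of how Google ranks pages in production.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Estimate page importance from a dict mapping page -> list of pages it links to."""
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page keeps a small baseline score regardless of inbound links.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its current score evenly among the pages it cites.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outbound links spreads its score over all pages.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


if __name__ == "__main__":
    # Hypothetical toy web: pages a, b and d all link to c; c links back to a.
    toy_web = {"a": ["c"], "b": ["c"], "c": ["a"], "d": ["c"]}
    for page, score in sorted(pagerank(toy_web).items(), key=lambda kv: -kv[1]):
        print(page, round(score, 3))
```

In the toy graph, page c ends up on top because three pages cite it, and page a comes second because its single inbound link comes from the highly ranked c. That second effect is the refinement over a raw link count: a citation is worth more when the citing page is itself well cited.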

But Google has long been moving away from simply serving up a series of blue links, notes Pandu Nayak, Google’s chief scientist for search. “It’s not just so-called web results, but there are images and videos, and special things for news. There have been direct answers, dictionary answers, sports, answers that come with Knowledge Graph, things like featured snippets,” he says, rattling off a litany of Google’s steps over the years to answer questions more directly. It’s true: Google has evolved over time, becoming more and more of an answer portal. It has added tools that allow people to just get an answer—the live score to a game, the hours a café is open, or a snippet from the FDA’s website—rather than being pointed to a website where the answer may be. But once you’ve used AI Overviews a bit, you realize they are different. Take featured snippets, the passages Google sometimes chooses to highlight and show atop the results themselves. Those words are quoted directly from an original source. The same is true of knowledge panels, which are generated from information stored in a range of public databases and Google’s Knowledge Graph, its database of trillions of facts about the world. While these can be inaccurate, the information source is knowable (and fixable). It’s in a database. You can look it up. Not anymore: AI Overviews can be entirely new every time, generated on the fly by a language model’s predictive text combined with an index of the web.

“I think it’s an exciting moment where we have obviously indexed the world. We built deep understanding on top of it with Knowledge Graph. We’ve been using LLMs and generative AI to improve our understanding of all that,” Pichai told MIT Technology Review. “But now we are able to generate and compose with that.” The result feels less like querying a database than like asking a very smart, well-read friend. (With the caveat that the friend will sometimes make things up if she does not know the answer.) “[The company’s] mission is organizing the world’s information,” Liz Reid, Google’s head of search, tells me from its headquarters in Mountain View, California. “But actually, for a while what we did was organize web pages. Which is not really the same thing as organizing the world’s information or making it truly useful and accessible to you.” That second concept—accessibility—is what Google is really keying in on with AI Overviews. It’s a sentiment I hear echoed repeatedly while talking to Google execs: They can address more complicated types of queries more efficiently by bringing in a language model to help supply the answers. And they can do it in natural language.

That will become even more important for a future where search goes beyond text queries. For example, Google Lens, which lets people take a picture or upload an image to find out more about something, uses AI-generated answers to tell you what you may be looking at. Google has even showed off the ability to query live video. “We are definitely at the start of a journey where people are going to be able to ask, and get answered, much more complex questions than where we’ve been in the past decade,” says Pichai. There are some real hazards here. First and foremost: Large language models will lie to you. They hallucinate. They get shit wrong. When it doesn’t have an answer, an AI model can blithely and confidently spew back a response anyway. For Google, which has built its reputation over the past 20 years on reliability, this could be a real problem. For the rest of us, it could actually be dangerous. In May 2024, AI Overviews were rolled out to everyone in the US. Things didn’t go well. Google, long the world’s reference desk, told people to eat rocks and to put glue on their pizza. These answers were mostly in response to what the company calls adversarial queries—those designed to trip it up. But still. It didn’t look good. The company quickly went to work fixing the problems—for example, by deprecating so-called user-generated content from sites like Reddit, where some of the weirder answers had come from. Yet while its errors telling people to eat rocks got all the attention, the more pernicious danger might arise when it gets something less obviously wrong. For example, in doing research for this article, I asked Google when MIT Technology Review went online. 
It helpfully responded that “MIT Technology Review launched its online presence in late 2022.” This was clearly wrong to me, but for someone completely unfamiliar with the publication, would the error leap out? I came across several examples like this, both in Google and in OpenAI’s ChatGPT search. Stuff that’s just far enough off the mark not to be immediately seen as wrong. Google is banking that it can continue to improve these results over time by relying on what it knows about quality sources. “When we produce AI Overviews,” says Nayak, “we look for corroborating information from the search results, and the search results themselves are designed to be from these reliable sources whenever possible. These are some of the mechanisms we have in place that assure that if you just consume the AI Overview, and you don’t want to look further … we hope that you will still get a reliable, trustworthy answer.” In the case above, the 2022 answer seemingly came from a reliable source—a story about MIT Technology Review’s email newsletters, which launched in 2022. But the machine fundamentally misunderstood. This is one of the reasons Google uses human beings—raters—to evaluate the results it delivers for accuracy. Ratings don’t correct or control individual AI Overviews; rather, they help train the model to build better answers. But human raters can be fallible. Google is working on that too. “Raters who look at your experiments may not notice the hallucination because it feels sort of natural,” says Nayak. “And so you have to really work at the evaluation setup to make sure that when there is a hallucination, someone’s able to point out and say, That’s a problem.”

The new search

Google has rolled out its AI Overviews to upwards of a billion people in more than 100 countries, but it is facing upstarts with new ideas about how search should work.

Google
Search engine: The search giant has added AI Overviews to search results. These overviews take information from around the web and Google’s Knowledge Graph and use the company’s Gemini language model to create answers to search queries.
What it’s good at: Google’s AI Overviews are great at giving an easily digestible summary in response to even the most complex queries, with sourcing boxes adjacent to the answers. Among the major options, its deep web index feels the most “internety.” But web publishers fear its summaries will give people little reason to click through to the source material.

Perplexity
Search engine: Perplexity is a conversational search engine that uses third-party large language models from OpenAI and Anthropic to answer queries.
What it’s good at: Perplexity is fantastic at putting together deeper dives in response to user queries, producing answers that are like mini white papers on complex topics. It’s also excellent at summing up current events. But it has gotten a bad rep with publishers, who say it plays fast and loose with their content.

ChatGPT
Search engine: While Google brought AI to search, OpenAI brought search to ChatGPT. Queries that the model determines will benefit from a web search automatically trigger one, or users can manually select the option to add a web search.
What it’s good at: Thanks to its ability to preserve context across a conversation, ChatGPT works well for performing searches that benefit from follow-up questions—like planning a vacation through multiple search sessions. OpenAI says users sometimes go “20 turns deep” in researching queries. Of these three, it makes links out to publishers least prominent.

When I talked to Pichai about this, he expressed optimism about the company’s ability to maintain accuracy even with the LLM generating responses. That’s because AI Overviews is based on Google’s flagship large language model, Gemini, but also draws from Knowledge Graph and what it considers reputable sources around the web. “You’re always dealing in percentages. 
What we have done is deliver it at, like, what I would call a few nines of trust and factuality and quality. I’d say 99-point-few-nines. I think that’s the bar we operate at, and it is true with AI Overviews too,” he says. “And so the question is, are we able to do this again at scale? And I think we are.” There’s another hazard as well, though, which is that people ask Google all sorts of weird things. If you want to know someone’s darkest secrets, look at their search history. Sometimes the things people ask Google about are extremely dark. Sometimes they are illegal. Google doesn’t just have to be able to deploy its AI Overviews when an answer can be helpful; it has to be extremely careful not to deploy them when an answer may be harmful. “If you go and say ‘How do I build a bomb?’ it’s fine that there are web results. It’s the open web. You can access anything,” Reid says. “But we do not need to have an AI Overview that tells you how to build a bomb, right? We just don’t think that’s worth it.” But perhaps the greatest hazard—or biggest unknown—is for anyone downstream of a Google search. Take publishers, who for decades now have relied on search queries to send people their way. What reason will people have to click through to the original source, if all the information they seek is right there in the search result? Rand Fishkin, cofounder of the market research firm SparkToro, publishes research on so-called zero-click searches. As Google has moved increasingly into the answer business, the proportion of searches that end without a click has gone up and up. His sense is that AI Overviews are going to explode this trend. “If you are reliant on Google for traffic, and that traffic is what drove your business forward, you are in long- and short-term trouble,” he says. Don’t panic, is Pichai’s message. He argues that even in the age of AI Overviews, people will still want to click through and go deeper for many types of searches. 
“The underlying principle is people are coming looking for information. They’re not looking for Google always to just answer,” he says. “Sometimes yes, but the vast majority of the times, you’re looking at it as a jumping-off point.” Reid, meanwhile, argues that because AI Overviews allow people to ask more complicated questions and drill down further into what they want, they could even be helpful to some types of publishers and small businesses, especially those operating in the niches: “You essentially reach new audiences, because people can now express what they want more specifically, and so somebody who specializes doesn’t have to rank for the generic query.” “I’m going to start with something risky,” Nick Turley tells me from the confines of a Zoom window. Turley is the head of product for ChatGPT, and he’s showing off OpenAI’s new web search tool a few weeks before it launches. “I should normally try this beforehand, but I’m just gonna search for you,” he says. “This is always a high-risk demo to do, because people tend to be particular about what is said about them on the internet.” He types my name into a search field, and the prototype search engine spits back a few sentences, almost like a speaker bio. It correctly identifies me and my current role. It even highlights a particular story I wrote years ago that was probably my best known. In short, it’s the right answer. Phew? A few weeks after our call, OpenAI incorporated search into ChatGPT, supplementing answers from its language model with information from across the web. If the model thinks a response would benefit from up-to-date information, it will automatically run a web search (OpenAI won’t say who its search partners are) and incorporate those responses into its answer, with links out if you want to learn more. You can also opt to manually force it to search the web if it does not do so on its own. 
OpenAI won’t reveal how many people are using its web search, but it says some 250 million people use ChatGPT weekly, all of whom are potentially exposed to it. According to Fishkin, these newer forms of AI-assisted search aren’t yet challenging Google’s search dominance. “It does not appear to be cannibalizing classic forms of web search,” he says. OpenAI insists it’s not really trying to compete on search—although frankly this seems to me like a bit of expectation setting. Rather, it says, web search is mostly a means to get more current information than the data in its training models, which tend to have specific cutoff dates that are often months, or even a year or more, in the past. As a result, while ChatGPT may be great at explaining how a West Coast offense works, it has long been useless at telling you what the latest 49ers score is. No more. “I come at it from the perspective of ‘How can we make ChatGPT able to answer every question that you have? How can we make it more useful to you on a daily basis?’ And that’s where search comes in for us,” Kevin Weil, the chief product officer with OpenAI, tells me. “There’s an incredible amount of content on the web. There are a lot of things happening in real time. You want ChatGPT to be able to use that to improve its answers and to be able to be a better super-assistant for you.” Today ChatGPT is able to generate responses for very current news events, as well as near-real-time information on things like stock prices. And while ChatGPT’s interface has long been, well, boring, search results bring in all sorts of multimedia—images, graphs, even video. It’s a very different experience. 
Weil also argues that ChatGPT has more freedom to innovate and go its own way than competitors like Google—even more than its partner Microsoft does with Bing. Both of those are ad-dependent businesses. OpenAI is not. (At least not yet.) It earns revenue from the developers, businesses, and individuals who use it directly. It’s mostly setting large amounts of money on fire right now—it’s projected to lose $14 billion in 2026, by some reports. But one thing it doesn’t have to worry about is putting ads in its search results as Google does.

“For a while what we did was organize web pages. Which is not really the same thing as organizing the world’s information or making it truly useful and accessible to you,” says Google head of search, Liz Reid. (Photo: Winni Wintermeyer/Redux)

Like Google, ChatGPT is pulling in information from web publishers, summarizing it, and including it in its answers. But it has also struck financial deals with publishers, a payment for providing the information that gets rolled into its results. (MIT Technology Review has been in discussions with OpenAI, Google, Perplexity, and others about publisher deals but has not entered into any agreements. Editorial was neither party to nor informed about the content of those discussions.) But the thing is, for web search to accomplish what OpenAI wants—to be more current than the language model—it also has to bring in information from all sorts of publishers and sources that it doesn’t have deals with. OpenAI’s head of media partnerships, Varun Shetty, told MIT Technology Review that it won’t give preferential treatment to its publishing partners. Instead, OpenAI told me, the model itself finds the most trustworthy and useful source for any given question. And that can get weird too. In that very first example it showed me—when Turley ran that name search—it described a story I wrote years ago for Wired about being hacked. That story remains one of the most widely read I’ve ever written. 
But ChatGPT didn’t link to it. It linked to a short rewrite from The Verge. Admittedly, this was on a prototype version of search, which was, as Turley said, “risky.” When I asked him about it, he couldn’t really explain why the model chose the sources that it did, because the model itself makes that evaluation. The company helps steer it by identifying—sometimes with the help of users—what it considers better answers, but the model actually selects them. “And in many cases, it gets it wrong, which is why we have work to do,” said Turley. “Having a model in the loop is a very, very different mechanism than how a search engine worked in the past.” Indeed! The model, whether it’s OpenAI’s GPT-4o or Google’s Gemini or Anthropic’s Claude, can be very, very good at explaining things. But the rationale behind its explanations, its reasons for selecting a particular source, and even the language it may use in an answer are all pretty mysterious. Sure, a model can explain a great many things, but not when it comes to its own answers. It was almost a decade ago, in 2016, when Pichai wrote that Google was moving from “mobile first” to “AI first”: “But in the next 10 years, we will shift to a world that is AI-first, a world where computing becomes universally available—be it at home, at work, in the car, or on the go—and interacting with all of these surfaces becomes much more natural and intuitive, and above all, more intelligent.” We’re there now—sort of. And it’s a weird place to be. It’s going to get weirder. That’s especially true as these things we now think of as distinct—querying a search engine, prompting a model, looking for a photo we’ve taken, deciding what we want to read or watch or hear, asking for a photo we wish we’d taken, and didn’t, but would still like to see—begin to merge. The search results we see from generative AI are best understood as a waypoint rather than a destination. 
What’s most important may not be search in itself; rather, it’s that search has given AI model developers a path to incorporating real-time information into their inputs and outputs. And that opens up all sorts of possibilities. “A ChatGPT that can understand and access the web won’t just be about summarizing results. It might be about doing things for you. And I think there’s a fairly exciting future there,” says OpenAI’s Weil. “You can imagine having the model book you a flight, or order DoorDash, or just accomplish general tasks for you in the future. It’s just once the model understands how to use the internet, the sky’s the limit.” This is the agentic future we’ve been hearing about for some time now, and the more AI models make use of real-time data from the internet, the closer it gets. Let’s say you have a trip coming up in a few weeks. An agent that can get data from the internet in real time can book your flights and hotel rooms, make dinner reservations, and more, based on what it knows about you and your upcoming travel—all without your having to guide it. Another agent could, say, monitor the sewage output of your home for certain diseases, and order tests and treatments in response. You won’t have to search for that weird noise your car is making, because the agent in your vehicle will already have done it and made an appointment to get the issue fixed. “It’s not always going to be just doing search and giving answers,” says Pichai. “Sometimes it’s going to be actions. Sometimes you’ll be interacting within the real world. So there is a notion of universal assistance through it all.” And the ways these things will be able to deliver answers are evolving rapidly now too. For example, today Google can not only search text, images, and even video; it can create them. Imagine overlaying that ability with search across an array of formats and devices. 
“Show me what a Townsend’s warbler looks like in the tree in front of me.” Or “Use my existing family photos and videos to create a movie trailer of our upcoming vacation to Puerto Rico next year, making sure we visit all the best restaurants and top landmarks.” “We have primarily done it on the input side,” he says, referring to the ways Google can now search for an image or within a video. “But you can imagine it on the output side too.” This is the kind of future Pichai says he is excited to bring online. Google has already shown off a bit of what that might look like with NotebookLM, a tool that lets you upload large amounts of text and have it converted into a chatty podcast. He imagines this type of functionality—the ability to take one type of input and convert it into a variety of outputs—transforming the way we interact with information. In a demonstration of a tool called Project Astra this summer at its developer conference, Google showed one version of this outcome, where cameras and microphones in phones and smart glasses understand the context all around you—online and off, audible and visual—and have the ability to recall and respond in a variety of ways. Astra can, for example, look at a crude drawing of a Formula One race car and not only identify it, but also explain its various parts and their uses. But you can imagine things going a bit further (and they will). Let’s say I want to see a video of how to fix something on my bike. The video doesn’t exist, but the information does. AI-assisted generative search could theoretically find that information somewhere online—in a user manual buried in a company’s website, for example—and create a video to show me exactly how to do what I want, just as it could explain that to me with words today. 
These are the kinds of things that start to happen when you put the entire compendium of human knowledge—knowledge that’s previously been captured in silos of language and format; maps and business registrations and product SKUs; audio and video and databases of numbers and old books and images and, really, anything ever published, ever tracked, ever recorded; things happening right now, everywhere—and introduce a model into all that. A model that maybe can’t understand, precisely, but has the ability to put that information together, rearrange it, and spit it back in a variety of different hopefully helpful ways. Ways that a mere index could not. That’s what we’re on the cusp of, and what we’re starting to see. And as Google rolls this out to a billion people, many of whom will be interacting with a conversational AI for the first time, what will that mean? What will we do differently? It’s all changing so quickly. Hang on, just hang on.

Subsea7 Scores Various Contracts Globally

Subsea 7 S.A. has secured what it calls a “sizeable” contract from Turkish Petroleum Offshore Technology Center AS (TP-OTC) to provide inspection, repair and maintenance (IRM) services for the Sakarya gas field development in the Black Sea. The contract scope includes project management and engineering executed and managed from Subsea7 offices in Istanbul, Türkiye, and Aberdeen, Scotland. The scope also includes the provision of equipment, including two work class remotely operated vehicles, and construction personnel onboard TP-OTC’s light construction vessel Mukavemet, Subsea7 said in a news release. The company defines a sizeable contract as having a value between $50 million and $150 million. Offshore operations will be executed in 2025 and 2026, Subsea7 said. Hani El Kurd, Senior Vice President of UK and Global Inspection, Repair, and Maintenance at Subsea7, said: “We are pleased to have been selected to deliver IRM services for TP-OTC in the Black Sea. This contract demonstrates our strategy to deliver engineering solutions across the full asset lifecycle in close collaboration with our clients. We look forward to continuing to work alongside TP-OTC to optimize gas production from the Sakarya field and strengthen our long-term presence in Türkiye.”

North Sea Project

Subsea7 also announced the award of a “substantial” contract by Inch Cape Offshore Limited to Seaway7, which is part of the Subsea7 Group. The contract is for the transport and installation of pin-pile jacket foundations and transition pieces for the Inch Cape Offshore Wind Farm. The 1.1-gigawatt Inch Cape project offshore site is located in the Scottish North Sea, 9.3 miles (15 kilometers) off the Angus coast, and will comprise 72 wind turbine generators. Seaway7’s scope of work includes the transport and installation of 18 pin-pile jacket foundations and 54 transition pieces with offshore works expected to begin in 2026, according to a separate news

Driving into the future