Your Gateway to Power, Energy, Datacenters, Bitcoin and AI

Dive into the latest industry updates, our exclusive Paperboy Newsletter, and curated insights designed to keep you informed. Stay ahead with minimal time spent.

Discover What Matters Most to You

AI

Bitcoin

Datacenter

Energy

Featured Articles

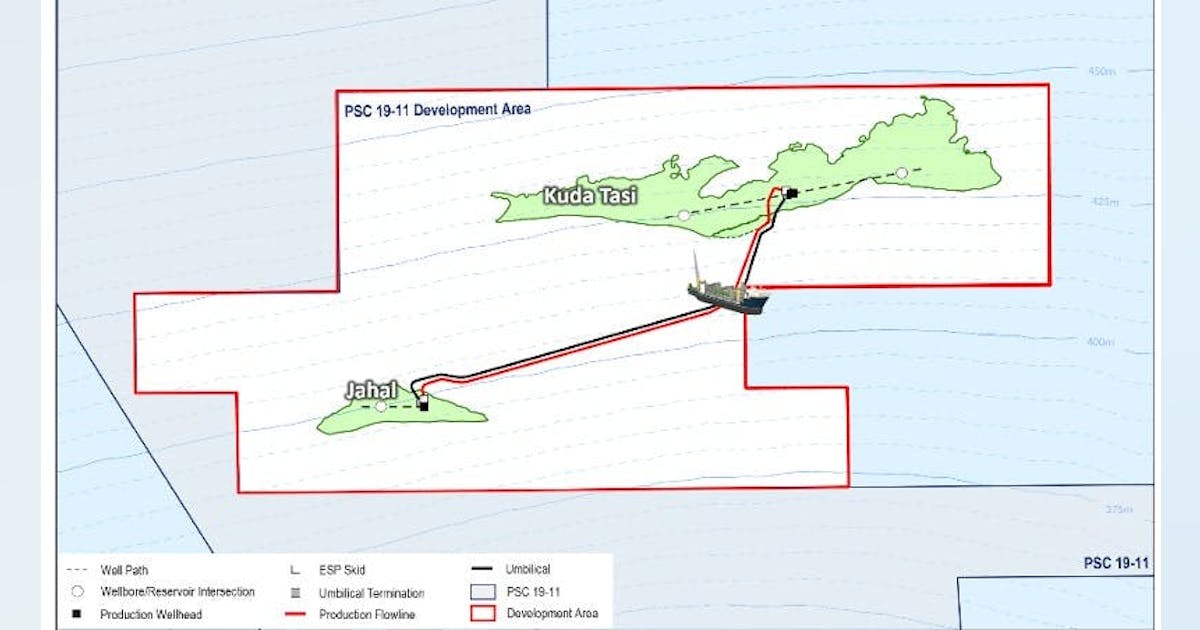

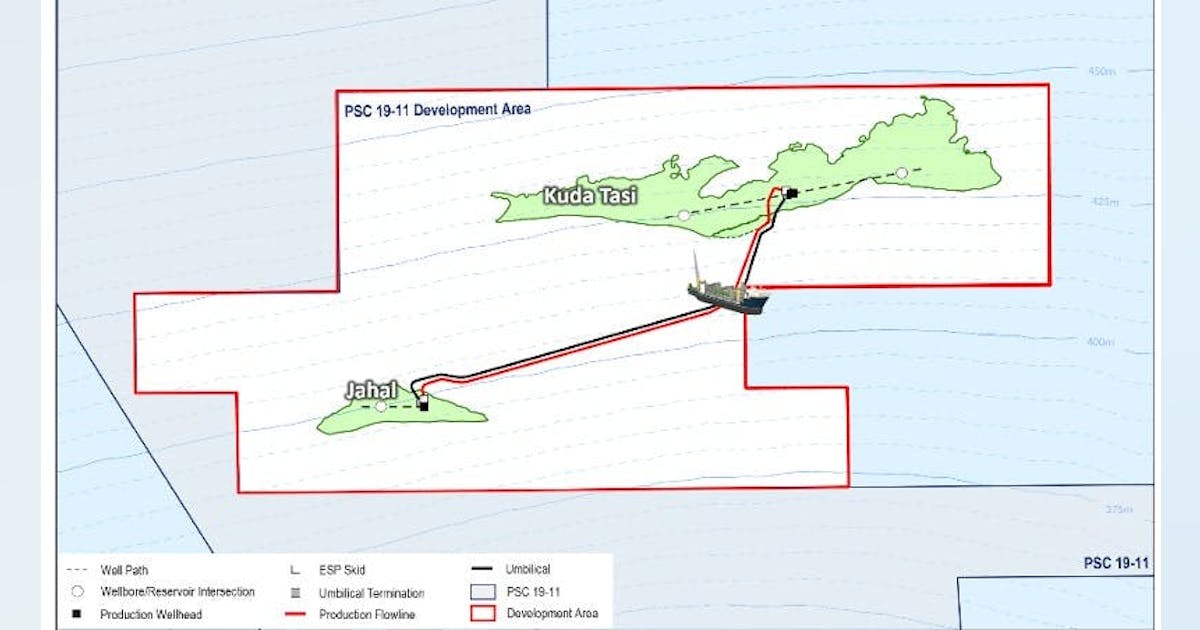

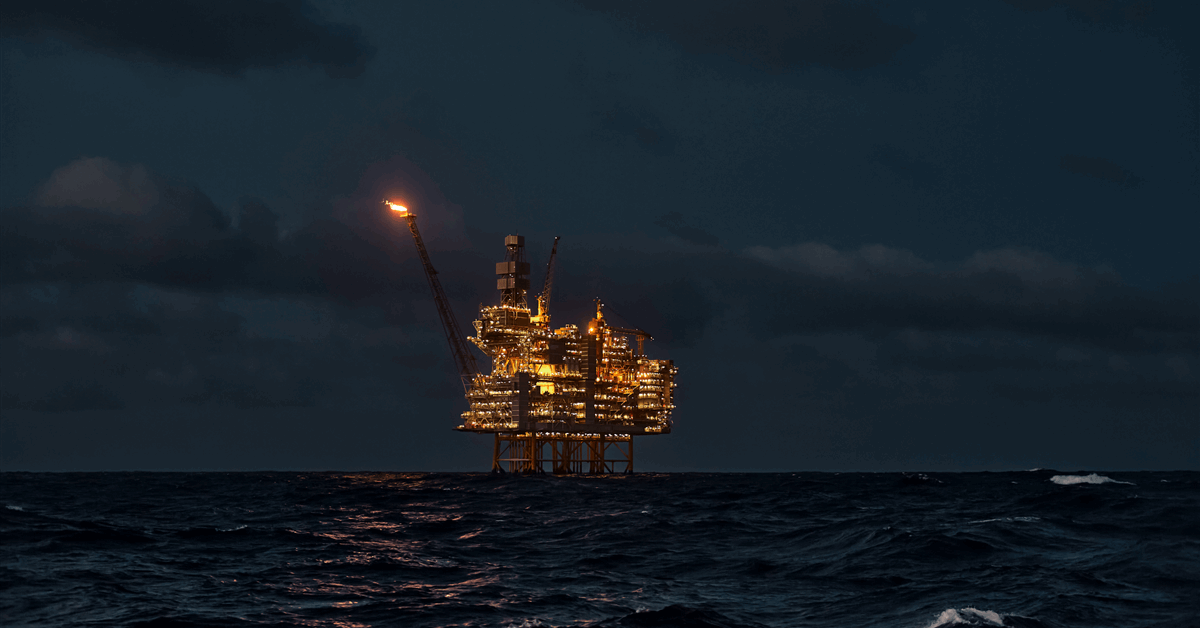

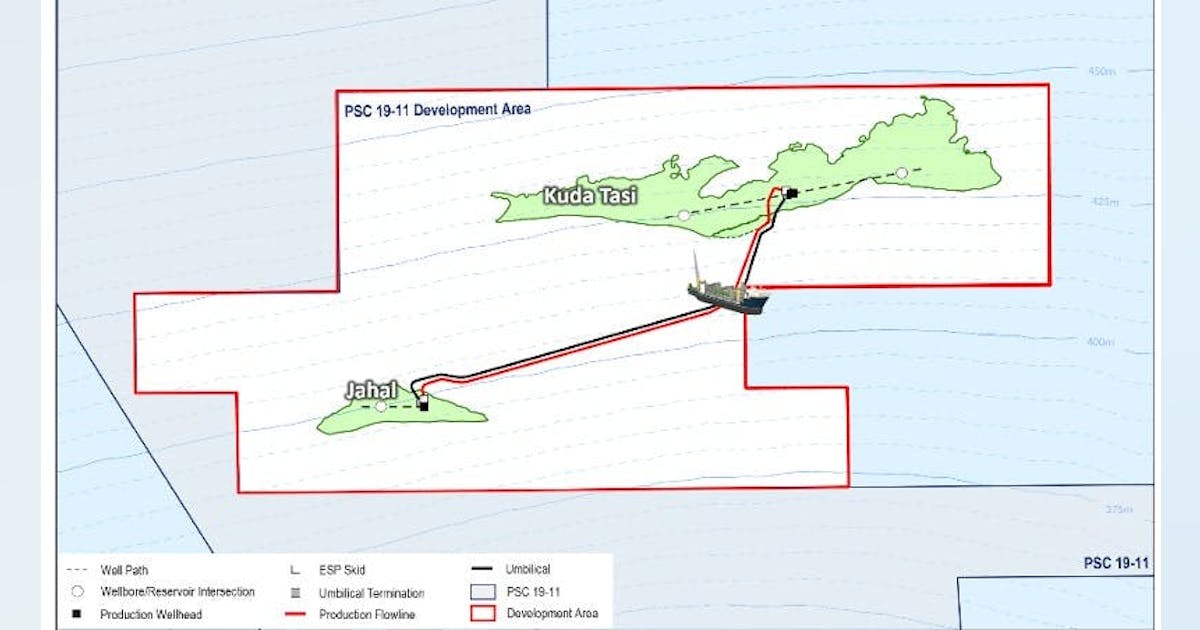

Finder Energy advances KTJ Project with development area approval

Finder Energy Holdings Ltd. received regulatory approval for a development area covering the Kuda Tasi and Jahal oil fields offshore Timor‑Leste, enabling progression toward field development. Autoridade Nacional do Petróleo (ANP) approved an 88‑sq km development area over the Kuda Tasi and Jahal oil fields (KTJ Project) within PSC 19‑11 offshore Timor‑Leste, representing the first stage of the regulatory approvals process for the project. The declaration of the development area is a precursor to the field development plan (FDP), which Finder is currently preparing for submission to ANP in second‑quarter 2026. Upon approval of the FDP, the development area would secure tenure for up to 25 years or until production ceases, allowing Finder to conduct development and production operations within the area, subject to applicable regulatory approvals and conditions. The company said its upside strategy centers on the potential for the Petrojarl I FPSO to serve as a central processing and export hub for future tiebacks of surrounding discoveries, contingent on successful appraisal and/or exploration activities within PSC 19‑11. Alternatively, longer tie‑back distances could be accommodated through a secondary standalone development in the southern portion of the PSC. Finder is continuing technical evaluation of appraisal and exploration opportunities to generate drilling targets. PSC 19‑11 lies within the Laminaria High oil province of Timor‑Leste. The KTJ Project contains an estimated 25 million bbl of gross 2C contingent resources, with identified upside of an additional 23 million bbl gross 2C contingent resources and 116 million bbl gross 2U prospective resources. Finder operates PSC 19‑11 with a 66% working interest.

Libya’s NOC, Chevron sign MoU for technical study for offshore Block NC146

The National Oil Corp. of Libya (NOC) signed a memorandum of understanding (MoU) with Chevron Corp. to conduct a comprehensive technical study of offshore Block NC146.
The block is an unexplored area with “encouraging geological indicator that could lead to significant discoveries, helping to strengthen national reserves,” NOC quoted Chairman Masoud Suleman as saying, adding that the partnership is “a message of confidence in the Libyan investment environment and evidence of the return of major companies to work and explore promising opportunities in our country.” According to the NOC, Libya produces 1.4 million b/d of oil and aims to raise production to 2 million b/d in the coming 3-5 years and then to 3 million b/d, following years of instability that impacted the country’s production. Chevron is working to add to its diverse exploration and production portfolio in the Mediterranean and Africa and continues to assess potential future opportunities in the region. The operator entered Libya earlier this year after it was designated as a winning bidder for Contract Area 106 in the Sirte basin in the 2025 Libyan Bid Round. That followed the January 2026 signing of a

Market Focus: LNG supply shocks expose limited market flexibility

In this Market Focus episode of the Oil & Gas Journal ReEnterprised podcast, Conglin Xu, managing editor, economics, takes a look into the LNG market shock caused by the effective closure of the Strait of Hormuz and the sudden loss of Qatari LNG supply as the Iran war continues. Xu speaks with Edward O’Toole, director of global gas analysis, RBAC Inc., to examine how these disruptions are intensifying global supply constraints at a time when European inventories were already under pressure following a colder-than-average winter and weaker storage levels. Drawing on RBAC’s G2M2 global gas market model, O’Toole outlines disruption scenarios analyzed in the firm’s recent report and explains how current events align with their findings.
With global LNG production already operating near maximum utilization, the market response is being driven by higher prices and reduced consumption. Europe faces sharper price pressure due to storage refill needs, while Asian markets are expected to see greater demand reductions as consumers switch fuels. O’Toole underscores the importance of scenario-based modeling and supply diversification as geopolitical risk exposes structural vulnerabilities in the LNG market—offering insights for stakeholders navigating an increasingly uncertain global

Latin America returns to the energy security conversation at CERAWeek

With geopolitical risk central to conversations about energy, and with long-cycle supply once again in focus, Latin America’s mix of hydrocarbons and export potential drew renewed attention at CERAWeek by S&P Global in Houston.

Argentina, resource story to export platform

Among the regional stories, Argentina stood out: Vaca Muerta was no longer discussed simply as a large unconventional resource, but in terms of whether the country could turn resource quality into sustained export capacity. Country officials talked about scale: more operators, more services, more infrastructure, and a larger industrial base around the unconventional play. Daniel González, Vice Minister of Energy and Mining for Argentina, put it plainly: “The time has come to expand the Vaca Muerta ecosystem.” What is at stake now is not whether the basin works, but whether the country can build enough above-ground capacity and regulatory consistency to keep development moving.

Horacio Marín, chairman and chief executive officer of YPF, offered an expansive version of that argument. He said Argentina’s energy exports could reach $50 billion/year by 2031, backed by roughly $130 billion in cumulative investment in oil, LNG, and transportation infrastructure. He said Argentine crude output could reach 1 million b/d by end-2026. He said Argentina wants to be seen less as a recurrent frontier story and more as a future supplier with scale. “The time to invest in Vaca Muerta is now,” Marín said.

The LNG piece is starting to take shape. Eni, YPF, and XRG signed a joint development agreement in February to move Argentina LNG forward, with a first phase planned at 12 million tonnes/year. Southern Energy (backed by PAE, YPF, Pampa Energía, Harbour Energy, and Golar LNG) holds a long-term agreement with SEFE for 2 million tonnes/year over 8 years. The movement by global standards is early-stage and relatively modest, but it adds to Argentina’s export

Nscale Expands AI Factory Strategy With Power, Platform, and Scale

Nscale has moved quickly from startup to serious contender in the race to build infrastructure for the AI era. Founded in 2024, the company has positioned itself as a vertically integrated “neocloud” operator, combining data center development, GPU fleet ownership, and a software stack designed to deliver large-scale AI compute. That model has helped it attract backing from investors including Nvidia, and in early March 2026 the company raised another $2 billion at a reported $14.6 billion valuation. Reuters has described Nscale’s approach as owning and operating its own data centers, GPUs, and software stack to support major customers including Microsoft and OpenAI. What makes Nscale especially relevant now is that it is no longer content to operate as a cloud intermediary or capacity provider. Over the past year, the company has increasingly framed itself as an AI hyperscaler and AI factory builder, seeking to combine land, power, data center shells, GPU procurement, customer offtake, and software services into a single integrated platform. Its acquisition of American Intelligence & Power Corporation, or AIPCorp, is the clearest signal yet of that shift, bringing energy infrastructure directly into the center of Nscale’s business model. The AIPCorp transaction is significant because it gives Nscale more than additional development capacity. The company said the deal includes the Monarch Compute Campus in Mason County, West Virginia, a site of up to 2,250 acres with a state-certified AI microgrid and a power runway it says can scale beyond 8 gigawatts. Nscale also said the acquisition establishes a new division, Nscale Energy & Power, headquartered in Houston, extending its platform further into power development. That positioning reflects a broader shift in the AI infrastructure market. The central bottleneck is no longer simply access to GPUs. It is the ability to assemble power, cooling, land, permits, data center

Four things we’d need to put data centers in space

MIT Technology Review Explains: Let our writers untangle the complex, messy world of technology to help you understand what’s coming next.

In January, Elon Musk’s SpaceX filed an application with the US Federal Communications Commission to launch up to one million data centers into Earth’s orbit. The goal? To fully unleash the potential of AI without triggering an environmental crisis on Earth. But could it work?

SpaceX is the latest in a string of high-tech companies extolling the potential of orbital computing infrastructure. Last year, Amazon founder Jeff Bezos said that the tech industry will move toward large-scale computing in space. Google has plans to loft data-crunching satellites, aiming to launch a test constellation of 80 as early as next year. And last November Starcloud, a startup based in Washington State, launched a satellite fitted with a high-performance Nvidia H100 GPU, marking the first orbital test of an advanced AI chip. The company envisions orbiting data centers as large as those on Earth by 2030.

Proponents believe that putting data centers in space makes sense. The current AI boom is straining energy grids and adding to the demand for water, which is needed to cool the computers. Communities in the vicinity of large-scale data centers worry about increasing prices for those resources as a result of the growing demand, among other issues.

In space, advocates say, the water and energy problems would be solved. In constantly illuminated sun-synchronous orbits, space-borne data centers would have uninterrupted access to solar power. At the same time, the excess heat they produce would be easily expelled into the cold vacuum of space. And with the cost of space launches decreasing, and mega-rockets such as SpaceX’s Starship promising to push prices even lower, there could be a point at which moving the world’s data centers into space makes sound business sense.

Detractors, on the other hand, tell a different story and point to a variety of technological hurdles, though some say it’s possible they may be surmountable in the not-so-distant future. Here are four of the must-haves we’d need to make space-based data centers a reality.

A way to carry away heat

AI data centers produce a lot of heat. Space might seem like a great place to dispel that heat without using up massive amounts of water. But it’s not so simple. To get the power needed to run 24-7, a space-based data center would have to be in a constantly illuminated orbit, circling the planet from pole to pole, and never hide in Earth’s shadow. And in that orbit, the temperature of the equipment would never drop below 80 °C, which is way too hot for electronics to operate safely in the long term.

Getting the heat out of such a system is surprisingly challenging. “Thermal management and cooling in space is generally a huge problem,” says Lilly Eichinger, CEO of the Austrian space tech startup Satellives. On Earth, heat dissipates mostly through the natural process of convection, which relies on the movement of gases and liquids like air and water. In the vacuum of space, heat has to be removed through the far less efficient process of radiation. Safely removing the heat produced by the computers, as well as what’s absorbed from the sun, requires large radiative surfaces. The bulkier the satellite, the harder it is to send all the heat inside it out into space.

But Yves Durand, former director of technology at the European aerospace giant Thales Alenia Space, says that technology already exists to tackle the problem. The company previously developed a system for large telecommunications satellites that can pipe refrigerant fluid through a network of tubing using a mechanical pump, ultimately transferring heat from within a spacecraft to radiators on the exterior. Durand led a 2024 feasibility study on space-based data centers, which found that although challenges exist, it should be possible for Europe to put gigawatt-scale data centers (on par with the largest Earthbound facilities) into orbit before 2050. These would be considerably larger than those envisioned by SpaceX, featuring solar arrays hundreds of meters in size, larger than the International Space Station.

Computer chips that can withstand a radiation onslaught

The space around Earth is constantly battered by cosmic particles and lashed by solar radiation. On Earth’s surface, humans and their electronic devices are protected from this corrosive soup of charged particles by the planet’s atmosphere and magnetosphere. But the farther away from Earth you venture, the weaker that protection becomes. Studies show that aircraft crews have a higher risk of developing cancer because of their frequent exposure to high radiation at cruising altitude, where the atmosphere is thin and less protective.

Electronics in space are at risk of three types of problems caused by high radiation levels, says Ken Mai, a principal systems scientist in electrical and computer engineering at Carnegie Mellon University. Phenomena known as single-event upsets can cause bit flips and corrupt stored data when charged particles hit chips and memory devices. Over time, electronics in space accumulate damage from ionizing radiation that degrades their performance. And sometimes a charged particle can strike the component in a way that physically displaces atoms on the chip, creating permanent damage, Mai explains.

Traditionally, computers launched to space had to undergo years of testing and were specifically designed to withstand the intense radiation present in Earth’s orbit. These space-hardened electronics are much more expensive, though, and their performance is also years behind the state-of-the-art devices for Earth-based computing. Launching conventional chips is a gamble. But Durand says cutting-edge computer chips use technologies that are by default more resistant to radiation than past systems. And in mid-March, Nvidia touted hardware, including a new GPU, that is “bringing AI compute to orbital data centers.”

Nvidia’s head of edge AI marketing, Chen Su, told MIT Technology Review that “Nvidia systems are inherently commercial off the shelf, with radiation resilience achieved at the system level rather than through radiation‑hardened silicon alone.” He added that satellite makers increase the chips’ resiliency with the help of shielding, advanced software for error detection, and architectures that combine the consumer-grade devices with bespoke, hardened technologies.

Still, Mai says that the data-crunching chips are only one issue. The data centers would also need memory and storage devices, both of which are vulnerable to damage by excessive radiation. And operators would need the ability to swap things out or adapt when issues arise. The feasibility and affordability of using robots or astronaut missions for maintenance is a major question mark hanging over the idea of large-scale orbiting data centers.

“You not only need to throw up a data center to space that meets your current needs; you need redundancy, extra parts, and reconfigurability, so when stuff breaks, you can just change your configuration and continue working,” says Mai. “It’s a very challenging problem because on one hand you have free energy and power in space, but there are a lot of disadvantages. It’s quite possible that those problems will outweigh the advantages that you get from putting a data center into space.”

In addition to the need for regular maintenance, there’s also the potential for catastrophic loss. During periods of intense space weather, satellites can be flooded with enough radiation to kill all their electronics. The sun has just passed the most active phase of its 11-year cycle with relatively little impact on satellites. Still, experts warn that since the space age began, the planet has not experienced the worst the sun is capable of. Many doubt whether the low-cost new space systems that dominate Earth’s orbits today are prepared for that.

A plan to dodge space debris

Both large-scale orbiting data centers such as those envisioned by Thales Alenia Space and the mega-constellations of smaller satellites as proposed by SpaceX give a headache to space sustainability experts. The space around Earth is already quite crowded with satellites. Starlink satellites alone perform hundreds of thousands of collision avoidance maneuvers every year to dodge debris and other spacecraft. The more stuff in space, the higher the likelihood of a devastating collision that would clutter the orbit with thousands of dangerous fragments.

Large structures with hundreds of square meters of solar arrays would quickly suffer damage from small pieces of space debris and meteorites, which would over time degrade the performance of their solar panels and create more debris in orbit. Operating one million satellites in low Earth orbit, the region of space at altitudes of up to 2,000 kilometers, might be impossible to do safely unless all satellites in that area are part of the same network so they can communicate effectively to maneuver around each other, Greg Vialle, the founder of the orbital recycling startup Lunexus Space, told MIT Technology Review.

“You can fit roughly four to five thousand satellites in one orbital shell,” Vialle says. “If you count all the shells in low Earth orbit, you get to a number of around 240,000 satellites maximum.” And spacecraft must be able to pass each other at a safe distance to avoid collisions, he says.

“You also need to be able to get stuff up to higher orbits and back down to de-orbit,” he adds. “So you need to have gaps of at least 10 kilometers between the satellites to do that safely. Mega-constellations like Starlink can be packed more tightly because the satellites communicate with each other. But you can’t have one million satellites around Earth unless it’s a monopoly.”
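Vialle’s two figures imply a number of orbital shells that he does not state outright. A minimal sketch of that arithmetic in Python follows; the function name is illustrative, and the calculation assumes every shell holds the same number of satellites, which the article does not claim.

```python
def implied_shell_count(total_capacity: int, per_shell: int) -> float:
    """Shells implied if every shell holds `per_shell` satellites (an assumption)."""
    return total_capacity / per_shell


# Figures quoted by Vialle: 4,000 to 5,000 satellites per shell, about 240,000 in total.
shells_low = implied_shell_count(240_000, 5_000)
shells_high = implied_shell_count(240_000, 4_000)

# How far a one-million-satellite constellation would exceed the quoted ceiling.
overshoot = 1_000_000 / 240_000

print(f"Implied shells in low Earth orbit: {shells_low:.0f} to {shells_high:.0f}")
print(f"One million satellites is about {overshoot:.1f} times the quoted maximum")
```

On those numbers, the quoted ceiling corresponds to somewhere between 48 and 60 shells, and a one-million-satellite fleet would be roughly four times that ceiling.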

On top of that, Starlink would likely want to regularly upgrade its orbiting data centers with more modern technology. Replacing a million satellites perhaps every five years would mean even more orbital traffic, and it could increase the rate of debris reentry into Earth’s atmosphere from around three or four pieces of junk a day to about one every three minutes, according to a group of astronomers who filed objections against SpaceX’s FCC application. Some scientists are concerned that reentering debris could damage the ozone layer and alter Earth’s thermal balance.

Economical launch and assembly

The longer hardware survives in orbit, the better the return on investment. But for orbital data centers to make economic sense, companies will have to find a relatively cheap way to get that hardware in orbit. SpaceX is betting on its upcoming Starship mega-rocket, which will be able to carry up to six times as much payload as the current workhorse, Falcon 9. The Thales Alenia Space study concluded that if Europe were to build its own orbital data centers, it would have to develop a similarly potent launcher.
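As a rough check on the reentry estimate quoted earlier (a million satellites replaced about every five years), the arithmetic can be sketched in Python. The function name is illustrative, and the sketch assumes that each retired satellite counts as a single reentry and that reentries are spread evenly over time; the astronomers’ own method is not described in the article.

```python
MINUTES_PER_DAY = 24 * 60


def reentry_interval_minutes(fleet_size: int, replacement_years: float,
                             days_per_year: float = 365.25) -> float:
    """Average minutes between reentries if the whole fleet is replaced
    once per `replacement_years`, one reentry per satellite (an assumption)."""
    reentries_per_day = fleet_size / (replacement_years * days_per_year)
    return MINUTES_PER_DAY / reentries_per_day


# Figures from the article: one million satellites, replaced roughly every five years.
interval = reentry_interval_minutes(1_000_000, 5)
per_day = MINUTES_PER_DAY / interval

print(f"About {per_day:.0f} reentries a day, one every {interval:.1f} minutes")
```

Under these assumptions the result is a little over 500 reentries a day, or one every two to three minutes, which is in line with the “about one every three minutes” figure and far above the current three or four a day.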

But launch is only part of the equation. A large-scale orbital data center won’t fit in a rocket—even a mega-rocket. It will need to be assembled in orbit. And that will likely require advanced robotic systems that do not exist yet. Various companies have conducted Earth-based tests with precursors of such systems, but they are still far from real-world use. Durand says that in the short term, smaller-scale data centers are likely to establish themselves as an integral part of the orbital infrastructure, by processing images from Earth-observing satellites directly in space without having to send them to Earth. That would be a huge help for companies selling insights from space, as many of these data sets are extremely large, and competition for opportunities to downlink them to Earth for processing via ground stations is growing. “The good thing with orbital data centers is that you can start with small servers and gradually increase and build up larger data centers,” says Durand. “You can use modularity. You can learn little by little and gradually develop industrial capacity in space. We have all the technology, and the demand for space-based data processing infrastructure is huge, so it makes sense to think about it.” Smaller facilities probably won’t do much to offset the strain that terrestrial data centers are placing on the planet’s water and electricity, though. That vision of the future might take decades to come to fruition, some critics think—if it even gets off the ground at all.


Four things we’d need to put data centers in space

In January, Elon Musk’s SpaceX filed an application with the US Federal Communications Commission to launch up to one million data centers into Earth’s orbit. The goal? To fully unleash the potential of AI without triggering an environmental crisis on Earth. But could it work? SpaceX is the latest in a string of high-tech companies extolling the potential of orbital computing infrastructure. Last year, Amazon founder Jeff Bezos said that the tech industry will move toward large-scale computing in space. Google has plans to loft data-crunching satellites, aiming to launch a test constellation of 80 as early as next year. And last November Starcloud, a startup based in Washington State, launched a satellite fitted with a high-performance Nvidia H100 GPU, marking the first orbital test of an advanced AI chip. The company envisions orbiting data centers as large as those on Earth by 2030. Proponents believe that putting data centers in space makes sense. The current AI boom is straining energy grids and adding to the demand for water, which is needed to cool the computers. Communities in the vicinity of large-scale data centers worry about increasing prices for those resources as a result of the growing demand, among other issues.

In space, advocates say, the water and energy problems would be solved. In constantly illuminated sun-synchronous orbits, space-borne data centers would have uninterrupted access to solar power. At the same time, the excess heat they produce would be easily expelled into the cold vacuum of space. And with the cost of space launches decreasing, and mega-rockets such as SpaceX’s Starship promising to push prices even lower, there could be a point at which moving the world’s data centers into space makes sound business sense. Detractors, on the other hand, tell a different story and point to a variety of technological hurdles, though some say it’s possible they may be surmountable in the not-so-distant future. Here are four of the must-haves we’d need to make space-based data centers a reality.

A way to carry away heat

AI data centers produce a lot of heat. Space might seem like a great place to dispel that heat without using up massive amounts of water. But it’s not so simple. To get the power needed to run 24-7, a space-based data center would have to be in a constantly illuminated orbit, circling the planet from pole to pole, and never hide in Earth’s shadow. And in that orbit, the temperature of the equipment would never drop below 80 °C, which is way too hot for electronics to operate safely in the long term.

Getting the heat out of such a system is surprisingly challenging. “Thermal management and cooling in space is generally a huge problem,” says Lilly Eichinger, CEO of the Austrian space tech startup Satellives. On Earth, heat dissipates mostly through the natural process of convection, which relies on the movement of gases and liquids like air and water. In the vacuum of space, heat has to be removed through the far less efficient process of radiation. Safely removing the heat produced by the computers, as well as what’s absorbed from the sun, requires large radiative surfaces. The bulkier the satellite, the harder it is to send all the heat inside it out into space. But Yves Durand, former director of technology at the European aerospace giant Thales Alenia Space, says that technology already exists to tackle the problem. The company previously developed a system for large telecommunications satellites that can pipe refrigerant fluid through a network of tubing using a mechanical pump, ultimately transferring heat from within a spacecraft to radiators on the exterior. Durand led a 2024 feasibility study on space-based data centers, which found that although challenges exist, it should be possible for Europe to put gigawatt-scale data centers (on par with the largest Earthbound facilities) into orbit before 2050. These would be considerably larger than those envisioned by SpaceX, featuring solar arrays hundreds of meters in size—larger than the International Space Station.

Computer chips that can withstand a radiation onslaught

The space around Earth is constantly battered by cosmic particles and lashed by solar radiation. On Earth’s surface, humans and their electronic devices are protected from this corrosive soup of charged particles by the planet’s atmosphere and magnetosphere. But the farther away from Earth you venture, the weaker that protection becomes. 
Studies show that aircraft crews have a higher risk of developing cancer because of their frequent exposure to high radiation at cruising altitude, where the atmosphere is thin and less protective. Electronics in space are at risk of three types of problems caused by high radiation levels, says Ken Mai, a principal systems scientist in electrical and computer engineering at Carnegie Mellon University. Phenomena known as single-event upsets can cause bit flips and corrupt stored data when charged particles hit chips and memory devices. Over time, electronics in space accumulate damage from ionizing radiation that degrades their performance. And sometimes a charged particle can strike the component in a way that physically displaces atoms on the chip, creating permanent damage, Mai explains. Traditionally, computers launched to space had to undergo years of testing and were specifically designed to withstand the intense radiation present in Earth’s orbit. These space-hardened electronics are much more expensive, though, and their performance is also years behind the state-of-the-art devices for Earth-based computing. Launching conventional chips is a gamble. But Durand says cutting-edge computer chips use technologies that are by default more resistant to radiation than past systems. And in mid-March, Nvidia touted hardware, including a new GPU, that is “bringing AI compute to orbital data centers.” Nvidia’s head of edge AI marketing, Chen Su, told MIT Technology Review that “Nvidia systems are inherently commercial off the shelf, with radiation resilience achieved at the system level rather than through radiation‑hardened silicon alone.” He added that satellite makers increase the chips’ resiliency with the help of shielding, advanced software for error detection, and architectures that combine the consumer-grade devices with bespoke, hardened technologies.

Still, Mai says that the data-crunching chips are only one issue. The data centers would also need memory and storage devices, both of which are vulnerable to damage by excessive radiation. And operators would need the ability to swap things out or adapt when issues arise. The feasibility and affordability of using robots or astronaut missions for maintenance is a major question mark hanging over the idea of large-scale orbiting data centers. “You not only need to throw up a data center to space that meets your current needs; you need redundancy, extra parts, and reconfigurability, so when stuff breaks, you can just change your configuration and continue working,” says Mai. “It’s a very challenging problem because on one hand you have free energy and power in space, but there are a lot of disadvantages. It’s quite possible that those problems will outweigh the advantages that you get from putting a data center into space.” In addition to the need for regular maintenance, there’s also the potential for catastrophic loss. During periods of intense space weather, satellites can be flooded with enough radiation to kill all their electronics. The sun has just passed the most active phase of its 11-year cycle with relatively little impact on satellites. Still, experts warn that since the space age began, the planet has not experienced the worst the sun is capable of. Many doubt whether the low-cost new space systems that dominate Earth’s orbits today are prepared for that.

A plan to dodge space debris

Both large-scale orbiting data centers such as those envisioned by Thales Alenia Space and the mega-constellations of smaller satellites as proposed by SpaceX give a headache to space sustainability experts. The space around Earth is already quite crowded with satellites. Starlink satellites alone perform hundreds of thousands of collision avoidance maneuvers every year to dodge debris and other spacecraft. 
The more stuff in space, the higher the likelihood of a devastating collision that would clutter the orbit with thousands of dangerous fragments. Large structures with hundreds of square meters of solar arrays would quickly suffer damage from small pieces of space debris and meteorites, which would over time degrade the performance of their solar panels and create more debris in orbit. Operating one million satellites in low Earth orbit, the region of space at the altitude of up to 2,000 kilometers, might be impossible to do safely unless all satellites in that area are part of the same network so they can communicate effectively to maneuver around each other, Greg Vialle, the founder of the orbital recycling startup Lunexus Space, told MIT Technology Review. “You can fit roughly four to five thousand satellites in one orbital shell,” Vialle says. “If you count all the shells in low Earth orbit, you get to a number of around 240,000 satellites maximum.” And spacecraft must be able to pass each other at a safe distance to avoid collisions, he says. “You also need to be able to get stuff up to higher orbits and back down to de-orbit,” he adds. “So you need to have gaps of at least 10 kilometers between the satellites to do that safely. Mega-constellations like Starlink can be packed more tightly because the satellites communicate with each other. But you can’t have one million satellites around Earth unless it’s a monopoly.”
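Vialle's figures can be checked with some quick arithmetic. The sketch below uses only the numbers quoted above (four to five thousand satellites per shell, a ceiling of about 240,000 in low Earth orbit, and the one million satellites in SpaceX's application). The implied shell count and the oversubscription ratio are our own back-of-the-envelope inferences, not figures Vialle gave, and the function names are illustrative.

```python
# Back-of-the-envelope check of the orbital-capacity figures quoted above.
# All inputs come from the article; the derived values are inferences.

SATS_PER_SHELL_LOW = 4_000       # "roughly four to five thousand" per shell
SATS_PER_SHELL_HIGH = 5_000
LEO_CAPACITY = 240_000           # Vialle's stated maximum for low Earth orbit
PROPOSED_SATELLITES = 1_000_000  # size of the proposed constellation


def implied_shell_count(capacity: int, sats_per_shell: int) -> float:
    """Number of shells needed to reach `capacity` at a given density."""
    return capacity / sats_per_shell


def oversubscription(proposed: int, capacity: int) -> float:
    """How many times the proposed fleet exceeds the stated capacity."""
    return proposed / capacity


# Denser shells mean fewer shells are needed to reach the same ceiling.
shells_low = implied_shell_count(LEO_CAPACITY, SATS_PER_SHELL_HIGH)
shells_high = implied_shell_count(LEO_CAPACITY, SATS_PER_SHELL_LOW)
ratio = oversubscription(PROPOSED_SATELLITES, LEO_CAPACITY)

print(f"Implied shells in LEO: {shells_low:.0f} to {shells_high:.0f}")
print(f"Proposed fleet vs. stated capacity: {ratio:.1f}x")
```

On these numbers, the quoted ceiling corresponds to somewhere between 48 and 60 shells, and a one-million-satellite fleet would be a little over four times that ceiling, which is consistent with Vialle's point that it could only work if the satellites coordinate as a single network.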

On top of that, Starlink would likely want to regularly upgrade its orbiting data centers with more modern technology. Replacing a million satellites perhaps every five years would mean even more orbital traffic—and it could increase the rate of debris reentry into Earth’s atmosphere from around three or four pieces of junk a day to about one every three minutes, according to a group of astronomers who filed objections against SpaceX’s FCC application. Some scientists are concerned that reentering debris could damage the ozone layer and alter Earth’s thermal balance.

Economical launch and assembly

The longer hardware survives in orbit, the better the return on investment. But for orbital data centers to make economic sense, companies will have to find a relatively cheap way to get that hardware in orbit. SpaceX is betting on its upcoming Starship mega-rocket, which will be able to carry up to six times as much payload as the current workhorse, Falcon 9. The Thales Alenia Space study concluded that if Europe were to build its own orbital data centers, it would have to develop a similarly potent launcher.

But launch is only part of the equation. A large-scale orbital data center won’t fit in a rocket—even a mega-rocket. It will need to be assembled in orbit. And that will likely require advanced robotic systems that do not exist yet. Various companies have conducted Earth-based tests with precursors of such systems, but they are still far from real-world use. Durand says that in the short term, smaller-scale data centers are likely to establish themselves as an integral part of the orbital infrastructure, by processing images from Earth-observing satellites directly in space without having to send them to Earth. That would be a huge help for companies selling insights from space, as many of these data sets are extremely large, and competition for opportunities to downlink them to Earth for processing via ground stations is growing. “The good thing with orbital data centers is that you can start with small servers and gradually increase and build up larger data centers,” says Durand. “You can use modularity. You can learn little by little and gradually develop industrial capacity in space. We have all the technology, and the demand for space-based data processing infrastructure is huge, so it makes sense to think about it.” Smaller facilities probably won’t do much to offset the strain that terrestrial data centers are placing on the planet’s water and electricity, though. That vision of the future might take decades to come to fruition, some critics think—if it even gets off the ground at all.

ExxonMobil begins Turrum Phase 3 drilling off Australia’s east coast

Esso Australia Pty Ltd., a subsidiary of ExxonMobil Corp. and current operator of the Gippsland basin oil and gas fields in Bass Strait offshore eastern Victoria, has started drilling the Turrum Phase 3 project in Australia. This $350-million investment will see the VALARIS 107 jack-up rig drill five new wells into Turrum and North Turrum gas fields within Production License VIC/L03 to support Australia’s east coast domestic gas market. The new wells will be drilled from Marlin B platform, about 42 km off the Gippsland coastline, southeast of Lakes Entrance in water depths of about 60 m, according to a 2025 information bulletin.
Turrum Phase 3, which builds on nearly $1 billion in recent investment across the Gippsland basin, is expected to be online before winter 2027, the company said in a post to its LinkedIn account Mar. 24. In 2025, Esso made a final investment decision to develop the Turrum Phase 3 project targeting underdeveloped gas resources. The Gippsland Basin joint venture is a 50-50 partnership between Esso Australia Resources and Woodside Energy (Bass Strait) and operated by Esso Australia.

The Golden Rule of the oil market: Understanding global price dynamics and emerging exceptions

Mark Finley, Baker Institute, Rice University

In recent weeks, questions surrounding the oil market crisis have been framed around a core principle described as the Golden Rule of the Oil Market: it is a global market. When conditions change anywhere—positively or negatively—prices respond everywhere. That framework helps explain why gasoline prices are rising in the US despite limited direct imports from the Middle East and the US’s status as a significant net exporter of oil. It also explains why oil cargoes that Iran permits to transit the Strait of Hormuz reduce Iran’s leverage over global oil prices, and by extension over US consumers and policymakers concerned about prices at the pump. Alongside its own exports, Iran has allowed a handful of additional tankers to transit the Strait, including several tankers destined for China and LPG shipments for India. The greater the volume of oil transiting the Strait, the smaller the disruption to the global oil market and the less upward pressure on global prices. The same logic applies to US efforts to ease sanctions on Iranian and Russian oil cargoes already at sea, which are unlikely to provide meaningful relief for rising oil prices. Under the Golden Rule, those barrels—having already been produced and shipped—would have found buyers regardless of sanctions, with price discounts sufficient to offset the risk of US penalties, as has been the case for Russian oil since 2022.

Exceptions

The Golden Rule has described oil market dynamics effectively for decades. However, a small number of potential exceptions have begun to emerge. For now, those exceptions remain relatively inconsequential, though larger risks may be developing.

The non-market player

There are two ways that supply and demand can be equalized. In a global market, it is achieved by price changes. Prices rise or fall to ensure that there is

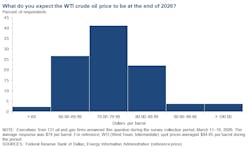

Dallas Fed survey: War uncertainty capping firms’ ambitions

Seven out of 10 oil-and-gas executives surveyed by the Federal Reserve Bank of Dallas think the price of a barrel of West Texas Intermediate (WTI), which flirted with $100 in the last 2 weeks, will finish 2026 below $80. But with the war with Iran “wreaking havoc” in commodity markets, most firms aren’t rushing to overhaul their 2026 production plans. Fed researchers’ quarterly survey of industry players from about 130 companies in Texas and parts of Louisiana and New Mexico showed that the average WTI price forecast for year-end is around $74. That’s up significantly from the $62 outlook from 3 months ago and well below the roughly $94/bbl at which WTI was being priced during the Fed’s survey period earlier this month. 
At $74, WTI would also be at a price high enough for most production to be profitable. Only about 5% of recent Dallas Fed Energy Survey respondents think WTI prices will be above $90 at year’s end. (Source: Federal Reserve Bank of Dallas.) But the spike in uncertainty from the conflict in the Middle East means most executives are being sober about their options in

Trinidad and Tobago enlists Maire for refinery restart study

The government of Trinidad and Tobago has launched a study to evaluate the potential restart of state-owned Guaracara Refining Co. Ltd.’s (Guaracara) Pointe-a-Pierre refinery—the island nation’s only—which ceased processing activities in late 2018 amid the government’s restructuring of former operator Petroleum Co. of Trinidad and Tobago Ltd. (Petrotrin). As part of a contract award announced on Mar. 25, Maire SPA subsidiary Tecnimont SPA will conduct a rehabilitation study for the upgrading of the currently idled Guaracara refinery complex, Maire said. Tecnimont’s scope of work under the $50-million contract includes execution of a comprehensive technical and integrity assessment of the Guaracara complex’s existing units and equipment for development of a rehabilitation study that could lead to restart of the 150,000-b/d refinery, according to Maire. Alongside identifying areas of the refinery requiring upgrading or refurbishment, the assessment will also evaluate the adequacy of existing technologies against the manufacturing site’s long-term operational and performance objectives; the complex’s energy efficiency and environmental performance; and preliminary CAPEX and OPEX estimates to support the possible refurbishment and restart project. Tecnimont’s scope additionally covers engineering of advanced water intake and cooling systems, “all to be designed in accordance with the most stringent international standards,” the parent company said. Maire said it anticipates Tecnimont’s work on the study, which will be carried out in two phases, to be completed by early 2027, after which the service provider expects to receive subsequent contracts upon project approval for front-end engineering and design (FEED), engineering, procurement, and construction (EPC), and ongoing operations and maintenance services associated with the complex’s full rehabilitation. 
Alessandro Bernini, chief executive officer of MAIRE, commented: “This project further strengthens our geographic diversification expanding our presence in Central America, and confirms the strategic relevance of upgrading initiatives. Emphasizing the company’s engineering expertise and technological know-how to support transformation of existing assets

JPM Energía targets infrastructure-led development as new Vaca Muerta asset operator

JPM Energía is entering Argentina’s unconventional upstream sector through an asset-acquisition agreement with Pluspetrol. If the transaction closes as expected, JPM Energía will become a new independent operator in the Vaca Muerta shale play. The company agreed to acquire Pluspetrol’s 80% interest in Los Toldos I Sur and a 50% interest in Pampa de las Yeguas I. Gustavo Nagel, JPM president, said the acquisition is focused on operational execution, not exploration upside. “These are not exploration blocks. They are assets with infrastructure, wells and processing capacity. The value here is execution—completing wells, optimizing facilities and increasing throughput,” Nagel said. The acquired areas include gas treatment plants, oil handling infrastructure, and pipeline connections, and the development strategy will be based on reactivating existing assets rather than building new infrastructure. “Our model is not large-scale drilling from day one. The plan is phased development, starting with DUCs [drilled but uncompleted wells], facility optimization, and incremental production growth,” Nagel said. “We saw an opportunity in assets with existing infrastructure and low activity. With the right operational approach, these blocks can increase production without massive initial capital,” Nagel continued. Pluspetrol retained its pipeline capacity so JPM would need to negotiate new transportation agreements as production ramps up, Nagel said.

EIA: US crude inventories up 6.9 million bbl

US crude oil inventories for the week ended Mar. 20, excluding the Strategic Petroleum Reserve, increased by 6.9 million bbl from the previous week, according to data from the US Energy Information Administration (EIA). At 456.2 million bbl, US crude oil inventories are about 0.1% above the 5-year average for this time of year, the EIA report indicated. EIA said total motor gasoline inventories decreased by 2.6 million bbl from last week and are 3% above the 5-year average for this time of year. Both finished gasoline inventories and blending components inventories decreased last week. Distillate fuel inventories increased by 3.0 million bbl last week and are about 0.4% below the 5-year average for this time of year. Propane-propylene inventories increased by 500,000 bbl from last week and are 59% above the 5-year average for this time of year, EIA said. US crude oil refinery inputs averaged 16.6 million b/d for the week ended Mar. 20, which was 366,000 b/d more than the previous week’s average. Refineries operated at 92.9% of operable capacity. Gasoline production increased, averaging 9.7 million b/d. Distillate fuel production increased by 158,000 b/d, averaging 5.0 million b/d. US crude oil imports averaged 6.5 million b/d, down by 730,000 b/d from the previous week. Over the last 4 weeks, crude oil imports averaged about 6.6 million b/d, 15.5% more than the same 4-week period last year. Total motor gasoline imports averaged 443,000 b/d. Distillate fuel imports averaged 155,000 b/d.

AI means the end of internet search as we’ve known it

We all know what it means, colloquially, to google something. You pop a few relevant words in a search box and in return get a list of blue links to the most relevant results. Maybe some quick explanations up top. Maybe some maps or sports scores or a video. But fundamentally, it’s just fetching information that’s already out there on the internet and showing it to you, in some sort of structured way. But all that is up for grabs. We are at a new inflection point. The biggest change to the way search engines have delivered information to us since the 1990s is happening right now. No more keyword searching. No more sorting through links to click. Instead, we’re entering an era of conversational search. Which means instead of keywords, you use real questions, expressed in natural language. And instead of links, you’ll increasingly be met with answers, written by generative AI and based on live information from all across the internet, delivered the same way. Of course, Google—the company that has defined search for the past 25 years—is trying to be out front on this. In May of 2023, it began testing AI-generated responses to search queries, using its large language model (LLM) to deliver the kinds of answers you might expect from an expert source or trusted friend. It calls these AI Overviews. Google CEO Sundar Pichai described this to MIT Technology Review as “one of the most positive changes we’ve done to search in a long, long time.”

AI Overviews fundamentally change the kinds of queries Google can address. You can now ask it things like “I’m going to Japan for one week next month. I’ll be staying in Tokyo but would like to take some day trips. Are there any festivals happening nearby? How will the surfing be in Kamakura? Are there any good bands playing?” And you’ll get an answer—not just a link to Reddit, but a built-out answer with current results. More to the point, you can attempt searches that were once pretty much impossible, and get the right answer. You don’t have to be able to articulate what, precisely, you are looking for. You can describe what the bird in your yard looks like, or what the issue seems to be with your refrigerator, or that weird noise your car is making, and get an almost human explanation put together from sources previously siloed across the internet. It’s amazing, and once you start searching that way, it’s addictive.

And it’s not just Google. OpenAI’s ChatGPT now has access to the web, making it far better at finding up-to-date answers to your queries. Microsoft released generative search results for Bing in September. Meta has its own version. The startup Perplexity was doing the same, but with a “move fast, break things” ethos. Literal trillions of dollars are at stake in the outcome as these players jockey to become the next go-to source for information retrieval—the next Google. Not everyone is excited for the change. Publishers are completely freaked out. The shift has heightened fears of a “zero-click” future, where search referral traffic—a mainstay of the web since before Google existed—vanishes from the scene. I got a vision of that future last June, when I got a push alert from the Perplexity app on my phone. Perplexity is a startup trying to reinvent web search. But in addition to delivering deep answers to queries, it will create entire articles about the news of the day, cobbled together by AI from different sources. On that day, it pushed me a story about a new drone company from Eric Schmidt. I recognized the story. Forbes had reported it exclusively, earlier in the week, but it had been locked behind a paywall. The image on Perplexity’s story looked identical to one from Forbes. The language and structure were quite similar. It was effectively the same story, but freely available to anyone on the internet. I texted a friend who had edited the original story to ask if Forbes had a deal with the startup to republish its content. But there was no deal. He was shocked and furious and, well, perplexed. He wasn’t alone. Forbes, the New York Times, and Condé Nast have now all sent the company cease-and-desist orders. News Corp is suing for damages. 
It was precisely the nightmare scenario publishers have been so afraid of: The AI was hoovering up their premium content, repackaging it, and promoting it to its audience in a way that didn’t really leave any reason to click through to the original. In fact, on Perplexity’s About page, the first reason it lists to choose the search engine is “Skip the links.” But this isn’t just about publishers (or my own self-interest). People are also worried about what these new LLM-powered results will mean for our fundamental shared reality. Language models have a tendency to make stuff up—they can hallucinate nonsense. Moreover, generative AI can serve up an entirely new answer to the same question every time, or provide different answers to different people on the basis of what it knows about them. It could spell the end of the canonical answer. But make no mistake: This is the future of search. Try it for a bit yourself, and you’ll see.

Sure, we will always want to use search engines to navigate the web and to discover new and interesting sources of information. But the links out are taking a back seat. The way AI can put together a well-reasoned answer to just about any kind of question, drawing on real-time data from across the web, just offers a better experience. That is especially true compared with what web search has become in recent years. If it’s not exactly broken (data shows more people are searching with Google more often than ever before), it’s at the very least increasingly cluttered and daunting to navigate. Who wants to have to speak the language of search engines to find what you need? Who wants to navigate links when you can have straight answers? And maybe: Who wants to have to learn when you can just know?

In the beginning there was Archie. It was the first real internet search engine, and it crawled files previously hidden in the darkness of remote servers. It didn’t tell you what was in those files, just their names. It didn’t preview images; it didn’t have a hierarchy of results, or even much of an interface. But it was a start. And it was pretty good. Then Tim Berners-Lee created the World Wide Web, and all manner of web pages sprang forth. The Mosaic home page and the Internet Movie Database and Geocities and the Hampster Dance and web rings and Salon and eBay and CNN and federal government sites and some guy’s home page in Turkey. Until finally, there was too much web to even know where to start. We really needed a better way to navigate our way around, to actually find the things we needed. And so in 1994 Jerry Yang created Yahoo, a hierarchical directory of websites. It quickly became the home page for millions of people. And it was … well, it was okay. TBH, and with the benefit of hindsight, I think we all thought it was much better back then than it actually was. But the web continued to grow and sprawl and expand, every day bringing more information online.
Rather than just a list of sites by category, we needed something that actually looked at all that content and indexed it. By the late ’90s that meant choosing from a variety of search engines: AltaVista and AlltheWeb and WebCrawler and HotBot. And they were good—a huge improvement. At least at first. But alongside the rise of search engines came the first attempts to exploit their ability to deliver traffic. Precious, valuable traffic, which web publishers rely on to sell ads and retailers use to get eyeballs on their goods. Sometimes this meant stuffing pages with keywords or nonsense text designed purely to push pages higher up in search results. It got pretty bad.

And then came Google. It’s hard to overstate how revolutionary Google was when it launched in 1998. Rather than just scanning the content, it also looked at the sources linking to a website, which helped evaluate its relevance. To oversimplify: The more something was cited elsewhere, the more reliable Google considered it, and the higher it would appear in results. This breakthrough made Google radically better at retrieving relevant results than anything that had come before. It was amazing.

Photo caption: Google CEO Sundar Pichai describes AI Overviews as “one of the most positive changes we’ve done to search in a long, long time.” (Photo: Jens Gyarmaty/laif/Redux)

For 25 years, Google dominated search. Google was search, for most people. (The extent of that domination is currently the subject of multiple legal probes in the United States and the European Union.)
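
For readers who want to see the link-counting idea above in concrete form, here is a minimal sketch in Python of a PageRank-style iteration, the textbook version of "the more something is cited, the higher it ranks." It is an illustration only, not Google's production system: the function name `link_rank`, the toy site names, the damping value of 0.85, and the fixed iteration count are all assumptions made for this example.

```python
def link_rank(links, damping=0.85, iterations=50):
    """Score pages by inbound links (simplified PageRank-style iteration).

    `links` maps each page to the list of pages it links to.
    All names and parameter values here are illustrative assumptions.
    """
    # Collect every page that appears as a source or a target.
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}  # start with equal scores

    for _ in range(iterations):
        # Every page keeps a small baseline score.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its score evenly among the pages it cites.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outbound links spreads its score evenly.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical four-page web: three pages cite c.example, which cites a.example.
toy_web = {
    "a.example": ["c.example"],
    "b.example": ["c.example"],
    "c.example": ["a.example"],
    "d.example": ["c.example"],
}
scores = link_rank(toy_web)
ranking = sorted(scores, key=scores.get, reverse=True)
print(ranking)
```

In this toy web, the most-cited page comes out on top, and the page it cites comes second, because a citation from a highly ranked page counts for more than one from an obscure page.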

But Google has long been moving away from simply serving up a series of blue links, notes Pandu Nayak, Google’s chief scientist for search. “It’s not just so-called web results, but there are images and videos, and special things for news. There have been direct answers, dictionary answers, sports, answers that come with Knowledge Graph, things like featured snippets,” he says, rattling off a litany of Google’s steps over the years to answer questions more directly. It’s true: Google has evolved over time, becoming more and more of an answer portal. It has added tools that allow people to just get an answer—the live score to a game, the hours a café is open, or a snippet from the FDA’s website—rather than being pointed to a website where the answer may be. But once you’ve used AI Overviews a bit, you realize they are different. Take featured snippets, the passages Google sometimes chooses to highlight and show atop the results themselves. Those words are quoted directly from an original source. The same is true of knowledge panels, which are generated from information stored in a range of public databases and Google’s Knowledge Graph, its database of trillions of facts about the world. While these can be inaccurate, the information source is knowable (and fixable). It’s in a database. You can look it up. Not anymore: AI Overviews can be entirely new every time, generated on the fly by a language model’s predictive text combined with an index of the web.

“I think it’s an exciting moment where we have obviously indexed the world. We built deep understanding on top of it with Knowledge Graph. We’ve been using LLMs and generative AI to improve our understanding of all that,” Pichai told MIT Technology Review. “But now we are able to generate and compose with that.” The result feels less like querying a database than like asking a very smart, well-read friend. (With the caveat that the friend will sometimes make things up if she does not know the answer.) “[The company’s] mission is organizing the world’s information,” Liz Reid, Google’s head of search, tells me from its headquarters in Mountain View, California. “But actually, for a while what we did was organize web pages. Which is not really the same thing as organizing the world’s information or making it truly useful and accessible to you.” That second concept, accessibility, is what Google is really keying in on with AI Overviews. It’s a sentiment I hear echoed repeatedly while talking to Google execs: They can address more complicated types of queries more efficiently by bringing in a language model to help supply the answers. And they can do it in natural language.