Your Gateway to Power, Energy, Datacenters, Bitcoin and AI

Dive into the latest industry updates, our exclusive Paperboy Newsletter, and curated insights designed to keep you informed. Stay ahead with minimal time spent.

Discover What Matters Most to You

AI

Bitcoin:

Datacenter:

Energy:

Featured Articles

Sable Offshore set to resume oil production at second platform offshore California

Sable Offshore Corp. has been given clearance from the US Bureau of Safety and Environmental Enforcement (BSEE) to resume operations at Platform Heritage, part of Sable Offshore’s Santa Ynez Unit (SYU) in federal waters offshore Santa Barbara, Calif. The move follows a completed final pre-restart inspection and a March directive by the US Secretary of Energy for Sable Offshore to restore SYU operations under authorities delegated through the Defense Production Act and certain executive orders. Last month, Sable Offshore restarted operations at SYU and the associated Santa Ynez Pipeline System offshore southern California as part of the directive, cited by Energy Secretary Chris Wright as needed to ensure adequate oil supply to West Coast military installations. The infrastructure had been shut in after a 2015 oil spill. The SYU includes Platforms Heritage, Harmony, and Hondo, all of which sit 5-9 miles offshore Santa Barbara County in water depths of 900-1,200 ft. The newly cleared Platform Heritage is set to begin production soon, BSEE said. In a release Mar. 30, Sable Offshore said Platform Harmony, which came online in May 2025, is producing about 22,000 b/d of oil (gross). Platform Heritage, the second SYU platform to come online, is expected to produce over 30,000 b/d (gross). Platform Hondo, expected to produce over 10,000 b/d, is expected to be online by the end of this year’s second quarter.

BLM lease sales in Colorado, Nevada, Utah generate $64.8 million

The Bureau of Land Management (BLM) on Mar. 31 leased 136 parcels across 131,121 acres in Colorado, Nevada, and Utah for $64.8 million in total receipts during quarterly oil and gas lease sales. Combined lease bonus bids and rentals are distributed between the federal government and the state where the parcels are located. Specifically, federal lands leased in Utah realized the bulk of the generated income, reaping $56.4 million from 57 parcels on 68,632 acres, BLM said. Meanwhile, in Colorado, BLM leased 68 parcels on 42,532 acres, making $8.1 million. The Nevada lease sale earned nearly $300,000 on 11 parcels on 19,957 acres. Nine companies won multiple leases in the Utah sale: Otter Creek LLC, VR Utah Holdings LLC, UT 100 LLC, Bro Energy LLC, Topaz Energy Resources, R&R Royalty Ltd., Drake Land Services LLC, Kirkwood Oil and Gas LLC, and WEM Mancos LLC. In Colorado, companies winning multiple parcels include Bison IV Properties Colorado LLC, Laramie Energy LLC, Topaz Energy Resources, R&R Royalty Ltd., QB Energy Nominee Corp., Context Energy Co., and SG Interests LLC. National Minerals Trust LLC won all 11 parcels in Nevada. Companies will pay a 12.5% royalty rate on production, BLM said.

Why California gasoline prices are rising faster than the US average

California’s Middle East crude oil imports

California is materially more dependent on Middle Eastern crude than the rest of the US, largely due to refinery configuration and logistics. California refineries are optimized for heavier, sour crude oil. Declining in-state production (e.g., San Joaquin Valley) and no pipeline connectivity to other US producing regions mean California regularly imports a meaningful share of its crude slate from the Middle East. Recent California Energy Commission data show that Iraq and Saudi Arabia alone provide 35-40% of total California barrels (roughly equivalent to California crude oil production), plus smaller flows from the UAE and others in the Persian Gulf. For the rest of the US, about 10% of crude oil imports come from Persian Gulf suppliers. As a result, California fuel prices are more exposed to disruptions or price spikes linked to Gulf supply and chokepoints like the Strait of Hormuz than fuel prices in other parts of the country.

Out-of-state refinery constraints

California imports roughly 20% of its refined fuels from Asia (a record 128,000 b/d of gasoline/additives in 2025), mostly from South Korea and India. These refiners are cutting back exports, prioritizing their own domestic markets, and scrambling to re-optimize crude slates as Hormuz disruptions squeeze Middle East supply. Shortages of Gulf crude oil are forcing refineries to reduce runs and declare force majeure on product deliveries. Several governments or companies have temporarily suspended clean‑product exports, directly throttling flows of CARB‑grade gasoline and jet to the US West Coast. These refiners also are bidding up alternative crude oil barrels from other regions and paying longer‑haul freight rates, which raises their marginal cost of gasoline and narrows the price window to ship gasoline cargoes to California. Volumes available for California are at risk of shrinking and becoming more sporadic, while the barrels that do

Asia bears brunt of energy shock as Middle East war disrupts liquid flows

Asia is facing a dual energy crisis marked by both soaring prices and physical supply disruptions as escalating war in the Middle East constrains flows through the Strait of Hormuz, according to a new report by Morningstar DBRS. The report highlights that roughly one-fifth of global crude oil and LNG supply has been affected by disruptions at the critical chokepoint, with Asia absorbing the majority of the impact due to its heavy dependence on imported hydrocarbons. About 83% of oil and LNG shipments passing through Hormuz are destined for Asian markets, amplifying the region’s exposure. Asia’s structural reliance on Middle Eastern energy imports has intensified the shock. Countries such as Japan and South Korea import nearly all of their energy needs, while China and India depend heavily on foreign supplies, much of it sourced from the Gulf. This dependence, combined with limited alternative shipping routes, has turned what initially appeared to be a price-driven shock into a broader supply and logistics crisis. Governments across the region have begun implementing emergency measures, including fuel rationing, price controls, and strategic reserve releases, to manage shortages and rising costs.

Policy responses vary

In North Asia, policymakers are leveraging stronger buffers. Japan has tapped strategic oil reserves and introduced subsidies to cushion consumers, while South Korea is relying on LNG stockpiles and fuel-switching capabilities. China has deployed administrative controls to stabilize domestic fuel prices and restrict refined product exports. By contrast, parts of South and Southeast Asia are more vulnerable. India has introduced tax relief and prioritized gas allocation, while countries such as the Philippines and Vietnam have declared energy emergencies and rolled out conservation measures. Several ASEAN (the Association of Southeast Asian Nations) economies have even implemented partial work-from-home policies to curb fuel consumption.

Broader economic spillovers intensify

Beyond energy markets, the disruption

Vår Energi lets 3-year contract for harsh-environment rig for NCS work

Vår Energi ASA has awarded a 3‑year firm contract to Transocean for the Transocean Barents rig on the Norwegian Continental Shelf. The rig, which will mobilize in mid‑2027, aims to strengthen the operator’s drilling capacity across infill and project wells as well as complex exploration wells, the company said Apr. 2. Transocean Barents is a high‑specification, fully winterized harsh‑environment rig, capable of operating across the entire NCS. Transocean, in a release Apr. 2, said the $450,000/day contract (excluding additional services) is expected to contribute about $490 million in backlog, excluding compensation for mobilization and demobilization. The contract also includes options that, if fully exercised, could keep the rig working in Norway into 2034.

Newly formed Polar LNG aims to develop nearshore LNG project on Alaska’s North Slope

Polar Train LNG LLC, a newly launched company aiming to build an LNG plant (Polar LNG) on Alaska’s North Slope, has appointed Joel Riddle as president and chief executive officer. “Alaska’s North Slope holds one of the most significant undeveloped natural gas resources in the world,” said Riddle, adding “Polar LNG is uniquely positioned to bring this resource online—delivering reliable energy for Alaska and a strategic supply for the United States… and provides trusted energy to our allies.” In a release Mar. 31, the company said it is advancing a nearshore project at Prudhoe Bay, citing “one of the shortest LNG shipping routes from North America to key Asian markets, approximately 3,600 miles to Japan compared to over 10,000 miles from the US Gulf Coast.” The company is aiming for first LNG from the 7-million tonnes/year plant, to be developed nearshore with modular infrastructure, in 2029-2030 at a cost of $8–9 billion. According to Polar LNG, natural gas would be sourced from existing infrastructure at Prudhoe Bay and transported via a short pipeline to a nearshore plant. There, a modular gravity-based structure would process and liquefy the gas. LNG would then be loaded onto specialized ice-class carriers for year-round export. The company is exploring potential repurposing of sanctioned equipment built for Russia’s Arctic LNG 2 project and is seeking permission from the US government to acquire parts impacted by the sanctions, according to reports. Before joining Polar LNG, Riddle served as managing director and chief executive officer of Tamboran Resources Ltd.

Energy Department Initiates Additional Strategic Petroleum Reserve Emergency Exchange to Stabilize Global Oil Supply

WASHINGTON—The U.S. Department of Energy (DOE) issued a Request for Proposal (RFP) today for an emergency exchange of 10 million barrels from the Strategic Petroleum Reserve (SPR). This action is part of the coordinated release of 400 million barrels from IEA member nations’ strategic reserves that President Trump previously announced. The United States continues to deliver on its 172-million-barrel release commitment. The crude oil will originate from the SPR’s Bryan Mound site. Today’s action builds on the initial phase of the Emergency Exchange, which moved quickly to award 45.2 million barrels from the Bayou Choctaw, Bryan Mound, and West Hackberry SPR sites. The 10-million-barrel exchange leverages the full capabilities of the SPR, alongside the President’s limited Jones Act waiver, to accelerate critical near-term oil flows into the market. “Today’s action furthers the United States’ efforts to move oil quickly to the market and mitigate short-term supply disruptions,” said DOE Assistant Secretary of the Hydrocarbons and Geothermal Energy Office Kyle Haustveit. “Thanks to President Trump, America is managing our national security assets responsibly again. Through this exchange, we will continue to refill the Strategic Petroleum Reserve by bringing additional barrels back at a later date through this pragmatic exchange structure, strengthening its long-term readiness and all at no cost to the American taxpayer.” Under DOE’s exchange authority, participating companies will return the borrowed 10 million barrels with additional premium barrels by next year. This exchange delivers immediate crude to refiners and the market while generating additional barrels for the American people at no cost to taxpayers. Bids for the solicitation are due no later than 11:00 A.M. CT on Monday, April 6, 2026. For more information on the SPR, please visit DOE’s website.

Trump Administration Keeps Colorado Coal Plant Open to Ensure Affordable, Reliable and Secure Power in Colorado

WASHINGTON—U.S. Secretary of Energy Chris Wright today issued an emergency order to keep a Colorado coal plant operational to ensure Americans maintain access to affordable, reliable and secure electricity. The order directs Tri-State Generation and Transmission Association (Tri-State), Platte River Power Authority, Salt River Project, PacifiCorp, and Public Service Company of Colorado (Xcel Energy), in coordination with the Western Area Power Administration (WAPA) Rocky Mountain Region and Southwest Power Pool (SPP), to take all measures necessary to ensure that Unit 1 at the Craig Station in Craig, Colorado is available to operate. Unit 1 of the coal plant was scheduled to shut down at the end of 2025, but on December 30, 2025, Secretary Wright issued an emergency order directing Tri-State and the co-owners to ensure that Unit 1 at the Craig Station remains available to operate. “The last administration’s energy subtraction policies threatened America’s energy security and positioned our nation to likely experience significantly more blackouts in the coming years—thankfully, President Trump won’t let that happen,” said Energy Secretary Wright. “The Trump Administration will continue taking action to ensure we don’t lose critical generation sources. Americans deserve access to affordable, reliable, and secure energy to power their homes all the time, regardless of whether the wind is blowing or the sun is shining.” Thanks to President Trump’s leadership, coal plants across the country are reversing plans to shut down. In 2025, more than 17 gigawatts (GW) of coal-power electricity generation were saved. On April 1, once Tri-State and the WAPA Rocky Mountain Region join the SPP RTO West expansion, SPP is directed to take every step to employ economic dispatch to minimize costs to ratepayers. According to DOE’s Resource Adequacy Report, blackouts were on track to potentially increase 100 times by 2030 if the U.S. continued to take reliable

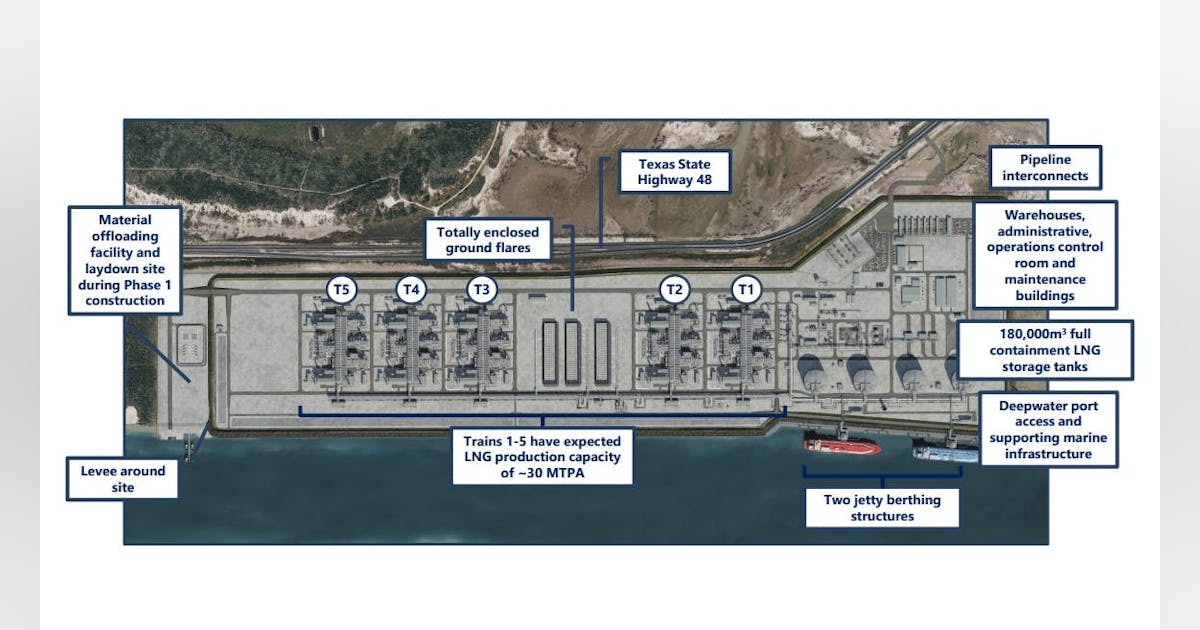

NextDecade contractor Bechtel awards ABB more Rio Grande LNG automation work

NextDecade Corp. contractor Bechtel Corp. has awarded ABB Ltd. additional integrated automation and electrical solution orders, extending its scope to Trains 4 and 5 of NextDecade’s 30-million tonne/year (tpy) Rio Grande LNG (RGLNG) plant in Brownsville, Tex. The orders were booked in the third and fourth quarters of 2025 and build on ABB’s Phase 1 work with Trains 1-3, totaling 17 million tpy. The scope for RGLNG Trains 4 and 5 includes deployment of an integrated control and safety system consisting of a distributed control system, emergency shutdown, and fire and gas systems. An electrical controls and monitoring system will provide unified visibility of the plant’s electrical infrastructure. These two overarching solutions will provide a common automation platform. ABB will also supply medium-voltage drives, synchronous motors, transformers, motor controllers and switchgear. The orders also include local equipment buildings (two for Train 4 and one for Train 5) housing critical control and electrical systems in prefabricated modules to streamline installation and commissioning on site. The solutions being delivered to Bechtel use ABB adaptive execution, a methodology for capital projects designed to optimize engineering work and reduce delivery timelines. Phase 1 of RGLNG is under construction and expected to begin operations in 2027. Operations at Train 4 are expected in 2030 and Train 5 in 2031. ABB’s senior vice-president for the Americas, Scott McCay, confirmed to Oil & Gas Journal at CERAWeek by S&P Global in Houston that the company is doing similar work through Tecnimont for Argent LNG’s planned 25-million tpy plant in Port Fourchon, La., comprising a 10-million tpy Phase 1 and a 15-million tpy Phase 2. Argent is targeting 2030 completion for its plant.

Persistent oil flow imbalances drive Enverus to increase crude price forecast

Citing impacts from the Iran war, near-zero flows through the Strait of Hormuz, accelerating global stock draws, and expectations for a muted US production response despite higher prices, Enverus Intelligence Research (EIR) raised its Brent crude oil price forecast. EIR now expects Brent to average $95/bbl for the remainder of 2026 and $100/bbl in 2027, reflecting what it described as a persistent global oil flow imbalance that continues to draw down inventories. “The world has an oil flow problem that is draining stocks,” said Al Salazar, director of research at EIR. “Whenever that oil flow problem is resolved, the world is left with low stocks. That’s what drives our oil price outlook higher for longer.” The outlook assumes the Strait of Hormuz remains largely closed for 3 months. EIR estimates that each month of constrained flows shifts the price outlook by about $10–15/bbl, underscoring the scale of the disruption and uncertainty around its duration. Despite West Texas Intermediate (WTI) prices of $90–100/bbl, EIR does not expect US producers to materially increase output. The firm forecasts US liquids production growth of 370,000 b/d by end-2026 and 580,000 b/d by end-2027, citing drilling-to-production lags, industry consolidation, and continued capital discipline. Global oil demand growth for 2026 has been reduced to about 500,000 b/d from 1.0 million b/d as higher energy prices and anticipated supply disruptions weigh on economic activity. Cumulative global oil stock draws are estimated at roughly 1 billion bbl through 2027, with non-OECD inventories—particularly in Asia—absorbing nearly half of the impact. A 60-day Jones Act waiver may provide limited short-term US shipping flexibility, but EIR said the measure is unlikely to materially affect global oil prices given broader market forces.

Equinor begins drilling $9-billion natural gas development project offshore Brazil

Equinor has started drilling the Raia natural gas project in the Campos basin presalt offshore Brazil. The $9-billion project is Equinor’s largest international investment, its largest project under execution, and marks the deepest water depth operation in its portfolio. The drilling campaign, which began Mar. 24 with the Valaris DS‑17 drillship, includes six wells in the Raia area 200 km offshore in water depths of around 2,900 m. The area is expected to hold recoverable natural gas and condensate reserves of over 1 billion boe. Raia’s development concept is based on production through wells connected to a 126,000-b/d floating production, storage and offloading unit (FPSO), which will treat produced oil/condensate and gas. Natural gas will be transported through a 200‑km pipeline from the FPSO to Cabiúnas, in the city of Macaé, Rio de Janeiro state. Once in operation, expected in 2028, the project will have the capacity to export up to 16 million cu m/day of natural gas, which could represent 15% of Brazil’s natural gas demand, the company said in a release Mar. 24. “While drilling takes place, integration and commissioning activities on the FPSO are progressing well putting us on track towards a safe start of operations in 2028,” said Geir Tungesvik, executive vice-president, projects, drilling and procurement, Equinor. The Raia project is operated by Equinor (35%), in partnership with Repsol Sinopec Brasil (35%) and Petrobras (30%).

Woodfibre LNG receives additional modules as construction advances

Woodfibre LNG LP has received two major modules within a week for its under‑construction, 2.1‑million tonne/year (tpy) LNG export plant near Squamish, British Columbia, advancing construction to about 65% complete. The deliveries include the liquefaction module—the project’s heaviest and most critical process unit—and the powerhouse module, which will serve as the plant’s central power and control hub. The liquefaction module, delivered aboard the heavy cargo vessel Red Zed 1, is the 15th of 19 modules scheduled for installation at the site, the company said in a Mar. 24 release. Weighing about 10,847 metric tonnes and occupying a footprint roughly equivalent to a football field, it is among the largest modules fabricated for the project. Once installed and commissioned, the liquefaction module will cool natural gas to about –162°C, converting it into LNG for export. Shortly after the liquefaction module’s arrival, Woodfibre LNG received the powerhouse module, the 16th module delivered to site. Weighing more than 4,200 metric tonnes, the powerhouse module will function as a power and control system, receiving electricity from BC Hydro and managing and distributing power to the plant’s electric‑drive compressors. The Woodfibre LNG project is designed as the first LNG export plant to use electric‑drive motors for liquefaction, replacing conventional gas‑turbine‑driven compressors. The Siemens electric‑drive system will be powered by renewable hydroelectricity from BC Hydro, eliminating the largest operational source of greenhouse gas emissions typically associated with liquefaction, the company said. The project is being built near the community of Squamish on the traditional territory of the Sḵwx̱wú7mesh Úxwumixw (Squamish Nation) and is regulated in part by the Indigenous government. All 19 modules are expected to arrive on site by spring 2026. Construction is scheduled for completion in 2027. Woodfibre LNG is owned by Woodfibre LNG Ltd. Partnership, which is 70% owned by Pacific Energy Corp.

West of Orkney developers helped support 24 charities last year

The developers of the 2GW West of Orkney wind farm paid out a total of £18,000 to 24 organisations from their small donations fund in 2024. The money went to projects across Caithness, Sutherland and Orkney, including a mental health initiative in Thurso and a scheme by Dunnet Community Forest to improve the quality of meadows through the use of traditional scythes. Established in 2022, the fund offers up to £1,000 per project towards programmes in the far north. In addition to the small donations fund, the West of Orkney developers intend to follow other wind farms by establishing a community benefit fund once the project is operational. West of Orkney wind farm project director Stuart McAuley said: “Our donations programme is just one small way in which we can support some of the many valuable initiatives in Caithness, Sutherland and Orkney. In every case we have been immensely impressed by the passion and professionalism each organisation brings, whether their focus is on sport, the arts, social care, education or the environment, and we hope the funds we provide help them achieve their goals.” In addition to the local donations scheme, the wind farm developers have helped fund a £1 million research and development programme led by EMEC in Orkney and a £1.2m education initiative led by UHI. They also provided £50,000 to support the FutureSkills apprenticeship programme in Caithness, with funds going to employment and training costs to help tackle skill shortages in the North of Scotland. The West of Orkney wind farm is being developed by Corio Generation, TotalEnergies and Renewable Infrastructure Development Group (RIDG). The project is among the leaders of the ScotWind cohort, having been the first to submit its offshore consent documents in late 2023. In addition, the project’s onshore plans were approved by the

Biden bans US offshore oil and gas drilling ahead of Trump’s return

US President Joe Biden has announced a ban on offshore oil and gas drilling across vast swathes of the country’s coastal waters. The decision comes just weeks before his successor Donald Trump, who has vowed to increase US fossil fuel production, takes office. The drilling ban will affect 625 million acres of federal waters across America’s eastern and western coasts, the eastern Gulf of Mexico and Alaska’s Northern Bering Sea. The decision does not affect the western Gulf of Mexico, where much of American offshore oil and gas production occurs and is set to continue. In a statement, President Biden said he is taking action to protect the regions “from oil and natural gas drilling and the harm it can cause”. “My decision reflects what coastal communities, businesses, and beachgoers have known for a long time: that drilling off these coasts could cause irreversible damage to places we hold dear and is unnecessary to meet our nation’s energy needs,” Biden said. “It is not worth the risks. As the climate crisis continues to threaten communities across the country and we are transitioning to a clean energy economy, now is the time to protect these coasts for our children and grandchildren.”

Offshore drilling ban

The White House said Biden used his authority under the 1953 Outer Continental Shelf Lands Act, which allows presidents to withdraw areas from mineral leasing and drilling. However, the law does not give a president the right to unilaterally reverse a drilling ban without congressional approval. This means that Trump, who pledged to “unleash” US fossil fuel production during his re-election campaign, could find it difficult to overturn the ban after taking office.

The Download: our 10 Breakthrough Technologies for 2025

This is today’s edition of The Download, our weekday newsletter that provides a daily dose of what’s going on in the world of technology.

Introducing: MIT Technology Review’s 10 Breakthrough Technologies for 2025

Each year, we spend months researching and discussing which technologies will make the cut for our 10 Breakthrough Technologies list. We try to highlight a mix of items that reflect innovations happening in various fields. We look at consumer technologies, large industrial-scale projects, biomedical advances, changes in computing, climate solutions, the latest in AI, and more.

We’ve been publishing this list every year since 2001 and, frankly, have a great track record of flagging things that are poised to hit a tipping point. It’s hard to think of another industry that has as much of a hype machine behind it as tech does, so the real secret of the TR10 is really what we choose to leave off the list.

Check out the full list of our 10 Breakthrough Technologies for 2025, which is front and center in our latest print issue. It’s all about the exciting innovations happening in the world right now, and includes some fascinating stories, such as:

+ How digital twins of human organs are set to transform medical treatment and shake up how we trial new drugs.
+ What will it take for us to fully trust robots? The answer is a complicated one.
+ Wind is an underutilized resource that has the potential to steer the notoriously dirty shipping industry toward a greener future. Read the full story.
+ After decades of frustration, machine-learning tools are helping ecologists to unlock a treasure trove of acoustic bird data—and to shed much-needed light on their migration habits. Read the full story.
+ How poop could help feed the planet—yes, really. Read the full story.

Roundtables: Unveiling the 10 Breakthrough Technologies of 2025

Last week, Amy Nordrum, our executive editor, joined our news editor Charlotte Jee to unveil our 10 Breakthrough Technologies of 2025 in an exclusive Roundtable discussion. Subscribers can watch their conversation back here. And, if you’re interested in previous discussions about topics ranging from mixed reality tech to gene editing to AI’s climate impact, check out some of the highlights from the past year’s events.

This international surveillance project aims to protect wheat from deadly diseases

For as long as there’s been domesticated wheat (about 8,000 years), there has been harvest-devastating rust. Breeding efforts in the mid-20th century led to rust-resistant wheat strains that boosted crop yields, and rust epidemics receded in much of the world.

But now, after decades, rusts are considered a reemerging disease in Europe, at least partly due to climate change. An international initiative hopes to turn the tide by scaling up a system to track wheat diseases and forecast potential outbreaks to governments and farmers in close to real time. And by doing so, they hope to protect a crop that supplies about one-fifth of the world’s calories. Read the full story.

—Shaoni Bhattacharya

The must-reads

I’ve combed the internet to find you today’s most fun/important/scary/fascinating stories about technology.

1 Meta has taken down its creepy AI profiles
Following a big backlash from unhappy users. (NBC News)
+ Many of the profiles were likely to have been live from as far back as 2023. (404 Media)
+ It also appears they were never very popular in the first place. (The Verge)

2 Uber and Lyft are racing to catch up with their robotaxi rivals
After abandoning their own self-driving projects years ago. (WSJ $)
+ China’s Pony.ai is gearing up to expand to Hong Kong. (Reuters)

3 Elon Musk is going after NASA
He’s largely veered away from criticising the space agency publicly—until now. (Wired $)
+ SpaceX’s Starship rocket has a legion of scientist fans. (The Guardian)
+ What’s next for NASA’s giant moon rocket? (MIT Technology Review)

4 How Sam Altman actually runs OpenAI
Featuring three-hour meetings and a whole lot of Slack messages. (Bloomberg $)
+ ChatGPT Pro is a pricey loss-maker, apparently. (MIT Technology Review)

5 The dangerous allure of TikTok
Migrants’ online portrayals of their experiences in America aren’t always reflective of their realities. (New Yorker $)

6 Demand for electricity is skyrocketing
And AI is only a part of it. (Economist $)
+ AI’s search for more energy is growing more urgent. (MIT Technology Review)

7 The messy ethics of writing religious sermons using AI
Skeptics aren’t convinced the technology should be used to channel spirituality. (NYT $)

8 How a wildlife app became an invaluable wildfire tracker
Watch Duty has become a safeguarding sensation across the US west. (The Guardian)
+ How AI can help spot wildfires. (MIT Technology Review)

9 Computer scientists just love oracles 🔮
Hypothetical devices are a surprisingly important part of computing. (Quanta Magazine)

10 Pet tech is booming 🐾
But not all gadgets are made equal. (FT $)
+ These scientists are working to extend the lifespan of pet dogs—and their owners. (MIT Technology Review)

Quote of the day

“The next kind of wave of this is like, well, what is AI doing for me right now other than telling me that I have AI?”

—Anshel Sag, principal analyst at Moor Insights and Strategy, tells Wired a lot of companies’ AI claims are overblown.

The big story

Broadband funding for Native communities could finally connect some of America’s most isolated places

September 2022

Rural and Native communities in the US have long had lower rates of cellular and broadband connectivity than urban areas, where four out of every five Americans live. Outside the cities and suburbs, which occupy barely 3% of US land, reliable internet service can still be hard to come by.

The covid-19 pandemic underscored the problem as Native communities locked down and moved school and other essential daily activities online. But it also kicked off an unprecedented surge of relief funding to solve it. Read the full story.

—Robert Chaney

We can still have nice things

A place for comfort, fun and distraction to brighten up your day. (Got any ideas? Drop me a line or skeet ’em at me.)

+ Rollerskating Spice Girls is exactly what your Monday morning needs.
+ It’s not just you, some people really do look like their dogs!
+ I’m not sure if this is actually the world’s healthiest meal, but it sure looks tasty.
+ Ah, the old “bitten by a rabid fox” chestnut.

Equinor Secures $3 Billion Financing for US Offshore Wind Project

Equinor ASA has announced a final investment decision on Empire Wind 1 and financial close for $3 billion in debt financing for the under-construction project offshore Long Island, expected to power 500,000 New York homes. The Norwegian majority state-owned energy major said in a statement it intends to farm down ownership “to further enhance value and reduce exposure”. Equinor has taken full ownership of Empire Wind 1 and 2 since last year, in a swap transaction with 50 percent co-venturer BP PLC that allowed the former to exit the Beacon Wind lease, also a 50-50 venture between the two. Equinor has yet to complete a portion of the transaction under which it would also acquire BP’s 50 percent share in the South Brooklyn Marine Terminal lease, according to the latest transaction update on Equinor’s website. The lease involves a terminal conversion project that was intended to serve as an interconnection station for Beacon Wind and Empire Wind, as agreed on by the two companies and the state of New York in 2022. “The expected total capital investments, including fees for the use of the South Brooklyn Marine Terminal, are approximately $5 billion including the effect of expected future tax credits (ITCs)”, said the statement on Equinor’s website announcing financial close. Equinor did not disclose its backers, only saying, “The final group of lenders includes some of the most experienced lenders in the sector along with many of Equinor’s relationship banks”. “Empire Wind 1 will be the first offshore wind project to connect into the New York City grid”, the statement added. “The redevelopment of the South Brooklyn Marine Terminal and construction of Empire Wind 1 will create more than 1,000 union jobs in the construction phase”, Equinor said. On February 22, 2024, the Bureau of Ocean Energy Management (BOEM) announced

USA Crude Oil Stocks Drop Week on Week

U.S. commercial crude oil inventories, excluding those in the Strategic Petroleum Reserve (SPR), decreased by 1.2 million barrels from the week ending December 20 to the week ending December 27, the U.S. Energy Information Administration (EIA) highlighted in its latest weekly petroleum status report, which was released on January 2. Crude oil stocks, excluding the SPR, stood at 415.6 million barrels on December 27, 416.8 million barrels on December 20, and 431.1 million barrels on December 29, 2023, the report revealed. Crude oil in the SPR came in at 393.6 million barrels on December 27, 393.3 million barrels on December 20, and 354.4 million barrels on December 29, 2023, the report showed. Total petroleum stocks – including crude oil, total motor gasoline, fuel ethanol, kerosene type jet fuel, distillate fuel oil, residual fuel oil, propane/propylene, and other oils – stood at 1.623 billion barrels on December 27, the report revealed. This figure was up 9.6 million barrels week on week and up 17.8 million barrels year on year, the report outlined. “At 415.6 million barrels, U.S. crude oil inventories are about five percent below the five year average for this time of year,” the EIA said in its latest report. “Total motor gasoline inventories increased by 7.7 million barrels from last week and are slightly below the five year average for this time of year. Finished gasoline inventories decreased last week while blending components inventories increased last week,” it added. “Distillate fuel inventories increased by 6.4 million barrels last week and are about six percent below the five year average for this time of year. Propane/propylene inventories decreased by 0.6 million barrels from last week and are 10 percent above the five year average for this time of year,” it went on to state. In the report, the EIA noted

More telecom firms were breached by Chinese hackers than previously reported

Broader implications for US infrastructure

The Salt Typhoon revelations follow a broader pattern of state-sponsored cyber operations targeting the US technology ecosystem. The telecom sector, serving as a backbone for industries including finance, energy, and transportation, remains particularly vulnerable to such attacks. While Chinese officials have dismissed the accusations as disinformation, the recurring breaches underscore the pressing need for international collaboration and policy enforcement to deter future attacks. The Salt Typhoon campaign has uncovered alarming gaps in the cybersecurity of US telecommunications firms, with breaches now extending to over a dozen networks. Federal agencies and private firms must act swiftly to mitigate risks as adversaries continue to evolve their attack strategies. Strengthening oversight, fostering industry-wide collaboration, and investing in advanced defense mechanisms are essential steps toward safeguarding national security and public trust.

Inside the stealthy startup that pitched brainless human clones

After operating in secrecy for years, a startup company called R3 Bio, in Richmond, California, suddenly shared details about its work last week—saying it had raised money to create nonsentient monkey “organ sacks” as an alternative to animal testing. In an interview with Wired, R3 listed three investors: billionaire Tim Draper, the Singapore-based fund Immortal Dragons, and life-extension investors LongGame Ventures. But there is more to the story. And R3 doesn’t want that story told. MIT Technology Review discovered that the stealth startup’s founder John Schloendorn also pitched a startling, medically graphic, and ethically charged vision for what he’s called “brainless clones” to serve the role of backup human bodies.

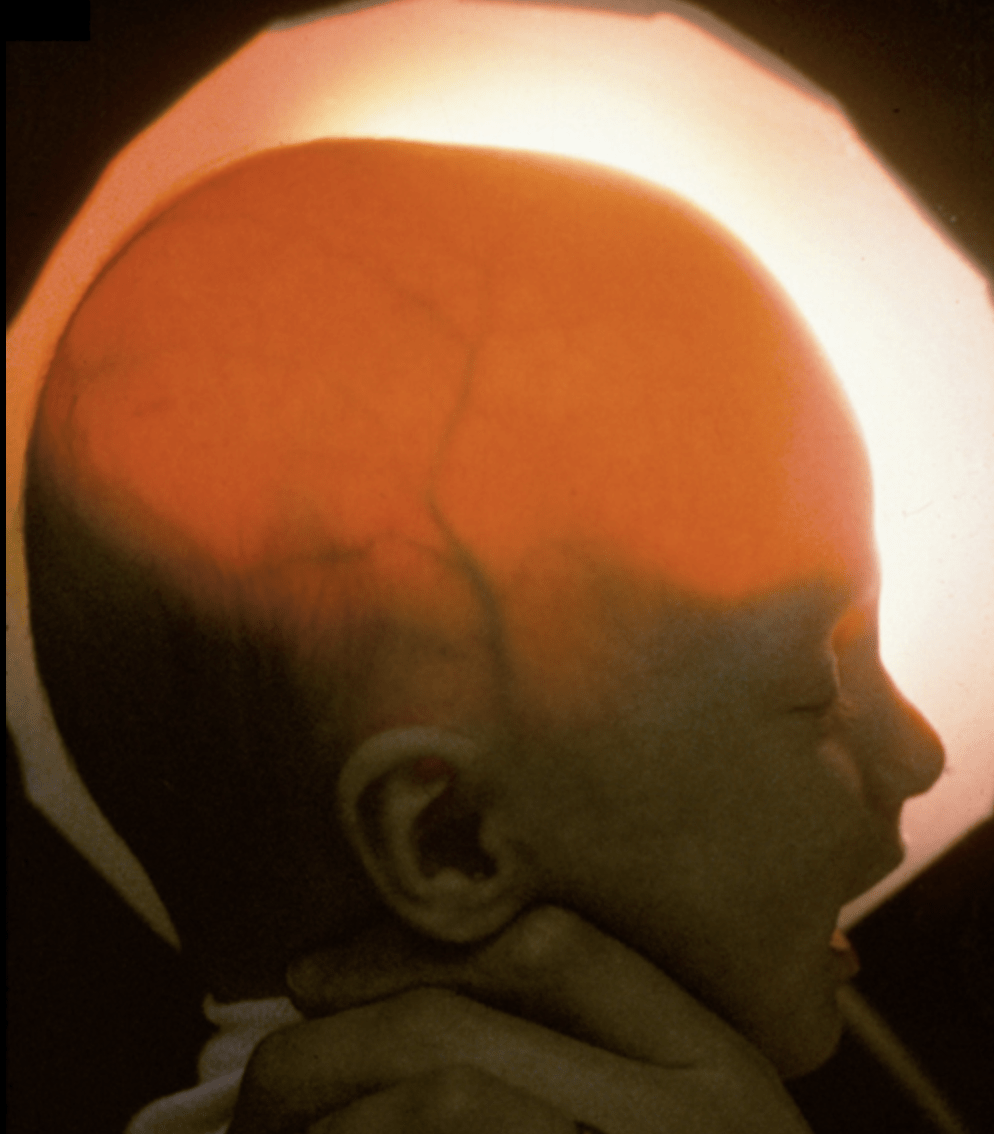

Imagine it like this: a baby version of yourself with only enough of a brain structure to be alive in case you ever need a new kidney or liver. Or, alternatively, he has speculated, you might one day get your brain placed into a younger clone. That could be a way to gain a second lifespan through a still hypothetical procedure known as a body transplant.

The fuller context of R3’s proposals, as well as activities of another stealth startup with related goals, have not previously been reported. They’ve been kept secret by a circle of extreme life-extension proponents who fear that their plans for immortality could be derailed by clickbait headlines and public backlash. And that’s because the idea can sound like something straight from a creepy science fiction film. One person who heard R3’s clone presentation, and spoke on the condition of anonymity, was left reeling by its implications and shaken by Schloendorn’s enthusiastic delivery. The briefing, this person said, was like a “close encounter of the third kind” with “Dr. Strangelove.” A key inspiration for Schloendorn is a birth defect in which children are born missing most of their cortical hemispheres; he’s shown people medical scans of these kids’ nearly empty skulls as evidence that a body can live without much of a brain. And he’s talked about how to grow a clone. Since artificial wombs don’t exist yet, brainless bodies can’t be grown in a lab. So he’s said the first batch of brainless clones would have to be carried by women paid to do the job. In the future, though, one brainless clone could give birth to another. Last Monday, the same day it announced itself to the world in Wired, R3 sent us a sweeping disavowal of our findings. It said Schloendorn “never made any statement regarding hypothetical ‘non-sentient human clones’ [that] would be carried by surrogates.” The most overarching of these challenges was its insistence that “any allegations of intent or conspiracy to create human clones or humans with brain damage are categorically false.” But even Schloendorn and his cofounder, Alice Gilman, can’t seem to keep away from the topic. Just last September, the pair presented at Abundance Longevity, a $70,000-per-ticket event in Boston organized by the anti-aging promoter Peter Diamandis. 
Although the presentation to about 40 people was not recorded and was meant to be confidential, a copy of the agenda for the event shows that Schloendorn was there to outline his “final bid to defeat aging” in a session called “Full Body Replacement.” According to a person who was there, both animal research and personal clones for spare organs were discussed. During the presentation, Gilman and Schloendorn even stood in front of an image of a cloning needle. Pressed on whether this was a talk about brainless clones, Gilman told us that while R3’s current business is replacing animal models, “the team reserves the right to hold hypothetical futuristic discussions.” MIT Technology Review found no evidence that R3 has cloned anyone, or even any animal bigger than a rodent. What we did find were documents, additional meeting agendas, and other sources outlining a technical road map for what R3 called “body replacement cloning” in a 2023 letter to supporters. That road map involved improvements to the cloning process and genetic wiring diagrams for how to create animals without complete brains.

A child with hydranencephaly, a rare condition in which most of the brain is missing. Could a human clone also be created without much of a brain as an ethical source of spare organs? DIMITRI AGAMANOLIS, M.D. VIA WIKIPEDIA

A main purpose of the fundraising, investors say, was to support efforts to try these techniques in monkeys from a base in the Caribbean. That offered a path to a nearer-term business plan for more ethical medical experiments and toxicology testing—if the company could develop what it now calls monkey “organ sacks.” However, this work would clearly inform any possible human version. Though he holds a PhD, Schloendorn is a biotech outsider who has published little and is best known for having once outfitted a DIY lab in his Bay Area garage. Still, his ties to the experimental fringe of longevity science have earned him a network in Silicon Valley and allies at a risk-taking US health innovation agency, ARPA-H. Together with his success at raising money from investors, this signals that the brainless-clone concept should be taken seriously by a wider community of scientists, doctors, and ethicists, some of whom expressed grave concerns. “It sounds crazy, in my opinion,” said Jose Cibelli, a researcher at Michigan State University, after MIT Technology Review described R3’s brainless-clone idea to him. “How do you demonstrate safety? What is safety when you’re trying to create an abnormal human?” Twenty-five years ago, Cibelli was among the first scientists to try to clone human embryos, but he was trying to obtain matched stem cells, not make a baby. “There is no limit to human imagination and ways to make money, but there have to be boundaries,” he says. “And this is the boundary of making a human being who is not a human being.”

“Feasibility research”

Since Dolly the sheep was born in 1996, researchers have cloned dogs, cats, camels, horses, cattle, ferrets, and other species of mammal.
Injecting a cell from an existing animal into an egg creates a carbon-copy embryo that can develop, although not always without problems. Defects, deformities, and stillbirths remain common. Those grave risks are why we’ve never heard of a human clone, even though it’s theoretically possible to create one. But brainless clones flip the script. That’s because the ultimate aim is to create not a healthy person but an unconscious body that would probably need life support, like a feeding tube, to stay alive. Because this body would share the DNA of the person being copied, its organs would be a near-perfect immunological match. Backers of this broad concept argue that a nonsentient body would be ethically acceptable to harvest organs from. Some also believe that swapping in fresh, young body parts—known as “replacement”—is the likeliest path to life extension, since so far no drug can reverse aging.

And then there’s the idea of a complete body transplant. “Certainly, for the cryonics patients, that sounds like something really promising,” says Anders Sandberg, a prominent Swedish transhumanist and expert in the ethics of future technologies. He notes that many people who opt to be stored in cryonic chambers after death choose the less expensive “head only” option, so “there might be a market for having an extra cloned body.” MIT Technology Review first approached Schloendorn two years ago after learning he’d led a confidential online seminar called the Body Replacement Mini Conference, in which he presented “recent lab progress towards making replacement bodies.”

According to a copy of the agenda, that 2023 session also included a presentation by a cloning expert, Young Gie Chung. And there was another from Jean Hébert, who was then a professor at the Albert Einstein College of Medicine and is now a program manager at ARPA-H, where he oversees a project to use stem cells to restore damaged brain tissue. Hébert popularized the so-called replacement solution to avoiding death in a 2020 book called Replacing Aging. In an interview prior to joining the government in 2024, Hébert described an informal but “very collaborative” relationship with Schloendorn. The overall idea was that to stop aging, one of them would determine how to repair a brain, while the other would figure out how to create a body without one. “It’s a perfect match, right? Body, brain,” Hébert told MIT Technology Review at the time. Schloendorn, by working outside the mainstream, had the huge advantage of “not being bound by getting the next paper out, or the next grant,” Hébert said, adding, “It’s such a wonderful way of doing research. It’s just clean and pure.” R3 now appears on the ARPA-H website on a list of prospective partners for Hébert’s program. In a LinkedIn message exchanged with Schloendorn that same year, he described his work as “feasibility research in body replacement.” “We will try to do it in a way that produces defined societal benefits early on, and we need to be prepared to take no for an answer, if it turns out that this cannot be done safely,” Schloendorn wrote at the time. He declined an interview then, saying that before exiting stealth mode, he wants to be sure the benefits are “reasonably grounded in reality.” That could prove challenging. While body-part replacement sounds logical, like swapping the timing belt on an old car, in reality there’s scant evidence that receiving organs from a younger twin would make you live any longer.

A complete body transplant, meanwhile, would probably be fatal, at least with current techniques. In the latest test of the concept, published last July, Russian surgeons removed a pig’s head and then sewed it back on. The animal did live—breathing weakly and lapping water from a syringe. But because its spinal cord had been cut, it was otherwise totally paralyzed. (As yet, there’s no proven method to rejoin a severed spinal cord.) In an act of mercy, the doctors ended the pig’s life after about 12 hours. Even some of R3’s investors say the endeavor is a risky, low-odds project, on par with colonizing Mars. Boyang Wang, head of Immortal Dragons, has spoken at longevity conferences about body-swapping technology, referring to the chance that “when the time comes, you can transplant your brain into a new body.” Wang confirmed in a January Zoom call that he’d been referring to R3 and that he invested $500,000 in the company during a 2024 fundraising round. But since making his investment, Wang says, he’s become less bullish. He now views whole-body transplant as “very infeasible, not even very scientific” and “far away from hope for any realistic application.” Still, he says, the investment in R3 fits with his philosophy of making unorthodox bets that could be breakthroughs against aging. “What can really move the needle?” he asks. “Because time is running out.”

Stealth mode

Clonal bodies sit at the extreme frontier of an advancing cluster of technologies all aimed at growing spare parts. Researchers are exploring stem cells, synthetic embryos, and blob-like organoids, and some companies are cloning genetically engineered pigs whose kidneys and hearts have already been transplanted into a few patients. Each of these methods seeks to harness development—the process by which animal bodies naturally form in the womb—to grow fully functional organs. There’s even a growing cadre of mainstream scientists who say nonsentient bodies could solve the organ shortage, if they could be grown through artificial means. Two Stanford University professors, calling these structures “bodyoids,” published an editorial in favor of manufacturing spare human bodies in MIT Technology Review last year. While that editorial left many details to the imagination, they called the idea “at least plausible—and possibly revolutionary.” “There are a lot of variations on this where they’re trying to find a socially acceptable form,” says George Church, a Harvard University professor who advises startups in the field. But Church says gestating an entire body is probably taking things too far, especially since nearly all patients on transplant lists are waiting for just a single organ, like a heart or kidney. “There’s almost no scenario where you need a whole body,” he says. “I just think even if it’s someday acceptable, it’s not a good place to start.” For the moment, Church says, brainless human bodies are “not very useful, in addition to being repulsive.” That’s arguably why body replacement technology still feels risky to talk about, even among life-extension enthusiasts who are otherwise ready to inject Chinese peptides or have their bodies cryogenically frozen.
“I think it’s exciting or interesting from a scientific perspective, but I think the world is not fully ready for it yet,” says Emil Kendziorra, CEO of Tomorrow Bio, a company in Berlin that stores bodies at -196 °C in the hope they can be restored to life in the future. “Everybody’s like, yeah, you know, cryopreservation makes total sense,” he says. “And then you talk about total body replacement. And then everybody’s like, Whoa, whoa, whoa.” Even so, “replacement” technology has found a fervent base of support among a group of self-described “hardcore” longevity adherents who follow a philosophy called Vitalism, which holds that society should redirect resources toward achieving unlimited lifespans. The growing influence of this movement, achieved through lobbying, investment, recruiting, and public messaging, was detailed earlier this year in MIT Technology Review. Last spring, during a meetup for this community, Kendziorra was among the attendees at an invite-only “Replacement Day” gathering that took place off the public schedule. It was where more radical ideas could be discussed freely, since to some in the Vitalist circle, replacing body parts has emerged as the most plausible, least expensive way to beat death. At least that was the conclusion of a road map for anti-aging technology produced by one Vitalist group, the Longevity Biotech Fellowship, which reckoned that a proof-of-concept human clone lacking a neocortex would cost $40 million to create—a tiny amount, relatively speaking. Its report cited the existence of two stealth companies working on cloning whole nonsentient bodies, although it took care not to name them. If these companies’ activities become public, “there will be a huge backlash—people will hate it,” the entrepreneur Kris Borer said while presenting the road map at a French resort last August. “There are a ton of dystopian movies and novels about this kind of stuff. That is why I didn’t talk about any of the companies working on it. 
They are trying to hide from public attention,” he said. “We have to have the angel investors and other people invest kind of in secret until things are ready.” Borer did say what he sees as the best way to go public: first, to slowly ease body replacement into society’s awareness by disclosing more limited aims, which will be palatable. “We are not going to start with Let’s clone you and give you a body. We are going to start with Let’s solve the organ shortage,” he said. “Eventually people will warm up to it, and then we can go to the more hardcore stuff.” In an interview earlier this month, Borer declined to name the companies involved in his immortality road map, or to say if R3 is one of them. But we did identify one additional stealthy startup, this one focused on replacing a person’s internal organs, not the whole body. Called Kind Biotechnology, it is a New Hampshire–based company headed by the anti-aging researcher Justin Rebo, a sometime collaborator of Schloendorn’s.

A patent image from Kind Biotechnology shows a mouse pup engineered to lack anatomical features (left) next to a normal animal. The company’s goal is to grow organ “sacks” with a “complete lack of ability to feel, think, or sense.” WO2025260099 VIA WIPO

According to patent applications filed by the company, Rebo’s team is working to create animals with a “complete lack of ability to feel, think, or sense the environment.” Images included in the patents show mice the company produced that lack a complete brain, and others that don’t have faces or limbs. They did that by deleting genes in embryos using the gene-editing technology CRISPR with the goal of creating a “sack of organs that grows mostly on its own,” with only a minimal nervous system. A cartoon rendering submitted to the patent office shows what looks like a fleshy duffel bag connected to life support tubes.
In an email, Rebo said his company is working on an “ethical and scalable” way to create animal organs for experimental transplant to humans. He notes that “thousands die while waiting” for an organ. Some of Kind’s patent applications do cover the possibility of producing these organ sacks from human cells. Rebo says that’s more of a speculative possibility. But he does see his work as part of the “replacement” approach to longevity. Firstly, that’s because a “scalable production of young, high-quality organs” would let surgeons try transplants in more types of patients, including many with heart disease in old age who aren’t candidates for a transplant now. “With abundant high-quality organs, replacement could become a direct form of rejuvenation by replacement of failing parts,” he says. And Rebo imagines that simultaneously replacing multiple internal organs (grown together in the sack) could have even broader rejuvenating effects. “Ultimately, replacing failing parts is a direct path to extending healthy human lifespan,” he says. Church, who agreed earlier this year to advise Kind Bio, sees this work as part of an effort to “nudge” these technologies “toward something that is more useful and more acceptable from the get-go,” he says. “And then let’s see how society responds to that—rather than jumping to the most repulsive and most useless form, which some of them seem to be aiming for.”

“There’s one way to find out”

People who know Schloendorn describe a dynamo-like presence who is “100% dedicated” to the goal of extreme life extension. In 2006, he penned a paper in a bioethics journal outlining why the “desire to live forever” is rational, and his doctoral research at the University of Arizona was sponsored by a longevity research organization called the SENS Foundation. He’s also well connected.
In an interview, Aubrey de Grey, the influential and controversial fundraiser and prognosticator who cofounded SENS, called Schloendorn “one of my protégés.” And around 2010, Peter Thiel reportedly invested $1.5 million in ImmunePath, a company started by Schloendorn to develop stem-cell treatments, though it soon failed. (A representative for Thiel did not respond to a request to confirm the figure.) By 2021, Schloendorn had moved on, founding R3 Biotechnologies. He began to circulate the body replacement idea and discuss a step-by-step scheme to get there: assess techniques in the lab first, then in monkeys, and maybe eventually in humans. A 2023 “letter to stakeholders” signed by Schloendorn begins by saying that “body replacement cloning will require multicomponent genetic engineering on a scale that has never been attempted in primates.” Fortunately, it adds, molecular techniques for “brain knockout” are well known in mice and should also be expected to function in “birthing whole primates,” a class that includes both monkeys and humans. Would it work? “There’s one way to find out,” the letter says. Wang, the investor at Immortal Dragons, says he put money into R3 after it showed him it is possible to create mice without complete brains. “There were imperfections, but the resulting mice survived, grew up, and to me, that is a pretty strong experiment,” he says; it was evidence enough for him to fund R3’s attempt to “replicate the result in primates.” (In its emailed statement, R3 said the company and its founders “never produced any degree of brain alterations in any species, did not attempt to do so, did not hire another party to do so, and have no specific plans to do so in the future.” It added: “We do not work with live non-human primates.”) The bigger technical obstacle, though, remains the cloning. Out of 100 attempts to clone an animal, only a few typically succeed. That fact alone makes cloning a human—or a monkey—almost infeasible. 
But R3 does seem to have made an effort to tackle the efficiency problem. In one document reviewed by MIT Technology Review, it claims to have implemented improvements to the basic procedure in rodents, referencing a protein, called a histone demethylase, that helps erase a cell’s genetic memory. Adding it can greatly increase the chance that the cell will form a cloned embryo after being injected into an egg in the lab. Those molecules were used in the first successful cloning of a monkey, which occurred in 2018 in China. But it still wasn’t easy—in fact, it was a huge and costly effort to handle a crowd of monkeys in estrus and perform IVF on them. According to Michigan State’s Cibelli, monkey cloning remains nearly impossible, at least on US territory, just because it’s “unaffordable.” Nevertheless, success in monkeys did help prove, at least biologically, that human reproductive cloning could be possible. The company may also have tried to tackle a second long-standing obstacle to cloning: defects in how the placenta works. Because of such problems, some cloned animals die quickly after birth. The R3 document refers to a “birthing fix” it developed to further improve the cloning success rate. While MIT Technology Review didn’t learn what R3’s process entails, we found a reference to it on the LinkedIn page of Maitriyee Mahanta, a scientist who cosigned the 2023 letter to R3 stakeholders and is a former research assistant to Hébert. (We were unable to reach Mahanta for comment.) Her page described her current role as “molecular lead” studying cloning, “birth rate fixing,” and cortical development using cells from nonhuman primates. Her job affiliation is given as the Longevity Escape Velocity Foundation, a nonprofit where de Grey is the president and chief science officer. 
But de Grey says his foundation only arranged a work visa for Mahanta as part of a partnership “with the company she actually spends her time at.”

Like several other people interviewed for this article, de Grey made a resourceful effort to avoid directly confirming the existence of R3 when we spoke, while at the same time freely discussing theoretical aspects of body cloning technology. For instance, he talked about ways to shorten the wait for your double to grow up to a size suitable for organ harvesting; a further genetic mutation could be added to cause “central precocious puberty” in the clone, he said. This condition causes a growth spurt, even pubic hair, in a toddler.

Cloning dictators

Who would clone a body and pay to keep it alive for years, until it’s needed? The first customers for this costly technology (if it ever proves feasible) would likely be the ultra-rich or the ultra-powerful.

Indeed, somehow the world’s top dictators seem to have gotten the memo about replacement parts. In September, a hot mic picked up a conversation between Russian president Vladimir Putin and Chinese leader Xi Jinping as they walked through Beijing with North Korean autocrat Kim Jong Un; in the exchange, the Russian speculated on life extension.

“Biotechnology is continuously developing. Human organs can be continuously transplanted. The longer you live, the younger you become, and [you can] even achieve immortality,” Putin said through an interpreter.

“Some predict that in this century, humans will live to 150 years old,” Xi responded agreeably.

How the leaders learned of these possibilities is unknown. But scenarios involving dictators are a constant topic among body replacement enthusiasts. “There are companies working on this.
They are in stealth—we can’t reveal too much about them—but the general concept on this is if you didn’t have any ethical qualms, you could do most of it today,” Will Harborne, the chief investment officer of LongGame Advisors, said last year, during an interview with the podcaster Julian Issa. “If you were the dictator of some country and wanted a clone of yourself, you can already go grow one. You can create a cloned embryo of yourself, you can get a surrogate to carry it to term, and you can grow [a] body until age 18 with a brain, and eventually, if you were a dictator, you could kill them and try to transplant your head on their body.” “And now no one is suggesting you do that—it’s very unethical—but most of the technology is there,” he said. He noted that the reason for removing the cortex of a clone created for such a purpose is that “we don’t want to kill other people to live forever.” Harborne subsequently confirmed to MIT Technology Review that the fund invested $1 million in R3 about a year and a half ago. In order to make the body replacement process ethical, the clone’s brain needs to be stunted so it lacks consciousness. That is where the interest in birth defects comes in. Remarkable medical scans of kids with a rare condition, hydranencephaly, show a total absence of the cerebral hemispheres. Yet if they are cared for, they may be able to live into their 20s, even though they cannot speak or engage in purposeful movement. The technical question, then, is how to intentionally produce such a condition in a clone. Sandberg, the futurist, says he’s visited R3’s lab, talked to Gilman, and sat through a presentation about how genetic engineering can be used to shape brain growth. Previous work has shown that by adding a toxic gene, it is possible to kill specific cell types in a growing embryo but spare others, leading to a mouse without a neocortex. While Sandberg isn’t an expert in biotechnology, he says R3’s theory looked sensible to him. 
“I think it’s possible to actually prevent the development of the brain well enough that you can say ‘Yeah, there is almost certainly no consciousness here,’” Sandberg says. “Hence, there can’t be any suffering, or any individual, in a practical sense.”

“I think the overall aim, actually, it looks ethically pretty good,” he says.

(Image: Monkeys were successfully cloned in China for the first time in 2018. Although it was a costly and difficult undertaking, the feat suggested human cloning is biologically possible. Credit: Qiang Sun and Mu-Ming Poo/Chinese Academy of Sciences via AP.)

Yet it could be difficult to really determine where consciousness starts and ends. Under current medical standards, taking the organs of people with hydranencephaly isn’t allowed because they don’t meet the standard of brain death: They have a functioning brain stem.

An even more serious problem is evidence that the brain stem alone produces a basic form of consciousness. If that is so, says Bjorn Merker, a neuroscientist who surveyed caretakers of more than a hundred children with hydranencephaly, a plan “to harvest organs from organisms modeled on this condition would be unethical.”

Of course, the most extreme version of the replacement dream isn’t just to take organs. It’s to take over the body entirely.

Sergio Canavero, a controversial Italian surgeon who has proposed head and brain transplants, says he was approached for advice by Schloendorn and others a few years ago. “They told me they were looking at a head transplant on a two- or three-year-old,” he says. “I stopped short. How could you even conceive of that? The biomechanical compatibility is not there. You have to wait until at least 14. And I would say 16. It was very clear to me these guys are not surgeons; they are biologists.”

Canavero says he’s not opposed to cloning bodies for transplant; he thinks it could work. “But if you want to use a clone,” he says, “it must be a nonsentient clone.
Otherwise it’s murder, a homicide.” MIT Technology Review has not found any evidence that R3 has yet created an “organ sack,” much less a brainless human clone. And there are many reasons to believe their hypothetical future of “full body replacement” will never come to pass—that it is just a live-forever fantasy. “There are so many barriers,” says Cibelli. It’s a long list: Human cloning is illegal in many countries, it’s unsafe, and few competent experts would want, or dare, to participate. And then there’s the inconvenient fact that for now, there’s no way to grow a brainless clone to birth, except in a woman’s body. Think about it, Cibelli says: “You’d have to convince a woman to carry a fetus that is going to be abnormal.” Sandberg agrees that is where things could start to get tricky. “The problem here, of course,” he says, “is that the yuck factor is magnificent.”

Helping disaster response teams turn AI into action across Asia