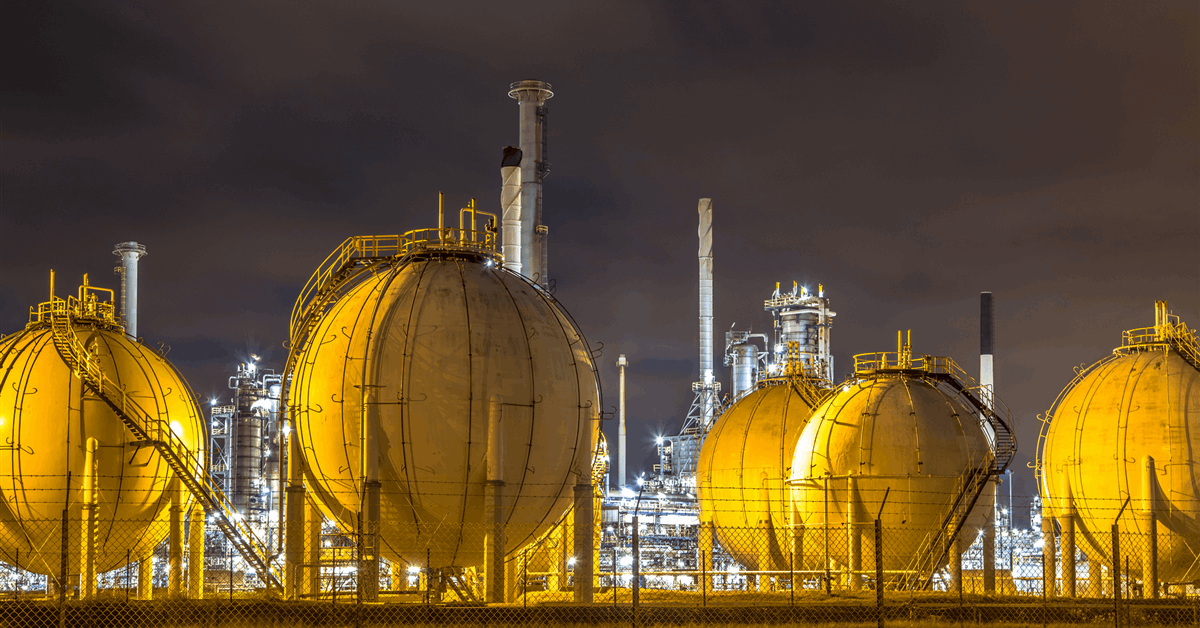

Global liquefied natural gas exports grew at the slowest pace since 2015, threatening to keep prices elevated until new supply comes online to meet rising demand.

Annual LNG shipments are set to rise 0.4% to roughly 414 million tons this year, according to data compiled by Kpler. Delays to US projects and sanctions against Russia’s newest facility curbed new supply into the market.

The LNG market has been finely balanced since the 2022 invasion of Ukraine cut Russian pipeline gas to Europe, forcing the continent to depend more on the super-chilled fuel. The lack of new exports has made the market susceptible to price spikes for buyers in Europe and Asia.

The market could find some relief in 2025 as new US projects ramp up production and another facility starts in Canada. Venture Global LNG Inc.’s Plaquemines plant exported its first shipment last week, and Cheniere Energy Inc.’s Corpus Christi plant began production from the first phase of its expansion on Monday.

The US was the world’s largest exporter, shipping a record 87 million tons in 2024, roughly on par with the previous year, Kpler data showed.

China was the biggest LNG buyer for the second year in a row. The country received more than 78 million tons, up 8.5% year-over-year, according to the data. That’s still slightly lower than in 2021, when China imported about 80 million tons.

Bloomberg